Wikipedia policies: How rules keep the world’s largest encyclopedia accurate and fair

When you read a Wikipedia article, you’re not just seeing facts. You’re seeing the result of Wikipedia policies, a set of community-driven rules designed to ensure accuracy, neutrality, and reliability. Often grouped with Wikipedia guidelines, these policies aren’t written by executives or algorithms; they’re shaped by hundreds of thousands of volunteers who argue, edit, and revise every day to keep the site trustworthy. Without them, Wikipedia would be a mess of opinions, rumors, and corporate spin. Instead, it stays the go-to starting point for millions because these rules force clarity, evidence, and fairness.

These policies don’t work in a vacuum. They rely on other key systems like reliable sources, the standard for what counts as credible information on Wikipedia. Closely tied to verifiability, this rule means you can’t just cite a blog post or a tweet; you need books, peer-reviewed journals, or established news outlets. Then there’s Wikipedia neutrality, the policy that demands articles reflect the balance of published opinion, not personal bias. Also known as neutral point of view, it’s why controversial topics don’t become propaganda wars. And built into neutrality is due weight, the rule that ensures minority views aren’t ignored but also aren’t given the same space as majority evidence. Without these, even the best-intentioned edits could mislead.

These aren’t abstract ideas. They’re the reason a local historian can get their town’s founding documented, why a journalist can trace a fact back to its original source, and why a student doesn’t walk away with false information. They’re also why Wikipedia fights back against copyright takedowns that erase history, why AI-generated summaries are checked for misleading citations, and why volunteers spend hours cleaning up biased language or fixing broken links. The system isn’t perfect—it’s messy, slow, and sometimes frustrating—but it’s built to be fairer than any corporate algorithm.

What you’ll find below isn’t a list of dry rules. It’s a collection of real stories about how these policies play out: how they protect Indigenous voices, how they handle harassment, how they keep AI from rewriting history, and how they turn volunteer effort into global knowledge. These are the policies that make Wikipedia more than a website—they make it a living, breathing community.

Wikipedia’s Response to AI Competitors: Tools, Policies, and Community Strategy

Wikipedia is fighting back against AI encyclopedias not with technology alone, but with its community, strict policies, and tools that prioritize accuracy over speed. Here's how it's staying relevant in the age of AI.

Avoiding Original Research in Wikipedia Real-Time Coverage

Wikipedia's real-time coverage must avoid original research by relying only on confirmed, published sources. Adding speculation, rumors, or personal analysis during breaking news undermines its credibility and spreads misinformation.

UCoC Enforcement Guidelines and Their Impact on Wikipedia

The Universal Code of Conduct (UCoC) Enforcement Guidelines set out to make Wikipedia, still a volunteer-run project, a safer, more inclusive platform. By standardizing conduct rules globally, they aim to reduce harassment, improve editor retention, and set a new standard for open communities.

Policy Literacy for New Wikipedians: Avoiding Common Mistakes

New Wikipedia editors often make avoidable mistakes by ignoring policies like neutrality, sourcing, and notability. Learn the top five errors and how to fix them to become a trusted contributor.

Case Study: German Wikipedia’s Quality and Policy Rigour

German Wikipedia stands out for its strict sourcing rules, trained editors, and policy-driven editing culture. With fewer articles but far fewer errors, it offers one of the most reliable encyclopedias in the world.

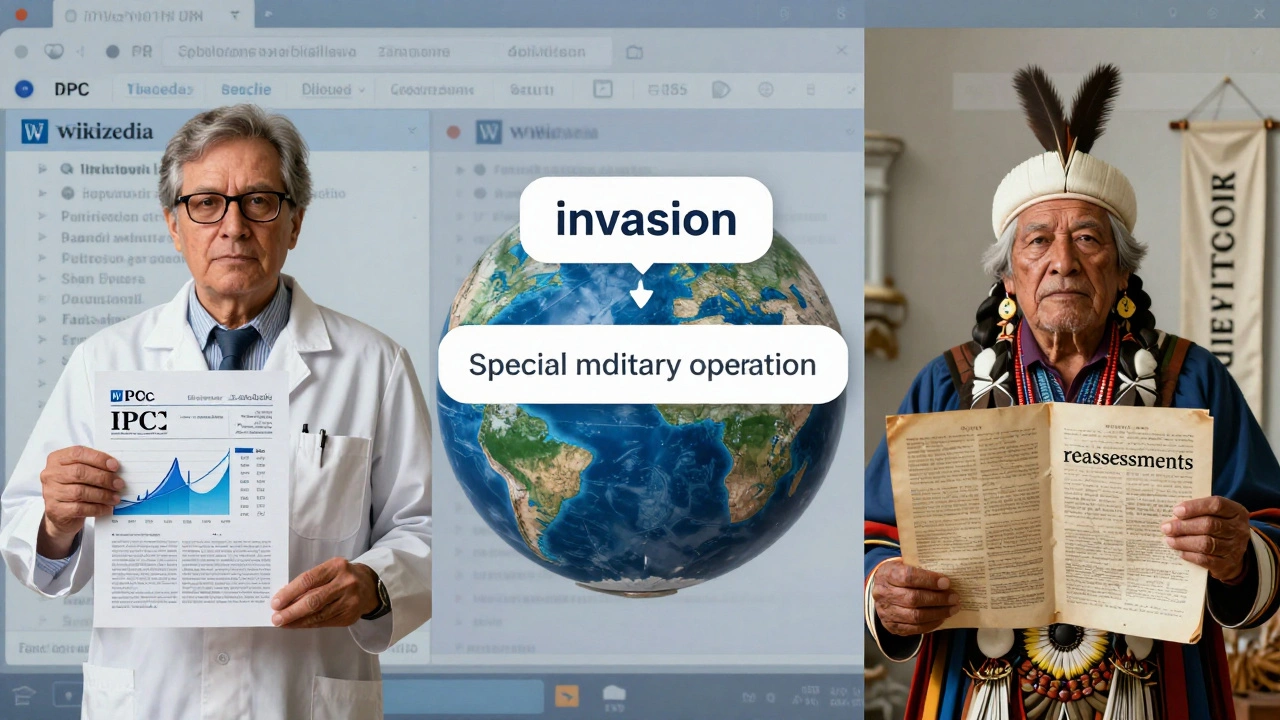

Recent NPOV Disputes on Wikipedia and How They Were Resolved

Recent NPOV disputes on Wikipedia show how neutrality is maintained through source-based consensus, mediation, and policy, not votes or power. Learn how high-profile conflicts over climate change, war narratives, and historical figures were resolved.

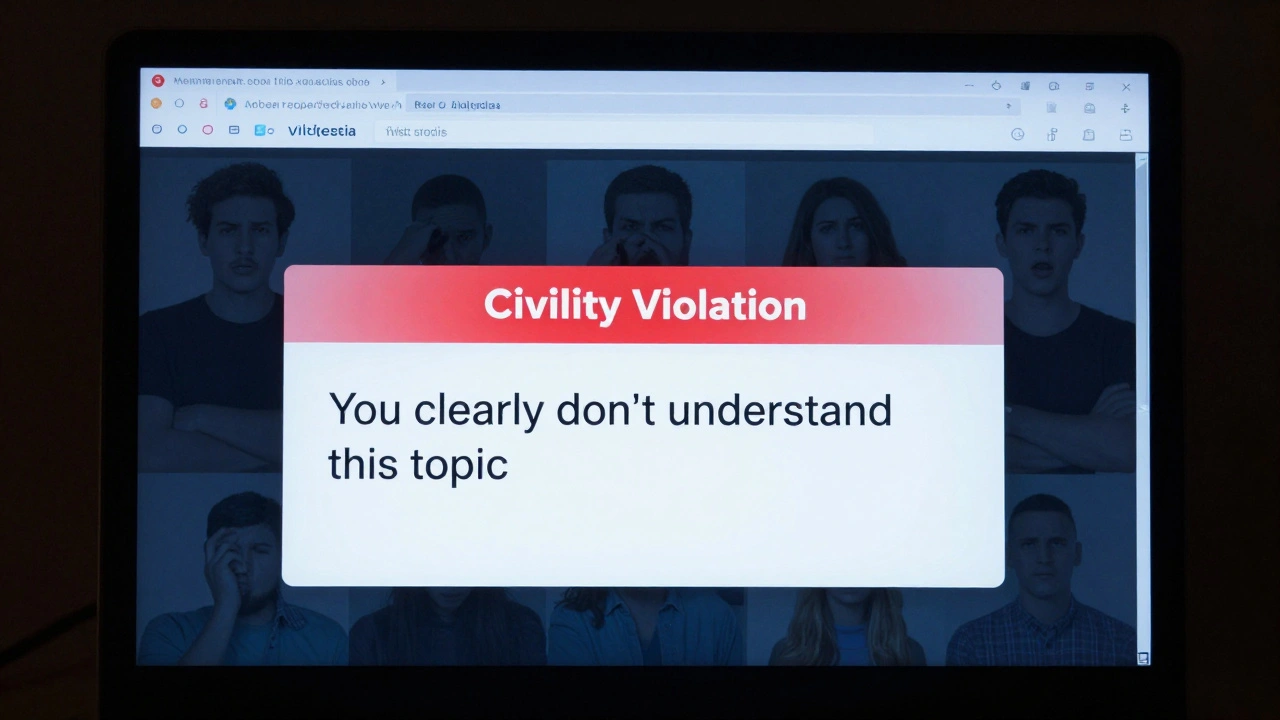

Wikipedia Editor Behavior Standards and Civility Requirements

Wikipedia's civility standards ensure collaborative editing by requiring editors to remain respectful, assume good faith, and resolve conflicts through policy-driven processes rather than personal attacks.

Local vs. Global Policies: How Wikipedia Language Editions Differ

Wikipedia's language editions follow different policies shaped by local culture, politics, and community norms rather than by a single set of global rules. What's allowed on one version may be banned on another.

Corporate and Government Wikipedia Editing: Ethical Debates and Scandals

This article explains the ethical debates around corporate and government editing on Wikipedia, covering real scandals, Wikipedia's policies, and how the community fights bias. Learn why transparency matters for reliable information.

Civility Sanctions on Wikipedia: Where Lines Are Drawn

Wikipedia enforces civility to keep collaboration alive. Sanctions aren't about being polite; they're about preventing toxic behavior that drives away editors and undermines the encyclopedia's accuracy.

Policy Case Studies: BLP Reforms and Aftermath on Wikipedia

Wikipedia's BLP policy reforms since 2006 have made biographies safer but less inclusive. Learn how changes in sourcing, protection, and equity efforts shaped modern editing practices and who gets left behind.

What Wikipedia Administrators Do: Roles and Responsibilities Explained

Wikipedia administrators are unpaid volunteers who maintain the site by enforcing policies, handling vandalism, and mediating disputes. They don't decide what's true; they ensure rules are followed.