Edit Wars on Wikipedia: What They Are, Why They Happen, and How They’re Stopped

When two or more editors keep reverting each other’s changes on the same Wikipedia article, that’s an edit war: a recurring conflict in which editors repeatedly overwrite each other’s edits without reaching consensus. These clashes, closely tied to content disputes, aren’t just about typos. They’re fights over facts, bias, representation, and what counts as reliable information. They happen on everything from political figures to pop culture topics, and they don’t go away just because someone clicks ‘save’.
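To make the definition concrete, here is a minimal sketch of how repeated reverts could be spotted in a page history. It assumes a simplified history of `(editor, text)` pairs and treats a revision as a revert when its text exactly matches an earlier version. The function names, the sample history, and the threshold of two reverts per editor are all hypothetical choices for illustration, not a Wikipedia rule or tool.

```python
import hashlib
from collections import Counter


def count_reverts(revisions):
    """Count reverts per editor in a chronological list of (editor, text) pairs.

    Assumption: a revision is a revert if its text is identical to some
    earlier revision, other than the one immediately before it.
    """
    seen = set()          # hashes of every earlier revision text
    reverts = Counter()   # editor -> number of reverts
    previous = None       # hash of the immediately preceding revision
    for editor, text in revisions:
        digest = hashlib.sha1(text.encode("utf-8")).hexdigest()
        if digest in seen and digest != previous:
            reverts[editor] += 1
        seen.add(digest)
        previous = digest
    return reverts


def looks_like_edit_war(revisions, min_reverts=2):
    """Hypothetical heuristic: at least two editors each reverted
    min_reverts times or more."""
    reverts = count_reverts(revisions)
    return sum(1 for n in reverts.values() if n >= min_reverts) >= 2


# Illustrative history: two editors flipping one sentence back and forth.
history = [
    ("Alice", "The bridge opened in 1932."),
    ("Bob", "The bridge opened in 1933."),
    ("Alice", "The bridge opened in 1932."),
    ("Bob", "The bridge opened in 1933."),
    ("Alice", "The bridge opened in 1932."),
    ("Bob", "The bridge opened in 1933."),
]

print(count_reverts(history))
print(looks_like_edit_war(history))
```

Exact text matching only catches full reverts. Partial reverts, where an editor undoes part of a change while adding something new, would need a diff-based comparison instead.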

Behind every edit war is a deeper issue: conflicting views on neutrality, sourcing, or due weight. One editor might add a claim based on a niche blog; another reverts it because it fails Wikipedia’s reliable sources standard, which expects information to be verifiable in established media, academic journals, or books. Someone else might push to include a minority perspective, triggering a debate over due weight, the policy that requires articles to reflect the proportion of coverage in reliable sources, not the loudest voices. These aren’t random tantrums; they’re systemic tensions playing out in real time. Wikipedia has tools that help: watchlists let editors track changes to specific pages and catch vandalism or repeated disputes, and vandalism moderation identifies and reverses malicious or disruptive edits. But these tools don’t address the root cause: people who believe they’re right and refuse to compromise.

What makes edit wars dangerous isn’t just the back-and-forth—it’s how they drain energy from the community. Volunteers who could be improving articles on climate science or Indigenous history end up spending hours arguing over a single sentence. The solution isn’t always blocking users. Sometimes it’s mediation, sometimes it’s locking the page temporarily, and sometimes it’s just waiting for a new editor with fresh eyes to step in. The best edit wars end when someone finds a source that settles the argument—and everyone agrees to move on.

The posts in this collection aren’t just stories about fights. They’re case studies in how Wikipedia tries to keep its knowledge fair, accurate, and alive. From how the Signpost covers these clashes to how Wikidata helps reduce contradictions across languages, they show the real machinery behind the scenes. You’ll see how policies like due weight and reliable sources are applied in practice, how volunteers use watchlists to stay ahead of chaos, and why some edit wars never really end; they just change shape.

Wikipedia Controversies: A Timeline of Major Governance Conflicts

Explore the history of Wikipedia's biggest governance battles, from edit wars and NPOV disputes to the tension between volunteers and the Wikimedia Foundation.

Off-Wiki Canvassing and How It Undermines Wikipedia Consensus

Off-wiki canvassing undermines Wikipedia's consensus by letting outside groups influence edits through social media and other platforms. This violates the core principle of neutral, evidence-based collaboration and erodes trust in the encyclopedia.

Off-Wiki Canvassing and How It Undermines Wikipedia Consensus

Off-wiki canvassing undermines Wikipedia's consensus by manipulating edits from outside the platform. It erodes trust, triggers edit wars, and threatens the integrity of one of the world's most trusted information sources.

Off-Wiki Canvassing and Its Impact on Wikipedia Consensus

Off-wiki canvassing undermines Wikipedia's consensus by allowing external influence on edits. This practice distorts collaboration, erodes trust, and drives away contributors. Learn how it works, why it's banned, and what you can do to protect Wikipedia's integrity.

Wikipedia's Response to Paid Editing Scandals

Wikipedia responded to paid editing scandals by enforcing transparency, requiring editors to disclose paid relationships. Volunteers and automated tools now flag suspicious edits, and companies like Google and Microsoft have adopted strict internal policies. Trust in Wikipedia remains intact because of its open, community-driven enforcement.

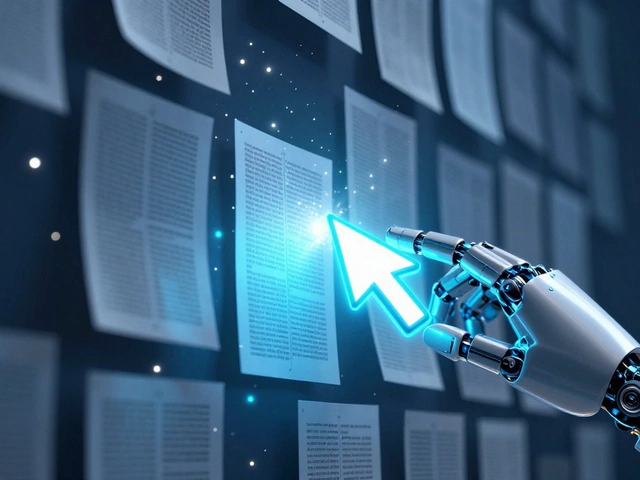

What Computer Science Research Reveals About Wikipedia's Infrastructure

Computer science research reveals how Wikipedia’s infrastructure uses bots, caching, and community-driven rules to handle billions of edits. Its resilient design offers a blueprint for managing large-scale online collaboration.

How Wikipedia Handles Controversial Topics: Disputes, Mediation, and Consensus

Wikipedia handles controversial topics through a system of mediation, consensus, and source-based editing. Disputes are expected, not avoided. Editors rely on reliable sources, not opinions. Conflict is managed, not suppressed.

The Complete Process for Proposing and Implementing New Wikipedia Policies

Learn how Wikipedia volunteers propose, debate, and implement new policies through open, consensus-driven discussions - no authority needed, just clear reasoning and patience.

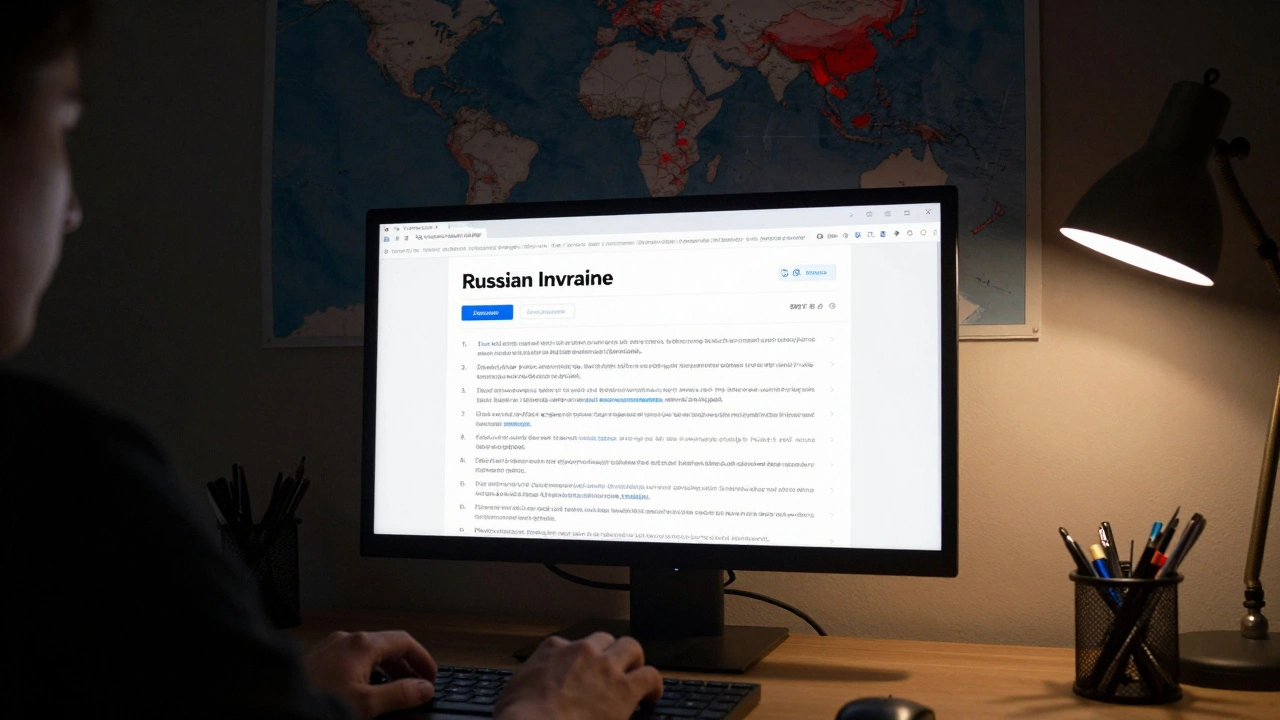

How Wikipedia Documents Sensitive War Crimes and Human Rights Topics

Wikipedia documents war crimes and human rights violations through open, source-based editing by volunteers. It doesn't decide truth - it maps claims, verifies evidence, and preserves records when governments try to erase them.

The Signpost's Tech Report: Key Updates for Wikipedia Editors

The Signpost's Tech Report keeps Wikipedia editors informed about critical updates to editing tools, bots, mobile apps, and infrastructure changes. Learn what’s new, what’s gone, and how to adapt quickly.

Evidence and Diffs: How to Present Your Case in Wikipedia Disputes

Winning Wikipedia disputes isn't about being loud; it's about using verifiable evidence and clear diffs to support your edits. Learn how to cite reliable sources, respond calmly, and use Wikipedia's tools to resolve conflicts effectively.

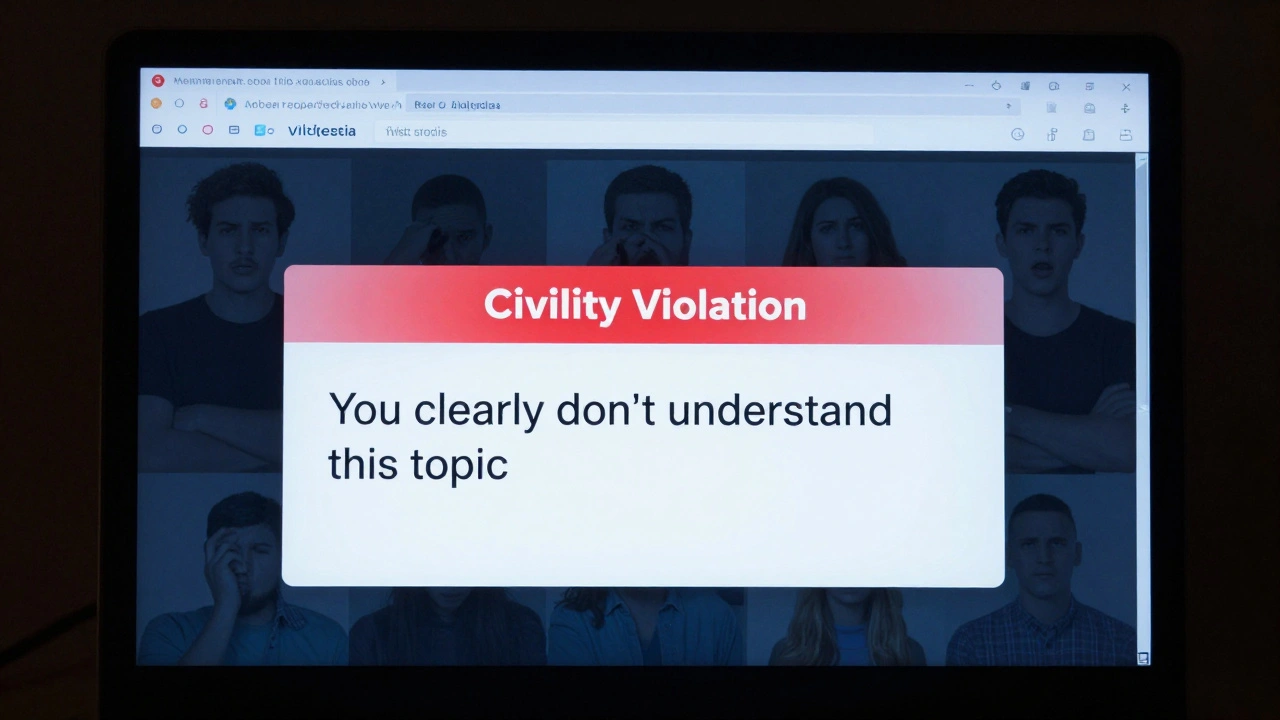

Civility Sanctions on Wikipedia: Where Lines Are Drawn

Wikipedia enforces civility to keep collaboration alive. Sanctions aren't about being polite; they're about preventing toxic behavior that drives away editors and undermines the encyclopedia's accuracy.