Wikipedia governance: How volunteers, policies, and tools shape the world's largest encyclopedia

Wikipedia governance is the system of rules, roles, and processes that guide how content is created and maintained on Wikipedia. Also known as Wikipedia community governance, it isn't run by a board of executives or an algorithm. It's held together by volunteers who follow written policies, debate on talk pages, and settle changes through discussion and consensus. Unlike corporate platforms, Wikipedia doesn't have ads, paid content teams, or corporate owners. Its structure relies on Wikipedia policies, mandatory rules that editors must follow to ensure neutrality, reliability, and consistency, and on volunteer editors, a global network of unpaid contributors who monitor, edit, and defend the encyclopedia. The policies aren't just suggestions: they're enforced through edit reverts, warnings, blocks, and even formal arbitration.

How does this system stay stable? It’s built on layers: Wikipedia governance starts with core policies like Neutral Point of View and Verifiability, then moves to guidelines that give advice, and finally to essays that reflect community opinion. Tools like watchlists and talk pages let editors track changes and resolve disputes without top-down control. Meanwhile, the Wikimedia Foundation, the nonprofit that provides technical infrastructure and legal support for Wikipedia but doesn’t control content, stays out of editorial decisions. This separation is key. The Foundation runs the servers and handles copyright takedowns, but it doesn’t decide whether an article about climate change should mention the 97% consensus, or whether a local history page should be deleted. That’s up to the editors.

Real governance happens in the quiet spaces: in the back-and-forth on a talk page, in the careful sourcing of a disputed fact, in the volunteer who spends hours undoing vandalism from a botnet. It’s not glamorous. No one gets paid. But it works. Surveys show people still trust Wikipedia more than AI encyclopedias—not because it’s perfect, but because you can see how every edit was debated, sourced, and reviewed. You’ll find stories here about how WikiProjects coordinate article improvements, how paid editors clash with volunteers, how AI tools are being tested, and how harassment off-wiki is forcing new safety policies. This isn’t theory. It’s the daily reality of keeping the world’s largest encyclopedia honest, accurate, and open. Below, you’ll see how real editors navigate this system—what works, what breaks, and what’s changing next.

Wikipedia Universal Code of Conduct: How Rules and Enforcement Work

Explore the Wikipedia Universal Code of Conduct. Learn about the rules, how behavior is enforced by the community and foundation, and its impact on editor diversity.

How Wikipedia Manages Disruptive Editing Without Using Sanctions

Explore how Wikipedia uses social norms, consensus building, and technical filters to stop disruptive editing without relying on bans or sanctions.

Wikipedia Policy Enforcement: How Admins and Sanctions Keep the Site Clean

Explore how Wikipedia uses administrator tools and community sanctions to maintain neutrality and stop vandalism in a decentralized environment.

Understanding the Wikimedia Foundation's Role in Wikipedia Governance

Wikipedia governance involves a unique split between the Wikimedia Foundation's legal support and the volunteer community's editorial control. Learn how rules are made.

Understanding Long-Term Abuse and Community Sanctions on Wikipedia Case Files

Explore how Wikipedia handles persistent conflicts through Long-Term Abuse policies and Community Sanctions. Learn about the Arbitration Committee process, Case Files, and enforcement mechanisms in 2026.

Wikimedia Foundation Board of Trustees: Leadership and Governance Explained

The Wikimedia Foundation Board of Trustees oversees the governance of Wikipedia and its sister projects. It sets financial strategy, hires leadership, and protects the mission of free knowledge. Learn how its members are chosen and why their decisions impact every reader and editor.

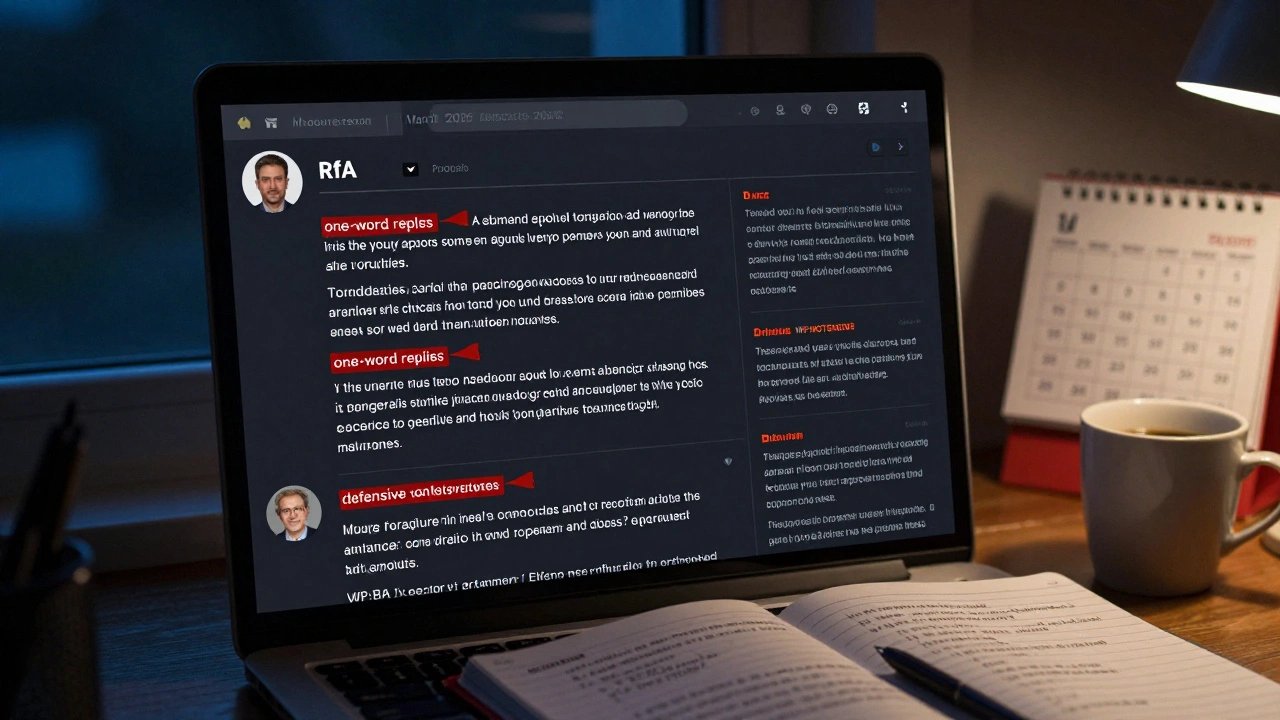

RfA Trends in 2025: Success Rates and Community Expectations

In 2025, Wikipedia's RfA (Requests for Adminship) success rate has dropped to 17% as community expectations rise. Admins now need conflict resolution skills, cultural awareness, and emotional maturity, not just edit counts. Learn what really matters today.

The Complete Process for Proposing and Implementing New Wikipedia Policies

Learn how Wikipedia volunteers propose, debate, and implement new policies through open, consensus-driven discussions: no authority needed, just clear reasoning and patience.

Wikipedia Topic-Area Arbitration Remedies: How Enforcement Works and What Actually Changes

Wikipedia's topic-area arbitration enforces rules in high-conflict editing zones through bans, co-editing rules, and automated checks. It's not perfect, but it's the most effective system of its kind, keeping articles stable and credible despite intense disputes.

How Wikipedia Protects High-Profile Articles During Breaking Events

Wikipedia uses automated alerts and volunteer editors to lock down high-profile articles during breaking events, preventing vandalism and misinformation. Protection levels vary based on threat level, and decisions are made rapidly by a global team of trusted editors.

Mass Deletion Debates on Wikipedia: Lessons From Notability Wars

Mass deletion debates on Wikipedia reveal how notability rules silently erase marginalized voices. Who gets remembered, and who gets deleted, depends not on importance but on who’s editing the page.

How Wikipedia's Arbitration Committee Makes Final Editorial Decisions

Wikipedia's Arbitration Committee handles the most serious editing disputes, making final, binding decisions based on community policies. Composed of elected volunteers, it enforces sanctions like topic bans and blocks when community mediation fails.