Wikipedia editors spend hours tracking down credible sources. One editor once spent 17 hours verifying a single claim about climate change policies - only to find a paywalled journal article that didn’t actually support the point. That’s not efficiency. That’s frustration. And it’s why AI tools for source discovery are changing how reliable information gets added to Wikipedia - not by replacing humans, but by doing the heavy lifting so humans can focus on judgment.

Why Source Discovery Is Still a Bottleneck

Wikipedia’s core content policies (verifiability, neutral point of view, and no original research) require that claims be backed by reliable, published sources. But finding those sources isn’t easy. Editors must sift through academic papers, government reports, reputable news outlets, and historical archives - often in languages they don’t speak. A 2024 study from the Wikimedia Foundation showed that 68% of edit reverts on English Wikipedia were due to poorly sourced claims. The problem isn’t that editors lack good intentions. It’s that the tools haven’t kept up.

General-purpose search engines like Google don’t help much. Their rankings favor popular pages, not authoritative ones. A search for "effect of minimum wage on employment" might surface a 2018 blog post before a peer-reviewed meta-analysis from the Journal of Economic Perspectives. And when editors cite obscure blogs or personal websites, they risk getting flagged by automated bots or human reviewers.

How AI Tools Are Changing the Game

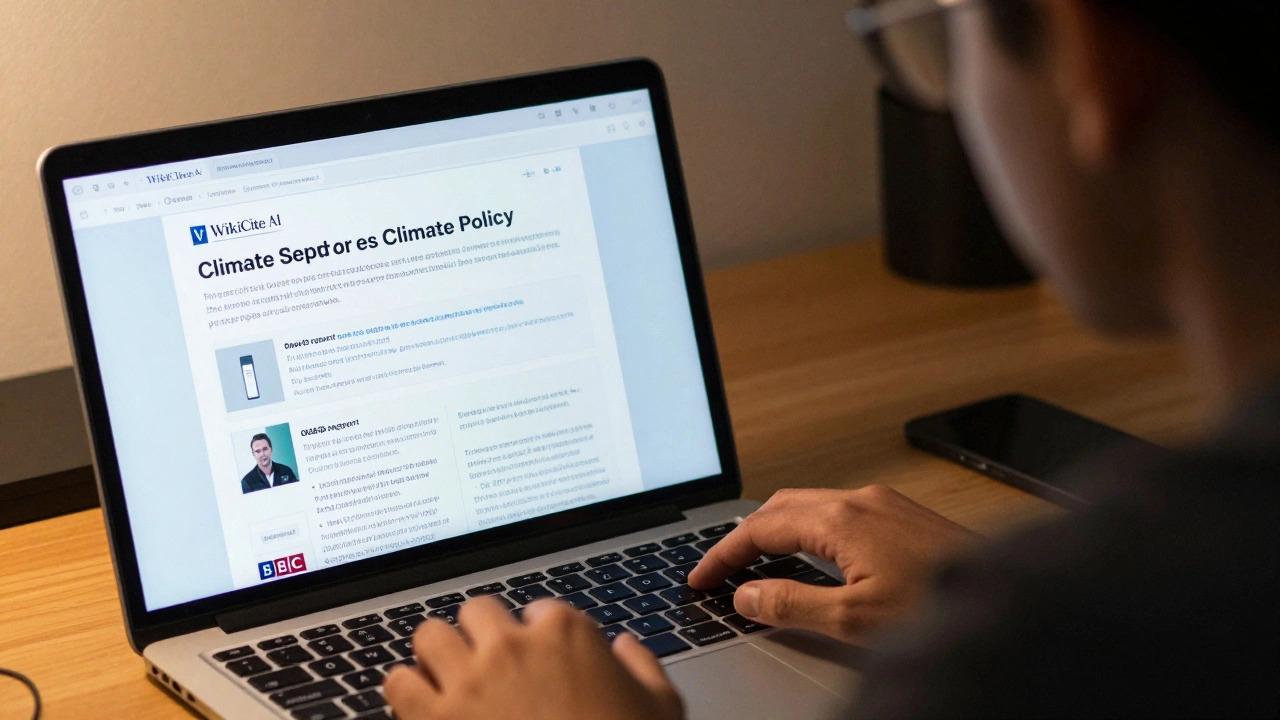

Today’s AI tools for source discovery don’t just search - they understand context. WikiCite AI, for example, is a specialized assistant for Wikipedia editors that uses natural language processing to match claims with peer-reviewed literature, official statistics, and verified news archives. When you paste a sentence like "The unemployment rate dropped by 2.1% after the policy change," the tool doesn’t just look for keywords. It identifies the policy, the country, and the timeframe, then scans databases like JSTOR, PubMed, OECD, and the World Bank’s open data portal for matching studies.
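The article doesn’t say how WikiCite AI parses a claim, so here is a deliberately tiny, hypothetical sketch of the first step: pulling machine-searchable hints (percentages, years, direction of change) out of a sentence. A real tool would almost certainly rely on trained NLP models to recognize entities like the policy and the country; the regexes, word lists, and the `extract_features` name are illustrative assumptions only.

```python
import re
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class ClaimFeatures:
    """Structured hints pulled from a free-text claim (hypothetical schema)."""
    percentages: list[float] = field(default_factory=list)
    years: list[int] = field(default_factory=list)
    direction: Optional[str] = None  # "decrease", "increase", or None


# Illustrative patterns only; real systems would use NER and parsing models.
PERCENT_RE = re.compile(r"(\d+(?:\.\d+)?)\s*%")
YEAR_RE = re.compile(r"\b(1[89]\d{2}|20\d{2})\b")
DOWN_WORDS = ("dropped", "fell", "declined", "decreased")
UP_WORDS = ("rose", "increased", "grew", "climbed")


def extract_features(claim: str) -> ClaimFeatures:
    """Extract numbers, years, and trend direction to build a search query."""
    text = claim.lower()
    feats = ClaimFeatures(
        percentages=[float(m) for m in PERCENT_RE.findall(text)],
        years=[int(y) for y in YEAR_RE.findall(text)],
    )
    # Simple substring checks for the direction of change in the claim
    if any(word in text for word in DOWN_WORDS):
        feats.direction = "decrease"
    elif any(word in text for word in UP_WORDS):
        feats.direction = "increase"
    return feats
```

For the article’s example sentence, this yields a 2.1% figure, no explicit year, and a "decrease" direction: enough to narrow a database query, but the policy and country would still need a smarter model.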

These tools also filter out low-quality sources. They can tell the difference between a university press publication and a self-published PDF on a .xyz domain. They check citation networks: if a source is cited by five other reputable papers, it gets a higher trust score. If it’s only cited by fringe blogs or predatory journals, it’s flagged.
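The citation-network idea can be sketched in a few lines. This is a minimal sketch, assuming each source already carries a publisher type and a `reputable` label; the `BASE_SCORE` weights, the 0.08 per-citation bonus, and the flagging rule are made-up values for illustration, not WikiCite AI’s actual scoring.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Source:
    name: str
    publisher_type: str  # e.g. "university_press", "self_published"
    reputable: bool      # hypothetical pre-computed label for this source


# Hypothetical starting scores by publisher type
BASE_SCORE = {
    "university_press": 0.6,
    "peer_reviewed_journal": 0.6,
    "government": 0.5,
    "news": 0.4,
    "self_published": 0.1,
}


def trust_score(source: Source, cited_by: list[Source],
                per_citation: float = 0.08) -> tuple[float, bool]:
    """Return (score in [0, 1], flagged) for a source and its citers."""
    base = BASE_SCORE.get(source.publisher_type, 0.2)  # unknown types start low
    reputable_citers = sum(1 for s in cited_by if s.reputable)
    # Each reputable citation adds a bonus, capped at 1.0
    score = min(1.0, base + per_citation * reputable_citers)
    # Flag sources that are cited, but only by non-reputable sources
    flagged = bool(cited_by) and reputable_citers == 0
    return round(score, 2), flagged
```

Under these assumed weights, a journal article cited by five reputable papers hits the 1.0 ceiling, while a self-published PDF cited only by fringe blogs stays at 0.1 and is flagged.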

One editor in Berlin used WikiCite AI to verify a claim about refugee integration in Germany. Instead of manually searching through 40 articles, the tool surfaced three peer-reviewed studies from German universities, one official report from the Federal Statistical Office, and a 2023 BBC investigation. The German-language sources came with context-aware translations of their key passages, so the editor didn’t have to read German. They just had to judge whether the findings matched the claim.

Key Features of Modern AI Source Tools

- Claim-to-source matching - Paste a sentence, get matching citations with confidence scores.

- Language-agnostic search - Works with sources in over 50 languages and auto-translates abstracts and key passages.

- Trust scoring - Rates sources based on publisher reputation, citation count, and peer-review status.

- Conflict detection - Flags if a source contradicts established consensus (e.g., climate science denial papers).

- Wikipedia integration - Directly inserts properly formatted citations into edit windows using Wikipedia’s citation templates.
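Wikipedia’s citation templates are plain wikitext, which makes the final insertion step easy to illustrate. The `{{cite journal}}` template and its `last`, `first`, `title`, `journal`, `year`, `volume`, `issue`, `pages`, and `doi` parameters are real parts of Wikipedia’s Citation Style 1; the `cite_journal` helper and the sample metadata are hypothetical, a sketch of what such a tool might emit rather than its actual code.

```python
# Parameter order we emit: a subset of what {{cite journal}} accepts
CITE_JOURNAL_ORDER = ("last", "first", "title", "journal", "year",
                      "volume", "issue", "pages", "doi")


def cite_journal(fields: dict[str, str], as_ref: bool = True) -> str:
    """Render a {{cite journal}} call from metadata, skipping empty fields."""
    parts = []
    for key in CITE_JOURNAL_ORDER:
        value = fields.get(key, "").strip()
        if value:
            # A bare "|" would start a new template parameter, so escape it
            escaped = value.replace("|", "{{!}}")
            parts.append(f"|{key}={escaped}")
    template = "{{cite journal " + " ".join(parts) + "}}"
    # Optionally wrap in <ref> tags so it becomes a footnote in the article
    return f"<ref>{template}</ref>" if as_ref else template
```

For example, `cite_journal({"last": "Doe", "first": "Jane", "title": "An example study", "journal": "Example Journal", "year": "2020"})` returns `<ref>{{cite journal |last=Doe |first=Jane |title=An example study |journal=Example Journal |year=2020}}</ref>`.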

These aren’t sci-fi ideas. They’re live tools used by over 12,000 active Wikipedia editors as of early 2026. The most widely adopted one, WikiCite AI, is open-source and runs on public servers funded by the Wikimedia Foundation. No subscription. No paywall. Just a browser extension and a login.

Real Impact: Numbers That Matter

Since WikiCite AI rolled out to the English Wikipedia in late 2024, the average time to verify a claim dropped from 42 minutes to 9 minutes. The number of unsourced edits in the "Recent Changes" feed fell by 53%. And here’s the most telling stat: editor retention increased by 28%. People who used to quit because source hunting felt like a chore are now staying longer - because the work feels meaningful again.
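To put the verification-time figure in perspective, here is the arithmetic using only the article’s own numbers:

```latex
\[
\text{time saved} = \frac{42 - 9}{42} = \frac{33}{42} \approx 0.786 \approx 78.6\%,
\qquad
\text{speed-up} = \frac{42}{9} \approx 4.7\times
\]
```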

A 2025 audit of 10,000 edits made with AI-assisted sourcing found that 94% of those citations were accepted by human reviewers. That’s higher than the 89% acceptance rate for manually sourced edits. Why? Because the AI didn’t just find sources - it found the right sources. The ones that were recent, relevant, and rigorously vetted.
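The gap between 94% and 89% looks small, but it is easier to read as a rejection rate: 6% versus 11%. This short sketch uses only the article’s figures (the 10,000-edit audit and the two acceptance rates); the function name is hypothetical, and it treats the two rates as directly comparable.

```python
def compare_acceptance(n_edits: int, ai_rate: float, manual_rate: float):
    """Compare AI-assisted and manual citation acceptance rates."""
    accepted = round(n_edits * ai_rate)          # accepted AI-assisted citations
    rejected = n_edits - accepted                # rejected AI-assisted citations
    pp_gap = round((ai_rate - manual_rate) * 100, 1)  # percentage-point gap
    # Relative drop in the rejection rate, e.g. from 11% to 6%
    relative_drop = 1 - (1 - ai_rate) / (1 - manual_rate)
    return accepted, rejected, pp_gap, relative_drop


accepted, rejected, gap, drop = compare_acceptance(10_000, 0.94, 0.89)
```

With these inputs it returns 9,400 accepted and 600 rejected citations, a gap of 5.0 percentage points, and roughly 45% fewer rejections per edit.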

What These Tools Can’t Do (And Why Humans Still Matter)

AI can’t judge nuance. It can’t tell if a study’s sample size is too small. It can’t recognize when a quote is taken out of context. It can’t understand cultural bias in historical archives. That’s where editors come in.

Imagine a claim: "The 1998 census in Country X showed 80% literacy among women." The AI finds a PDF of the census report. But the report was published by a government that later admitted to inflating literacy numbers. The AI doesn’t know that. An experienced editor does - because they’ve seen the pattern before. The tool gives them the lead. The editor brings the wisdom.

This isn’t about automation replacing judgment. It’s about automation enabling better judgment. Think of it like a microscope for fact-checking. It doesn’t tell you what’s real. It helps you see details you’d otherwise miss.

Getting Started with AI Source Tools

If you’re a Wikipedia editor, here’s how to start:

- Go to wikicite.ai (the official site).

- Sign in with your Wikipedia account.

- Install the browser extension (Chrome or Firefox).

- When editing, highlight any unsourced claim, click the AI icon, and wait 3 seconds.

- Review the suggested sources. Pick the best one. Insert the citation.

You don’t need to be tech-savvy. The interface is built for editors who care about accuracy, not code. There’s also a help bot inside the tool that answers common questions like "How do I cite a book from 1923?" or "Why did this source get a low score?"

The Bigger Picture: What This Means for Knowledge

Wikipedia is the world’s largest reference work, with over 500 million visitors every month. If even a fraction of its edits rest on shaky sources, trust in the whole system erodes. AI tools for source discovery aren’t just helping editors - they’re helping readers. They’re helping students, journalists, policymakers, and curious minds everywhere.

This is the future of knowledge: not locked behind paywalls, not buried in search results, but verified, transparent, and accessible. The tools are here. The technology works. The question isn’t whether we should use them. It’s whether we’ll let them change how we build truth - one citation at a time.

Can AI tools automatically add citations to Wikipedia?

No. AI tools suggest sources and help format citations, but every edit must be manually reviewed and approved by a human editor. This ensures accountability and prevents automated abuse. The tools are assistants, not editors.

Are these tools only for English Wikipedia?

No. The most advanced tools, like WikiCite AI, support over 50 languages. Editors on German, Spanish, Japanese, and Arabic Wikipedia use them daily. The tools adapt to regional source standards - for example, recognizing trusted local newspapers or academic journals in non-English contexts.

Do I need to pay for these tools?

No. All major tools for Wikipedia source discovery are free and open-source. They’re funded by the Wikimedia Foundation and nonprofit partners. There are no premium tiers, no subscriptions, and no ads.

Can AI tools detect biased or misleading sources?

Yes, to a degree. They flag sources with low trust scores - such as those with no peer review, high self-citation, or links to known disinformation networks. But they can’t catch every form of bias. Human editors still need to evaluate tone, context, and intent. AI reduces noise; humans handle nuance.
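The warning signs listed above translate naturally into simple rules. This is a minimal sketch, assuming a source record with a peer-review flag, reference counts, and the domains it links to; the 30% self-citation threshold and the `KNOWN_DISINFO_DOMAINS` placeholder set are hypothetical, and measuring self-citation as the share of a source’s references that point to its own authors’ work is just one possible definition.

```python
from dataclasses import dataclass, field

# Hypothetical placeholder; a real tool would maintain a curated list
KNOWN_DISINFO_DOMAINS = {"example-disinfo.test"}


@dataclass
class SourceRecord:
    peer_reviewed: bool
    total_references: int  # references the source itself makes
    self_citations: int    # of those, references to the same authors' work
    linked_domains: set[str] = field(default_factory=set)


def flag_reasons(rec: SourceRecord, self_cite_threshold: float = 0.3) -> list[str]:
    """Return human-readable reasons a source might deserve a low trust score."""
    reasons = []
    if not rec.peer_reviewed:
        reasons.append("no peer review")
    # Guard against division by zero for sources with no references
    if rec.total_references and rec.self_citations / rec.total_references > self_cite_threshold:
        reasons.append("high self-citation")
    if rec.linked_domains & KNOWN_DISINFO_DOMAINS:
        reasons.append("links to known disinformation domain")
    return reasons
```

Returning reasons rather than a bare score fits the division of labor described here: the tool explains why it is suspicious, and the editor decides.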

What happens if an AI tool suggests a wrong source?

Editors can reject the suggestion and report the error. The tools use that feedback to refine future suggestions, so the system is designed to improve over time, not to be perfect from day one.
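The article doesn’t describe how rejections feed back into the system. One simple possibility, shown here as a hypothetical sketch, is to keep accept/reject counts per source domain and use a smoothed acceptance rate (the posterior mean under a Beta(1, 1) prior, also known as Laplace smoothing) as a ranking prior. The `FeedbackPrior` class and the domain names are assumptions, not WikiCite AI’s design.

```python
from collections import defaultdict


class FeedbackPrior:
    """Tracks editor accept/reject decisions per source domain (hypothetical)."""

    def __init__(self, alpha: float = 1.0, beta: float = 1.0):
        self.alpha = alpha  # pseudo-count of acceptances
        self.beta = beta    # pseudo-count of rejections
        self.accepted: defaultdict[str, int] = defaultdict(int)
        self.rejected: defaultdict[str, int] = defaultdict(int)

    def record(self, domain: str, accepted: bool) -> None:
        """Log one editor decision about a suggested source."""
        if accepted:
            self.accepted[domain] += 1
        else:
            self.rejected[domain] += 1

    def prior(self, domain: str) -> float:
        """Smoothed acceptance rate; unseen domains start at alpha / (alpha + beta)."""
        a = self.accepted[domain] + self.alpha
        r = self.rejected[domain] + self.beta
        return a / (a + r)
```

A domain with no history starts at 0.5; after three rejections it drops to 0.2, so its suggestions would rank lower until editors start accepting them again.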