Fact-Checking on Wikipedia: How Reliable Sources and Human Editors Keep Truth Alive

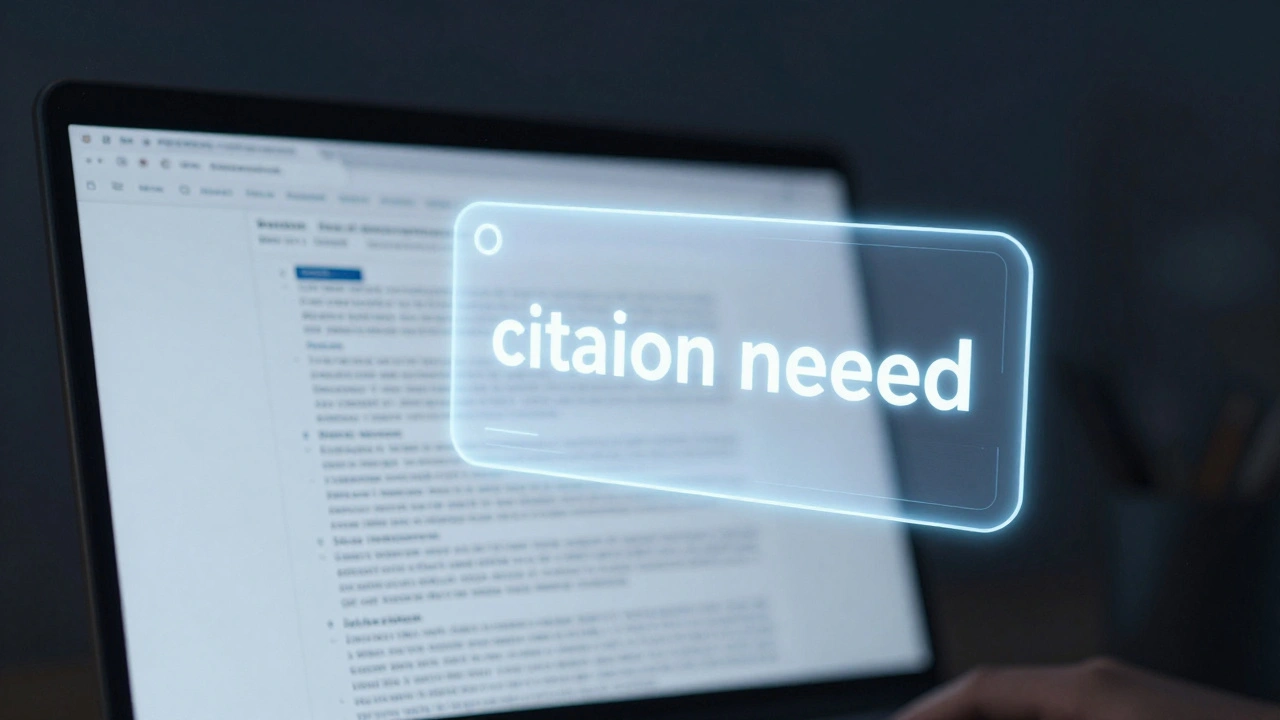

When you need to verify a claim, you rely on fact-checking: the process of verifying the accuracy of information against trusted evidence. Also known as source verification, it's what keeps Wikipedia from becoming just another collection of rumors. Unlike social media or AI-generated summaries, Wikipedia doesn't guess. Its content must be verifiable in published, reliable sources such as books, peer-reviewed journals, and reputable news outlets, and any claim that is challenged or likely to be challenged must carry a citation. This isn't optional. It's the rule. And it's why millions still turn to Wikipedia when they need to know what's real.

Behind every accurate article are volunteers who act like digital detectives. They check citations, track down original studies, and flag claims that don't hold up. Tools like the watchlist, a feature that lets editors monitor changes to specific articles, help them catch errors fast. When someone adds a false date, a misquoted statistic, or a made-up fact, these editors revert it and often leave a note explaining why. This isn't about being strict. It's about being honest. The reliable sources policy, which favors published material with editorial oversight that can be independently verified, is the backbone of this system. Primary or self-published sources like press releases or personal blogs? They're rarely enough. Secondary sources, like news reports that analyze events or academic papers that review multiple studies, are preferred because they add context and reduce bias.

What makes Wikipedia's fact-checking different from AI tools? AI can spit out citations that look real but don't actually support the claim. Wikipedia's editors don't just copy and paste; they read. They check whether the source says what it's supposed to. They know the difference between a study that's been peer-reviewed and one that's just posted online. And when there's disagreement? They don't fight. They discuss. Using policies like due weight, which asks that each significant viewpoint be represented in proportion to its prominence in reliable sources, they balance competing claims without giving equal space to fringe ideas. This is how Wikipedia stays ahead of AI encyclopedias that rely on patterns, not proof.

Journalists use Wikipedia not as a source but as a starting point. They look at the citations, follow the links, and find the real documents. That's fact-checking in action: turning a Wikipedia page into a trail of evidence. And when misinformation spreads? The community reacts. Whether it's a false rumor about a public figure or a misleading claim about science, volunteers jump in. They update, they cite, they explain. It's slow. It's quiet. But it works.

Below, you’ll find real stories from the front lines of this effort—how editors track down sources, how the community handles false claims, and why human judgment still beats algorithms when it comes to truth.

How Wikipedia Stops Misinformation During Breaking News

Discover how Wikipedia uses a mix of human moderators, automated bots, and strict sourcing rules to stop misinformation during breaking news events.

Inside the Fact-Checking and Correction Process at The Signpost

Explore how The Signpost maintains journalistic integrity through a rigorous fact-checking process and transparent corrections policy to build community trust.

How to Fact-Check and Verify Sources for Wikipedia Quality

Master the art of Wikipedia source verification. Learn how to spot reliable sources, avoid circular reporting, and use the SIFT method to improve article quality.

The Citation Cycle Problem: How Journalists Use Wikipedia Sources

Explore how citation cycles form when journalists rely on Wikipedia sources without independent verification. Learn the risks, real-world impacts, and practical solutions for maintaining media integrity.

Lessons from Past Breaking News Coverage on Wikipedia

Explore how Wikipedia handles breaking news, the risks involved with crowdsourced journalism, and lessons learned from past events.

Designing an Editorial Checklist for Citing Wikipedia in Newsrooms

A practical guide for newsrooms to create safe protocols for researching and verifying information found on collaborative online platforms.

Collaborative Journalism: How Newsrooms Partner With Wikipedians

Explore how professional newsrooms and Wikipedia volunteers are partnering to improve factual accuracy. Learn about the operational mechanics, benefits, and challenges of this growing trend in digital media.

Measuring Knowledge Integrity Across Encyclopedias with Open Benchmarks

Open benchmarks now measure how accurately encyclopedias like Wikipedia and Britannica present facts. Learn how knowledge integrity is being tested, what the results show, and why transparency matters more than ever.

When Wikipedia Should Not Be Used: Red Flags for Reporters

Wikipedia is a useful tool for journalists, but never a source. Learn the red flags that mean you should walk away from Wikipedia and how to find real, reliable information instead.

How Wikipedia Handles Rumors and Unconfirmed Reports During Crises

Wikipedia handles rumors during crises by relying on verified sources, protecting sensitive pages, and using community-driven fact-checking. It doesn't rush to publish; it only confirms what trusted outlets report. This method makes it one of the most reliable sources in chaotic moments.

Wikipedia Is Not a News Organization: Understanding the Philosophical Differences

Wikipedia isn't a news outlet: it doesn't break stories or chase deadlines. It waits for verified sources before updating, making it a reference tool, not a live feed. Understanding this difference helps you use it correctly.

How Press Freedom Shapes the Reliability of News Sources on Wikipedia

Press freedom enables accurate, independent journalism, which is the foundation of reliable information on Wikipedia. Without it, Wikipedia's content becomes incomplete, biased, or outdated.