Imagine waking up to a 90-second news briefing that sounds like it was written by a human, except it was generated entirely from data. No reporter was on the phone. No editor reviewed a draft. Just clean, accurate, up-to-the-minute facts pulled from a public database. This isn’t science fiction. It’s happening right now, and Wikidata is at the center of it.

Audio journalism has been around for decades, but most podcasts and radio segments still rely on human reporting: interviews, field recordings, narrative storytelling. That’s powerful. But it’s slow. And expensive. What if you could automate the daily updates (stock prices, sports scores, weather alerts, election results) without losing accuracy or tone? Enter structured data. And Wikidata, the free, collaborative knowledge base behind Wikipedia, is making it possible.

What Wikidata Actually Does

Most people know Wikipedia. Few know Wikidata. But if you’ve ever seen a Wikipedia infobox with clean numbers, like population stats, Olympic medal counts, or birth dates of celebrities, there’s a good chance that data didn’t come from a journalist. On many language editions of Wikipedia, it came from Wikidata.

Wikidata is a machine-readable database. It doesn’t tell stories. It stores facts: Barack Obama was born on August 4, 1961. Paris has a population of about 2,161,000. The date of the 2026 World Cup final is July 19. Each fact is a triple: subject, predicate, object. Statements can carry references to their sources, edits go live immediately, and volunteers review the changes.
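To make the triple idea concrete, here is a minimal Python sketch. The `Statement` class and the `values_for` helper are hypothetical names invented for illustration, not part of any Wikidata library. The identifiers are real Wikidata IDs: Q76 is Barack Obama, P569 is date of birth, Q90 is Paris, and P1082 is population.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Statement:
    """One fact as a subject-predicate-object triple (illustrative only)."""
    subject: str    # item ID, e.g. "Q76" (Barack Obama)
    predicate: str  # property ID, e.g. "P569" (date of birth)
    value: str      # the value, kept as a plain string in this sketch


# Two of the facts from the text above, written as triples.
facts = [
    Statement("Q76", "P569", "1961-08-04"),
    Statement("Q90", "P1082", "2161000"),
]


def values_for(subject, predicate, statements):
    """Return every value recorded for one subject and one property."""
    return [
        s.value
        for s in statements
        if s.subject == subject and s.predicate == predicate
    ]


print(values_for("Q76", "P569", facts))
```

Real Wikidata statements also carry ranks, qualifiers, and references, which this sketch leaves out.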

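Unlike many commercial databases, Wikidata isn’t locked behind a paywall. Its data is released under a CC0 public-domain dedication, so anyone can reuse it. (The public query service does enforce timeouts and rate limits, so heavy users should cache results or work from the data dumps.) It’s open. Free. And globally maintained. Over 100 million items. More than 1 billion statements. And it’s growing every minute.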

Why Audio Journalism Needs This

Newsrooms are shrinking. Local radio stations can’t afford full-time reporters for every beat. But people still want to know: Did the city council approve the budget? How many people voted in the mayoral election? What’s the latest on the flu outbreak?

These aren’t opinion pieces. They’re facts. And facts are perfect for automation.

Take a small-town radio station in Wisconsin. Every morning at 7:15, they broadcast a 60-second update: local weather, school closures, bus delays, and county crime stats. Before, a volunteer had to call three offices, check three websites, and write a script. Now? They use a simple script that pulls data from Wikidata, formats it into natural speech, and plays it automatically.

The result? Faster updates. Fewer errors. And no one working at 4 a.m.

How It Works: From Data to Voice

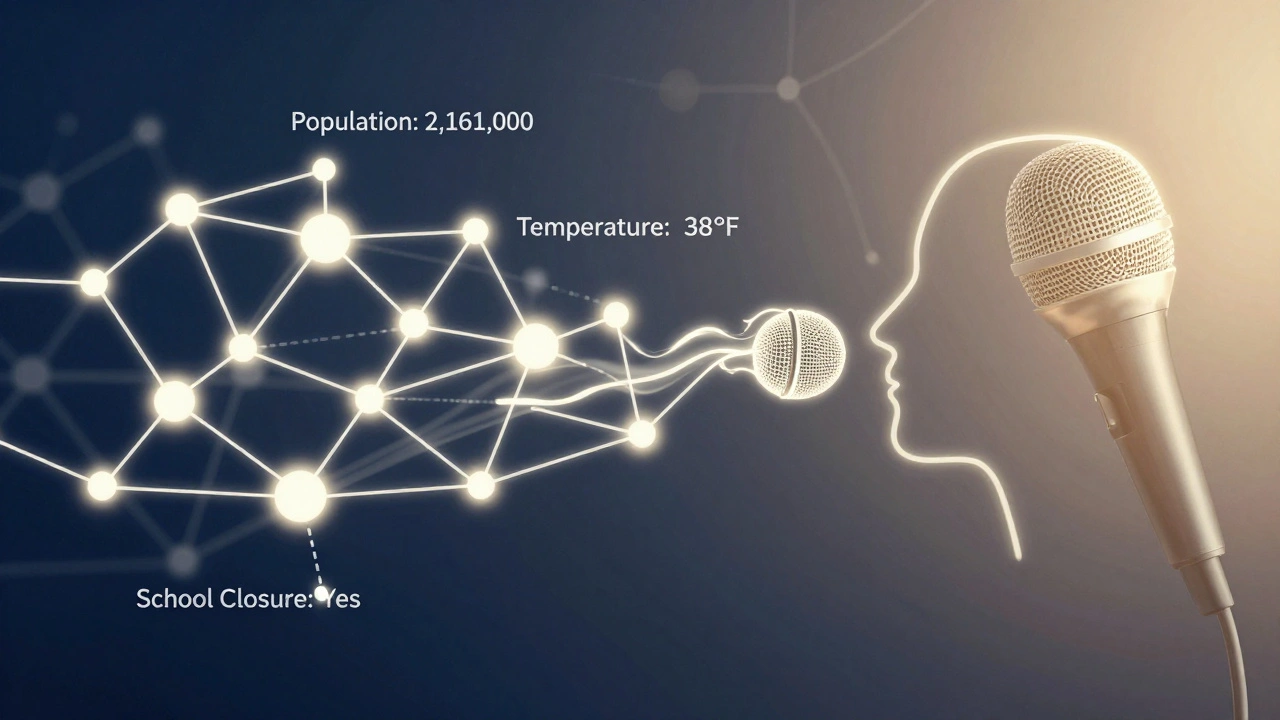

Here’s the step-by-step process behind a Wikidata-powered audio briefing:

- Define the data needs: What facts are needed? (e.g., daily temperature, highest wind gust, precipitation chance)

- Query Wikidata: Use SPARQL, the language for querying structured data, to pull the latest values. Example: "What was the average temperature in Madison on March 3, 2026?"

- Format the output: Convert numbers into plain language. "The high was 38°F" not "38.2".

- Text-to-speech: Use a natural-sounding voice engine (like Coqui TTS or Amazon Polly) to turn text into audio.

- Schedule and play: Automate delivery via a radio streaming platform or podcast feed.
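The middle steps above can be sketched in a few lines of Python. This is a rough illustration under stated assumptions, not a production pipeline: the function names are made up, the example uses population (P1082) for Paris (Q90) because that property is known to exist, and `synthesize` is only a placeholder where a real text-to-speech engine would be called.

```python
def build_query(item_id: str, property_id: str) -> str:
    """Step 2: SPARQL asking for the current best value of one property.

    The wd: and wdt: prefixes are predefined on the Wikidata Query Service.
    """
    return (
        f"SELECT ?value WHERE {{ wd:{item_id} wdt:{property_id} ?value . }} "
        "LIMIT 1"
    )


def spoken_number(n: float) -> str:
    """Step 3: round for the ear; listeners don't need decimals."""
    n = round(n)
    if n >= 1_000_000:
        return f"about {n / 1_000_000:.1f} million"
    return f"{n:,}"


def build_script(place: str, population: float) -> str:
    """Step 3, continued: wrap the number in a plain sentence."""
    return f"{place} has a population of {spoken_number(population)}."


def synthesize(text: str) -> bytes:
    """Step 4 placeholder: a real system would call a TTS engine here."""
    return text.encode("utf-8")


print(build_query("Q90", "P1082"))
print(build_script("Paris", 2161000))
```

Keeping each step in its own function makes it easy to swap the voice engine or the data source without touching the rest.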

This isn’t theoretical. The BBC tested it in 2023 with local weather updates. NPR used it for daily congressional vote tallies. A nonprofit in Minnesota now delivers daily school closure alerts to 12 rural radio stations, all powered by Wikidata.

The Hidden Advantage: Accuracy and Trust

When a human writes a news brief, mistakes happen. A typo. A misheard number. A source that’s outdated.

With Wikidata, facts are traceable. A Wikipedia article links to its Wikidata item. Open a statement there and, where a reference has been added, you’ll see the source: maybe a government report, a scientific journal, or a census update. If the data changes, the audio changes. Automatically.
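Here is a small sketch of how a script might follow that trail. It assumes a statement in the shape Wikidata’s entity JSON uses, where each reference holds "snaks" keyed by property ID and P854 is the "reference URL" property. The `reference_urls` helper and the sample data (including the example.org address and the placeholder item ID) are invented for illustration.

```python
def reference_urls(statement: dict) -> list:
    """Collect every reference URL (property P854) attached to a statement."""
    urls = []
    for ref in statement.get("references", []):
        for snak in ref.get("snaks", {}).get("P854", []):
            if snak.get("snaktype") == "value":
                urls.append(snak["datavalue"]["value"])
    return urls


# Made-up sample: one reference with a URL, one citing another item (P248).
sample_statement = {
    "mainsnak": {"property": "P1082"},
    "references": [
        {"snaks": {"P854": [{
            "snaktype": "value",
            "datavalue": {"value": "https://example.org/census", "type": "string"},
        }]}},
        {"snaks": {"P248": [{
            "snaktype": "value",
            # Placeholder ID for illustration, not a real item.
            "datavalue": {"value": {"id": "Q_PLACEHOLDER"}, "type": "wikibase-entityid"},
        }]}},
    ],
}

print(reference_urls(sample_statement))
```

A station could log these URLs alongside each briefing so that anyone asking "where did that number come from?" gets an answer.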

That’s trust you can’t buy. Listeners don’t need to wonder if the station got it right. They know it came from a public, verifiable source.

What You Can Build With This

Here are real examples already in use:

- Local transit updates: Bus delays, route changes, service outages pulled from transit agency open data feeds linked to Wikidata.

- Environmental alerts: Air quality index, river levels, wildfire risk, updated hourly.

- School and public service notices: Closures, holiday schedules, public meeting times.

- Community milestones: "Today marks the 50th anniversary of the opening of the Madison Public Library." (Wikidata has event dates for thousands of local landmarks.)
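The milestone line in the last item is simple date arithmetic once an opening date is known. The sketch below assumes the date has already been fetched (on Wikidata it would typically sit under a property such as inception, P571). The helper names, the every-five-years rule, and the 1976 opening date are all made up for illustration.

```python
from datetime import date


def ordinal(n: int) -> str:
    """Turn 50 into '50th', 21 into '21st', and so on."""
    if 10 <= n % 100 <= 20:
        suffix = "th"
    else:
        suffix = {1: "st", 2: "nd", 3: "rd"}.get(n % 10, "th")
    return f"{n}{suffix}"


def anniversary_line(name: str, opened: date, today: date):
    """Return a sentence on a five-year anniversary, otherwise None."""
    if (today.month, today.day) != (opened.month, opened.day):
        return None
    years = today.year - opened.year
    if years <= 0 or years % 5 != 0:
        return None
    return f"Today marks the {ordinal(years)} anniversary of the opening of {name}."


# The opening date here is invented for the example.
print(anniversary_line("the Madison Public Library", date(1976, 3, 3), date(2026, 3, 3)))
```

Returning `None` on ordinary days lets the briefing script skip the segment rather than read out an empty line.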

Even sports. A high school radio station in Iowa now plays a 30-second update after every game: final score, leading scorer, next opponent, all pulled from Wikidata’s sports event database.

Limitations and Challenges

This isn’t magic. There are limits.

First, not all data is in Wikidata. If a town doesn’t publish its budget online, Wikidata won’t have it. You still need human reporters for context, investigation, and storytelling.

Second, voice synthesis isn’t perfect. Robotic tones still exist. But tools like ElevenLabs and Coqui TTS now sound natural enough that many listeners won’t notice the difference in a short briefing.

Third, quality control. Who checks the data? Volunteers. Wikidata relies on community moderation. Most entries are accurate, but bad data slips through. Wikidata itself doesn’t assign a confidence score, so careful systems apply their own check, for example using only statements backed by two or more references.
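The two-source rule could be sketched like this. It assumes statements in the shape of Wikidata’s entity JSON, where each statement has a "rank" and an optional "references" list. The `well_sourced` function and the sample data are hypothetical, and counting references is only a rough proxy: it says nothing about whether the sources are independent or reliable.

```python
def well_sourced(statements, min_refs=2):
    """Keep non-deprecated statements that have at least min_refs references."""
    return [
        s for s in statements
        if s.get("rank") != "deprecated"
        and len(s.get("references", [])) >= min_refs
    ]


# Made-up sample: only the first statement passes the default rule.
sample = [
    {"id": "a", "rank": "normal", "references": [{}, {}]},
    {"id": "b", "rank": "normal", "references": [{}]},
    {"id": "c", "rank": "deprecated", "references": [{}, {}, {}]},
    {"id": "d", "rank": "preferred"},
]

print([s["id"] for s in well_sourced(sample)])
```

When nothing passes the filter, the safer behavior is to drop that item from the briefing rather than read an unsourced number on air.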

Where This Is Headed

By 2027, we’ll see local newsrooms using Wikidata to generate daily briefings in multiple languages. A Spanish-speaking community in Texas might get its weather update in Spanish, while the English version runs on the main station. All from the same data source.
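One plausible way to get two languages from one data point is a sentence template per language. The sketch below is an assumption about how a station might do it, not a description of any existing tool; the `TEMPLATES` table and `render` function are invented for illustration.

```python
# One template per language; the number comes from the same data source.
TEMPLATES = {
    "en": "The high today was {temp} degrees.",
    "es": "La temperatura máxima de hoy fue de {temp} grados.",
}


def render(lang: str, temp: float) -> str:
    """Fill the template for one language with the rounded temperature."""
    return TEMPLATES[lang].format(temp=round(temp))


for lang in ("en", "es"):
    print(render(lang, 38.2))
```

Each rendered sentence would then go to a voice in the matching language.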

Imagine a world where every small-town radio station, every community podcast, every library’s audio bulletin board can deliver accurate, timely news without hiring a single journalist. That’s not just efficiency. It’s equity.

Audio journalism isn’t dying. It’s evolving. And structured data (clean, open, global) is giving it a new voice.

Can Wikidata replace journalists entirely?

No. Wikidata handles facts, not context. It can tell you how many people voted, but not why they voted, or what it means for the community. Human journalists are still needed for investigation, analysis, and storytelling. Wikidata just takes over the repetitive, data-heavy tasks.

Do I need technical skills to use Wikidata for audio news?

Not necessarily. The Wikidata Query Service includes a query helper, and the separate Wikidata Query Builder lets you assemble a query from form fields instead of writing SPARQL by hand. There are also open-source templates, like the "Audio Briefing Generator", that let you plug in your location and get a working script in minutes. No coding required.

Is Wikidata data reliable enough for news?

Often, for factual data, as long as you check the sourcing. Wikidata encourages references but doesn’t require one on every statement: many entries have three or more, and some have none. Government data, scientific studies, and official statistics are commonly cited. For critical reporting, systems should be designed to use only statements that carry multiple references and pass their own confidence checks.

Can I use this for my local podcast?

Absolutely. Many small podcasters are already doing it. You can start with one daily update-like weather or local events-and expand. There are free tools, tutorials, and even community forums where people share their scripts and voice settings.

What if the data changes after the audio is recorded?

If you’re automating the process, the audio is generated fresh every time. So if a new report comes in at 5 a.m., the 7 a.m. briefing reflects it. No need to re-record. The system pulls the latest data each run.

Next Steps for Stations and Creators

If you’re running a local audio news project, here’s how to start:

- Identify one repetitive fact you report daily: temperature, election results, school closures.

- Search Wikidata for that data point. Type it into the search box on Wikidata.org and check whether it’s there and how recently it was updated.

- Use the Wikidata Query Service to build a simple SPARQL query. Its Examples menu offers sample queries you can adapt.

- Connect it to a text-to-speech tool. Try Coqui TTS (open source) or Google’s Text-to-Speech API.

- Test it. Play it. Listen. Adjust the tone. Add a human intro.

- Scale. Add one more fact next week.
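For the query step, here is a minimal sketch that uses only Python’s standard library. It targets the public SPARQL endpoint at query.wikidata.org and parses the standard SPARQL JSON result format. The function names and the User-Agent string are placeholders; Wikimedia asks automated clients to send a descriptive User-Agent, so replace it with your own project name and contact.

```python
import json
import urllib.parse
import urllib.request

ENDPOINT = "https://query.wikidata.org/sparql"


def build_request(query: str) -> urllib.request.Request:
    """Prepare a GET request asking the endpoint for JSON results."""
    params = urllib.parse.urlencode({"query": query, "format": "json"})
    return urllib.request.Request(
        ENDPOINT + "?" + params,
        # Placeholder identity; use your own project name and contact.
        headers={"User-Agent": "ExampleBriefingBot/0.1 (contact@example.org)"},
    )


def first_value(payload: dict, var: str = "value"):
    """Pull the first binding of one variable from SPARQL JSON results."""
    bindings = payload["results"]["bindings"]
    return bindings[0][var]["value"] if bindings else None


def fetch_value(query: str):
    """Run the query over the network and return the first value, if any."""
    with urllib.request.urlopen(build_request(query), timeout=30) as resp:
        return first_value(json.load(resp))


if __name__ == "__main__":
    # Population (P1082) of Paris (Q90); requires network access.
    print(fetch_value("SELECT ?value WHERE { wd:Q90 wdt:P1082 ?value . } LIMIT 1"))
```

The returned value is always a string, so convert it to a number before formatting it for speech, and cache results rather than querying on every playback.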

This isn’t about replacing journalism. It’s about freeing journalists to do what humans do best: ask why, dig deeper, and tell stories that matter.