When a major event breaks, whether a plane crash, a political resignation, or a natural disaster, Wikipedia doesn’t sit back and wait for the news cycle to catch up. It updates in real time. And behind every change you see on a Wikipedia page during a fast-moving event, there’s a system built for speed, accuracy, and accountability: time-stamping and edit summaries.

Why Time-Stamping Matters More Than You Think

Every edit on Wikipedia gets a precise timestamp down to the second. That’s not just for show. During breaking news, when conflicting reports flood in, those timestamps become the first line of defense against misinformation. If two editors add contradictory facts about a developing story, you can scroll through the history and see exactly when each version was posted. One edit might say a building collapsed at 3:14 p.m.; another might say 3:17 p.m. The edit timestamps tell you which version came first, which one was later corrected, and which one might have been based on a rumor.

It’s not just about order. It’s about trust. A reader checking Wikipedia during a crisis doesn’t have time to dig through sources. They rely on the sequence of edits to infer reliability. If an edit made at 3:15 p.m. includes a quote from a verified news outlet and gets no reverts, it’s more likely to be accurate than an edit made at 3:16 p.m. with no sourcing. The timestamp doesn’t prove truth, but it helps you find it faster.
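To make the ordering idea concrete, here is a minimal Python sketch that sorts a handful of revisions by timestamp and measures the gap between the first two. The revision records, user names, and summaries are invented for illustration; only the timestamp shape (ISO 8601 in UTC) is assumed to resemble what a page history exposes.

```python
from datetime import datetime, timezone

# Invented sample revisions, deliberately out of order.
revisions = [
    {"timestamp": "2024-01-01T12:17:42Z", "user": "EditorB", "summary": "Collapse time per local TV"},
    {"timestamp": "2024-01-01T12:14:05Z", "user": "EditorA", "summary": "Added collapse time per AP"},
    {"timestamp": "2024-01-01T12:21:30Z", "user": "EditorC", "summary": "Corrected time per official statement"},
]

def parse_utc(ts: str) -> datetime:
    """Parse an ISO 8601 UTC timestamp such as 2024-01-01T12:14:05Z."""
    return datetime.strptime(ts, "%Y-%m-%dT%H:%M:%SZ").replace(tzinfo=timezone.utc)

def in_order(revs):
    """Return the revisions sorted oldest first."""
    return sorted(revs, key=lambda r: parse_utc(r["timestamp"]))

ordered = in_order(revisions)
for rev in ordered:
    print(rev["timestamp"], rev["user"], "-", rev["summary"])

# Time elapsed between the first edit and the one that followed it.
gap = parse_utc(ordered[1]["timestamp"]) - parse_utc(ordered[0]["timestamp"])
print("Gap between first two edits:", gap)
```

Sorting only tells you which edit came first. Whether the earlier or the later one is right still depends on the sources behind it.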

Edit Summaries: The Quiet Heroes of Real-Time Editing

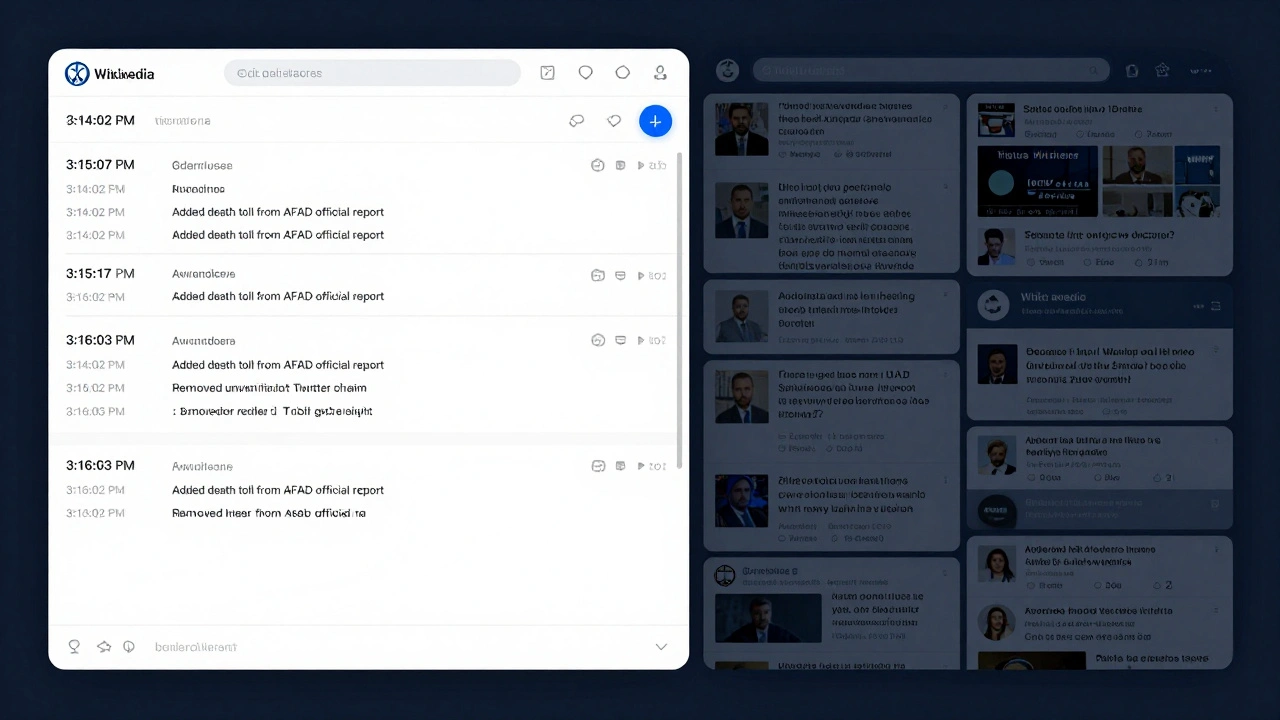

Ever notice that tiny line below an edit that says “Added death toll from official statement” or “Fixed location based on AP report”? That’s an edit summary. It’s optional, but during fast changes, it’s mandatory in practice.

Without good summaries, Wikipedia’s edit history would be a wall of meaningless changes: “updated,” “fixed,” “added info.” That’s useless when you’re trying to trace how a page evolved during a crisis. Good summaries answer three questions: What changed? Why? And where did you get it?

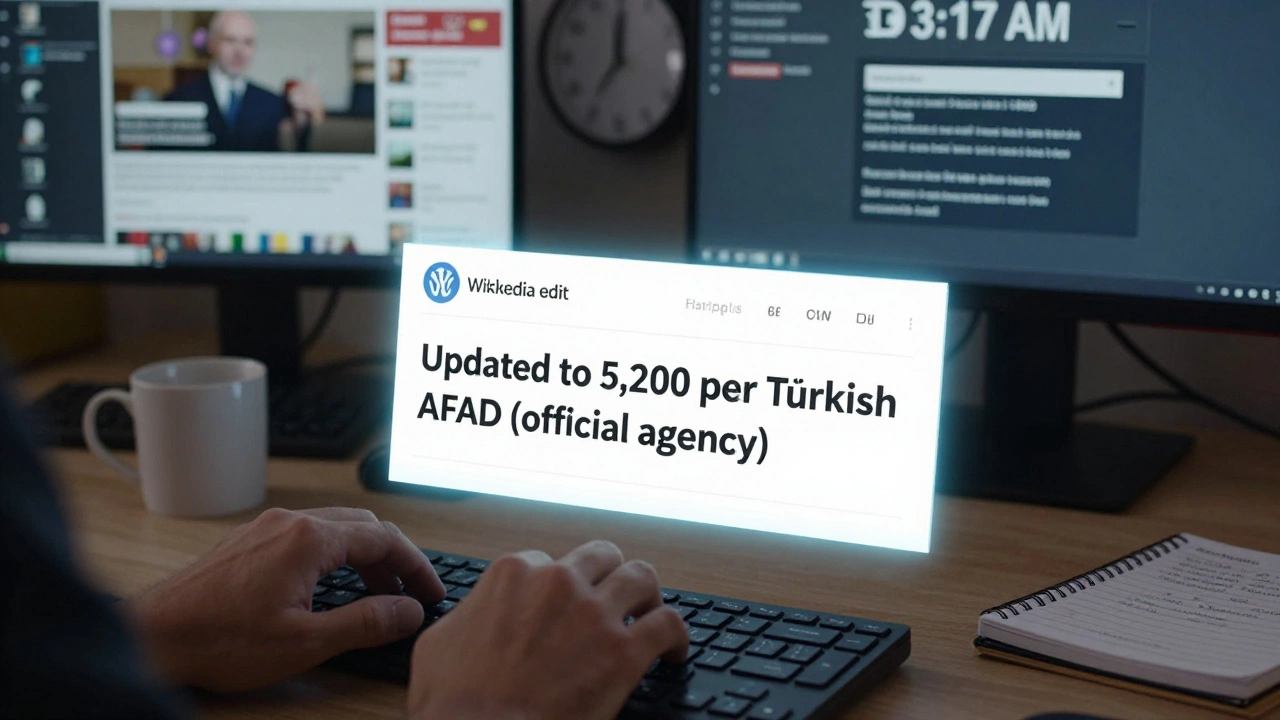

During the 2023 earthquake in Turkey, editors on the main article updated the death toll five times in 48 hours. Each time, the summary included the source: “Updated to 5,200 per Turkish AFAD (official agency).” That meant anyone, whether journalist, researcher, or emergency responder, could verify the number without clicking through links. No guesswork. No confusion.

Bad summaries? They’re dangerous. “Changed number” or “Fixed typo” during a live event invites suspicion. Editors who write vague summaries often get flagged, reverted, or even blocked. The community doesn’t tolerate ambiguity when lives are on the line.
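The difference between a useful summary and a vague one can be roughed out in code. The sketch below is a toy heuristic, not anything Wikipedia runs: the list of vague phrases comes from the examples in this article, and the "source cue" words are an assumption about how sourced summaries tend to be phrased.

```python
import re

# Phrases this article calls out as too vague; illustrative, not official policy.
VAGUE_SUMMARIES = {"updated", "fixed", "added info", "changed number", "updated info"}

# Hypothetical cue words suggesting the summary names where the fact came from.
SOURCE_CUES = re.compile(r"\b(per|from|according to|based on)\b", re.IGNORECASE)

def review_summary(summary: str) -> str:
    """Classify an edit summary as 'missing', 'vague', 'sourced', or 'unsourced'."""
    text = summary.strip().rstrip(".").lower()
    if not text:
        return "missing"
    if text in VAGUE_SUMMARIES:
        return "vague"
    if SOURCE_CUES.search(summary):
        return "sourced"
    return "unsourced"

for example in [
    "Changed number",
    "Updated to 5,200 per Turkish AFAD (official agency)",
    "Fixed location based on AP report",
    "",
]:
    print(repr(example), "->", review_summary(example))
```

A keyword check like this only catches the obvious cases. It cannot tell whether the named source actually says what the edit claims, which is why human review still matters.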

How Wikipedia Stays Accurate When Everyone’s Rushing

Wikipedia doesn’t have editors on standby for every global event. But it does have a network of thousands who monitor trending pages. Tools like Recent Changes and Watchlists alert experienced users when a page is being edited rapidly. These aren’t bots. These are real people (teachers, journalists, students) who have spent years learning how to spot false claims.

Here’s how it works in practice:

- A breaking news story triggers a spike in edits to a related Wikipedia page.

- Experienced editors notice the surge and jump in to review changes.

- They check each edit against trusted sources: official government releases, major news agencies (AP, Reuters, BBC), and verified social media accounts.

- Unsourced or speculative edits are reverted with clear summaries: “Removed unverified claim from Twitter account.”

- Verified updates are added with full citations and precise summaries.
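The review steps above can be sketched as a simple triage function. This is a simplified model of what a human reviewer does, not a description of any real Wikipedia tool: the `Edit` class, the `triage` function, and the three-domain allowlist are all hypothetical, and real editors weigh far more context than a domain name.

```python
from dataclasses import dataclass
from typing import Optional
from urllib.parse import urlparse

# Illustrative allowlist only; Wikipedia has no single fixed list like this.
TRUSTED_DOMAINS = {"apnews.com", "reuters.com", "bbc.com"}

@dataclass
class Edit:
    text: str
    source_url: Optional[str] = None

def triage(edit: Edit) -> str:
    """Suggest an action for an edit based only on its cited source."""
    if not edit.source_url:
        return "revert: no source cited"
    host = (urlparse(edit.source_url).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    if host in TRUSTED_DOMAINS:
        return "keep: cited to " + host
    return "review: source needs a human check"

print(triage(Edit("Death toll rises", "https://www.reuters.com/world/example")))
print(triage(Edit("Rumor from a trending post")))
```

Anything that is neither clearly sourced nor clearly unsourced lands in the "review" bucket on purpose, since that is where human judgment is needed.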

This isn’t perfect. Sometimes false info slips through. In 2022, a false report that a U.S. senator had died spread across multiple platforms, including Wikipedia. It was live for 22 minutes before being corrected. That’s faster than most news outlets, but still too long. The fix? More editors now monitor high-risk pages 24/7 during major events.

The Role of Auto-Flags and Bots

Wikipedia isn’t all human. Automated tools help, but they don’t make decisions; they flag risks. A bot might notice that a page is being edited 15 times in 10 minutes and automatically tag it as “high-risk.” That triggers a warning for experienced editors to review it. Another bot checks if new edits include links to known misinformation sites and blocks them before they’re saved.

But bots can’t judge context. If a headline says “President resigns,” a bot might think it’s fake if the president hasn’t officially confirmed it. But a human editor knows that if multiple major news outlets report it, and the White House press secretary hasn’t denied it, it’s likely true. That’s why bots are assistants, not arbiters.
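The "15 edits in 10 minutes" rule is easy to picture as a sliding window. Here is a minimal sketch of that idea, assuming edits arrive in chronological order. The `EditRateMonitor` class is hypothetical and is not how any actual Wikipedia bot is implemented.

```python
from collections import deque
from datetime import datetime, timedelta

class EditRateMonitor:
    """Flag a page once it receives max_edits edits within the window."""

    def __init__(self, max_edits: int = 15, window: timedelta = timedelta(minutes=10)):
        self.max_edits = max_edits
        self.window = window
        self._recent = deque()

    def record(self, when: datetime) -> bool:
        """Record one edit; return True if the page should be flagged for review."""
        self._recent.append(when)
        # Drop edits that have fallen out of the window.
        while self._recent and when - self._recent[0] > self.window:
            self._recent.popleft()
        return len(self._recent) >= self.max_edits

# Simulated burst: one edit every 30 seconds.
start = datetime(2024, 1, 1, 12, 0, 0)
monitor = EditRateMonitor()
flags = [monitor.record(start + timedelta(seconds=30 * i)) for i in range(15)]
print("Flagged on edit number:", flags.index(True) + 1)
```

Note that the monitor only raises a flag. Deciding what to do about a flagged page is left to a person, which matches the "assistants, not arbiters" point above.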

What Happens When Sources Conflict?

During fast-moving events, even trusted sources disagree. That’s when Wikipedia’s policy of verifiability over truth kicks in. Wikipedia doesn’t claim to know what’s true. It only reports what reliable sources say.

For example, during the 2024 U.S. presidential debate, one network reported a candidate said “climate change is a hoax.” Another reported they said “climate change is a serious threat.” Both were live-streamed. Wikipedia editors didn’t pick a side. They wrote: “Multiple news outlets reported conflicting statements from the candidate regarding climate change. AP reported X, CNN reported Y.”

That’s the gold standard. No bias. No guesswork. Just a clear record of what was said, by whom, and when. The edit summary? “Added conflicting reports from AP and CNN during debate.”

Why This System Works, and Why It’s Unique

No other public knowledge source does this. News sites update, but they don’t show you every draft. Academic databases wait for peer review. Wikipedia’s magic is transparency. You can see every version. Every edit. Every reason. Every source.

It’s not just for experts. A high school student researching a natural disaster can trace how the page evolved. A journalist can verify how a statistic changed over time. A researcher can analyze editing patterns to detect misinformation trends.

The system works because it’s built on community trust, not authority. No one person controls Wikipedia. But thousands of people, many of them volunteers with no formal training, work together to keep it accurate. And during breaking news, that collective effort is what keeps the truth from getting lost in the noise.

What You Can Do to Help

If you’re reading this during a breaking event, you might think, “I’m not an expert, so I can’t help.” But you can. Here’s how:

- If you see an edit with no source, don’t just revert it. Add a comment like “Can you cite a source?”

- If you’re confident in a fact from a reputable outlet, edit the page and write a clear summary: “Added death toll from FEMA official statement (fema.gov, 10:32 a.m.).”

- Don’t edit from unverified social media. Even if it’s trending, if it’s not confirmed by a major news organization, it doesn’t belong on Wikipedia.

- Use the “View history” tab. See how the page changed. That’s how you learn what works.

You don’t need to be a Wikipedia veteran. You just need to be careful, curious, and clear.
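If you would rather read a page’s history programmatically than through the “View history” tab, the MediaWiki Action API exposes the same timestamps and edit summaries. The sketch below builds a `prop=revisions` query and pulls out the timestamp, user, and summary for each revision. The function names and the User-Agent string are placeholders, and the parsing assumes the `formatversion=2` response shape, so treat it as a starting point rather than a finished client.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request, urlopen

API = "https://en.wikipedia.org/w/api.php"

def history_url(title: str, limit: int = 10) -> str:
    """Build a query URL for the most recent revisions of a page."""
    params = {
        "action": "query",
        "prop": "revisions",
        "titles": title,
        "rvprop": "timestamp|user|comment",
        "rvlimit": limit,
        "format": "json",
        "formatversion": 2,
    }
    return API + "?" + urlencode(params)

def summarize(response: dict):
    """Yield (timestamp, user, summary) for each revision in an API response."""
    for page in response.get("query", {}).get("pages", []):
        for rev in page.get("revisions", []):
            yield rev.get("timestamp"), rev.get("user"), rev.get("comment", "")

def fetch_history(title: str, limit: int = 10):
    """Fetch and summarize a page's recent history (requires network access)."""
    # Placeholder User-Agent; replace it with one that identifies your script.
    req = Request(history_url(title, limit), headers={"User-Agent": "history-sketch/0.1 (example)"})
    with urlopen(req, timeout=10) as resp:
        return list(summarize(json.load(resp)))
```

Calling `fetch_history("Earth")` should return the latest revisions of that article, newest first, as long as the network call succeeds.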

Why doesn’t Wikipedia just wait for official announcements before updating?

Wikipedia doesn’t wait because breaking news evolves faster than official statements. Governments and agencies often release updates slowly or in fragments. Wikipedia’s goal is to reflect what reliable media sources are reporting *right now*, not what an official body says later. If multiple credible outlets report the same fact, Wikipedia includes it, even if the government hasn’t confirmed it yet. Waiting too long risks leaving readers with outdated or incomplete information.

Can anyone edit Wikipedia during a breaking news event?

Yes, anyone can. But not all edits stick. During fast-moving events, experienced editors monitor changes closely. Unsourced edits, speculative claims, or edits from new or suspicious accounts are often reverted within minutes. The system is open, but it’s also self-correcting. The community acts quickly to protect accuracy.

Do edit summaries have to be perfect?

They don’t have to be perfect, but they do have to be clear and specific. “Updated info” is too vague. “Added death toll from AP report at 2:15 p.m. EST” is good. Editors who consistently write poor summaries may be asked to improve or be temporarily restricted. During crises, clarity isn’t optional; it’s critical.

What happens if someone adds false information on purpose?

False edits are usually caught within minutes, sometimes seconds. Automated tools flag rapid changes, and experienced editors patrol high-traffic pages. Once detected, the edit is reverted, and the user may be blocked. Repeat offenders, especially those adding misinformation during emergencies, face long-term bans. The community takes this seriously: false edits during crises can have real-world consequences.

Is Wikipedia more reliable than news sites during breaking news?

It’s not necessarily more reliable; it’s more transparent. News sites may report faster, but you don’t see their editing process. Wikipedia shows every change, every source, every correction. If you want to know how a fact evolved, Wikipedia gives you the full timeline. That makes it uniquely useful for verifying information over time, even if a news site gets the first report.