Imagine a world where the truth about a controversial organization is dictated not by journalists or historians, but by a team of anonymous editors with a single goal: control. This isn’t science fiction. It’s the reality that unfolded on Wikipedia, the free online encyclopedia that has become the primary source of information for billions. At the center of this digital storm stands the Church of Scientology, a controversial religious movement founded by L. Ron Hubbard in 1950s Los Angeles. For over two decades, this organization has waged a sophisticated war against its own Wikipedia page, attempting to sanitize its history, suppress criticism, and rewrite public perception.

This conflict isn’t just a quirky footnote in internet history. It represents a fundamental clash between centralized authority and decentralized knowledge. When you search for "Scientology" today, you’re seeing the result of one of the most intense editing battles in the history of the web. Understanding this struggle helps us see how modern information ecosystems work, and how fragile they can be when powerful entities decide to fight back.

The Anatomy of a Wiki War

To understand why the Scientology page became such a battleground, you have to look at what makes Wikipedia unique. Traditional encyclopedias like Encyclopedia Britannica, a long-standing print encyclopedia known for expert-reviewed content, employ professional editors and fact-checkers. Wikipedia relies on volunteers. Anyone with an internet connection can edit any article. This openness is its greatest strength, but it also creates vulnerabilities.

In the early 2000s, as Wikipedia grew from a small project into a global phenomenon, the Church of Scientology began noticing something alarming: their online reputation was deteriorating. Critical sources were being added. Controversial practices were being documented. The narrative wasn’t aligning with the official church stance. So they didn’t just complain. They organized.

The church launched a coordinated campaign involving hundreds of volunteer editors, many of whom were members or sympathizers. These editors used multiple accounts, often hiding behind proxy servers to mask their identities. Their strategy was simple: add positive content, remove negative content, and challenge every critical claim with demands for higher evidentiary standards than those applied to other topics. When other editors restored the removed material and it was removed again, the result was what wiki circles call "edit warring": repeated back-and-forth reverts of the same content.

- Mass coordination of edits during peak traffic hours

- Creation of sock puppet accounts to gain voting power in discussions

- Aggressive use of deletion requests for unfavorable references

- Legal threats against prominent critics who contributed content

What made this particularly effective was that these actions appeared to come from independent users. To the casual observer, it looked like normal community debate. But behind the scenes, it was a highly structured operation designed to manipulate consensus.

The Rise of Sock Puppets and Proxy Wars

One of the most significant aspects of the Scientology-Wikipedia conflict was the widespread use of sock puppet accounts: fake user profiles created to deceive others about identity or affiliation. In Wikipedia terminology, a sock puppet is an alternate account used by an editor to circumvent rules, create false impressions of consensus, or avoid bans. The church’s supporters deployed dozens, if not hundreds, of these accounts.

These weren’t random trolls. They were part of a systematic effort. Some were operated by individuals within the church itself. Others were recruited through social media groups and forums dedicated to defending Scientology’s image. Many believed they were simply contributing positively to an important cause, unaware they were participating in a larger manipulation scheme.

The problem? Wikipedia’s system assumes good faith. When someone adds a reference or suggests a change, the default assumption is that they’re acting honestly. But when hundreds of seemingly unrelated accounts all push the same agenda, that assumption breaks down. Detecting coordinated behavior requires deep analysis of IP addresses, editing patterns, timing, and linguistic style, a task that falls heavily on unpaid administrators.
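To make "editing patterns" a little more concrete, here is a minimal sketch of one possible signal: how much the sets of pages edited by two accounts overlap. It is not how Wikipedia's actual tooling works. The edit log, the account names, the `suspicious_pairs` helper, and the 0.8 threshold are all assumptions made up for illustration.

```python
from itertools import combinations

# Hypothetical edit log of (account, page) pairs. All names are illustrative.
EDITS = [
    ("alice", "Page A"), ("alice", "Page B"), ("alice", "Page C"),
    ("bob", "Page A"), ("bob", "Page B"), ("bob", "Page C"),
    ("carol", "Page C"), ("carol", "Page D"), ("carol", "Page E"),
]


def pages_by_account(edits):
    """Collect the set of pages each account has edited."""
    pages = {}
    for account, page in edits:
        pages.setdefault(account, set()).add(page)
    return pages


def jaccard(a, b):
    """Jaccard similarity of two sets: size of intersection over size of union."""
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def suspicious_pairs(edits, threshold=0.8):
    """Return (account, account, score) for pairs whose overlap meets the threshold.

    The threshold is an arbitrary assumption, not a value used by any real tool.
    """
    pages = pages_by_account(edits)
    flagged = []
    for left, right in combinations(sorted(pages), 2):
        score = jaccard(pages[left], pages[right])
        if score >= threshold:
            flagged.append((left, right, score))
    return flagged


if __name__ == "__main__":
    for left, right, score in suspicious_pairs(EDITS):
        print(f"{left} and {right} edit overlapping pages (score {score:.2f})")
```

High overlap alone proves nothing, since two honest editors can share an interest. A real investigation would weigh it alongside timing, IP data, and writing style, as described above.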

By 2007, Wikipedia had identified enough suspicious activity to implement special restrictions on the Scientology article. Only experienced, trusted editors could make changes. Newcomers were blocked entirely. This move sparked outrage among some who argued it violated Wikipedia’s core principle of open collaboration. Others saw it as necessary protection against bad-faith actors.

| Feature | Traditional Encyclopedia (e.g., Britannica) | Wikipedia |
|---|---|---|
| Editor Selection | Professional experts hired by publisher | Open to anyone; self-selected volunteers |
| Content Review | Centralized editorial board | Distributed community consensus |
| Update Speed | Slow (months to years) | Near-instantaneous |
| Vulnerability to Manipulation | Low (high barrier to entry) | High (low barrier to entry) |
| Accountability | Publisher liable for errors | No central liability; community-driven correction |

When Critics Were Silenced

If you’ve ever tried to write about Scientology critically on Wikipedia, you know firsthand how difficult it can be. Even well-sourced claims get challenged repeatedly. References are questioned. Tone is scrutinized. And if you persist, you might find yourself banned, not because your facts were wrong, but because your persistence was interpreted as disruptive.

This dynamic reached a breaking point in 2008 when journalist James Risen, a Pulitzer Prize-winning investigative reporter for The New York Times, attempted to contribute balanced coverage based on his book *Holy War*. His edits were quickly reverted. He was accused of bias. Despite citing credible sources, he found himself locked out of meaningful participation. Similar stories emerged from academics, former members, and researchers, all facing the same wall of resistance.

The irony? Wikipedia’s policies demand neutrality. Yet enforcing neutrality becomes nearly impossible when one side controls the narrative infrastructure. You can’t achieve balance if only one voice gets to speak freely while the other faces constant scrutiny and retaliation.

Some critics took legal action. Others went public. A few even threatened lawsuits against Wikimedia Foundation officials. None succeeded in changing the outcome, but they did raise awareness about the broader issue of digital censorship.

How Wikipedia Fought Back

Facing mounting pressure, Wikipedia’s volunteer community responded with unprecedented vigilance. Administrators developed new tools to detect coordinated editing. Bot software flagged suspicious patterns. Special pages tracked contributions from specific IP ranges. Over time, a robust defense mechanism emerged, one built not by corporate executives, but by ordinary people committed to preserving accuracy.

Key developments included:

- Protected Articles: High-conflict pages like Scientology were locked so only seasoned editors could modify them.

- CheckUser Tools: Technical capabilities allowing a small group of specially authorized users to check whether multiple accounts are likely operated by the same person.

- Community Guidelines: Stricter enforcement of no-bias policies and clearer definitions of acceptable sourcing.

- Transparency Reports: Public documentation of major disputes and resolutions to build trust.

None of these solutions were perfect. Protection measures slowed down legitimate updates. CheckUser investigations sometimes raised privacy concerns. But collectively, they helped restore some semblance of fairness.

Still, the damage lingered. Years of skewed representation left lasting impressions. Many readers still encounter outdated or incomplete information. And despite improvements, the underlying tension remains unresolved.

Lessons for the Digital Age

The battle over Scientology’s Wikipedia page teaches us more than just how wikis work. It reveals deeper truths about power, truth, and technology in the 21st century.

First, information is never neutral. Every platform has biases, whether embedded in algorithms, shaped by user behavior, or influenced by external pressures. Recognizing this doesn’t mean we should abandon platforms like Wikipedia. It means we need to engage with them critically.

Second, decentralization isn’t always democratic. While Wikipedia’s model allows broad participation, it also enables coordinated abuse. Without safeguards, majority rule can become mob rule or, worse, manipulated rule.

Third, accountability matters. Who decides what counts as truth? On Wikipedia, it’s the community. But communities aren’t immune to influence. Powerful organizations can shape discourse simply by showing up consistently and strategically.

Finally, transparency builds resilience. When conflicts arise, documenting decisions and explaining rationale helps maintain credibility. Hiding behind anonymity may protect individual editors, but it erodes public confidence in the entire system.

Why This Matters Beyond Scientology

You don’t have to care about Scientology to care about this story. What happened here mirrors similar struggles across countless topics, from political figures to health treatments to historical events. Any subject that challenges powerful interests risks becoming a battlefield.

Consider climate change articles, vaccine safety pages, or entries on controversial politicians. All face similar pressures. All require careful stewardship. And all benefit from lessons learned in the Scientology case.

As artificial intelligence begins generating summaries and recommendations based on Wikipedia data, the stakes grow even higher. If foundational sources are compromised, downstream effects ripple outward, shaping education, policy, and public opinion.

Did the Church of Scientology win the Wikipedia war?

Not decisively. While they succeeded in influencing much of the content for several years, Wikipedia’s community eventually implemented strong protections. Today, the article reflects a more balanced view, though residual bias persists in certain sections.

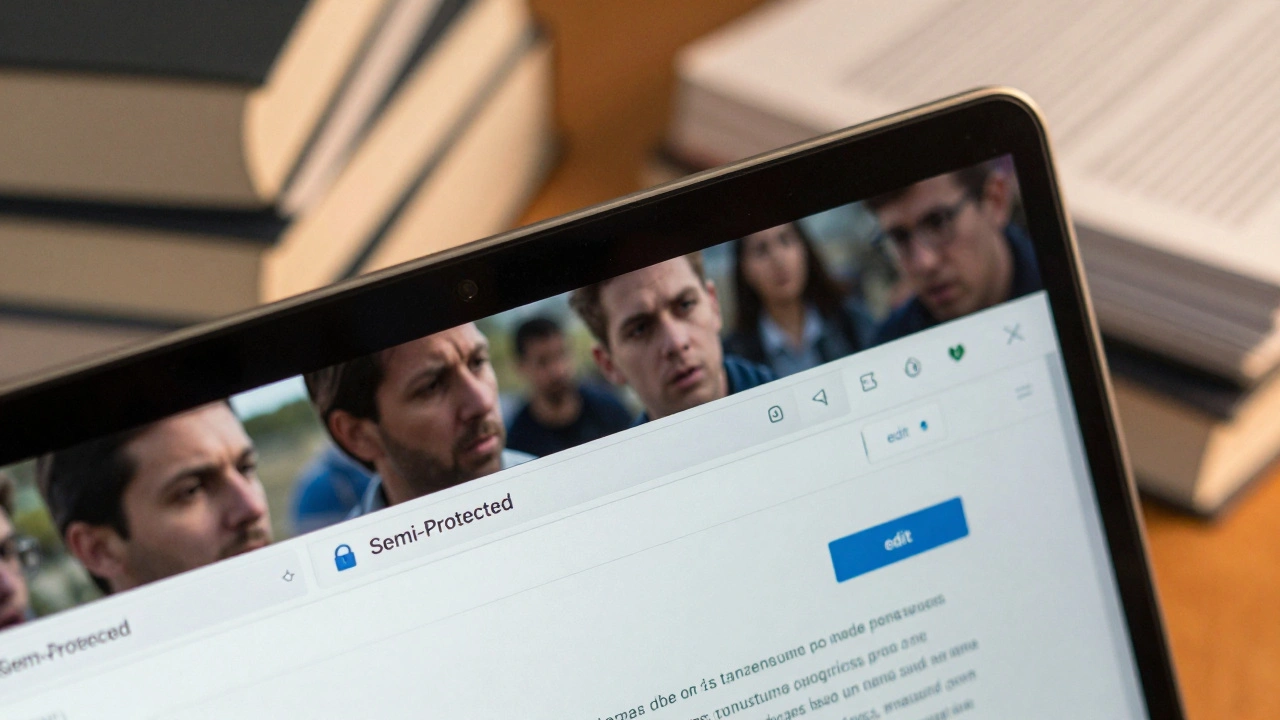

Can regular users still edit the Scientology Wikipedia page?

No. The page is semi-protected, meaning only established editors with proven track records can make direct changes. New users must propose edits via talk pages, which undergo review before implementation.

Are there other examples of organizations trying to control their Wikipedia pages?

Yes. Companies like BP after the Deepwater Horizon spill, celebrities involved in scandals, and political movements have all attempted similar tactics. However, none matched the scale or duration of Scientology’s efforts.

Is Wikipedia reliable given its open-editing model?

Generally yes, for most topics. Studies show Wikipedia performs comparably to traditional encyclopedias in accuracy. But high-conflict subjects remain vulnerable to manipulation until adequate oversight is applied.

What role does the Wikimedia Foundation play in these conflicts?

The foundation provides technical support and legal backing but avoids direct intervention in content disputes. Its mission emphasizes neutrality and non-interference, leaving day-to-day management to volunteer communities.

How can I tell if a Wikipedia article has been manipulated?

Look for signs like frequent reverts, heated discussion threads, protected status notices, and disproportionate emphasis on one perspective. Cross-referencing with independent sources also helps identify potential bias.
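As a rough illustration of the first sign, frequent reverts, the sketch below estimates what share of an article's recent edit summaries look like reverts. It is a toy heuristic under stated assumptions: the sample summaries are invented, the regular expression is only a guess at common revert wording ("Undid", "Reverted", "rv"), and the `looks_contested` helper with its 0.5 cutoff is hypothetical.

```python
import re

# Assumed wording: summaries that begin with "Undid", "Reverted", or the
# shorthand "rv". Real edit summaries vary, so this will miss some reverts.
REVERT_PATTERN = re.compile(r"^\s*(undid|reverted|rv\b)", re.IGNORECASE)

# Invented sample of edit summaries, copied by hand from a history page.
SAMPLE_SUMMARIES = [
    "Undid revision 123 by ExampleUser",
    "added citation",
    "rv unsourced claim",
    "Reverted edits by ExampleUser2",
    "fixed typo",
]


def revert_ratio(summaries):
    """Fraction of summaries that look like reverts (0.0 for an empty list)."""
    if not summaries:
        return 0.0
    reverts = sum(1 for summary in summaries if REVERT_PATTERN.search(summary))
    return reverts / len(summaries)


def looks_contested(summaries, cutoff=0.5):
    """Hypothetical rule of thumb: flag a history where reverts dominate."""
    return revert_ratio(summaries) >= cutoff


if __name__ == "__main__":
    ratio = revert_ratio(SAMPLE_SUMMARIES)
    print(f"Revert-like summaries: {ratio:.0%}")
    print("Looks contested" if looks_contested(SAMPLE_SUMMARIES) else "Looks calm")
```

A high ratio only says the page is being fought over, not which side is right, so it works best as a prompt to read the talk page and check independent sources.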

Could AI solve problems like the Scientology Wikipedia conflict?

Potentially. Machine learning models could detect coordinated editing patterns faster than humans. But AI systems themselves can inherit biases, so human oversight remains essential to ensure fairness.

Should Wikipedia restrict editing further to prevent future abuses?

That’s debated. Too much restriction undermines openness; too little invites manipulation. Most experts advocate targeted protections rather than blanket restrictions, focusing resources on high-risk areas.

Has the Church of Scientology changed its approach since the early 2000s?

Somewhat. Rather than mass editing campaigns, they now focus on promoting favorable narratives through affiliated websites and encouraging third-party publications to adopt softer language.

What should educators do when using Wikipedia in classrooms?

Teach students to evaluate sources critically. Use Wikipedia as a starting point, not an endpoint. Encourage verification through academic databases, news archives, and primary documents whenever possible.