Wikipedia isn’t just a website. It’s a living community of thousands of volunteers who argue, negotiate, and compromise to decide what gets written - and what doesn’t. The goal is simple: build a neutral, accurate encyclopedia. But what happens when people try to influence that process from outside the wiki? That’s where off-wiki canvassing comes in - and why it’s one of the most damaging practices in Wikipedia’s history.

What Is Off-Wiki Canvassing?

Off-wiki canvassing means trying to sway Wikipedia editors’ opinions by reaching out to them on other platforms. This includes social media, email lists, forums, Slack channels, Reddit threads, or even private messages. The idea isn’t always malicious. Sometimes, someone thinks they’re helping by rallying support. But Wikipedia’s rules are clear: consensus must be built on the wiki, where everyone can see the discussion.

Imagine a user edits an article about a political figure, and their edit is reverted. Instead of discussing it on the article's talk page, they post on Twitter: "Help! Wikipedia is biased against [name]. Can anyone fix this?" Then three people who saw the tweet go to the talk page and vote the same way - not because they reviewed the sources, but because they were told to. That's off-wiki canvassing. It doesn't make the article better. It makes the article a reflection of outside pressure, not evidence.

Why Wikipedia Demands Consensus On-Wiki

One of Wikipedia's core principles is verifiability. Not popularity. Not emotion. Not who shouts the loudest. Every claim must be backed by reliable sources, and every edit dispute must be resolved through transparent discussion on the wiki, usually on the article's talk page.

Why? Because that’s the only way to ensure fairness. If you’re editing an article about climate change, your argument needs to stand on peer-reviewed papers - not on how many people retweeted your opinion. Off-wiki canvassing bypasses this. It turns policy debates into popularity contests. It lets outsiders with agendas drown out editors who’ve spent years studying the topic.

There's a reason Wikipedia's canvassing guideline treats messages meant to sway a discussion as disruptive, especially when they are partisan, sent to a hand-picked audience, or hidden from public view. It's not about control. It's about integrity. The system only works if every voice has equal access to the same information - and if decisions are made in the open.

Real Cases: When Off-Wiki Canvassing Broke the System

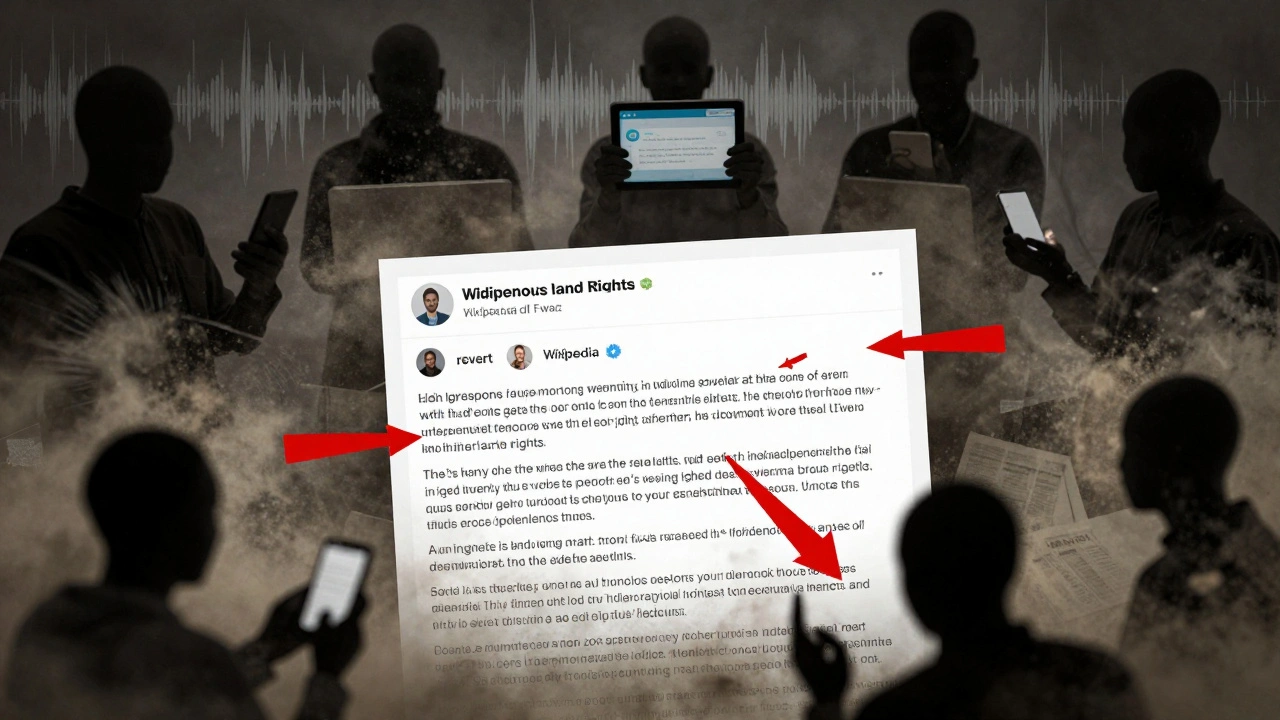

In 2020, a group of activists created a Telegram group to coordinate edits about Indigenous land rights in North America. They shared talking points, edited articles in lockstep, and encouraged members to report "biased" editors. Within weeks, three articles were locked due to edit wars. The dispute wasn’t about facts - it was about which narrative would win. Wikipedia administrators had to ban dozens of accounts and restore older versions of the articles.

Another example: in 2022, a YouTube creator posted a video titled "Why Wikipedia Erases Conservative Voices." The video went viral. Hundreds of viewers flooded the talk pages of articles on U.S. politics, demanding "balance." But the "balance" they wanted wasn't based on sourcing - it was based on equal word count between opposing views, regardless of evidence. Editors who tried to explain the policy were accused of censorship. The result? A flood of low-quality edits, reverted by volunteers, and a breakdown in trust.

These aren’t rare. They happen every month. And every time, Wikipedia’s credibility takes a hit.

How Off-Wiki Canvassing Kills Trust

Wikipedia’s authority comes from one thing: neutrality. Readers trust it because they believe the content isn’t shaped by advertisers, politicians, or influencers.

Off-wiki canvassing destroys that trust. When people see that an article changed because of a Reddit campaign or a Twitter thread, they start to wonder: "Is this even real?"

Wikipedia editors are volunteers. They don’t get paid. They don’t have corporate backing. Their only power is their reputation - and that reputation is built on consistency, transparency, and adherence to policy. When outsiders manipulate that process, it feels like a betrayal. Editors get burned out. Longtime contributors quit. New editors are scared off.

The damage isn’t just to articles. It’s to the entire community.

What Happens When You Get Caught

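Wikipedia has tools to catch off-wiki canvassing. Administrators watch edit patterns, such as account creation dates and sudden bursts of activity on a single discussion, and they follow links to off-wiki posts that point people toward it. When sockpuppetry or coordinated accounts are suspected, editors with CheckUser access can also compare technical data such as IP addresses. If multiple accounts suddenly make identical edits after a social media post, it raises red flags.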

Penalties vary. First-time offenders usually get a warning. Repeat offenders face temporary blocks. In severe cases, such as coordinated campaigns to push propaganda, users are blocked or banned indefinitely, which can mean permanent exclusion from the site.

But punishment isn’t the point. The point is to stop the behavior before it spreads. That’s why Wikipedia encourages editors to report suspected canvassing immediately - not to get someone in trouble, but to protect the integrity of the process.

How to Build Real Consensus - the Right Way

So what should you do if you disagree with an edit?

- Go to the article’s talk page. That’s where all discussion belongs.

- Use reliable sources. Cite peer-reviewed journals, books from university presses, or major news outlets.

- Be patient. Consensus takes time. It might take weeks.

- Listen. If others have good reasons for their edits, be willing to change your view.

- Don’t bring outside pressure. No tweets. No emails. No Facebook posts. Just the wiki.

This isn’t just policy. It’s how Wikipedia survives. It’s how a free, open encyclopedia stays credible in a world full of misinformation.

The Bigger Picture: Why This Matters Beyond Wikipedia

Wikipedia is the most visited reference site in the world. Hundreds of millions of people use it every month. What happens here doesn't stay here. When off-wiki canvassing distorts Wikipedia's content, it affects how people understand history, science, politics, and culture.

Imagine a student writing a paper. They trust Wikipedia. If the article on vaccines were shaped by a TikTok campaign rather than by science, that student would walk away with false information. That's not a small thing. It's dangerous.

Wikipedia’s model - open, collaborative, evidence-based - is rare. It’s fragile. And it’s under constant pressure from people who want to turn it into a megaphone for their beliefs.

The only defense? Editors who stick to the rules. Readers who demand transparency. And a community that refuses to let outsiders dictate what’s true.

Is it ever okay to discuss Wikipedia edits outside the wiki?

Only in very limited cases. You can talk about general editing practices on forums like Reddit's r/Wikipedia or on Wikimedia's Meta-Wiki. But you must never ask others to influence a specific edit, stack a vote on a policy, or pressure an editor. If the discussion is about a particular article, it belongs on that article's talk page - not anywhere else.

Can I email an editor to ask them to change an article?

No. Privately emailing editors to win support in a content dispute is what Wikipedia's canvassing guideline calls stealth canvassing, and it is treated as inappropriate. Editors are not obligated to respond to private messages about content disputes. All discussions should happen publicly on the article's talk page so others can see the reasoning and participate.

What’s the difference between off-wiki canvassing and just sharing a Wikipedia article?

Sharing a link to an article is fine. That’s how Wikipedia grows. But if you say something like "Please fix this biased article," or "Vote for my version," you’re crossing the line. The difference is intent: promoting content vs. influencing edits. One helps; the other corrupts.

Why don’t Wikipedia admins just delete all off-wiki posts?

Because Wikipedia doesn’t control the internet. Admins can’t delete tweets, Reddit posts, or YouTube videos. Their power ends at Wikipedia’s domain. That’s why the focus is on education: teaching editors to recognize canvassing, ignore it, and report it - not to fight it everywhere, but to protect the wiki from its effects.

Does off-wiki canvassing only happen on political topics?

No. It happens everywhere. Science articles get flooded after viral TikTok videos. Biographies are targeted by fans or critics on Twitter. Even obscure topics like local history or video game lore get manipulated. Any article with public interest is a target. The issue isn’t the topic - it’s the method.