Imagine waking up to find that an event from your country's history has been rewritten overnight. A nuanced account of a territorial conflict has been replaced by a one-sided narrative that reads like a government press release. This isn't a glitch or a random act of vandalism; it's a coordinated effort. When groups of editors synchronize to push a nationalist agenda, they aren't just fixing typos. They are fighting a digital war for legitimacy.

Quick Takeaways for Community Moderators

- Nationalist campaigns often use "sockpuppet" accounts to create a false sense of consensus.

- Detection relies on spotting unnatural patterns in edit histories, such as clusters of new accounts and unusually frequent reverts.

- Response requires a mix of technical restrictions and diplomatic mediation via neutral third parties.

- Long-term stability comes from diversifying the editor base to prevent single-nation dominance.

The Anatomy of a Digital Border War

At its core, a nationalist editing campaign is a coordinated effort by users to manipulate an encyclopedia's content in favor of the political, historical, or cultural narrative of a specific nation. Unlike a lone vandal who sprays graffiti on a page, these editors are strategic. They don't just delete information; they subtly shift adjectives, omit inconvenient facts, and cite sources that are state-funded or otherwise biased.

This behavior often manifests as edit warring, where two or more groups repeatedly undo each other's changes. The cycle is familiar: Editor A adds a critical point about a border dispute; Editor B removes it, citing "lack of neutrality"; Editor A restores it; Editor B reports Editor A for disruption. This loop can last for years, turning a high-traffic page into a battlefield. The danger is that the average reader, who just wants a quick fact, assumes the most recent version is the most accurate one.
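
The revert cycle above is easy to surface mechanically. The sketch below assumes you already have a page's revision history as (user, timestamp, content hash) records; the `Revision` class and both function names are hypothetical. It treats a revision as an "identity revert" when it restores text identical to an earlier revision, and flags users who exceed three such reverts in any 24-hour window, a threshold borrowed from English Wikipedia's three-revert rule. Partial reverts, which undo only part of an earlier edit, slip past this check.

```python
from collections import defaultdict
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Revision:
    user: str
    timestamp: datetime
    content_hash: str  # hash of the full page text at this revision (assumed precomputed)


def identity_reverts(revisions):
    """Revisions whose content exactly matches some earlier revision of the page."""
    seen = set()
    reverts = []
    for rev in sorted(revisions, key=lambda r: r.timestamp):
        if rev.content_hash in seen:
            reverts.append(rev)
        seen.add(rev.content_hash)
    return reverts


def edit_war_suspects(revisions, limit=3, window=timedelta(hours=24)):
    """Users with more than `limit` reverts inside any rolling `window`."""
    times_by_user = defaultdict(list)
    for rev in identity_reverts(revisions):  # returned in time order
        times_by_user[rev.user].append(rev.timestamp)

    flagged = set()
    for user, times in times_by_user.items():
        start = 0
        for end, t in enumerate(times):
            # Drop reverts that fall outside the window ending at t.
            while t - times[start] > window:
                start += 1
            if end - start + 1 > limit:
                flagged.add(user)
                break
    return flagged


# Editor A and Editor B trade the same two versions back and forth, hourly.
t0 = datetime(2024, 1, 1)
history = [Revision("A", t0, "v0")]
for i in range(1, 9):
    user, version = ("B", "v1") if i % 2 else ("A", "v0")
    history.append(Revision(user, t0 + timedelta(hours=i), version))

print(edit_war_suspects(history))
```

Here Editor A restores the old text four times in six hours and is flagged, while Editor B stops at three reverts and is not.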

These campaigns usually target a few types of pages: national histories, territorial disputes, and biographies of political leaders. During periods of heightened tension, such as flare-ups in the Balkans or the South China Sea, edits to pages about sovereignty tend to spike. The goal isn't truth; it's dominance. Campaigners believe that if they control the Wikipedia page, they can shape global perception.

How to Spot the Signs of Coordination

Detecting these campaigns requires looking past the words and into the metadata. Individual edits might look reasonable, but the pattern across dozens of accounts is what gives them away. One of the biggest red flags is sockpuppetry: a single person creating multiple accounts to make it look as though many different people agree with a particular viewpoint.

If five different accounts make similar changes within a three-hour window, and all of them were created in the same week, you're likely looking at a coordinated campaign. On Wikipedia, a small group of trusted users with CheckUser access can check whether multiple accounts share the same IP addresses or user-agent data. When a group of editors from one city or region starts aggressively patrolling a page about a neighboring country, the correlation is rarely a coincidence.
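
The five-accounts, three-hours, same-week heuristic can be expressed as a short filter. This is a minimal sketch, assuming you can export each account's registration date and its edits to a single page; the `Edit` class, the function name, and the default thresholds are illustrative, taken from the example above rather than from any official tool. A flagged cluster is a reason to look closer or file a report, not proof of sockpuppetry; only CheckUser data can link accounts technically.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Edit:
    account: str
    timestamp: datetime


def flag_coordinated_accounts(edits, created, min_accounts=5,
                              edit_window=timedelta(hours=3),
                              creation_window=timedelta(days=7)):
    """Accounts that edited within `edit_window` of each other AND belong to a
    group of at least `min_accounts` registered within `creation_window`."""
    edits = sorted(edits, key=lambda e: e.timestamp)
    flagged = set()
    for i, first in enumerate(edits):
        # Every distinct account that edited within edit_window of this edit.
        burst = {e.account for e in edits[i:]
                 if e.timestamp - first.timestamp <= edit_window}
        # Slide over registration dates looking for a tight creation cohort.
        cohort = sorted(burst, key=lambda a: created[a])
        lo = 0
        for hi in range(len(cohort)):
            while created[cohort[hi]] - created[cohort[lo]] > creation_window:
                lo += 1
            if hi - lo + 1 >= min_accounts:
                flagged.update(cohort[lo:hi + 1])
    return flagged


# Five accounts registered on consecutive days edit within two hours of each
# other; a long-standing account edits in the same burst but is not flagged.
created = {f"new{k}": datetime(2024, 1, k) for k in range(1, 6)}
created["veteran"] = datetime(2015, 6, 1)
created["latecomer"] = datetime(2024, 1, 2)

burst_start = datetime(2024, 3, 1, 10)
edits = [Edit(f"new{k}", burst_start + timedelta(minutes=20 * k)) for k in range(1, 6)]
edits.append(Edit("veteran", burst_start + timedelta(minutes=30)))
edits.append(Edit("latecomer", datetime(2024, 3, 8, 10)))

print(sorted(flag_coordinated_accounts(edits, created)))
```

The `latecomer` account was registered in the same week but edited alone a week later, so timing alone keeps it out of the cluster.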

Another telltale sign is "citation stacking": editors flood a page with sources that all come from the same state-run media outlet or a single nationalist think tank. Rather than offering a variety of perspectives, they build a wall of citations that all repeat the same biased claim. This creates an illusion of authority that can fool a novice reviewer.
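
Citation stacking leaves a measurable footprint: one outlet accounts for an outsized share of a page's references. The sketch below assumes the cited URLs have already been extracted from the article; the function name is hypothetical, the domains are made-up `.example` placeholders, and the 50% threshold is arbitrary. Grouping by domain is a crude proxy, since one state broadcaster may publish under several domains, so treat the result as a prompt for human review.

```python
from collections import Counter
from urllib.parse import urlparse


def citation_concentration(urls):
    """Return the most-cited domain and its share of all cited URLs."""
    # Normalize hosts so "www.outlet.example" and "outlet.example" count together.
    domains = [urlparse(u).netloc.lower().removeprefix("www.") for u in urls]
    if not domains:
        return None, 0.0
    domain, count = Counter(domains).most_common(1)[0]
    return domain, count / len(domains)


# Hypothetical reference list taken from one section of an article.
refs = [
    "https://www.state-news.example/2023/claim",
    "https://state-news.example/2023/rebuttal",
    "http://state-news.example/archive/1987",
    "https://university-press.example/books/border-history",
]
domain, share = citation_concentration(refs)
if share > 0.5:  # illustrative threshold, not a policy value
    print(f"{share:.0%} of citations come from {domain}")
```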

| Attribute | Random Vandalism | Nationalist Campaigns |
|---|---|---|
| Goal | Chaos or humor | Narrative control |
| Method | Blatant deletion/slurs | Subtle phrasing shifts |
| Duration | Short-lived | Persistent/Long-term |
| Source Use | None/Fake | Biased but "official" sources |
| Account Type | Anonymous/New | Established/Networked |

The Response Toolkit: From Soft to Hard Measures

Once a campaign is identified, the response needs to be proportional. Jumping straight to a permanent ban often backfires, as it gives the campaigners a "martyr" narrative to rally around, prompting them to create even more accounts. Instead, a tiered approach works best.

The first step is usually page protection. Semi-protection prevents unregistered and newly registered accounts from editing, so only established editors can make changes; this alone slows the momentum of a campaign built on fresh accounts. If the battle is particularly fierce, the page may be placed under full protection, meaning only administrators can edit it until the community reaches consensus.

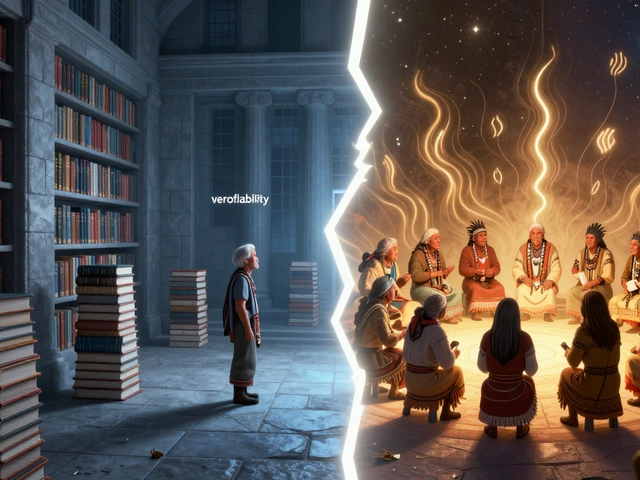

Next comes content dispute resolution. This means moving the argument out of edit summaries and onto the article's Talk page, where the goal is to establish a neutral point of view (NPOV). A neutral editor, someone with no ties to any of the nations involved, is often brought in to moderate. They ask the warring parties to provide independent, peer-reviewed sources rather than state-media clippings.

When coordination is proven, the "hard" measures kick in. This includes the bulk-blocking of sockpuppet accounts and the removal of biased content. However, the most effective long-term strategy is "active diversification." If a page is dominated by editors from one country, the community actively encourages editors from other regions and academic backgrounds to contribute. This breaks the monopoly on the narrative and forces the nationalists to engage with actual counter-evidence.

The Role of External Influence and State Actors

It is a mistake to think all nationalist editing comes from a few passionate citizens in their bedrooms. In some cases, it becomes a tool of information warfare. Some governments have been known to encourage civil servants or military personnel to "correct" the record on international platforms. This raises the stakes from a simple disagreement to a geopolitical struggle.

When state actors are involved, campaigns become much more sophisticated. Operators may use VPNs to mask their location, making it look as though support for their narrative comes from across the globe. They might also use "sleeper accounts": profiles that spend months making routine, helpful edits to unrelated pages to build a credible edit history, and the editing permissions that come with it, before suddenly switching to aggressive nationalist editing on a high-profile page.
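
One way to operationalize the sleeper pattern is to compare how much of an account's older activity touched sensitive pages with how much of its recent activity does. The sketch assumes a per-account list of (timestamp, page title) pairs and a hand-curated set of sensitive titles; the function name and every threshold (a 14-day recent window, 50 prior edits, 10% and 50% shares) are hypothetical starting points, not Wikipedia policy values. Legitimate editors also develop new interests, so a positive result only justifies closer scrutiny.

```python
from datetime import datetime, timedelta


def looks_like_sleeper(edits, sensitive_pages, now,
                       recent=timedelta(days=14), min_history=50,
                       quiet_share=0.1, burst_share=0.5):
    """edits: (timestamp, page_title) pairs for a single account.

    Flags an account whose long history barely touched sensitive pages
    but whose recent activity is concentrated on them."""
    cutoff = now - recent
    older = [page for ts, page in edits if ts < cutoff]
    newer = [page for ts, page in edits if ts >= cutoff]
    if len(older) < min_history or not newer:
        return False  # too little history to judge, or no recent activity
    older_share = sum(p in sensitive_pages for p in older) / len(older)
    newer_share = sum(p in sensitive_pages for p in newer) / len(newer)
    return older_share <= quiet_share and newer_share >= burst_share


now = datetime(2024, 6, 1)
sensitive = {"Disputed Region"}  # hypothetical watch set
# 100 routine edits spread over earlier months, then a sudden burst.
history = [(now - timedelta(days=200 - i), f"Bird species {i}") for i in range(100)]
history += [(now - timedelta(days=3), "Disputed Region")] * 10

print(looks_like_sleeper(history, sensitive, now))
```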

Fighting this requires a high level of technical literacy from the moderation team, including the ability to analyze the timing of edits against real-world political events. If a government announces a new territorial claim on Tuesday and a surge of "corrections" appears on the corresponding Wikipedia page by Wednesday morning, the timing is hard to ignore, even if it is not proof on its own. The response here isn't just about editing; it's about documenting the pattern of state interference for the wider community to see.
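
The Tuesday-to-Wednesday example can be checked with simple arithmetic: count the edits in the day after an announcement and divide by the number the page usually receives in a day. This sketch assumes you have the page's edit timestamps and the event time; the function name and the 24-hour and 30-day windows are illustrative choices. A high ratio flags a burst worth documenting, but breaking news also draws good-faith editors, so it cannot by itself distinguish a coordinated campaign from ordinary public interest.

```python
from datetime import datetime, timedelta


def surge_ratio(edit_times, event_time,
                after=timedelta(hours=24), baseline=timedelta(days=30)):
    """Edits in the `after` window following an event, relative to the number
    expected from the page's average rate over the preceding `baseline`."""
    post = sum(event_time <= t < event_time + after for t in edit_times)
    pre = sum(event_time - baseline <= t < event_time for t in edit_times)
    # Scale the baseline count down to a window as long as `after`.
    expected = pre * after.total_seconds() / baseline.total_seconds()
    if expected == 0:
        return float("inf") if post else 0.0
    return post / expected


announcement = datetime(2024, 5, 14)  # hypothetical date of a territorial claim
# A quiet page: one edit per day for a month, then twelve edits in twelve hours.
times = [announcement - timedelta(days=d) + timedelta(hours=12) for d in range(1, 31)]
times += [announcement + timedelta(hours=h) for h in range(12)]

print(f"{surge_ratio(times, announcement):.1f}x the usual daily volume")
```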

Common Pitfalls in Moderation

The biggest mistake a moderator can make is taking a side. The moment an admin says, "Country A is right and Country B is wrong," they lose their authority. A Wikipedia moderator's job isn't to decide which nation is "correct," but to ensure that nationalist editing campaigns are countered by a commitment to verifiable, third-party sourcing.

Another common error is over-reliance on majority rule. In a coordinated campaign, the majority of editors on a page might belong to the same nationalist group. If a moderator simply follows the consensus of the current editors, they inadvertently help the campaign. True neutrality requires weighing the *quality* and *independence* of the sources, not the number of people shouting for a particular version of the text.

Finally, ignoring the "slow burn" is a risk. Some campaigns don't happen in a flash of edit wars. Instead, they slowly erode the neutrality of a page over years, changing one word here and another there. This "salami slicing" tactic is harder to detect than a loud battle but just as damaging. Regular audits of sensitive pages by neutral experts are one of the few reliable ways to catch this drift toward bias.
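
An audit for salami slicing comes down to comparing today's text with a version last reviewed as neutral, rather than with yesterday's revision, where each individual change looks trivial. The sketch below uses Python's standard `difflib` on word tokens; the function name and sample sentences are hypothetical, and splitting on whitespace ignores punctuation and wiki markup. The similarity score shows how far the page has drifted; the list of changed spans is what a neutral reviewer should actually read.

```python
import difflib


def drift_report(baseline_text, current_text):
    """Word-level comparison of an audited baseline revision with the current text.

    Returns the similarity ratio (1.0 means identical) and a list of
    (old words, new words) pairs for every changed span."""
    old, new = baseline_text.split(), current_text.split()
    matcher = difflib.SequenceMatcher(None, old, new)
    changes = [(" ".join(old[i1:i2]), " ".join(new[j1:j2]))
               for tag, i1, i2, j1, j2 in matcher.get_opcodes()
               if tag != "equal"]
    return matcher.ratio(), changes


baseline = "Country X claims the region while Country Y disputes the claim"
current = ("Country X administers the region while Country Y "
           "illegitimately disputes the claim")
ratio, changes = drift_report(baseline, current)
print(f"similarity {ratio:.2f}")
for before, after in changes:
    print(f"{before!r} -> {after!r}")
```

Two small edits leave the sentence almost 90% identical, yet together they turn a description of competing claims into an endorsement of one side.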

What is the difference between a biased editor and a nationalist campaign?

A biased editor is usually an individual acting on their own beliefs. A nationalist campaign is coordinated: it involves multiple accounts, frequently created in clusters, working toward a specific political goal and relying on shared talking points and synchronized timing to overwhelm opposition.

Can a page ever be truly neutral in a territorial dispute?

Total neutrality is hard, but the goal is "descriptive neutrality." This means instead of saying "The land belongs to Country X," the article says "Country X claims the land, while Country Y also claims it, citing historical treaty Z." It describes the conflict rather than resolving it.

How do I report a suspected sockpuppet account?

Document the suspicious patterns, such as identical phrasing, overlapping edit times, and similar source citations, and report them through the official administrator channels or the project's dedicated noticeboard (on English Wikipedia, Sockpuppet investigations). Avoid accusing the user on the public Talk page, as this often triggers a defensive edit war.

Do these campaigns affect the actual trust in Wikipedia?

Yes. When users notice blatant bias or constant instability on a page, it undermines the site's reputation as a reliable source. This is why rapid detection and the use of stable, peer-reviewed citations are critical for maintaining the platform's credibility.

Why not just ban all editors from certain countries?

That would violate the core principle of global openness. Many editors from those countries are honest, academic, and helpful. Banning by geography would alienate legitimate contributors and turn the site into an exclusionary club, which is the opposite of its mission.

Next Steps for Community Health

If you're a contributor noticing these trends, the best thing you can do is focus on verifiability. Don't argue about who is "right"; argue about which source is more reliable. When you find a biased claim, don't just delete it; find a high-quality, independent source (such as a university press or an international body) that provides a more complete picture, and add that instead.

For those in moderation roles, use watchlists to track high-risk topics. By keeping a close eye on pages related to sensitive geopolitics, you can catch the first signs of a campaign before it snowballs into a full-blown edit war. Encouraging a Request for Comment (RfC) before major changes to sensitive pages can also force potential campaigners to justify their edits in a public, scrutinized forum.