Wikipedia editing: How volunteers shape the world's largest encyclopedia

Wikipedia editing is the collaborative process in which volunteers write, fix, and update encyclopedia entries in real time. Also known as crowdsourced knowledge building, it’s what keeps Wikipedia’s content alive without paid writers or ads. It’s not just typing words into a box: it’s a quiet, constant battle over truth, fairness, and what gets remembered. Every edit, every rollback, every discussion on a talk page is part of a system designed to let anyone help, but only if they follow the rules.

Behind every clean article is a network of Wikipedia volunteers, tens of thousands of unpaid people who spend hours checking sources, fixing grammar, and defending policies. They’re not experts in every topic—they’re just careful readers who care about getting it right. These volunteers follow Wikipedia policy, a set of community-agreed rules that govern how content is created and maintained, like neutral point of view, verifiability, and no original research. These aren’t suggestions—they’re the backbone of trust. If you’ve ever wondered why Wikipedia doesn’t just let anyone say anything, it’s because these policies stop chaos. They’re enforced by people, not bots, and they’re constantly debated. You can’t just add your opinion—even if you’re right. You need a reliable source to back it up.

That’s why Wikipedia neutrality, the rule that articles must fairly represent all significant viewpoints based on published sources, matters so much. On topics like climate change, politics, or history, neutrality isn’t about being boring; it’s about being honest. It means giving space to minority views only if they’re well-documented, not because they’re popular. And it’s why Wikipedia bias, the uneven representation of topics due to gaps in who edits and what they focus on, is such a big deal. Most editors are male, urban, and from wealthy countries. That skews coverage. But volunteer task forces are working to fix that by adding Indigenous knowledge, women’s history, and local stories that were left out. You won’t find this on the front page. You’ll find it in the quiet edits, the long discussions, the midnight copyediting drives. This is editing as a public service. And what you’ll see in the posts below is the full picture: how policy fights bias, how volunteers win small battles, how AI tries to interfere, and why, despite everything, Wikipedia still works.

How Press Releases Influence Wikipedia Article Updates

Press releases don’t directly update Wikipedia, but they can trigger changes when journalists turn them into credible news stories. Wikipedia relies on independent reporting, not corporate announcements, to verify and add information.

Training Modules for Students Editing Wikipedia: What to Include

Effective training modules for students editing Wikipedia must teach the Five Pillars, reliable sourcing, notability rules, and conflict navigation, not just editing tools. Real examples and structured practice turn beginners into confident contributors.

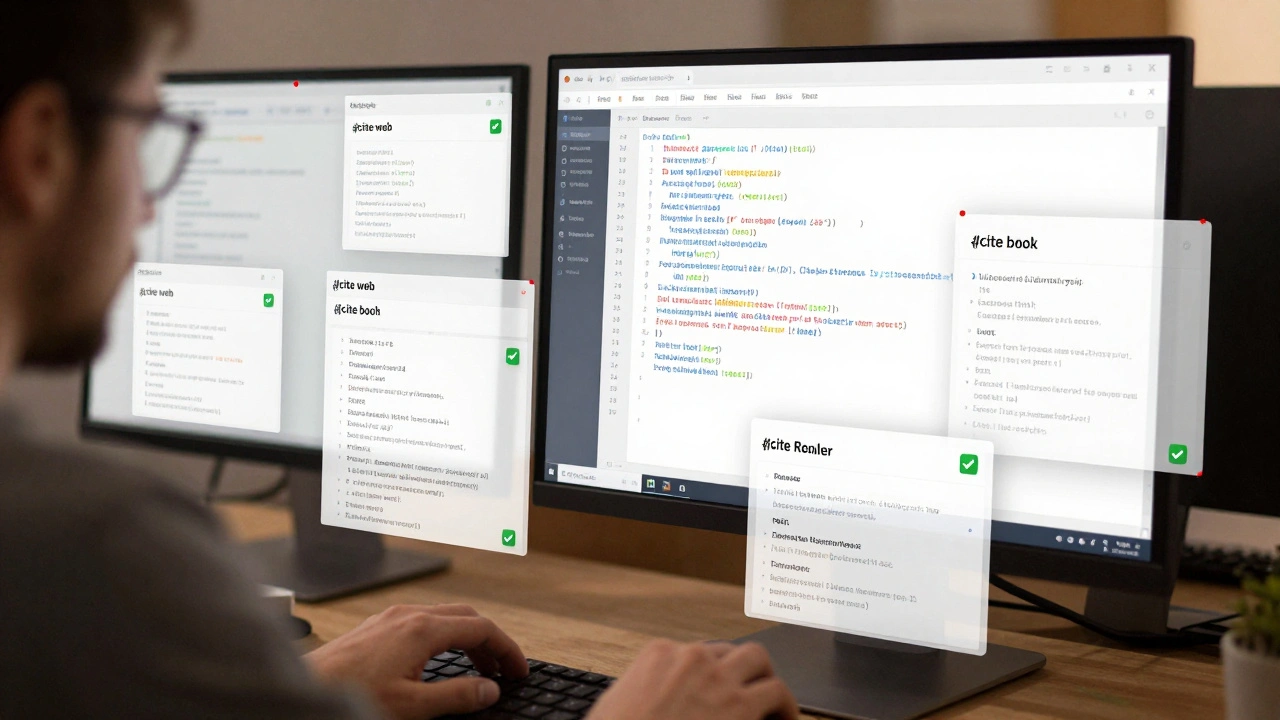

Best Refill and Citation Cleanup Tools for Wikipedia References

Learn how refill and citation cleanup tools automatically fix broken, incomplete, or messy Wikipedia references, saving hours of manual work and improving article reliability. Essential for editors who care about accuracy.

Student Safety on Wikipedia: Managing On-Wiki Interactions

Student editors on Wikipedia often face hostile feedback that can discourage participation. This guide explains why it happens, how to stay safe, and what schools and Wikipedia can do to make editing a positive experience.

Wikipedia Verifiability Policy: What Counts as a Reliable Source and Why

Wikipedia's verifiability policy ensures every claim is backed by reliable, published sources. Learn what counts as credible, such as peer-reviewed journals and major newspapers, and why personal blogs, social media, and self-published content are rejected.

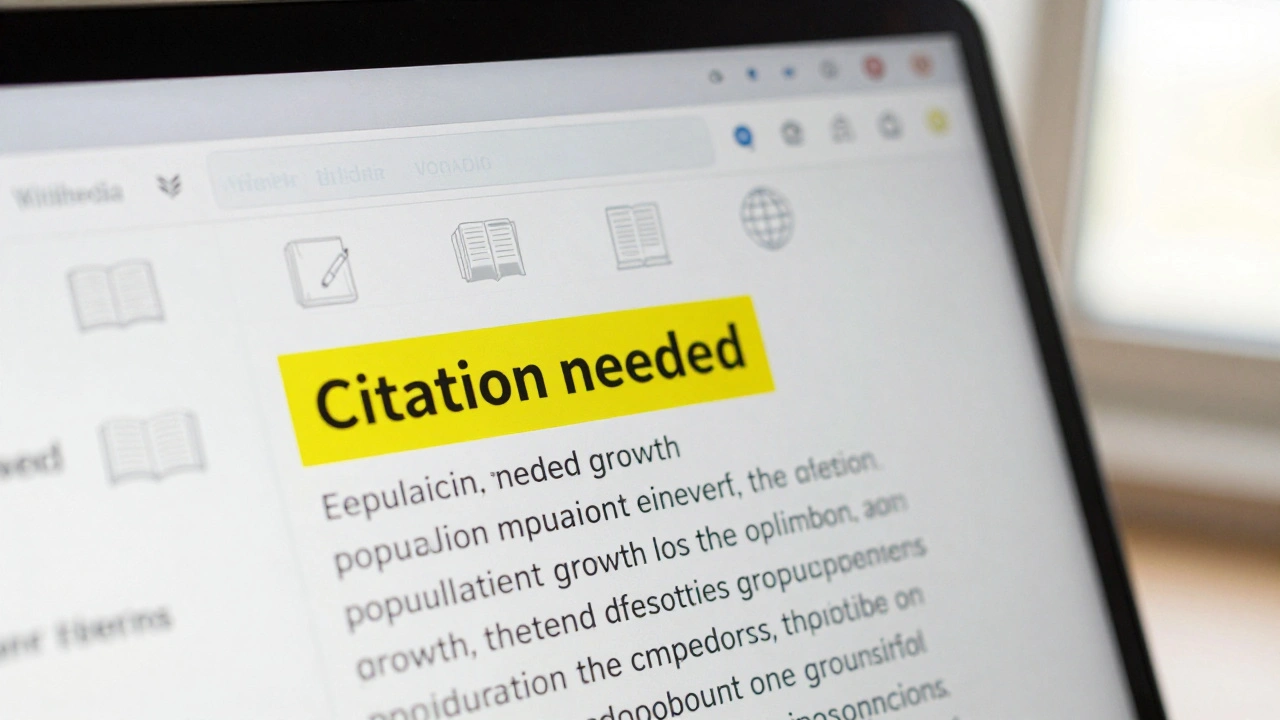

Verifiability Tags on Wikipedia: How to Read and Use Maintenance Templates

Verifiability tags on Wikipedia are essential for maintaining content quality. They flag claims without reliable sources and help readers and editors ensure accuracy. Learn how to interpret and fix these maintenance templates to support trustworthy information.

The Signpost's Special Reports: Deep Dives Into Major Wikipedia Changes

The Signpost's Special Reports reveal the real stories behind major Wikipedia changes, from AI policy updates to global edit-a-thons. These aren't just technical tweaks; they're community-driven shifts that shape how knowledge is built and trusted.

Detecting Editorial Slant in Wikipedia Text with Talk Page Tools

Wikipedia claims neutrality, but subtle editorial slant often slips in. Learn how talk pages reveal hidden bias through edit histories, source disputes, and silent consensus: tools anyone can use to spot when neutrality breaks down.

Timelines and Chronologies on Wikipedia: How to Build Reliable Event Pages

Learn how to build accurate, reliable timelines on Wikipedia by using verified sources, maintaining neutrality, and structuring events clearly. Avoid common mistakes that make event pages misleading or incomplete.

Source Misuse on Wikipedia: Common Errors and How to Fix Them

Source misuse on Wikipedia is a common problem that undermines accuracy. Learn the top errors editors make with citations and how to fix them using reliable, peer-reviewed, and independent sources.

How to Protect New Wikipedia Articles During Notability Challenges

Learn how to protect new Wikipedia articles from deletion by meeting notability standards with reliable sources, avoiding common mistakes, and using the draft space effectively. This guide shows exactly what editors look for, and how to respond when your article is challenged.

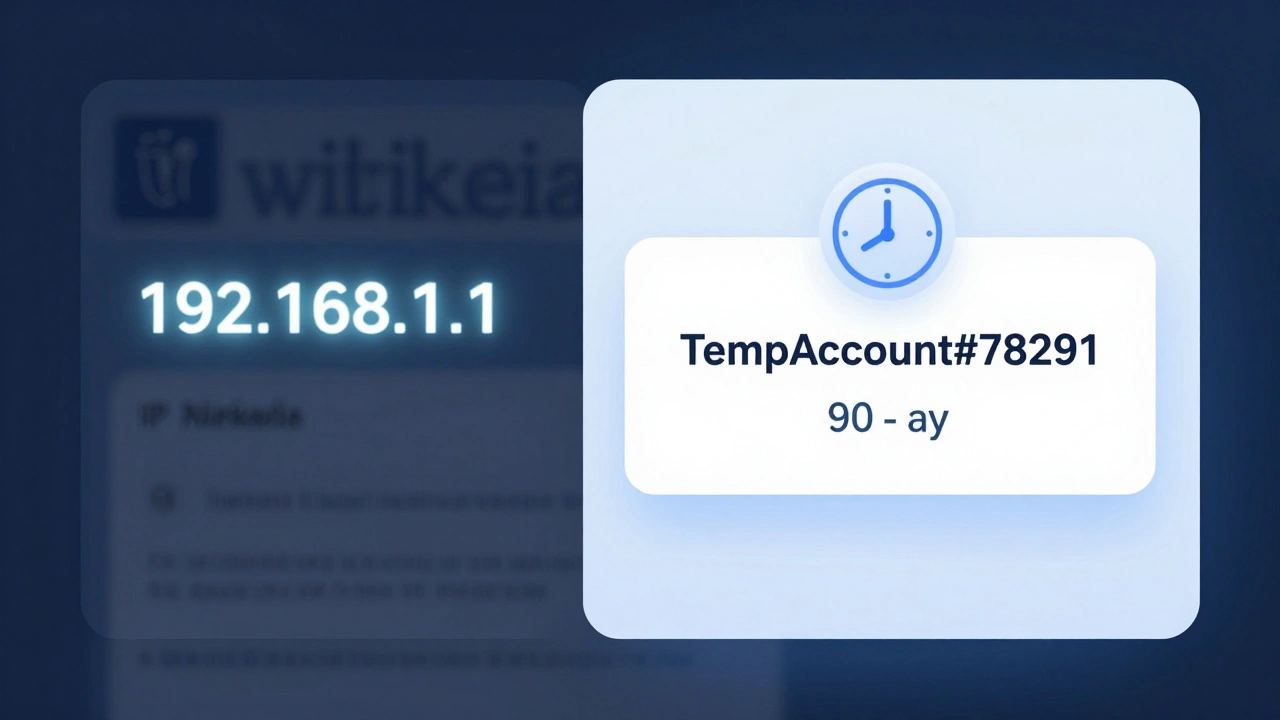

Temporary Accounts on Wikipedia: What's Changing for Editors

Wikipedia is replacing anonymous editing with temporary accounts to fight vandalism and improve edit tracking. Learn how this change affects contributors and why it matters for the future of the encyclopedia.