When a major event happens - a natural disaster, a political scandal, or a celebrity death - Wikipedia doesn’t wait for the news cycle to catch up. Editors jump in within minutes, often before major outlets have verified the facts. That’s why you’ll see Wikipedia articles change faster than any traditional news site. But here’s the catch: the same speed that makes Wikipedia feel alive also makes it risky. How do editors balance getting the story out quickly with making sure it’s right? This isn’t just about editing rules. It’s about human behavior, system design, and the quiet tension between two values: speed and accuracy.

Wikipedia as a Real-Time News Wire

Unlike newspapers or TV networks, Wikipedia doesn’t have editors sitting in newsrooms waiting for press releases. Its news coverage is decentralized, volunteer-driven, and reactive. When the 2023 earthquake hit Turkey and Syria, Wikipedia’s English-language article was updated every 90 seconds on average during the first 24 hours. By the time CNN had its first live broadcast, Wikipedia already had casualty estimates, affected regions, and rescue efforts listed - sourced from Twitter posts, including those from government accounts, and from live blogs.
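A figure like "every 90 seconds on average" is a mean interval between consecutive revisions. As a rough sketch of how such a number could be derived from a list of revision timestamps (the helper `mean_edit_interval` is hypothetical, and the sample timestamps are invented for illustration, not real revision data):

```python
from datetime import datetime

def mean_edit_interval(timestamps):
    """Return the mean gap, in seconds, between consecutive revisions.

    Expects ISO 8601 UTC strings such as "2023-02-06T01:30:00Z".
    """
    times = sorted(datetime.strptime(t, "%Y-%m-%dT%H:%M:%SZ") for t in timestamps)
    if len(times) < 2:
        raise ValueError("need at least two revisions to measure an interval")
    # Total elapsed time divided by the number of gaps between revisions.
    span = (times[-1] - times[0]).total_seconds()
    return span / (len(times) - 1)

# Invented timestamps, used only to show the calculation.
sample = [
    "2023-02-06T01:30:00Z",
    "2023-02-06T01:31:30Z",
    "2023-02-06T01:33:00Z",
    "2023-02-06T01:34:30Z",
]
print(mean_edit_interval(sample))  # 90.0
```

For scale, one edit every 90 seconds over 24 hours works out to 86,400 / 90 = 960 revisions in a single day.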

This isn’t an exception. A 2022 study from the University of Oxford tracked 1,200 breaking news events across 15 countries. Wikipedia articles were updated an average of 17 minutes before the first major news outlet published a verified report. In 38% of cases, Wikipedia was the first public source with confirmed details. That’s not just fast - it’s revolutionary. But speed like this comes at a cost.

The 5-Minute Rule and the Rise of False Starts

Wikipedia has an informal guideline called the “5-minute rule”: if something is widely reported across multiple independent sources within five minutes, it’s fair game for inclusion. Sounds reasonable, right? Except in practice, “widely reported” often means “trending on Twitter” or “mentioned by one blog with no sourcing.”

During a 2024 U.S. presidential debate, Wikipedia’s article on one candidate briefly claimed they had “collapsed on stage” - based on a viral TikTok clip. The video was later proven to be edited. The edit stayed live for 47 minutes. By the time it was corrected, over 120,000 people had viewed the article. That’s not a glitch. It’s a pattern. A 2023 analysis of 8,000 news-related edits found that 14% (about 1,120 edits) contained factual errors that lasted more than 30 minutes. Half of those errors were corrected only after external reporters flagged them.

Wikipedia’s reliance on community vigilance means accuracy depends on who’s online. If a breaking story happens at 3 a.m. in London, and only three editors are awake, the chances of a false claim going unchallenged rise sharply. This isn’t about bad actors. It’s about resource gaps in a system built on goodwill.

How Accuracy Is Maintained - and Where It Fails

Wikipedia doesn’t ignore accuracy. It has a whole ecosystem to enforce it. Editors rely on reliable sources - defined as major news organizations, academic journals, and official government releases. They mark unsourced claims with “citation needed” tags. They lock pages during major events to prevent vandalism. There are even automated bots that flag edits citing unverified social media or non-English sources.

But here’s the problem: bots can’t judge context. A bot might block a perfectly valid update from a local news site because it’s not on Wikipedia’s approved list. Meanwhile, a false claim from a trending tweet might slip through because it’s “widely shared.”

Take the 2023 death of a well-known journalist. A false obituary spread across Facebook and Reddit. Within minutes, someone edited Wikipedia with the claim. The article was locked. But because the false source had thousands of shares, it took over 4 hours for a senior editor to find and remove the claim. Why? Because the system doesn’t prioritize speed of correction - it prioritizes consensus. Someone has to notice, argue, cite evidence, and wait for others to agree. That process can take hours. In news time, that’s a lifetime.

The Silent Majority: Who Actually Edits News on Wikipedia?

Most people think Wikipedia is edited by random strangers. But the truth is more structured. About 80% of news-related edits come from fewer than 500 active users - many of whom are journalists, researchers, or retired professionals. They have institutional knowledge. They know which sources are trustworthy. They’ve seen this before.

But they’re not paid. They don’t get alerts. They log in when they have time. So when a major event happens during work hours, on a weekend, or over a holiday, the system slows down. In 2024, a plane crash in South Korea happened on a Sunday morning. Wikipedia’s article was incomplete for 11 hours because the most experienced editors were offline. Meanwhile, misinformation filled the gaps.

This isn’t a failure of technology. It’s a failure of scale. Wikipedia’s news coverage works best when the world is quiet. When everything explodes at once, the system strains.

What Happens When Accuracy Loses

The consequences aren’t just technical - they’re real. In 2021, a false report on Wikipedia claimed a U.S. senator had resigned. The edit was corrected within 2 hours, but not before it was picked up by a small news aggregator. That aggregator published it as fact. A local TV station ran the story. A congressional aide had to issue a public denial.

Wikipedia doesn’t carry legal liability, but it does carry influence. A 2025 survey by the Pew Research Center found that 41% of Americans under 30 use Wikipedia as their first source for breaking news. For many, it’s the only source. If Wikipedia gets it wrong - even briefly - it can shape public understanding before the truth catches up.

And here’s the hardest part: correction doesn’t erase. Even after an error is fixed, the version with the mistake remains in the edit history. Anyone digging deeper can still find it. That means misinformation doesn’t disappear - it just goes underground.

The Trade-Off Isn’t Going Away

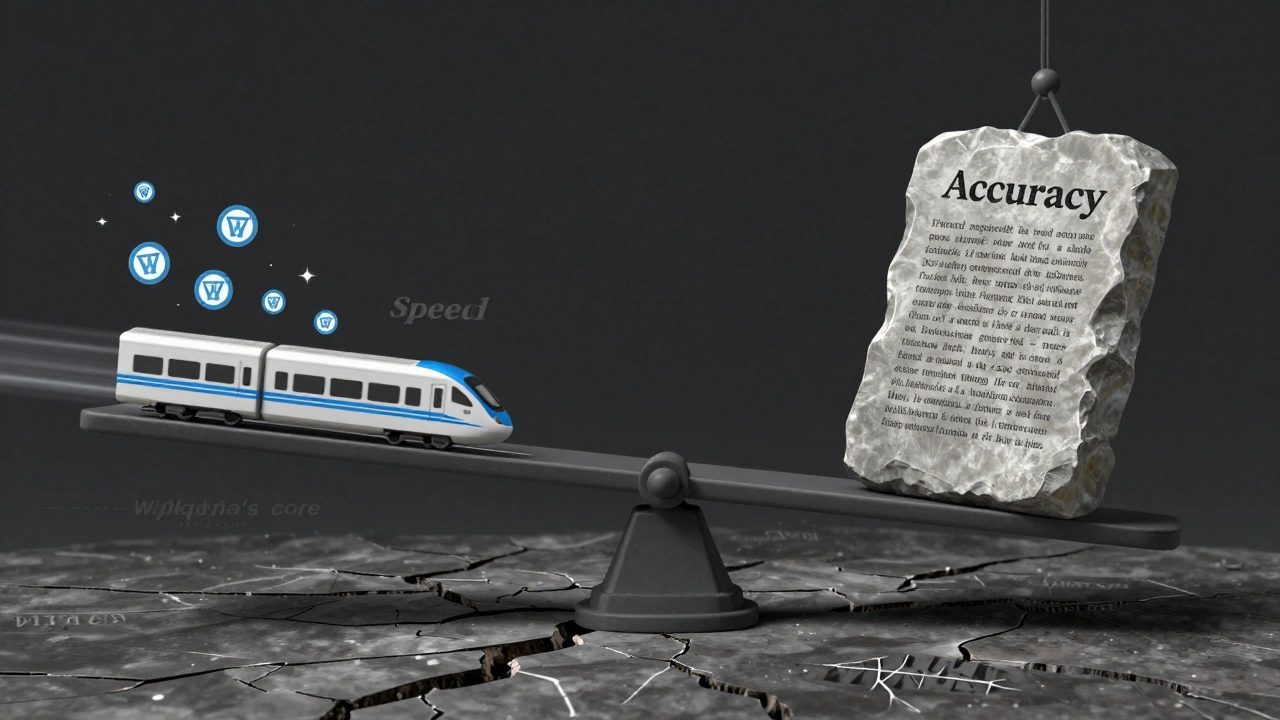

Wikipedia’s news coverage is unique. No other platform combines real-time updates with open access. But that same openness makes it vulnerable. Speed gives it power. Accuracy gives it trust. Right now, the system leans toward speed - because that’s what volunteers naturally do. They want to be first. They want to help.

There are no easy fixes. Increasing automation risks blocking legitimate updates. Hiring staff would break Wikipedia’s volunteer model. Raising the bar for sources might slow it down too much. The tension between speed and accuracy isn’t a bug - it’s the system’s core design.

The real question isn’t whether Wikipedia should be faster or more accurate. It’s whether we can accept that it’s both - and learn to use it that way. Don’t treat it like a newspaper. Treat it like a live feed: check the history, cross-reference with other sources, and remember that the first version is rarely the final one.
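Checking the history doesn’t have to mean clicking through tabs. The sketch below builds a request for the MediaWiki Action API’s `prop=revisions` query and flattens the JSON it returns into a readable list. The helper names (`history_url`, `summarize`), the article title, and the sample response are illustrative assumptions, and the network call itself is left out so the example stays self-contained.

```python
from urllib.parse import urlencode

API = "https://en.wikipedia.org/w/api.php"

def history_url(title, limit=20):
    """Build a URL asking for the most recent revisions of one article."""
    params = {
        "action": "query",
        "prop": "revisions",
        "titles": title,
        "rvprop": "timestamp|user|comment",
        "rvlimit": limit,
        "format": "json",
        "formatversion": 2,  # with version 2, "pages" comes back as a list
    }
    return API + "?" + urlencode(params)

def summarize(response):
    """Return (timestamp, user, comment) tuples from a parsed API response."""
    rows = []
    for page in response.get("query", {}).get("pages", []):
        for rev in page.get("revisions", []):
            # User or comment can be missing when a revision is partly hidden.
            rows.append((rev["timestamp"], rev.get("user", ""), rev.get("comment", "")))
    return rows

# Invented response in the formatversion=2 shape, for illustration only.
sample_response = {
    "query": {
        "pages": [
            {
                "title": "Example event",
                "revisions": [
                    {"timestamp": "2024-01-01T10:05:00Z", "user": "EditorB",
                     "comment": "remove unsourced claim"},
                    {"timestamp": "2024-01-01T10:00:00Z", "user": "EditorA",
                     "comment": "add casualty figure"},
                ],
            }
        ]
    }
}

for timestamp, user, comment in summarize(sample_response):
    print(timestamp, user, comment)
```

To use it for real, fetch `history_url("Some article")` with any HTTP client, parse the body as JSON, and pass the result to `summarize`. An edit summary like "remove unsourced claim" soon after a dramatic addition is a good signal to cross-reference before trusting the current text.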