Imagine if someone could change the definition of "gravity" to mean "the act of being cool" every time you blinked. Chaos would ensue. Yet, on Wikipedia, a platform with over 6 million English articles that are edited more than a hundred thousand times a day, this kind of vandalism happens constantly. The difference is that Wikipedia has a robust system to catch it. At the heart of this system are two forces: human volunteers and automated scripts known as bots. Understanding how bot operators and community oversight work together helps explain why the encyclopedia remains a widely trusted source of information online.

The Role of Bots in Maintaining Accuracy

Bots are not artificial intelligence taking over the world. They are simple computer programs written by humans to perform repetitive tasks. Think of them as digital interns who never sleep and never tire of repetitive work. A typical bot might fix broken links, update infoboxes with new data from trusted sources, or revert obvious vandalism within seconds of it happening.

For example, when a major sports event concludes, a bot can instantly update the scores across hundreds of related articles. Without these tools, editors would spend countless hours manually clicking through pages to ensure consistency. However, because bots can edit at high speeds, they carry significant risk. If a bot’s code contains an error, it could damage thousands of articles before anyone notices. This is why strict controls exist.
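The kind of control described above can be sketched in a few lines. This is a minimal illustration, not how any particular Wikipedia bot is built: `run_batch`, `BatchReport`, and the `apply_edit` callback are hypothetical names, and the limits are arbitrary. The idea is that a batch job caps how many pages it touches, pauses between edits, and stops itself when failures pile up, so a coding error cannot spread across thousands of articles unnoticed.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, Iterable, List


@dataclass
class BatchReport:
    """Outcome of one batch run (hypothetical structure for this sketch)."""
    edited: List[str] = field(default_factory=list)
    failed: List[str] = field(default_factory=list)
    halted: bool = False


def run_batch(
    titles: Iterable[str],
    apply_edit: Callable[[str], bool],
    max_edits: int = 50,
    max_failures: int = 3,
    delay_seconds: float = 0.0,
) -> BatchReport:
    """Apply apply_edit to each title, stopping early when something looks wrong."""
    report = BatchReport()
    for title in titles:
        if len(report.edited) >= max_edits:
            break  # hard cap on successful edits per run
        try:
            ok = apply_edit(title)
        except Exception:
            ok = False  # treat any crash as a failed edit
        if ok:
            report.edited.append(title)
        else:
            report.failed.append(title)
            if len(report.failed) >= max_failures:
                report.halted = True  # too many failures: stop and wait for a human
                break
        time.sleep(delay_seconds)  # throttle between edits
    return report
```

Passing a stub for `apply_edit` that only records titles gives a dry run: the operator can inspect `report.edited` before allowing the script to change anything for real.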

Who Are Bot Operators?

A bot operator is a trusted volunteer user who writes, maintains, and runs automated editing scripts on Wikipedia. Becoming a bot operator isn’t automatic. It typically takes an established editing record, a solid understanding of Wikipedia’s policies, and proven reliability. Most operators start as regular editors, gradually earning trust by fixing errors and contributing high-quality content.

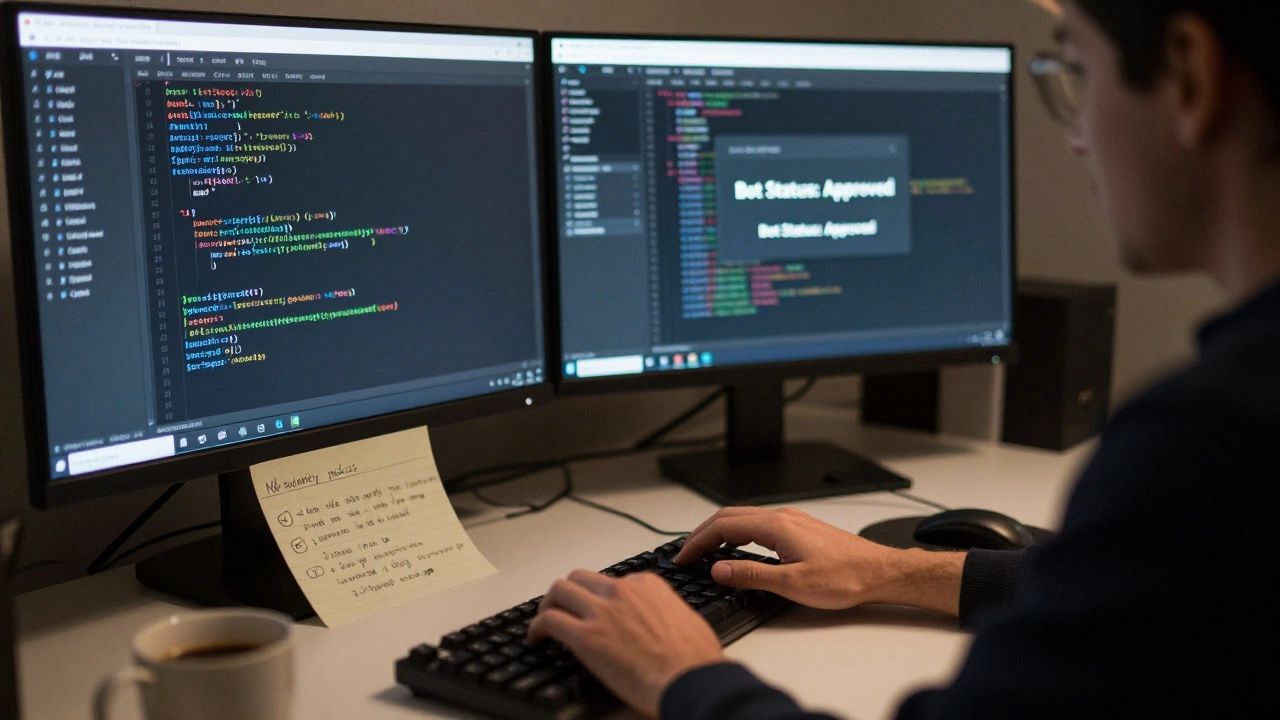

Operators must apply for approval through a formal process. They explain what their bot will do, provide sample edits, and demonstrate that the tool adheres to all guidelines. The community reviews each application carefully. Only after approval is the bot’s account granted the bot flag. The flag marks its edits as automated and hides them by default from the recent changes feed, so routine bot work does not drown out human edits. The operator remains fully responsible for any mistakes.

- Technical Skill: Operators usually know programming languages like Python or JavaScript to build efficient scripts.

- Policy Knowledge: They must understand complex rules about neutrality, verifiability, and copyright.

- Accountability: Every bot action is logged and can be reviewed by other users.
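Because every action is logged, anyone can pull up what a bot account has done. The sketch below assumes the public MediaWiki Action API on English Wikipedia and its `list=usercontribs` query. The account name `ExampleBot` and the helper names `contributions_url` and `summarize` are made up for illustration, and the sample response in the usage note is hand-written rather than real data.

```python
from urllib.parse import urlencode

API_URL = "https://en.wikipedia.org/w/api.php"


def contributions_url(username: str, limit: int = 10) -> str:
    """Build a query URL for an account's most recent contributions."""
    params = {
        "action": "query",
        "list": "usercontribs",
        "ucuser": username,
        "uclimit": limit,
        "ucprop": "title|timestamp|comment",
        "format": "json",
    }
    return API_URL + "?" + urlencode(params)


def summarize(response: dict) -> list:
    """Turn a decoded JSON response into one readable line per edit."""
    rows = response.get("query", {}).get("usercontribs", [])
    return [
        f'{row.get("timestamp", "?")} {row.get("title", "?")}: {row.get("comment", "")}'
        for row in rows
    ]
```

Opening `contributions_url("ExampleBot")` in a browser, or fetching it with any HTTP client, returns JSON that `summarize` can flatten into a list of "when, where, why" lines for review.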

Community Oversight Mechanisms

The term "community oversight" refers to the collective effort of volunteers monitoring changes and enforcing standards. Unlike traditional encyclopedias edited by paid staff, Wikipedia relies on decentralized governance. Anyone can review recent changes, discuss disputes on talk pages, or take part in the discussions that shape policy, where decisions rest on consensus rather than a simple vote count.

This system works because of transparency. All edits are public. You can see who changed what, when, and why. If a bot makes a mistake, another editor can quickly revert it. More importantly, patterns of bad behavior trigger deeper investigations. For instance, if multiple bots begin promoting biased viewpoints, the community may pause bot activities until the issue is resolved.

Key mechanisms include:

- Recent Changes Patrol: Volunteers scan the live feed of edits to catch vandalism or poor-quality contributions.

- Talk Page Discussions: Users debate controversial topics and reach consensus before making large-scale changes.

- Administrators: Experienced editors with special tools to block vandals, protect pages, and delete spam.

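- Arbitration Committee and Ombuds Commission: Independent bodies for the most serious cases. The Arbitration Committee rules on conduct disputes, including misuse of administrator tools, while the Ombuds Commission investigates privacy-related complaints.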

| Feature | Human Editor | Bot |
|---|---|---|
| Speed of Edits | Slow (minutes per article) | Fast (seconds per batch) |
| Error Rate | Higher (typos, bias) | Lower (if coded correctly) |
| Flexibility | High (can handle nuance) | Low (follows predefined rules) |
| Approval Required | No | Yes (formal request) |
| Accountability | Individual editor | Operator, reviewed by the community |

The Approval Process for New Bots

Getting your bot approved is rigorous. First, you file a request on the Bots/Requests for approval page, where members of the Bot Approvals Group and other experienced editors evaluate whether your script aligns with Wikipedia's core principles. You must describe exactly what the bot does, show examples of test runs, and confirm it won’t disrupt ongoing discussions.

If reviewers find potential issues, such as a conflict with another bot or a neutrality violation, they ask for revisions. Sometimes applications take weeks to finalize. Once approved, the bot edits under its own dedicated account, and its actions appear in histories and change feeds marked clearly as “bot.” This ensures everyone knows when automation is involved.
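To show how that marking can be used in practice, here is a small sketch that separates bot edits from human edits in a list of change entries. It assumes entries shaped like the output of the MediaWiki API's `list=recentchanges` query with `rcprop=flags`. To my understanding, the flag shows up as a `bot` key (an empty string in the older JSON format, a boolean in `formatversion=2`), so treat that detail as an assumption. The helper names and sample entries are hypothetical.

```python
from typing import Dict, List, Tuple


def is_bot_edit(change: Dict) -> bool:
    """True when the entry carries the bot flag.

    Assumption: older JSON output signals the flag by the key being present,
    while formatversion=2 uses an explicit boolean.
    """
    return "bot" in change and change["bot"] is not False


def split_by_bot_flag(changes: List[Dict]) -> Tuple[List[Dict], List[Dict]]:
    """Return (bot_edits, human_edits), preserving the original order."""
    bot_edits = [c for c in changes if is_bot_edit(c)]
    human_edits = [c for c in changes if not is_bot_edit(c)]
    return bot_edits, human_edits


# Hand-written sample entries, not real data.
sample_changes = [
    {"title": "Gravity", "user": "ExampleBot", "bot": ""},
    {"title": "Gravity", "user": "SomeEditor"},
    {"title": "Moon", "user": "AnotherBot", "bot": True},
    {"title": "Moon", "user": "OtherEditor", "bot": False},
]

bots, humans = split_by_bot_flag(sample_changes)
```

A patroller could use a split like this to concentrate on human edits first while still keeping the bot edits available for spot checks.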

Even after approval, operators must maintain their bots regularly. Policies change, software updates occur, and new threats emerge. Neglecting maintenance can lead to revoked status. In rare cases, malicious actors have attempted to misuse bots for propaganda or advertising. These incidents reinforce why oversight matters so much.

Challenges Facing Modern Oversight

As Wikipedia grows, so do its challenges. With millions of articles, tracking every change becomes harder. Some critics argue that reliance on bots reduces human interaction, potentially weakening community bonds. Others worry about algorithmic bias: if a bot learns from flawed data, it might spread inaccuracies systematically.

Another concern involves coordination between different language editions. A bot working well on English Wikipedia might fail completely on Spanish Wikipedia due to cultural differences in citation styles or topic coverage. Cross-language collaboration helps mitigate these problems, but it requires extra effort.

Despite these hurdles, the system continues evolving. Tools like ORES (Objective Revision Evaluation Service), which uses machine learning to predict the quality of edits automatically, assist reviewers by flagging suspicious activity early. Such innovations balance efficiency with accuracy.
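A rough sketch of how such scores might be put to work: given a probability that each revision is damaging, reviewers look first at the ones above a cutoff. The input format here (a plain mapping from revision ID to probability), the function name `triage`, and the 0.8 threshold are all assumptions for illustration. This is not the ORES response format or its API.

```python
from typing import Dict, List


def triage(scores: Dict[int, float], threshold: float = 0.8) -> List[int]:
    """Return revision IDs at or above the threshold, most suspicious first."""
    flagged = [(rev_id, p) for rev_id, p in scores.items() if p >= threshold]
    flagged.sort(key=lambda item: item[1], reverse=True)
    return [rev_id for rev_id, _ in flagged]


# Hypothetical scores: revision ID -> estimated probability the edit is damaging.
example_scores = {101: 0.95, 102: 0.10, 103: 0.85}
review_queue = triage(example_scores)
```

The threshold is a trade-off: lowering it catches more bad edits but sends more harmless ones to human reviewers, so the final decision stays with a person either way.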

Why Transparency Builds Trust

Transparency is Wikipedia’s greatest strength. Every decision, every edit, and every dispute resolution is documented publicly. Readers don’t just accept information blindly; they can verify claims themselves. This openness fosters trust among users worldwide.

When controversies arise, such as debates over historical figures or scientific theories, the community engages openly. Instead of hiding disagreements, editors surface them for discussion. Over time, this process refines content and strengthens credibility. Even skeptics acknowledge that few other platforms offer such detailed visibility into editorial processes.

Future Directions for Bot Governance

Looking ahead, several trends shape bot governance. Artificial intelligence promises smarter detection systems capable of identifying subtle forms of manipulation. Blockchain technology could enhance audit trails, making tampering nearly impossible. Meanwhile, increased diversity among bot operators ensures broader perspectives inform automated decisions.

Educational initiatives also play a crucial role. Teaching newcomers about responsible bot usage prevents misuse before it starts. Workshops, tutorials, and mentorship programs help aspiring operators develop ethical practices alongside technical skills.

Ultimately, the goal remains unchanged: preserve Wikipedia’s mission to share free knowledge globally. By combining human judgment with machine precision, bot operators and communities continue safeguarding this vital resource for future generations.

What is a bot operator on Wikipedia?

A bot operator is a trusted volunteer who creates and manages automated scripts to perform routine tasks on Wikipedia, such as fixing links or reverting vandalism. They must undergo a formal approval process to ensure their tools comply with community guidelines.

How does community oversight prevent abuse?

Community oversight involves volunteers reviewing edits, discussing disputes, and enforcing policies through transparent processes. Administrators handle day-to-day violations, and bodies such as the Arbitration Committee address the most serious ones, ensuring accountability for both humans and bots.

Can anyone become a bot operator?

No. Candidates must demonstrate a solid editing track record, technical proficiency, and adherence to Wikipedia’s rules. Applications require peer review and explicit approval before activation.

What happens if a bot makes a mistake?

Mistakes are logged publicly and can be reverted immediately by other editors. Persistent errors may result in temporary suspension or permanent revocation of bot privileges depending on severity.

Are there risks associated with using bots?

Yes. Poorly designed bots can propagate errors rapidly, introduce bias, or conflict with existing workflows. Strict oversight minimizes these risks while maximizing benefits.