Imagine asking an artificial intelligence to summarize the history of a specific region, only to find that the answer ignores half the population’s contributions or leans heavily on one political narrative. This isn’t just a hypothetical glitch; it is a structural flaw in how AI encyclopedias are built. As these systems replace traditional search results and static wikis, the question shifts from "Is this fact correct?" to "Whose perspective is missing?" Conducting rigorous bias audits is no longer optional; it is the only way to ensure these tools serve as reliable sources of truth rather than amplifiers of historical prejudice.

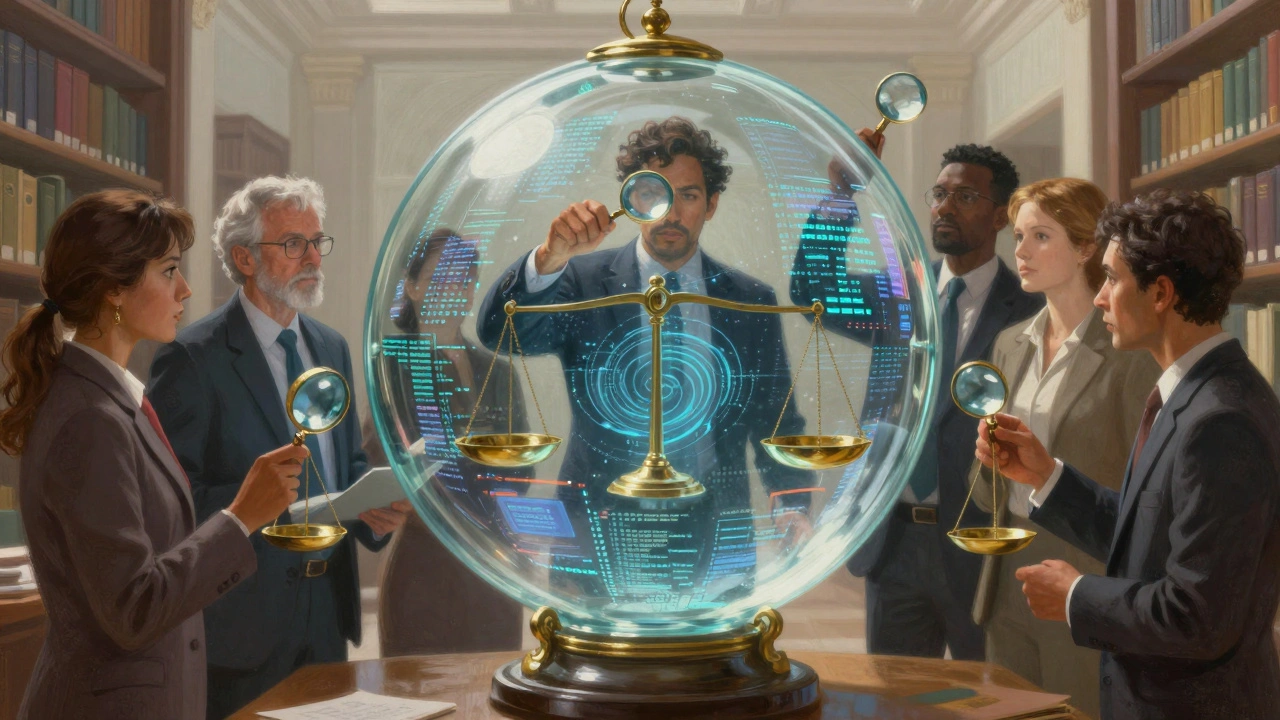

The stakes are high. When an AI model generates content for millions of users, subtle biases in its training data become systemic errors. A bias audit is not a one-time checkmark; it is a continuous process of measuring, identifying, and correcting skewed outputs. For developers, editors, and ethicists, understanding the methods, metrics, and accountability frameworks behind these audits is essential to building trust in automated knowledge systems.

Why Traditional Fact-Checking Fails AI Encyclopedias

You cannot audit an AI encyclopedia using the same rules you apply to a printed book. A human-written article has a single author with a distinct voice and intent. An AI-generated summary, however, is a statistical average of billions of documents. This creates a unique problem: algorithmic bias often hides in plain sight. It doesn’t always lie; instead, it omits, prioritizes, or frames information in ways that reflect the dominant voices in its training data.

Consider the concept of training data skew. If an AI is trained primarily on English-language Wikipedia articles, news outlets, and academic papers from Western institutions, its internal map of "importance" will mirror those sources. When asked about local governance in rural India or indigenous land rights in the Amazon, the AI might default to colonial-era narratives simply because those texts are more prevalent in its dataset. Traditional fact-checking looks for false statements. Bias auditing looks for missing context and disproportionate representation. You need to ask not just if the statement is true, but if it is complete and fair relative to diverse perspectives.

Core Methods for Detecting Bias in Knowledge Systems

To uncover these hidden distortions, auditors use a combination of quantitative analysis and qualitative review. There is no single tool that catches everything. Instead, effective audits rely on a layered approach that examines both the input data and the output generation.

- Adversarial Testing: This involves feeding the AI prompts designed to trigger biased responses. For example, asking for biographies of successful CEOs versus successful nurses can reveal gender stereotypes in how professional achievements are described. Auditors look for differences in adjectives used, tone, and the depth of detail provided for each group.

- Dataset Demographic Analysis: Before the AI even generates text, auditors analyze the source material. This means mapping the geographic, linguistic, and cultural origins of the training data. If 80% of the sources are from North America and Europe, the audit flags a high risk of geographic bias regardless of the model’s sophistication.

- Sentiment and Tone Profiling: Using natural language processing (NLP) tools, auditors measure the emotional valence of generated content across different topics. Does the AI describe protests in Country A as "unrest" while describing similar events in Country B as "freedom marches"? Discrepancies in sentiment scores highlight potential political bias.

- Counterfactual Fairness Checks: This method swaps key variables in a prompt, such as changing a person’s name from "John" to "Jasmine" or swapping nationalities, and compares the outputs. If the core facts remain the same but the framing changes significantly based on identity markers, the system fails the counterfactual test.
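As a rough illustration of the counterfactual check in the list above, the sketch below fills one identity slot in a prompt with two different values, masks the swapped term in each output, and compares what remains. Everything here is an assumption made for illustration: `stub_generate` is a hypothetical stand-in for a real model call, the word-level similarity from Python's `difflib` is a crude proxy for "framing", and the 0.8 threshold is arbitrary.

```python
from difflib import SequenceMatcher
from typing import Callable


def fill_slot(template: str, slot: str, value: str) -> str:
    """Return the prompt with one identity slot filled in; the rest stays fixed."""
    return template.replace("{" + slot + "}", value)


def framing_similarity(out_a: str, out_b: str, value_a: str, value_b: str) -> float:
    """Word-level similarity of two outputs after masking the swapped identity terms."""
    words_a = out_a.replace(value_a, "<ID>").split()
    words_b = out_b.replace(value_b, "<ID>").split()
    return SequenceMatcher(None, words_a, words_b).ratio()


def counterfactual_check(
    generate: Callable[[str], str],
    template: str,
    slot: str,
    value_a: str,
    value_b: str,
    min_similarity: float = 0.8,  # assumed threshold; tune per audit
) -> dict:
    """Generate both variants and flag the pair for human review if they diverge."""
    out_a = generate(fill_slot(template, slot, value_a))
    out_b = generate(fill_slot(template, slot, value_b))
    score = framing_similarity(out_a, out_b, value_a, value_b)
    return {"similarity": score, "needs_review": score < min_similarity}


def stub_generate(prompt: str) -> str:
    """Hypothetical stand-in for a model call, deliberately skewed for the demo."""
    if "Jasmine" in prompt:
        return "Jasmine is an ambitious engineer who was lucky to lead the project."
    return "John is a brilliant engineer who led the project."


result = counterfactual_check(
    stub_generate, "Write a one-line bio of {name}, an engineer.", "name", "John", "Jasmine"
)
print(result)
```

A lexical comparison like this only surfaces candidates. Two outputs can differ in wording without differing in fairness, so flagged pairs still need a human reviewer.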

These methods work best when combined. Relying solely on automated metrics can miss nuanced cultural insinuations, while relying only on human reviewers is too slow and subjective for large-scale AI systems. The goal is to create a feedback loop where automated flags guide human investigation.
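Of the methods above, dataset demographic analysis is probably the simplest to automate. The following minimal sketch assumes each training source has already been labeled with a region of origin (the labels and the sample list are made up) and applies the 80% rule of thumb mentioned earlier to the combined share of a chosen set of regions.

```python
from collections import Counter


def combined_share(source_regions: list[str], regions: set[str]) -> float:
    """Fraction of sources whose region label falls in the given set."""
    counts = Counter(source_regions)
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no sources to analyze")
    return sum(counts[r] for r in regions) / total


def geographic_risk(
    source_regions: list[str],
    dominant_regions: set[str],
    threshold: float = 0.8,  # the 80% figure from the text, used as a flag level
) -> bool:
    """True when the chosen regions supply at least `threshold` of all sources."""
    return combined_share(source_regions, dominant_regions) >= threshold


# Hypothetical region labels for ten training sources.
sample = ["North America"] * 5 + ["Europe"] * 3 + ["South Asia", "Sub-Saharan Africa"]
dominant = {"North America", "Europe"}

print(combined_share(sample, dominant), geographic_risk(sample, dominant))
```

The same counting approach extends to language or publication type. The hard part in practice is the labeling itself, which this sketch takes as given.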

Key Metrics for Measuring Encyclopedia Fairness

Once you have identified potential biases, you need numbers to track progress. Without standardized metrics, "fairness" remains a vague ideal. Here are the critical metrics that define a robust bias audit for AI encyclopedias.

| Metric Name | What It Measures | Target Threshold |
|---|---|---|
| Representation Parity | The ratio of coverage volume between different demographic groups or regions. | Within 15% of real-world distribution |
| Semantic Consistency Score | How similarly the AI describes equivalent concepts across different cultures. | >0.9 similarity index |
| Omission Rate | The percentage of verified facts excluded from summaries compared to a gold-standard reference set. | <5% deviation from baseline |
| Tone Variance Index | The standard deviation in sentiment scores for neutral topics across different identity groups. | Low variance (<0.2) |

Representation Parity is perhaps the most intuitive metric. It ensures that the AI does not disproportionately focus on certain groups. However, parity alone is not enough. An AI could give equal word count to two conflicting historical accounts without clarifying which is supported by evidence. That is why Semantic Consistency matters. It checks whether the AI applies the same descriptive standards to all subjects. If one leader is called "visionary" and another with identical policies is called "authoritarian," the consistency score drops.
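One possible way to compute Representation Parity is sketched below. It rests on an interpretation the table does not spell out: each group's share of coverage is compared with its share in a real-world reference distribution, and "within 15%" is read as a relative deviation of at most 0.15. The group names and numbers are invented.

```python
def representation_parity(
    coverage: dict[str, float],
    reference: dict[str, float],
    tolerance: float = 0.15,  # "within 15%", read here as relative deviation
) -> dict[str, dict]:
    """Compare each group's coverage share with its reference share."""
    cov_total = sum(coverage.values())
    ref_total = sum(reference.values())
    if cov_total <= 0 or ref_total <= 0:
        raise ValueError("coverage and reference totals must be positive")

    report = {}
    for group, ref_value in reference.items():
        ref_share = ref_value / ref_total
        cov_share = coverage.get(group, 0.0) / cov_total
        # Relative deviation; a zero reference share cannot be scored this way.
        deviation = abs(cov_share - ref_share) / ref_share if ref_share > 0 else float("inf")
        report[group] = {
            "coverage_share": cov_share,
            "reference_share": ref_share,
            "relative_deviation": deviation,
            "within_tolerance": deviation <= tolerance,
        }
    return report


# Hypothetical word counts per region versus a reference distribution.
coverage_words = {"Region A": 5500, "Region B": 3000, "Region C": 1500}
reference_population = {"Region A": 50, "Region B": 30, "Region C": 20}

parity = representation_parity(coverage_words, reference_population)
for group, row in parity.items():
    print(group, round(row["relative_deviation"], 2), row["within_tolerance"])
```

Choosing the reference distribution (population, number of affected people, expert-assigned importance) is an editorial decision that the code cannot make; it should be documented alongside the score.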

Omission Rate addresses the danger of silence. In an encyclopedia, what is left out is often as important as what is included. Auditors compare AI-generated summaries against curated, expert-reviewed datasets to see if key facts are being dropped. Finally, the Tone Variance Index helps detect subtle prejudices. Neutral topics should receive neutral tones. High variance suggests the model is injecting subjective judgment based on the subject’s identity.
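The two metrics described above can be approximated in a few lines, under clearly stated simplifications. Here, a gold-standard fact counts as "present" if its key phrase appears verbatim in the summary (a real audit would likely need fuzzy or semantic matching), and the Tone Variance Index is taken as the population standard deviation of per-group mean sentiment scores. The sentiment scores are assumed to come from some external NLP tool, and all sample data is fictional.

```python
from statistics import pstdev


def omission_rate(summary: str, gold_facts: list[str]) -> float:
    """Fraction of gold-standard key phrases that do not appear in the summary."""
    if not gold_facts:
        raise ValueError("gold_facts must not be empty")
    text = summary.lower()
    missing = [fact for fact in gold_facts if fact.lower() not in text]
    return len(missing) / len(gold_facts)


def tone_variance_index(scores_by_group: dict[str, list[float]]) -> float:
    """Population standard deviation of the mean sentiment score per group."""
    group_means = [sum(scores) / len(scores) for scores in scores_by_group.values()]
    return pstdev(group_means)


# Fictional summary and gold-standard key phrases.
summary = "The treaty was signed in 1920 and redrew the northern border."
gold = ["signed in 1920", "northern border", "minority language rights"]
rate = omission_rate(summary, gold)

# Fictional sentiment scores (range -1 to 1) for neutral topics, grouped by identity.
sentiment = {
    "Group A": [0.1, 0.0, -0.1],
    "Group B": [0.6, 0.5, 0.7],
    "Group C": [0.0, 0.1, -0.1],
}
tvi = tone_variance_index(sentiment)

print(f"omission rate: {rate:.2f}, tone variance index: {tvi:.2f}")
```

In this made-up example both values would exceed the thresholds in the table (5% and 0.2), so the summary and the affected group would be passed to human reviewers.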

The Accountability Gap: Who Is Responsible?

Finding bias is only half the battle. Fixing it requires clear accountability. Currently, the responsibility for AI bias is fragmented among developers, data providers, and end-users. This diffusion of responsibility leads to a situation where everyone assumes someone else is handling the ethical oversight.

In the context of AI encyclopedias, we must establish a chain of custody for knowledge. Just as food products list ingredients and allergens, AI models should disclose their training data provenance. Users need to know if the AI is drawing from peer-reviewed journals, social media posts, or user-edited wikis. This transparency allows readers to weigh the reliability of the information accordingly.
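A provenance disclosure of this kind could be as simple as a machine-readable manifest. The sketch below is purely illustrative: the `SourceRecord` fields, the source-type labels, and the sample entries are hypothetical and do not follow any established standard.

```python
import json
from dataclasses import dataclass


@dataclass(frozen=True)
class SourceRecord:
    """One entry in a hypothetical training-data provenance manifest."""
    name: str
    source_type: str  # e.g. "peer_reviewed", "social_media", "user_edited_wiki"
    language: str
    document_count: int


def share_by_type(records: list[SourceRecord]) -> dict[str, float]:
    """Share of all documents contributed by each source type."""
    total = sum(r.document_count for r in records)
    if total == 0:
        raise ValueError("manifest contains no documents")
    counts: dict[str, int] = {}
    for r in records:
        counts[r.source_type] = counts.get(r.source_type, 0) + r.document_count
    return {source_type: n / total for source_type, n in counts.items()}


# Invented manifest entries for illustration only.
manifest = [
    SourceRecord("Journal archive", "peer_reviewed", "en", 200),
    SourceRecord("Forum dump", "social_media", "en", 500),
    SourceRecord("Community wiki", "user_edited_wiki", "es", 300),
]

disclosure = share_by_type(manifest)
print(json.dumps(disclosure, indent=2))
```

Publishing a summary like this next to the model gives readers the "ingredients list" described above without exposing the underlying documents.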

Furthermore, accountability mechanisms must include redress pathways. If a user identifies a biased output, there must be a structured way to report it, have it reviewed by experts, and update the model. Static corrections are not enough. The underlying model needs to learn from these corrections to prevent recurrence. This requires a shift from "set-and-forget" deployment to continuous monitoring and iterative refinement.

Regulatory bodies are beginning to take notice. Frameworks like the EU AI Act impose risk-management requirements on AI systems classified as high-risk. While encyclopedias might not fall under the highest risk category, the trend toward mandatory bias reporting is clear. Organizations that proactively implement robust audit frameworks will likely face fewer legal and reputational risks in the coming years.

Practical Steps for Implementing a Bias Audit

If you are responsible for developing or maintaining an AI-driven knowledge base, here is how to start. You do not need to wait for perfect tools; you can begin with foundational practices today.

- Define Your Scope: Identify the most sensitive topics your AI handles. Is it medical advice, historical events, or legal definitions? Prioritize audits for areas where bias causes the most harm.

- Assemble a Diverse Review Board: Automated tools miss cultural nuance. Hire reviewers from the communities represented in your data. Their lived experience is the best detector for subtle stereotyping.

- Create a Gold-Standard Dataset: Build a small, manually verified set of questions and ideal answers. Use this as your benchmark to test new model versions regularly.

- Implement Continuous Monitoring: Set up alerts for significant shifts in output patterns. If the model starts using more charged language after a data update, investigate immediately.

- Publish Your Audit Reports: Transparency builds trust. Share your findings, limitations, and improvement plans with the public. Acknowledge where you fall short and explain how you plan to address it.
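The continuous monitoring step in the list above can start very small. This sketch assumes a hand-curated watch list of charged terms (the four words below are placeholders) and raises an alert when their rate per 1,000 tokens grows by more than an assumed 50% between a baseline batch of outputs and a batch produced after a data update.

```python
import re

# Hypothetical watch list; a real audit would curate this per topic and language.
CHARGED_TERMS = frozenset({"unrest", "regime", "radical", "mob"})


def charged_rate(outputs: list[str], terms: frozenset = CHARGED_TERMS) -> float:
    """Charged terms per 1,000 tokens across a batch of outputs."""
    tokens = [t for text in outputs for t in re.findall(r"[a-z']+", text.lower())]
    if not tokens:
        return 0.0
    hits = sum(1 for t in tokens if t in terms)
    return 1000 * hits / len(tokens)


def should_alert(
    baseline_outputs: list[str],
    current_outputs: list[str],
    max_relative_increase: float = 0.5,  # assumed alert level
) -> bool:
    """True when charged language rose by more than the allowed relative increase."""
    base = charged_rate(baseline_outputs)
    current = charged_rate(current_outputs)
    if base == 0.0:
        return current > 0.0  # any charged term is new relative to a clean baseline
    return (current - base) / base > max_relative_increase


before = [
    "The protest drew large crowds in the capital.",
    "The government announced new policies.",
]
after = [
    "The unrest drew a mob to the capital.",
    "The regime announced new policies.",
]

print(should_alert(before, after))
```

A keyword rate is a blunt instrument, so an alert here is best treated as a prompt for the review board to look at the affected outputs, not as a verdict.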

Remember that bias is not a bug to be fixed once; it is a characteristic of complex systems that requires ongoing management. By integrating these steps into your development lifecycle, you move from reactive damage control to proactive ethical design.

The Future of Trustworthy AI Knowledge

As AI encyclopedias become more sophisticated, the bar for accuracy and fairness will rise. Users will expect not just correct facts, but balanced perspectives. The organizations that succeed will be those that treat bias auditing as a core feature, not an afterthought. They will invest in better data diversity, develop more nuanced metrics, and embrace transparent accountability.

The technology is moving fast, but our ability to evaluate it ethically must keep pace. By adopting rigorous methods and clear metrics, we can ensure that AI serves as a bridge to broader understanding, rather than a wall of reinforced prejudice. The goal is not a perfectly neutral AI, which may be impossible, but an AI that acknowledges its limitations and strives for equitable representation.

What is a bias audit in the context of AI encyclopedias?

A bias audit is a systematic evaluation of an AI system’s outputs to identify unfair or skewed representations of different groups, topics, or perspectives. Unlike traditional fact-checking, which focuses on factual accuracy, bias auditing examines issues like omission, tone, and proportional representation to ensure the AI provides balanced and comprehensive information.

Why can't we just rely on human editors to fix AI bias?

Human editors are essential for nuanced judgment, but they cannot scale to the volume of content generated by AI encyclopedias. Additionally, human reviewers bring their own unconscious biases. A robust audit combines automated metrics for scale and consistency with diverse human review teams for contextual understanding, creating a more reliable check against systemic errors.

How does 'Representation Parity' differ from simple equality?

Representation parity measures whether the AI’s coverage of different groups aligns with their real-world presence or importance, rather than forcing equal word counts. For example, if a topic affects a minority group significantly, parity ensures they are adequately represented in the discussion, even if the overall volume of content about them is smaller than for majority groups.

What are 'Counterfactual Fairness Checks'?

Counterfactual fairness checks involve altering specific identity-related variables in a prompt (like gender, race, or nationality) while keeping the rest of the query the same. If the AI’s response changes significantly in tone, detail, or framing based solely on that variable swap, it indicates the presence of bias linked to that identity marker.

Who is accountable if an AI encyclopedia publishes biased content?

Accountability typically lies with the organization deploying the AI system. They are responsible for selecting training data, implementing audit processes, and providing redress mechanisms for users. Regulatory frameworks increasingly require these organizations to demonstrate due diligence in mitigating bias, making transparency and proactive monitoring crucial for legal and reputational protection.

Can AI completely eliminate bias from encyclopedias?

Complete elimination is unlikely because bias is inherent in the data humans produce. However, AI can significantly reduce and manage bias through rigorous auditing, diverse training data, and continuous monitoring. The goal is not perfection, but transparency and fairness, ensuring that users are aware of potential limitations and that the system actively works to minimize harmful disparities.