The Great Filter Update

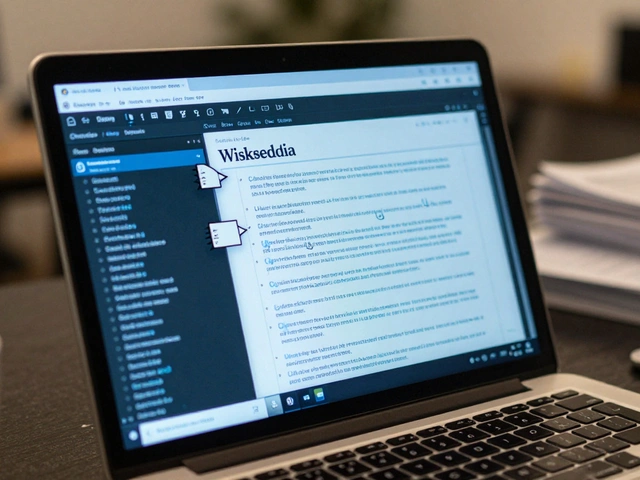

By April 2026, Wikipedia, the free online encyclopedia, has fundamentally shifted its stance on artificial intelligence. For years, the community debated how to handle text churned out by algorithms. Now, the dust has settled, and the "New AI Content Standards" are live. If you edit Wikipedia, or even just read it critically, these changes matter more than you think.

You might remember the chaos of 2024 and 2025. Editors were drowning in pages filled with smooth-sounding gibberish: facts hallucinated by generative engines and citations pointing to non-existent books. The platform couldn't function under that weight. The new policies aren't just suggestions; they are hard lines drawn in the digital sand. They define exactly what counts as acceptable help versus prohibited automation.

Understanding the Core Restrictions

At the heart of the 2026 update lies the Mandatory Disclosure Protocol. Previously, using an AI tool to rewrite a paragraph was a gray area. Today, it is strictly regulated. Any editor who utilizes a Large Language Model to draft, summarize, or rephrase text must tag that section with a specific hidden metadata flag.

- Drafting Assistance: Allowed only for grammar correction or style improvements on existing verified text.

- Factual Generation: Strictly prohibited for creating new claims without primary source verification.

- Voice Matching: You cannot ask the AI to mimic a human editor's unique writing style.

This isn't paranoia; it's risk management. A report from late 2025 showed that nearly 15% of minor edits were indistinguishable from bot-written spam. The goal of these restrictions is to preserve the human element of curation while accepting that tools evolve.

How Editors Detect AI Text

Detection technology is no longer guesswork. In 2026, the wiki software integrates directly with detection APIs provided by independent auditors. When you hit "Save," the system runs a probabilistic check against known algorithmic patterns. However, these tools aren't magic wands.

The reality is much more granular than a simple "AI Detected" button. Editors look for three specific tell-tale signs:

- Uniform Sentence Structure: AI tends to write with a predictable rhythm. Human editors have erratic sentence lengths.

- Citation Mismatch: Often, the text cites a paper correctly but misses a specific nuance found deeper in that source, say on page 42. Humans catch this; automated scripts don't always notice.

- Stale Data Patterns: Many models trained on older datasets repeat outdated statistics. Spotting numbers from 2021 used in a 2026 article triggers immediate flags.
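Two of these signs lend themselves to a rough numeric check. The sketch below is only an illustration, not the wiki software's actual detector: the function names, the coefficient-of-variation measure, and the `max_age` cutoff are all assumptions made for the example.

```python
import re
import statistics


def sentence_lengths(text: str) -> list[int]:
    """Split on ., ! and ? and return the word count of each non-empty sentence."""
    sentences = [s.strip() for s in re.split(r"[.!?]+", text) if s.strip()]
    return [len(s.split()) for s in sentences]


def length_variation(text: str) -> float:
    """Coefficient of variation of sentence lengths (0.0 means perfectly uniform)."""
    lengths = sentence_lengths(text)
    if len(lengths) < 2:
        return 0.0
    mean = statistics.mean(lengths)
    return statistics.pstdev(lengths) / mean if mean else 0.0


def stale_years(text: str, current_year: int, max_age: int = 3) -> list[int]:
    """Return four-digit years in the text that are more than max_age years old.

    max_age is an assumed cutoff chosen for illustration.
    """
    years = [int(y) for y in re.findall(r"\b(?:19|20)\d{2}\b", text)]
    return sorted({y for y in years if current_year - y > max_age})
```

A low `length_variation` score or a non-empty `stale_years` result is at most a prompt for a human to look closer. Legitimate historical dates would also show up as "stale", so neither number is proof of anything on its own.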

| Method | Permitted? | Risk Level |
|---|---|---|
| Manual Typing | Yes | Low |
| AI Grammar Fix | Yes (with tag) | Low |
| Full Paragraph Draft | No | High |
| Fact Retrieval via Tool | Yes (if cited) | Medium |

This table reflects the practical application of the rules for generative AI (technology capable of producing text). If an editor crosses the line into high-risk territory, their account gets flagged for manual review by senior administrators. Repeat offenses result in bans that can last months, regardless of whether the contribution improved the article superficially.
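For readers who think in code, the table above can be written as a small lookup. This is a minimal sketch: the `Rule` class, the dictionary keys, and `needs_manual_review` are hypothetical names invented for the example, and only the row values come from the table.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Rule:
    permitted: bool
    condition: Optional[str]  # extra requirement listed in the table, if any
    risk: str


# Row values copied from the table; the keys are illustrative names.
RULES = {
    "manual_typing": Rule(True, None, "Low"),
    "ai_grammar_fix": Rule(True, "with tag", "Low"),
    "full_paragraph_draft": Rule(False, None, "High"),
    "fact_retrieval_via_tool": Rule(True, "if cited", "Medium"),
}


def needs_manual_review(method: str) -> bool:
    """High-risk methods are the ones described as triggering manual review."""
    return RULES[method].risk == "High"
```

Calling `needs_manual_review("full_paragraph_draft")` returns `True`, while the other three methods return `False`.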

Impact on Article Accuracy

Why did the Wikimedia Foundation, the non-profit organization behind Wikipedia, take such a firm stance? It comes down to trust. If a reader assumes everything on the site is human-verified, confidence skyrockets. If they suspect random bots filled the gaps, value plummets.

Recent audits indicate that articles fully written by humans have a 98% retention rate over five years. Conversely, sections initially polished by untagged AI tools had significantly higher vandalism rates. Why? Because bad actors found that AI-generated text was easier to manipulate subtly. By mandating transparency, the new policy forces accountability back onto individual usernames.

What About Citations?

Citations are the backbone of the project. The 2026 guidelines tighten the screws here. Previously, if you cited a link, the truthfulness was presumed. Now, the burden of proof shifts to the editor.

If a statement includes data, dates, or complex claims, the reference must link to a stable URL. Dead links are treated similarly to missing references. Furthermore, citing AI outputs as a source is explicitly banned. You can't say, "According to Google Gemini..." because the model has no editorial responsibility. Sources must be traceable to a real-world publisher, author, or institution.
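The citation rules in this section can be sketched as a simple checklist. Everything here is an assumption made for illustration: the `Citation` fields, the `link_alive` flag (imagined as coming from a separate link checker), and the one-entry `AI_SOURCE_NAMES` set, which only echoes the example named above.

```python
from dataclasses import dataclass
from typing import Optional

# Illustrative only: the single entry echoes the example in the text.
AI_SOURCE_NAMES = {"google gemini"}


@dataclass
class Citation:
    url: Optional[str]
    publisher: Optional[str] = None
    author: Optional[str] = None
    link_alive: bool = True  # assumed to be supplied by a separate link checker


def citation_problems(c: Citation) -> list[str]:
    """Return the list of rule violations for one citation (empty if none)."""
    problems = []
    # Dead links are treated the same as missing references.
    if not c.url or not c.link_alive:
        problems.append("missing or dead link")
    named = [n for n in (c.publisher, c.author) if n]
    # A source must be traceable to a real publisher or author.
    if not named:
        problems.append("no traceable publisher or author")
    # Citing an AI tool itself as the source is banned.
    if any(n.lower() in AI_SOURCE_NAMES for n in named):
        problems.append("AI output cited as a source")
    return problems
```

An empty list means the citation passes these three checks. It says nothing about whether the source actually supports the claim, which still needs a human reader.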

This distinction helps separate using tools from citing tools. Using a dictionary app to check spelling is fine. Using a chatbot to find a quote and then citing the chatbot is a direct violation of the core principles of attribution.

Community Enforcement Challenges

The rules sound simple on paper, but enforcement relies entirely on the volunteer army. Volunteer editors, the contributors who maintain the content, bear the brunt of this work. Burnout was a major concern during the 2025 rollout phase.

To combat this, new "Patrol Squadrons" were formed. These are veteran editors dedicated to scanning recent edits for policy breaches rather than focusing on vandalism alone. They utilize specialized dashboards that highlight statistical anomalies in an editor's workflow. For instance, if someone edits 50 pages in two hours with zero deletions and perfect grammar, the dashboard turns red.

However, false positives remain an issue. A highly skilled writer might trigger an alert simply because they are efficient. Administrators emphasize that context matters. One-off efficiency doesn't equal cheating, but a pattern does. This balance ensures genuine contributors aren't penalized for speed, while malicious bots are throttled.
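The difference between a one-off alert and a pattern can be made concrete with a small sketch. It is not the patrol dashboard's real logic. The 25-pages-per-hour limit is derived from the "50 pages in two hours" example, `min_flagged` is an assumed threshold, and the "perfect grammar" signal is left out because nothing here can measure it.

```python
from dataclasses import dataclass


@dataclass
class Session:
    pages_edited: int
    hours: float
    deletions: int


def is_anomalous(s: Session, pages_per_hour_limit: float = 25.0) -> bool:
    """Flag a session at or above the rate limit with no deletions at all."""
    if s.hours <= 0:
        return False
    return s.pages_edited / s.hours >= pages_per_hour_limit and s.deletions == 0


def shows_pattern(sessions: list[Session], min_flagged: int = 3) -> bool:
    """Escalate only when several sessions are anomalous, not a single one."""
    return sum(is_anomalous(s) for s in sessions) >= min_flagged
```

With these assumed thresholds, a single fast session trips `is_anomalous` but not `shows_pattern`, which mirrors the administrators' point that context matters.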

Legal and Copyright Implications

A huge driver for these updates was legal uncertainty surrounding copyright law. As of early 2026, courts in various jurisdictions have begun ruling that purely machine-generated works may not qualify for traditional copyright protection. Wikipedia's license (Creative Commons Attribution-ShareAlike) requires human authorship.

By banning undisclosed AI generation, Wikipedia protects its own legal standing. If the site published unverified robot text, it could face challenges regarding ownership and licensing validity. The intellectual property standards ensure that the knowledge base remains legally clear for downstream users, like teachers or journalists who repurpose the material.

This alignment with copyright law, the body of law protecting original creative works, makes the policy robust beyond just community preference. It shields the project from potential litigation over misinformation that could be traced back to uncited automated sources.

Looking Forward: 2027 and Beyond

These policies aren't static. We expect them to evolve again by late 2027 as AI capabilities improve further. The focus currently sits on detection and transparency. The next wave will likely address reasoning tools: systems that can actually browse the web and cross-reference multiple papers.

Until then, the rule of thumb is simple: Your eyes, your brain, your responsibility. Technology helps you type faster, but it shouldn't type for you without your full consent and oversight. Whether you are a casual reader or a seasoned editor, understanding these boundaries helps keep the information ecosystem healthy.

Can I use AI to correct my spelling errors?

Yes, basic grammar and spelling fixes are permitted, but you should disclose significant structural changes made by AI tools in the edit summary.

What happens if I accidentally use AI to write a paragraph?

First offense usually results in a warning and removal of the content. Repeated violations without disclosure lead to temporary editing blocks.

Are there penalties for readers who flag fake AI content?

No penalties exist for reporting concerns. However, excessive unfounded accusations can disrupt community consensus.

Does Wikipedia ban all AI usage completely?

Not entirely. AI help with grammar and style on existing verified text is okay with transparency. Total substitution of human thought is prohibited. Context determines permission.

Will AI-generated articles ever be allowed as standalone entries?

Currently no. Human oversight is required for every single article to ensure verifiability and prevent systemic bias or hallucination.