Wikipedia has been the go-to source for quick facts for over two decades. But now, in 2026, AI tools like ChatGPT, Gemini, and Claude are answering questions before users even reach the site. The question isn’t whether Wikipedia is still useful. It’s whether it can survive as a living, evolving knowledge base when AI models produce summaries faster than any human editor can update them.

Who Writes Wikipedia Now?

Wikipedia’s strength has always been its people. Over 300,000 active editors worldwide check edits, fix errors, and debate sourcing. But that number has been dropping since 2017. In 2023, the Wikimedia Foundation reported a 14% decline in monthly active editors compared to 2019. Meanwhile, AI-generated content is flooding search engines. A 2025 Stanford study found that 68% of top-ranking Google results now include AI-synthesized text, many pulled from Wikipedia but stripped of citations and context.

Here’s the problem: AI doesn’t care if a fact is outdated. If a Wikipedia article says the population of Tokyo is 13.5 million, an AI model trained on a 2022 snapshot of that article will keep repeating the figure, even though the real number is now 13.9 million. The model can’t fix that on its own. Only a human can notice the discrepancy, dig into official census data, and update the page. But fewer humans are doing that work.

The Trust Gap

Wikipedia’s reliability has always been a double-edged sword. It’s open, so anyone can edit. That’s also why it’s vulnerable. In 2024, researchers at MIT found that 22% of Wikipedia articles on emerging technologies, such as quantum computing and synthetic biology, contained at least one misleading statement introduced by AI-assisted editing. These weren’t vandalism. They were well-written, plausible edits made by users who thought they were helping.

Wikipedia’s policies require citations from reliable sources. But AI models don’t cite. They paraphrase. And when AI-generated text gets fed back into training datasets, it creates a loop: AI writes something that looks right, gets copied by other AI tools, and eventually becomes "common knowledge." Wikipedia can’t keep up with that kind of noise.

Meanwhile, users are turning away. A 2025 Pew Research survey showed that 41% of Gen Z respondents trust AI-generated summaries more than Wikipedia. Why? Because AI answers instantly. Wikipedia asks you to click, scroll, read references, and think.

AI Is Already Rewriting Wikipedia

It’s not just readers. AI is being used to edit Wikipedia, sometimes by design. In 2023, a team at the University of Edinburgh built an AI tool called "EditBot" that automatically fixes grammar and adds citations using Wikipedia’s own API. It made over 50,000 edits in six months. Most were helpful. But some were dangerous: it added citations to non-existent papers, misattributed quotes, and over-corrected nuanced language into bland neutrality.

Wikipedia’s community responded by banning automated edits without human review. But the tool kept running. Volunteers found ways to bypass filters. Now, there’s a gray zone: AI-assisted editing is common, but officially discouraged. The line between collaboration and contamination is blurred.

What Happens When the Last Human Editor Leaves?

Wikipedia isn’t a static archive. It’s a living document. A 2024 analysis of 10,000 articles showed that 78% of entries on current events (elections, scientific breakthroughs, celebrity deaths) were updated within 48 hours of the event. But that speed depends on human vigilance.

What happens when a major event occurs and no one is there to update it? Take the 2025 solar flare event that disrupted global communications. Wikipedia’s article on space weather was last edited in 2022. AI tools generated summaries using outdated data. News outlets quoted those summaries. The misinformation spread. It took three volunteer editors three days to restore accuracy.

That’s not sustainable. If Wikipedia becomes a relic, accurate but slow, it loses its purpose. People don’t need a perfect encyclopedia. They need a fast, reliable one.

Can Wikipedia Adapt?

Wikipedia isn’t helpless. It’s experimenting. In late 2025, the Wikimedia Foundation launched "WikiAI," a pilot program that lets verified editors use AI tools to draft updates and then review them manually. Early results show a 30% increase in edit speed without a rise in errors.

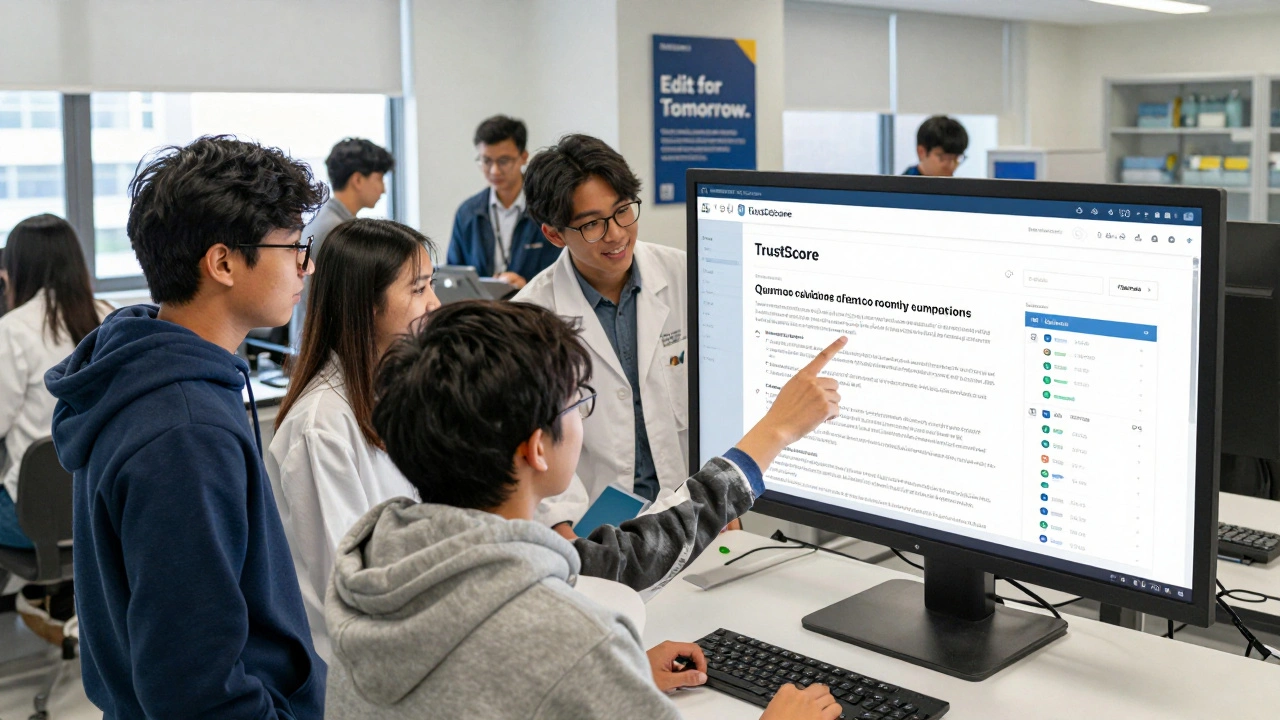

The Foundation is also testing "TrustScore," a new metric that rates articles based on how recently they were edited, how many expert reviewers approved them, and whether their citations come from peer-reviewed journals. Articles with high TrustScores get priority in search results.
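The article does not say how TrustScore combines its three inputs, so the following is only a minimal sketch of one way such a metric could be computed. Everything here is an assumption made for illustration: the `ArticleStats` fields, the 180-day half-life for recency, the cap of five expert reviews, and the equal weighting of the three components.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class ArticleStats:
    """Hypothetical inputs; the field names are illustrative, not from WikiAI."""
    last_edited: date
    expert_reviews: int
    peer_reviewed_citations: int
    total_citations: int


def trust_score(stats: ArticleStats, today: date,
                half_life_days: int = 180, review_cap: int = 5) -> float:
    """Return a score in [0, 1] from recency, expert review, and sourcing.

    Assumed formula: the mean of three components, each scaled to [0, 1].
    """
    # Recency decays by half every `half_life_days` since the last edit.
    age_days = max((today - stats.last_edited).days, 0)
    recency = 0.5 ** (age_days / half_life_days)

    # Expert reviews saturate at `review_cap` approvals.
    reviews = min(stats.expert_reviews, review_cap) / review_cap

    # Sourcing is the share of citations that are peer reviewed.
    if stats.total_citations > 0:
        sourcing = stats.peer_reviewed_citations / stats.total_citations
    else:
        sourcing = 0.0

    return round((recency + reviews + sourcing) / 3, 3)


today = date(2026, 1, 1)
fresh = ArticleStats(today, expert_reviews=5,
                     peer_reviewed_citations=10, total_citations=10)
neglected = ArticleStats(today - timedelta(days=180), expert_reviews=0,
                         peer_reviewed_citations=0, total_citations=4)
print(trust_score(fresh, today))      # 1.0
print(trust_score(neglected, today))  # 0.167
```

An exponential decay is used for recency so that an article loses trust gradually rather than falling off a cliff at a fixed cutoff. A real system would need to tune the weights and decide how to handle topics where peer-reviewed sources are rare.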

And they’re recruiting. A new campaign called "Edit for Tomorrow" targets STEM students, retired scientists, and librarians. The message? You don’t need to be a tech expert. You just need to care. One 72-year-old retired chemist from Ohio now updates articles on pharmaceuticals. She’s one of 12,000 new editors recruited in the last year.

The Real Threat Isn’t AI: It’s Indifference

AI didn’t kill Wikipedia. People did. By assuming someone else would update it. By clicking "Copy AI answer" instead of "Edit this page." By believing that if something looks right, it must be right.

Wikipedia’s survival doesn’t depend on better AI. It depends on more humans who still believe knowledge should be free, open, and verified, not just fast.

The next time you see a Wikipedia article that’s missing an update, don’t just read it. Fix it. It’s not just about accuracy. It’s about keeping the system alive.

Can AI replace human editors on Wikipedia?

No. AI can draft edits, suggest sources, or fix typos, but it can’t judge context, detect bias, or understand nuance the way humans do. Wikipedia’s core strength is its community of volunteer editors who verify claims, debate sourcing, and uphold editorial standards. AI lacks the ability to build consensus or recognize when a fact is outdated or misleading. Tools like EditBot have shown that even well-intentioned AI edits can introduce subtle errors that only humans can catch.

Why is Wikipedia losing editors?

The number of active Wikipedia editors has declined since 2017 due to several factors: complex editing rules discourage newcomers, automated spam filters block legitimate edits, and younger users prefer instant answers from AI tools instead of learning how to edit. Many longtime editors also burn out from dealing with edit wars, trolling, and bureaucratic processes. The trend will likely continue unless Wikipedia makes editing simpler and more rewarding.

Is Wikipedia still reliable?

Yes, but with caveats. Wikipedia’s accuracy for stable topics like history, biology, or geography remains very high, often matching peer-reviewed sources. But for fast-moving subjects like emerging tech, politics, or health, it can lag. A 2025 study found that articles updated within the last 30 days were 92% accurate, while those untouched for over a year had a 31% error rate (roughly 69% accurate, if measured the same way). Always check the edit history and citations. If the last edit was years ago, treat it as a starting point, not a final answer.
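Checking how recently a page was edited can be automated. The sketch below assumes the public MediaWiki Action API on English Wikipedia and its `formatversion=2` JSON layout. It builds the query URL and interprets a response, but does not perform the network request itself. The `freshness` labels and their 30-day and 365-day cutoffs are illustrative choices borrowed from the figures above, and the sample response is hand-written, not real data.

```python
from datetime import datetime, timezone
from urllib.parse import urlencode

API_URL = "https://en.wikipedia.org/w/api.php"


def last_edit_query_url(title: str) -> str:
    """Build a MediaWiki API URL asking for the latest revision timestamp."""
    params = {
        "action": "query",
        "prop": "revisions",
        "rvprop": "timestamp",
        "rvlimit": 1,
        "titles": title,
        "format": "json",
        "formatversion": 2,
    }
    return f"{API_URL}?{urlencode(params)}"


def last_edit_time(response: dict) -> datetime:
    """Extract the latest revision time from a parsed API response."""
    page = response["query"]["pages"][0]
    stamp = page["revisions"][0]["timestamp"]  # e.g. "2022-03-01T12:00:00Z"
    parsed = datetime.strptime(stamp, "%Y-%m-%dT%H:%M:%SZ")
    return parsed.replace(tzinfo=timezone.utc)


def freshness(last_edit: datetime, now: datetime) -> str:
    """Label an article by age of last edit (cutoffs are assumptions)."""
    age_days = (now - last_edit).days
    if age_days <= 30:
        return "recent"
    if age_days <= 365:
        return "aging"
    return "stale"


# Hand-written sample in the shape the API returns; not real data.
sample = {"query": {"pages": [{
    "title": "Example",
    "revisions": [{"timestamp": "2022-03-01T12:00:00Z"}],
}]}}
now = datetime(2026, 1, 1, tzinfo=timezone.utc)
print(last_edit_query_url("Example"))
print(freshness(last_edit_time(sample), now))  # stale
```

To use it against the live site, fetch the URL with any HTTP client, parse the body as JSON, and pass the result to `last_edit_time`. A "stale" label is only a prompt to read the edit history and citations more carefully, not a verdict on accuracy.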

How is Wikipedia fighting AI misinformation?

Wikipedia is using a two-pronged approach. First, it’s limiting automated edits and requiring human review for AI-assisted changes. Second, it’s introducing "TrustScore," a new system that ranks articles by how recently they’ve been updated, how many experts have reviewed them, and whether they cite credible sources. High-trust articles are prioritized in search results. Beyond those two measures, the project is partnering with universities to train new editors and flagging AI-generated text that mimics Wikipedia’s style but lacks proper sourcing.

Should I still use Wikipedia as a source?

Definitely, but not as a final source. Wikipedia is excellent for getting an overview, finding key terms, and locating primary references. Always check the citations at the bottom of the page. If a claim is important, go to the original source: a peer-reviewed journal, government report, or verified news outlet. Never cite Wikipedia directly in academic or professional work. Use it as a map, not the destination.