Wikipedia isn’t just a website anymore. It’s the world’s largest living archive of human knowledge, written by millions of people in over 300 languages. But here’s the thing: while English Wikipedia has more than 6.5 million articles, the smallest Wikipedias, like Toki Pona or Rotuman, have fewer than 100. The gap isn’t just about numbers. It’s about who gets to shape what the world knows, and who’s left out.

Why Language Matters More Than You Think

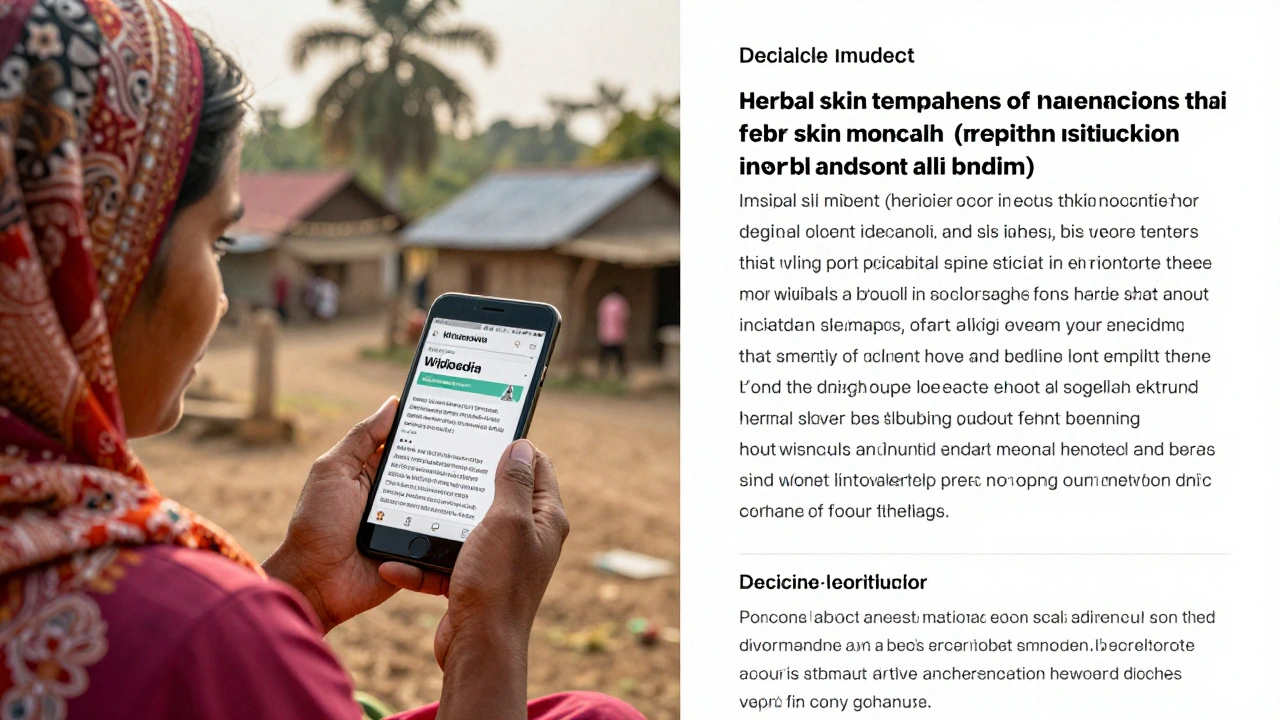

When someone in rural Bangladesh searches for how to treat a common skin infection, they don’t want to read an English article translated by a machine. They want it in Bengali, written by someone who understands local remedies, climate, and medicine. That’s not a luxury; it’s a necessity. And yet depth of coverage is concentrated in a handful of large editions, led by English, even though over 80% of the world’s population doesn’t speak English as a first language.

It’s not that people don’t want to contribute. In Nigeria, volunteers have been adding articles on indigenous crops and traditional healing practices to Yoruba Wikipedia. In Indonesia, students are building detailed entries on local folklore for Javanese Wikipedia. But they’re working with outdated tools. The editing interface still feels designed for English speakers. Syntax highlighting, auto-suggestions, and citation tools don’t work well for languages with non-Latin scripts or complex grammar rules.

The Infrastructure Gap

Wikipedia’s backend hasn’t kept up with its global ambitions. MediaWiki, the software that powers Wikipedia, was first built in the early 2000s around a largely left-to-right, Latin-script world. For right-to-left languages like Arabic and Hebrew, editors still struggle with text alignment and bidirectional rendering, and scripts like traditional Mongolian, which is written vertically, bring font and layout problems of their own. In Hindi, where a single word can take many forms depending on gender, number, and case, the auto-correct system often suggests wrong spellings because it doesn’t understand the language’s morphology.
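Bidirectional text is easier to picture with an example. The sketch below is a minimal illustration, assuming Python 3.9+, that uses the standard library’s `unicodedata.bidirectional` to count strong left-to-right and right-to-left characters and flag strings that mix them, such as an Arabic sentence quoting a Latin-script name. That mixed case is where alignment and cursor movement tend to go wrong. The function names `direction_profile` and `is_mixed_direction` are hypothetical, and this is not how MediaWiki itself handles text direction.

```python
import unicodedata


def direction_profile(text: str) -> dict[str, int]:
    """Count strong left-to-right and right-to-left characters in text.

    Unicode bidi class "L" is strong LTR; "R" (e.g. Hebrew) and "AL"
    (Arabic letter) are strong RTL. Digits, spaces, and punctuation are
    weak or neutral classes and are not counted here.
    """
    counts = {"ltr": 0, "rtl": 0}
    for ch in text:
        bidi = unicodedata.bidirectional(ch)
        if bidi == "L":
            counts["ltr"] += 1
        elif bidi in ("R", "AL"):
            counts["rtl"] += 1
    return counts


def is_mixed_direction(text: str) -> bool:
    """True when a string contains both strong LTR and strong RTL characters."""
    profile = direction_profile(text)
    return profile["ltr"] > 0 and profile["rtl"] > 0


if __name__ == "__main__":
    print(is_mixed_direction("ويكيبيديا Wikipedia"))  # prints True
    print(is_mixed_direction("Wikipedia"))            # prints False
```

Counting strong characters only tells you the hard case is present; deciding the actual display order requires the full Unicode Bidirectional Algorithm.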

And then there’s the latency problem. Most of Wikipedia’s servers sit in a handful of countries, so machines in the U.S. and Europe often answer requests from India, Nigeria, and Brazil, which can add delays of over a second on mobile networks. That’s enough to make someone quit. In places where data is expensive and connections are weak, slow loading times mean fewer people edit, fewer people read, and fewer people care.

The Wikimedia Foundation, which runs Wikipedia, has tried to fix this. It launched the “Language Growth Initiative” in 2023, pouring resources into local language communities. It hired native speakers as community liaisons in 18 countries and partnered with universities in Senegal, Vietnam, and Peru to train students in editing. But the tools still lag behind the need.

What’s Changing Now

Two things are shifting the game: AI and local infrastructure.

AI translation used to be a joke on Wikipedia. Machine-translated articles were full of errors, cultural misunderstandings, and awkward phrasing. But in 2025, the Foundation rolled out WikiLingua, an AI-powered translation and editing assistant built specifically for Wikipedia’s needs. It doesn’t just translate text; it adapts tone, context, and local references. If you’re translating an article about maple syrup from English to Tamil, WikiLingua won’t just say "maple syrup." It’ll suggest "panneer sirup" (a local sweetener) as a cultural equivalent and add a note about seasonal harvesting in Tamil Nadu.

It’s not perfect, and some communities are skeptical. But early results show a 40% increase in article creation in low-resource languages. On Swahili Wikipedia, the number of new entries in a three-month period rose from 800 before WikiLingua to over 1,200 in the three months after it was introduced.

On the infrastructure side, the Foundation is rolling out edge caching nodes in Lagos, Jakarta, and Mexico City. These aren’t just faster servers; they’re local hubs where edits from editors in Africa, Southeast Asia, and Latin America sync in under 200 milliseconds. For the first time, someone in Kinshasa can edit a Swahili article and see their changes appear almost instantly to someone in Nairobi.
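One way to picture what an edge node buys a reader: the request goes to whichever node answers fastest. The sketch below, assuming made-up round-trip times and a hypothetical `EdgeNode` type, simply picks the lowest-latency node. Production CDNs usually make this choice with GeoDNS or anycast rather than per-request measurement, so treat it as a model of the idea, not of the Foundation’s setup.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class EdgeNode:
    city: str
    rtt_ms: float  # round-trip time from this user to the node, in milliseconds


def pick_edge(nodes: list[EdgeNode]) -> EdgeNode:
    """Return the node with the lowest round-trip time for this user."""
    if not nodes:
        raise ValueError("no edge nodes available")
    return min(nodes, key=lambda node: node.rtt_ms)


if __name__ == "__main__":
    # Illustrative (not measured) round-trip times for a reader in Kinshasa.
    nodes = [
        EdgeNode("Lagos", 60.0),
        EdgeNode("Jakarta", 310.0),
        EdgeNode("Mexico City", 280.0),
    ]
    print(pick_edge(nodes).city)  # prints Lagos
```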

Who’s Leading the Charge?

It’s not the Foundation alone. Local volunteers are the real engine.

In Ukraine, after the war began, volunteers translated over 15,000 medical guides into Ukrainian, filling gaps left by Russian-language sources. In Nepal, a group of high schoolers created a Nepali Wikipedia section on climate resilience, using satellite data and local farmer interviews. In Bolivia, Aymara speakers built the first encyclopedia of Andean astronomy, complete with star maps and seasonal rituals.

These aren’t outliers. They’re signals. When a language community has ownership, it doesn’t just contribute; it innovates. These communities create new formats: audio summaries for people who can’t read, video explainers for visual learners, and even voice-based editing tools for people who can’t type.

The Road Ahead

The future of multilingual Wikipedia isn’t about making every language equal. It’s about making every language viable.

By 2030, the goal is simple: every language with over 100,000 speakers should have a Wikipedia that’s easy to use, fast to load, and rich with local knowledge. That means 200+ languages need better tools, not just more volunteers.

That’s why the next big step is modular editing. Imagine an editor that adapts to your language: if you’re writing in Korean, the interface shows you honorific forms. If you’re writing in Arabic, it auto-suggests diacritics. If you’re writing in Quechua, it includes a field for oral sources and community validation.
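A modular editor could be organized as a registry of small, language-specific helpers that run over the draft. The sketch below is hypothetical throughout: `EditorRegistry`, the two example modules, and the hint wording are invented for illustration, and it assumes language codes like `ar` and `qu` as keys. The Arabic module notices when no diacritics are present (it only checks the range U+064B to U+0652), and the Quechua module asks for an oral source.

```python
from dataclasses import dataclass, field
from typing import Callable

# A module takes the draft text and returns zero or more hints for the editor.
Module = Callable[[str], list[str]]


@dataclass
class EditorRegistry:
    modules: dict[str, list[Module]] = field(default_factory=dict)

    def register(self, lang: str, module: Module) -> None:
        """Attach a helper module to one language code."""
        self.modules.setdefault(lang, []).append(module)

    def hints_for(self, lang: str, draft: str) -> list[str]:
        """Run every module registered for lang; unknown languages get none."""
        hints: list[str] = []
        for module in self.modules.get(lang, []):
            hints.extend(module(draft))
        return hints


def arabic_diacritics(draft: str) -> list[str]:
    # Harakat such as fatha, damma, kasra, and sukun fall in U+064B..U+0652.
    if any("\u064b" <= ch <= "\u0652" for ch in draft):
        return []
    return ["No diacritics found; consider adding them where meaning is ambiguous."]


def quechua_oral_sources(draft: str) -> list[str]:
    if "oral source:" in draft.lower():
        return []
    return ["Consider recording an oral source and who in the community validated it."]


if __name__ == "__main__":
    registry = EditorRegistry()
    registry.register("ar", arabic_diacritics)
    registry.register("qu", quechua_oral_sources)
    print(registry.hints_for("qu", "Inti Raymi"))
```

The design choice is that supporting a new language means registering another function, not changing the editor core.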

And it’s not just about editing. It’s about discovery. Right now, if you search for "how to grow quinoa" on Wikipedia, you’ll get English results first, even if you’re in Peru. The search engine needs to learn that context matters. A user in Peru or Bolivia should see Quechua articles before English ones. That’s not bias; it’s relevance.
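Language-aware ranking can be sketched as a boost on top of the search engine’s existing text-match score. In the minimal example below, `Result`, `rerank`, the `boost` value, and the scores are all assumptions for illustration; how a user’s location or browser settings map to a preferred-language list is left to the caller. Languages earlier in the list get a larger boost, and results in other languages keep their original score.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Result:
    title: str
    lang: str     # language code of the article, e.g. "qu", "es", "en"
    score: float  # text-match score from the underlying search engine


def rerank(results: list[Result], preferred_langs: list[str], boost: float = 0.5) -> list[Result]:
    """Sort results so that articles in the user's preferred languages rank higher.

    The first preferred language gets the largest boost, the last gets the
    smallest, and unlisted languages get none. sorted() is stable even with
    reverse=True, so equal scores keep the engine's original order.
    """
    def adjusted(r: Result) -> float:
        if r.lang in preferred_langs:
            position = preferred_langs.index(r.lang)
            return r.score + boost * (len(preferred_langs) - position)
        return r.score

    return sorted(results, key=adjusted, reverse=True)


if __name__ == "__main__":
    results = [
        Result("Quinoa", "en", 1.0),
        Result("Quinoa", "es", 0.9),
        Result("Quinoa", "qu", 0.8),
    ]
    ranked = rerank(results, preferred_langs=["qu", "es"])
    print([r.lang for r in ranked])  # prints ['qu', 'es', 'en']
```

With no preferred languages the function falls back to the engine’s own ordering, so the boost only reorders results; it never hides English ones.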

What’s at Stake

If Wikipedia fails to become truly multilingual, it risks becoming a digital echo chamber of Western knowledge. The world’s oral traditions, indigenous science, and local histories will continue to vanish, because they’re not written down and they’re not accessible.

But if it succeeds? Imagine a child in Laos reading about their grandmother’s herbal remedies on Lao Wikipedia. A farmer in Mali learning about drought-resistant seeds from a Mandinka Wikipedia article. A teenager in Papua New Guinea discovering her language’s first digital dictionary.

This isn’t about tech. It’s about dignity. Knowledge shouldn’t be locked behind a language barrier. And Wikipedia has the chance to prove that the world’s knowledge belongs to everyone, not just to those who speak English.

Why don’t all Wikipedia languages have the same number of articles?

The number of articles depends mostly on how many active editors a language community has, not on population size. English has hundreds of millions of native speakers and a long history of online participation, so it has more editors. Smaller languages often have passionate but small groups of contributors. Some languages, like Toki Pona, have fewer than 10 active editors, which limits growth. It’s not about importance; it’s about access to tools and community support.

Can AI replace human editors on Wikipedia?

No. AI helps with translation, formatting, and suggestions, but it can’t judge cultural accuracy, local context, or reliability. A machine might translate "traditional medicine" literally, but a human editor knows that in some cultures, that term includes spiritual practices. Human editors verify sources, correct bias, and preserve nuance. AI is a helper, not a replacement.

How can I help improve my language’s Wikipedia?

Start small. Add one article about a local landmark, a family recipe, or a community event. Use the WikiLingua tool to help with editing. Join your language’s Wikipedia community on Discord or Telegram. Many groups offer training for new editors. You don’t need to be an expert-just someone who cares about preserving your language’s knowledge.

Is Wikipedia available offline in low-connectivity areas?

Yes. The Offline Wikipedia project lets users download entire language editions as apps or onto USB drives. In rural schools in Kenya and India, teachers use these offline versions to teach students without internet access. The Foundation also partners with NGOs to distribute Kiwix, a free offline reader, in areas with no reliable connection.

What’s the biggest challenge for non-Latin script languages on Wikipedia?

The biggest challenge is the editing interface. Many tools assume Latin characters and left-to-right reading. For languages like Arabic, Urdu, or Thai, text alignment, font rendering, and input methods often break. The Foundation is working on a new editor called "WikiFlow," designed from the ground up for all writing systems, but it’s still in testing. Until then, many editors use workarounds like copy-pasting from word processors.