Wikipedia isn’t just a collection of articles. Under the hood, it’s a living, breathing knowledge graph - a web of facts connected by relationships, not just words. Most people think of Wikipedia as a place to look up who invented the telephone or when the Berlin Wall fell. But behind every article, there’s a machine-readable structure quietly powering research, AI, and academic tools you’ve probably never noticed. That structure? It’s called Wikidata.

What Wikidata Actually Is

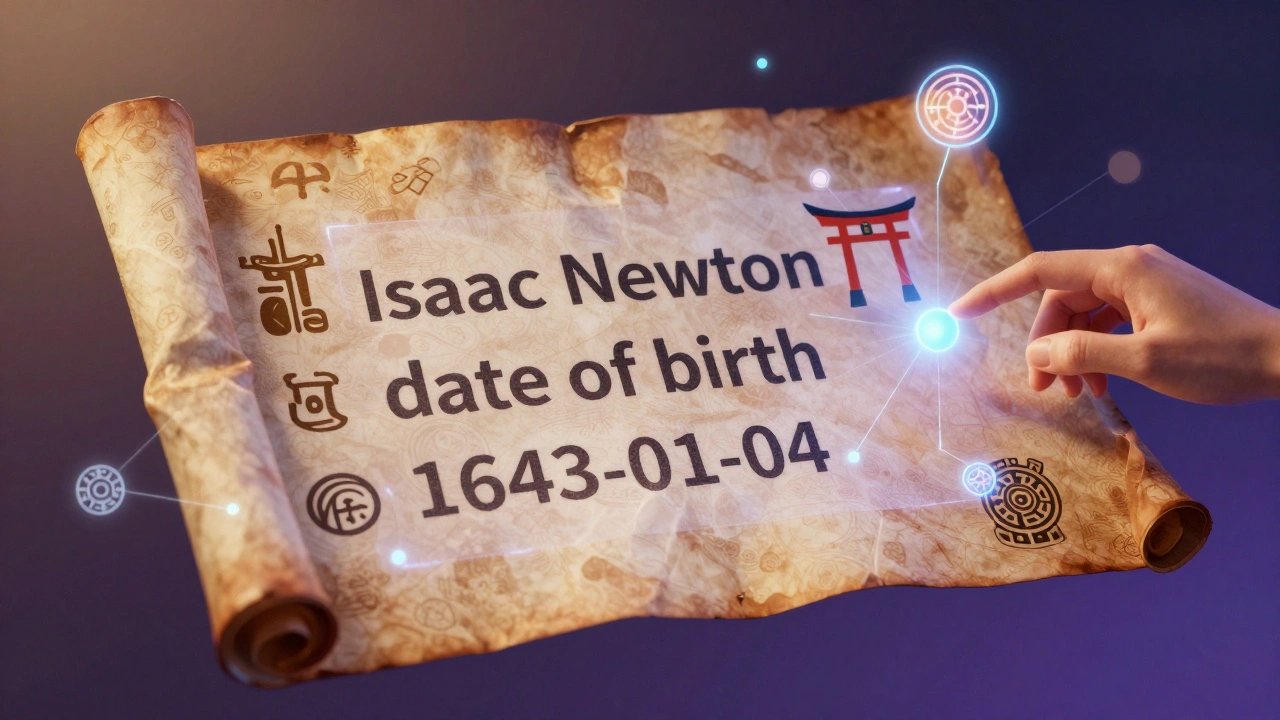

Wikidata is Wikipedia’s silent partner. While Wikipedia gives you readable text, Wikidata gives you structured data. Think of it like a giant spreadsheet where every row is a thing - a person, a planet, a disease, a book - and every column is a fact about that thing. Instead of saying "Isaac Newton was born in 1643," Wikidata stores it as:

- Entity: Isaac Newton

- Property: date of birth

- Value: 1643-01-04

This format isn’t just for computers to read - it’s designed to be linked. That means if you search for "Isaac Newton" in Wikidata, you’ll also find links to his place of birth, his academic advisors, his published works, and even the museums that hold his personal items. These connections turn isolated facts into networks. And that’s what makes it a knowledge graph.
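
To make the entity-property-value idea concrete, here is a minimal sketch in Python. It is not how Wikidata stores data internally; the `Claim` class and `facts_about` helper are hypothetical names invented for this illustration, and the place-of-birth claim is added only to show a second fact hanging off the same entity.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """One statement: an entity, a property, and a value (hypothetical model)."""
    entity: str  # what the fact is about
    prop: str    # which kind of fact it is
    value: str   # the fact itself (a literal or the name of another entity)


# The Isaac Newton example from the text, plus one extra illustrative claim.
claims = [
    Claim("Isaac Newton", "date of birth", "1643-01-04"),
    Claim("Isaac Newton", "place of birth", "Woolsthorpe-by-Colsterworth"),
]


def facts_about(entity: str, store: list[Claim]) -> dict[str, str]:
    """Collect every property/value pair recorded for one entity."""
    return {c.prop: c.value for c in store if c.entity == entity}


print(facts_about("Isaac Newton", claims))
```

Because a value can itself be the name of another entity, a list of claims like this already forms a small graph.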

Wikidata isn’t built by bots alone. Much of it is edited by humans, including many of the same volunteers who write Wikipedia articles, with automated bots handling a large share of routine edits. Instead of writing paragraphs, contributors add structured claims. Claims are expected to cite reliable sources, and every edit is open to community review. As of 2026, Wikidata holds over 100 million items, each with an average of 15 properties attached. That’s more than a billion facts.

How Scholars Use It

Academic researchers don’t just cite Wikipedia - they use Wikidata as a data source. A historian studying 19th-century scientists doesn’t have to manually collect birth dates, affiliations, and publication records from 500 different archives. They can query Wikidata. Using SPARQL, a query language for linked data, they can ask questions like:

- "Show me all female chemists who published before 1900 and were affiliated with German universities."

- "Which Nobel laureates studied under the same advisor as Marie Curie?"
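
As a rough sketch of what such a question looks like in practice, the Python snippet below builds a request for the public Wikidata Query Service. It is a simplified take on the second question: it only looks for people who share a doctoral advisor with Marie Curie and leaves out the Nobel laureate filter. The identifiers (`Q7186` for Marie Curie, `P184` for doctoral advisor) are assumptions worth double-checking on wikidata.org, and `build_request_url` is a hypothetical helper, not part of any library.

```python
from urllib.parse import urlencode

# Public SPARQL endpoint of the Wikidata Query Service.
ENDPOINT = "https://query.wikidata.org/sparql"

# Assumed identifiers: Q7186 = Marie Curie, P184 = doctoral advisor.
# Simplification: the Nobel laureate condition from the text is omitted.
QUERY = """
SELECT ?person ?personLabel ?advisorLabel WHERE {
  wd:Q7186 wdt:P184 ?advisor .
  ?person wdt:P184 ?advisor .
  FILTER(?person != wd:Q7186)
  SERVICE wikibase:label { bd:serviceParam wikibase:language "en". }
}
"""


def build_request_url(query: str, endpoint: str = ENDPOINT) -> str:
    """Return a GET URL that asks the endpoint for JSON results."""
    params = urlencode({"query": query.strip(), "format": "json"})
    return f"{endpoint}?{params}"


url = build_request_url(QUERY)
print(url[:60])
```

The snippet stops at building the URL so it runs without a network connection. Actually fetching it would need an HTTP client and a descriptive User-Agent header, and the same query text can also be pasted into the query service's web interface.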

One 2023 study from the University of Oxford used Wikidata to trace the academic lineage of 12,000 physicists. They found patterns in mentorship networks that had never been mapped before - all because Wikidata linked advisor-student relationships across 150 years of data.

Medical researchers rely on Wikidata too. The World Health Organization’s disease classifications are synced with Wikidata. When a team in Brazil needed to track the spread of a rare tropical virus, they didn’t scrape websites - they pulled direct data from Wikidata’s disease ontology, which included transmission methods, geographic distribution, and associated symptoms. That data was then fed into a public health dashboard used by local clinics.

Even literature scholars use it. A team at Stanford built a tool that maps literary influences by linking authors to their cited works, publishers, and translation history - all pulled from Wikidata. They discovered that a 19th-century Polish poet was cited more often in Spanish translations than in English, a pattern no print-based archive had revealed.

Why This Matters More Than You Think

Most knowledge bases - like PubMed, JSTOR, or Google Scholar - keep data locked in their own systems. They don’t talk to each other. Wikidata does. It’s one of the few public, open, and linked databases that connect science, history, art, and culture under one roof.

When a researcher in Tokyo uses Wikidata to find data on Japanese war memorials, and a historian in Berlin uses the same data to study postwar memory, they’re both accessing the same facts. No duplication. No conflicting records. Just one source that updates in real time.

It’s also why search engines and AI systems can use Wikidata as a grounding source. When you ask, "Who directed Inception?", a system grounded this way doesn’t have to rely on a random webpage - it can draw on Wikidata’s structured claim: "Christopher Nolan, director, Inception." That’s one reason such answers are often accurate, even when the web is full of noise.

How It’s Different from Traditional Databases

Traditional academic databases are rigid. You need to know exactly what you’re looking for. If you want to find all papers on climate change in the 1990s, you type in keywords, filter by journal, and pray the database didn’t miss something.

Wikidata works differently. It’s built on relationships. If you know one fact - say, "Albert Einstein worked at the Swiss Patent Office" - Wikidata doesn’t stop there. It links that to his salary, the building’s address, the year he started, and even the names of his coworkers. You can follow those connections outward like a spiderweb.
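
Following connections outward is, at heart, a graph traversal. The sketch below walks a tiny hand-written set of triples breadth-first. It is a toy illustration, not Wikidata's implementation: the triples use plain labels instead of real identifiers, and `neighbours` and `explore` are hypothetical helper names.

```python
from collections import deque

# Toy triples written with plain labels; real Wikidata uses Q/P identifiers.
TRIPLES = [
    ("Albert Einstein", "employer", "Swiss Patent Office"),
    ("Albert Einstein", "place of birth", "Ulm"),
    ("Swiss Patent Office", "located in", "Bern"),
    ("Bern", "country", "Switzerland"),
    ("Ulm", "country", "Germany"),
]


def neighbours(entity, triples):
    """Return (property, target) pairs for claims whose subject is entity."""
    return [(p, o) for s, p, o in triples if s == entity]


def explore(start, triples, max_hops):
    """Breadth-first walk: map each reachable entity to its hop count."""
    hops = {start: 0}
    queue = deque([start])
    while queue:
        current = queue.popleft()
        if hops[current] == max_hops:
            continue  # do not expand beyond the hop limit
        for _, target in neighbours(current, triples):
            if target not in hops:
                hops[target] = hops[current] + 1
                queue.append(target)
    return hops


print(explore("Albert Einstein", TRIPLES, max_hops=2))
```

With a limit of two hops, the walk reaches the patent office, Ulm, Bern, and Germany, but stops before Switzerland, which sits three hops away.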

Here’s a simple comparison:

| Feature | Wikidata | Traditional Database (e.g., JSTOR) |
|---|---|---|
| Data Format | Structured triples (subject-predicate-object) | Unstructured text or metadata fields |
| Linking | Automatically connects to related entities | Links only within same system |
| Updates | Real-time, community-driven | Monthly or yearly updates |
| Access | Open API, free for all | Often paywalled or institutional |
| Scope | Global, cross-disciplinary | Subject-specific (e.g., medicine, history) |

That flexibility makes Wikidata unusually powerful. You can combine data from biology, politics, and art in one query. Few other databases let you do that.

Real-World Impact: Beyond the Lab

It’s not just about research papers. Wikidata powers tools used by libraries, museums, and even public schools. The British Library uses it to tag historical documents. The Smithsonian links its artifact collections to Wikidata so anyone can trace an object’s origin across centuries.

One project in Wisconsin used Wikidata to build a digital map of Native American tribal leaders who signed treaties with the U.S. government. By linking names, dates, locations, and treaty numbers, students were able to visualize patterns of displacement that textbooks had flattened into single paragraphs.

Even Wikipedia’s own mobile app uses Wikidata to power its "See Also" suggestions. When you read about the Apollo 11 mission, you don’t just get links to other articles - you get related spacecraft, astronauts’ birthplaces, and launch sites, all pulled from structured data.

Challenges and Limitations

It’s not perfect. Wikidata still has gaps. Not every scientist has a profile. Many historical records from non-Western cultures are underrepresented. And because it’s edited by volunteers, some entries are incomplete or outdated.

But the community is fixing this. Academic institutions now run "edit-a-thons" where professors and students spend a day adding verified data to Wikidata. The University of California system has trained over 800 students to contribute to scholarly entries. Libraries in Canada and Germany have integrated Wikidata into their catalog systems.

And unlike commercial databases, Wikidata doesn’t charge for access. No institutional login. No paywall. Just open data - available to anyone with an internet connection.

What’s Next

The next frontier is automation. Researchers are training AI models to scan scholarly papers and automatically extract facts to add to Wikidata. Such tools can read a research paper’s bibliography and suggest new claims - like linking a newly discovered species to its habitat, author, and collection location.

Soon, your university library might not just have a catalog - it might have a live feed from Wikidata, updating in real time as new studies are published. Imagine a student in Nairobi accessing the same data on African biodiversity as one in Oslo - because both are using the same open, reliable, linked source.

Wikipedia was meant to be a free encyclopedia. Wikidata made it something bigger: a global knowledge infrastructure. And for scholars, it’s already changing how research is done - one fact at a time.

Is Wikidata the same as Wikipedia?

No. Wikipedia is for human-readable articles. Wikidata is for machine-readable facts. You read about Napoleon on Wikipedia. You query his birth date, military campaigns, and heirs in Wikidata. They work together - but serve different purposes.

Can I use Wikidata for my research paper?

Yes - and many peer-reviewed journals now accept it as a valid data source. You can cite Wikidata items directly using their unique IDs (like Q42 for Douglas Adams). Some journals even require it for reproducibility. Always link to the specific item and include the date you accessed it.
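
If you cite items often, a small helper keeps the ID, link, and access date consistent. The sketch below is one possible layout, not a style required by any journal; `cite_item` is a hypothetical function, and the access date in the example is made up.

```python
from datetime import date


def cite_item(qid: str, label: str, accessed: date) -> str:
    """Format a simple citation for one Wikidata item (assumed layout)."""
    # Item IDs are the letter Q followed by digits, e.g. Q42.
    if not (qid.startswith("Q") and qid[1:].isdigit()):
        raise ValueError(f"not a Wikidata item ID: {qid!r}")
    url = f"https://www.wikidata.org/wiki/{qid}"
    return f"{label} ({qid}). Wikidata. {url} (accessed {accessed.isoformat()})."


print(cite_item("Q42", "Douglas Adams", date(2026, 1, 15)))
```

Check your target journal's guidelines before settling on a format, since citation styles differ.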

How accurate is Wikidata?

It’s generally accurate for well-covered topics like scientists, historical events, and major cultural works - because claims there tend to be backed by citations. For niche or underrepresented subjects, gaps exist. But the system is designed to improve over time, and academic contributions are helping to close those gaps.

Do I need to know programming to use Wikidata?

No. You can browse and search Wikidata like a regular website. But if you want to do complex queries - like comparing data across centuries - you’ll need to use SPARQL, a query language for graph-structured data like Wikidata’s. Many universities now offer free workshops on SPARQL for researchers.

Is Wikidata used outside academia?

Absolutely. Google, Apple, and Bing use it to power knowledge panels. Museums and libraries use it to connect artifacts to global records. Even weather apps use it to link climate stations to their geographic coordinates. It’s one of the most widely used public data sources on the internet.