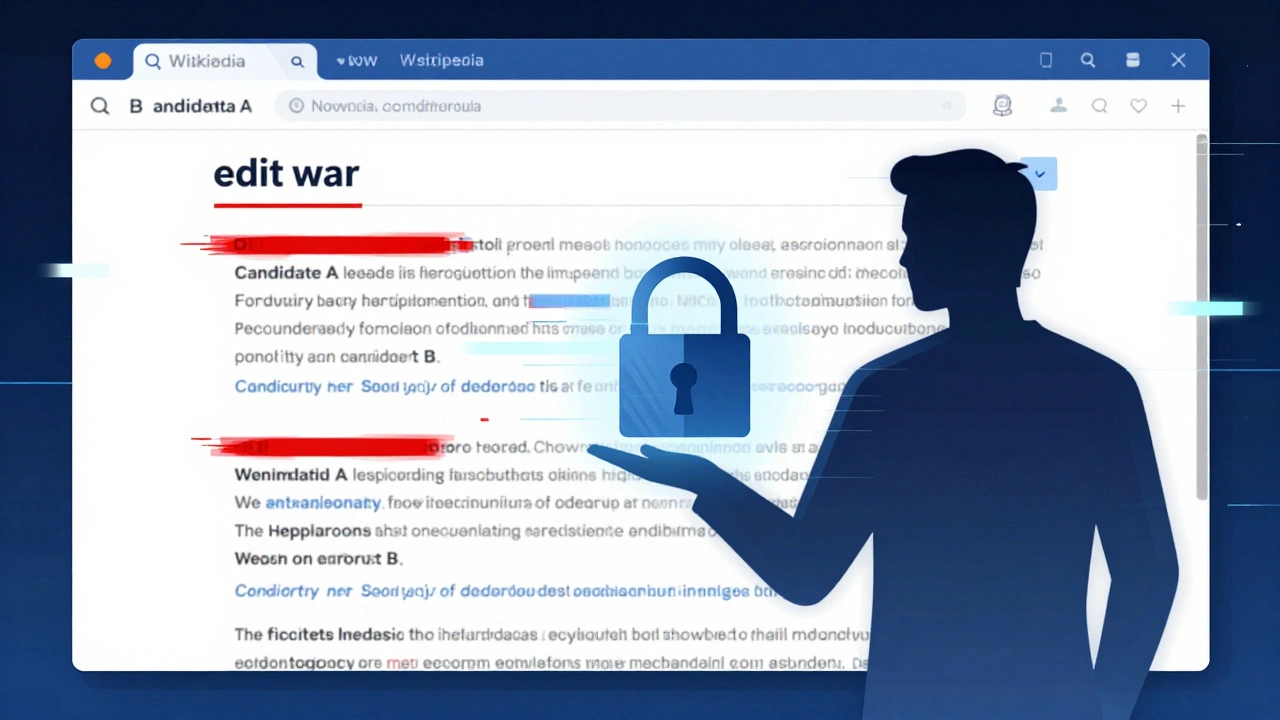

Imagine opening an article about a major election or a heated cultural debate, only to find the text has changed three times in the last hour. One version says Candidate A is leading; another claims Candidate B has secured victory. The middle paragraph oscillates between two opposing viewpoints until the page is locked by administrators. This isn’t just bad editing; it’s a symptom of how Wikipedia works. Wikipedia is a free, web-based, collaborative encyclopedia that allows anyone to create and edit articles. When high-stakes topics like elections, geopolitics, and culture wars hit the platform, the underlying mechanics of consensus-building often fracture under pressure.

We don't usually think of an encyclopedia as a battlefield, but for those who watch these pages closely, it can feel like one. The core issue isn't malice; it's structure. Wikipedia relies on volunteer editors working asynchronously across time zones and ideologies. When a topic becomes politically charged, that system strains. Understanding these conflict patterns helps us see not just what goes wrong, but why it happens and, more importantly, how the community tries to fix it.

The Mechanics of Disagreement

To understand the chaos, you have to look at the rules. Wikipedia operates on five foundational pillars, one of which is Neutral Point of View (NPOV), the policy requiring all content to be written from a neutral perspective, representing significant viewpoints fairly without editorial bias. In theory, this means if Group X believes Y, and Group Z believes Q, both views get space, provided they are backed by reliable sources. In practice, deciding which sources count as "reliable" is where the fighting starts.

When tensions rise, you often see a phenomenon called edit warring, a disruptive behavior in which editors repeatedly revert changes made by others to impose their preferred version of an article. Editor A adds a sentence supporting Viewpoint 1. Editor B removes it because they think it violates NPOV. Editor A puts it back. Editor B deletes it again. Before long, other editors or automated tools flag the activity, and administrators step in, often blocking accounts under the three-revert rule, which bars an editor from making more than three reverts to a single page within 24 hours. It’s exhausting, unproductive, and leaves the reader confused. These cycles rarely resolve the actual disagreement; they just punish the participants.
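The revert cycle described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not how Wikipedia's own tooling works: the `Revision` record, the `count_reverts` and `editors_over_limit` helpers, and the idea of comparing content hashes are all hypothetical, and the real three-revert rule has exemptions (for example, reverting obvious vandalism) that this sketch ignores.

```python
from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Revision:
    editor: str
    timestamp: datetime
    content_hash: str  # assumed: a digest of the page text after this edit


def count_reverts(revisions, window=timedelta(hours=24)):
    """Count, per editor, edits that restore an earlier version of the page.

    `revisions` must be ordered oldest to newest. An edit counts as a revert
    when its hash matches a version seen earlier and differs from the
    version immediately before it. Only reverts within `window` of the
    newest revision are counted.
    """
    if not revisions:
        return Counter()
    cutoff = revisions[-1].timestamp - window
    seen = set()
    previous_hash = None
    reverts = Counter()
    for rev in revisions:
        is_revert = rev.content_hash in seen and rev.content_hash != previous_hash
        if is_revert and rev.timestamp >= cutoff:
            reverts[rev.editor] += 1
        seen.add(rev.content_hash)
        previous_hash = rev.content_hash
    return reverts


def editors_over_limit(revisions, limit=3):
    """Return editors with more than `limit` reverts inside the window."""
    return sorted(e for e, n in count_reverts(revisions).items() if n > limit)


# Editor A keeps adding a sentence (v1); Editor B keeps restoring v0.
start = datetime(2024, 11, 5, 20, 0)
history = [Revision("Seed", start, "v0")]
for i in range(8):
    editor, digest = ("EditorA", "v1") if i % 2 == 0 else ("EditorB", "v0")
    history.append(Revision(editor, start + timedelta(minutes=5 * (i + 1)), digest))

print(editors_over_limit(history))  # → ['EditorB']
```

Editor A's first edit is new content rather than a revert, so A ends the sequence with three reverts while B reaches four. A mechanical count like this says nothing about who is right, which is why the disagreement itself still has to be settled through discussion.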

The real problem lies in verification. Wikipedia requires verifiability: claims must be supported by published, credible sources. But during breaking news events, those sources are scarce or contradictory. Editors rush to fill the void, often using low-quality blogs or partisan outlets because mainstream media hasn’t reported yet. This creates a fragile foundation that collapses once better information arrives.

Elections: The Speed Trap

Election coverage brings a unique set of challenges. Unlike historical events, elections happen in real time. As votes come in, editors want to update results immediately. But speed kills accuracy here. We’ve seen instances where premature declarations led to massive reverts and vandalism.

Consider the pattern around live result tracking. During tight races, different regions report at different speeds. An editor in one state might add a "Projected Winner" tag based on local exit polls, while an editor in another region argues it’s too early. The article swings back and forth. To manage this, the community developed strict guidelines for live event coverage: procedures designed to maintain stability and accuracy when documenting events that are still unfolding. These include waiting for official certification before declaring winners and using clear disclaimers about uncertainty.

Despite these rules, pressure remains immense. Political campaigns sometimes encourage supporters to edit articles, hoping to shape narratives quietly. The result is subtle bias rather than overt vandalism: adding favorable quotes, highlighting minor scandals, or burying negative information deep in footnotes. Detecting this requires experienced editors who know the difference between legitimate sourcing and strategic framing.

| Trigger Type | Description | Typical Resolution Strategy |
|---|---|---|
| Result Disputes | Conflicting reports on vote counts or projections | Lock page until official certification; cite major wire services such as AP and Reuters |
| Narrative Framing | Bias in lead paragraphs summarizing candidate performance | Talk page discussion focusing on tone analysis and source weighting |
| Vandalism Spikes | Fake results posted by trolls or bots | Anti-vandalism tools (e.g. ClueBot NG, Huggle) + temporary semi-protection |

Geopolitics: The Source War

If elections test speed, geopolitics tests neutrality. Conflicts involving nations with competing historical narratives, like borders in Eastern Europe, territorial claims in Asia, or Middle Eastern disputes, are perennial hotspots. Here, the battle isn’t just about facts; it’s about legitimacy.

Different countries have different standards for what constitutes a "reliable source." A newspaper considered authoritative in Country A might be viewed as propaganda in Country B. Editors from each side bring their own national perspectives, leading to stalemates. You’ll often see sections labeled "Disputed" where no agreement can be reached.

This is where verifiability, the principle that all material challenged or likely to be challenged needs a citation to a reliable source that supports it, becomes tricky. International organizations like the United Nations provide neutral ground, but their resolutions aren’t always accepted by all parties involved. Editors must navigate diplomatic language carefully, ensuring they reflect international law without appearing to take sides.

A common tactic used by skilled editors is seeking out academic journals over news outlets. Academic papers undergo peer review, offering a layer of scrutiny that reduces bias. However, access barriers limit who can use them. Free tools like Unpaywall, a browser extension that finds legal open-access versions of paywalled scholarly articles, help bridge this gap, allowing more editors to cite high-quality research. Still, the effort required slows down updates, leaving gaps that less rigorous sources try to fill.

Culture Wars: Identity and Tone

Culture war topics (gender identity, race relations, religious controversies) hit differently. They touch personal identities, making disagreements feel existential. On Wikipedia, this manifests as intense debates over terminology and inclusion.

Take the debate over pronouns in biographical articles. Some editors argue for strict adherence to self-identification, citing modern style guides. Others insist on using names and genders assigned at birth, referencing older records. Both sides believe they’re upholding truth. Neither wants to compromise because doing so feels like erasing someone’s existence.

These conflicts often revolve around notability, the set of criteria determining whether a subject warrants its own article based on significant coverage in independent reliable sources. Should every activist get a page? What defines "significant coverage"? Activist groups may push for visibility, arguing their work matters. Critics counter that notability thresholds prevent the site from becoming a directory of opinions rather than an encyclopedia.

Tone policing also plays a huge role. One editor might write, "Critics argue..." while another prefers, "Opponents claim..." The words seem similar, but connotations differ. Subtle shifts in phrasing can make a viewpoint sound reasonable or ridiculous. Experienced moderators spend hours dissecting these nuances, trying to find wording that satisfies everyone, or at least offends no one disproportionately.

Community Governance and Tools

How does Wikipedia handle all this? Through layers of community governance. There’s no central boss. Instead, trusted volunteers enforce policies through discussions, warnings, and blocks. Key tools include:

- Semi-Protection: Restricts editing to autoconfirmed users (on English Wikipedia, accounts at least four days old with at least ten edits), reducing casual vandalism.

- Full Protection: Only administrators can edit the page, used during extreme disputes.

- Talk Pages: Dedicated spaces for discussing changes before implementing them, fostering consensus.

- Unblock Requests: Formal appeals process for blocked users who believe they were treated unfairly.
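The protection levels in the list above amount to a small permission check, which can be sketched as follows. This is a simplified model, not MediaWiki's actual permission system: the `User` record, the `Protection` enum, and the `can_edit` helper are hypothetical names, and the four-day and ten-edit thresholds are assumptions based on English Wikipedia's usual autoconfirmed defaults.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from enum import Enum
from typing import Optional


class Protection(Enum):
    NONE = "none"
    SEMI = "semi"
    FULL = "full"


@dataclass
class User:
    registered: Optional[datetime]  # None models a logged-out editor
    edit_count: int = 0
    is_admin: bool = False


def is_autoconfirmed(user, now, min_age=timedelta(days=4), min_edits=10):
    """Assumed thresholds: account age and edit count must both be met."""
    if user.registered is None:
        return False
    return now - user.registered >= min_age and user.edit_count >= min_edits


def can_edit(user, protection, now):
    """Return True if `user` may edit a page at the given protection level."""
    if protection is Protection.FULL:
        return user.is_admin
    if protection is Protection.SEMI:
        return user.is_admin or is_autoconfirmed(user, now)
    return True  # unprotected pages are open to everyone


now = datetime(2024, 11, 5)
anonymous = User(registered=None)
newcomer = User(registered=now - timedelta(days=1), edit_count=3)
regular = User(registered=datetime(2020, 1, 1), edit_count=500)
admin = User(registered=datetime(2015, 1, 1), edit_count=9000, is_admin=True)

print(can_edit(anonymous, Protection.NONE, now))  # → True
print(can_edit(newcomer, Protection.SEMI, now))   # → False
print(can_edit(regular, Protection.SEMI, now))    # → True
print(can_edit(regular, Protection.FULL, now))    # → False
print(can_edit(admin, Protection.FULL, now))      # → True
```

Talk pages and appeals are left out on purpose: they are social processes, and no permission flag captures them.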

Recently, AI-assisted moderation has entered the scene. Systems trained to detect abusive language or coordinated edits help flag potential issues faster. Yet, AI struggles with context. It might flag a sarcastic comment as harassment or miss a subtle ad hominem attack wrapped in polite language. Human judgment remains essential.

Consensus building, the informal process by which editors reach agreement on contentious issues through discussion and compromise, is central to Wikipedia’s survival. It’s slow, messy, and frustrating. But it works. By forcing people to explain their reasoning publicly, it exposes weak arguments and builds shared understanding. Not every dispute ends happily, but most eventually stabilize.

Why This Matters Beyond Wikipedia

You might wonder why any of this affects you. If you don’t edit Wikipedia, do these internal fights matter? Absolutely. Wikipedia shapes public perception. Students cite it. Journalists reference it. Politicians quote it. When articles on elections or culture wars become battlegrounds, misinformation spreads easily. Readers assume neutrality because they see the Wikipedia logo. They trust the content implicitly.

Understanding conflict patterns helps you read critically. Look for talk page links. Check revision histories. Notice sudden large changes. Ask yourself: Who benefits from this version? Is there a broader narrative being pushed? Being aware of these dynamics turns passive consumption into active engagement.
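For readers comfortable with a little scripting, the "notice sudden large changes" habit can be roughed out in Python. The sketch below assumes you already have a page's revision history as a list of dictionaries with `timestamp`, `user`, and `size` (in bytes) keys, ordered oldest first; that shape loosely mirrors what the MediaWiki revisions API can return, but the sample data, the `flag_large_changes` helper, and the 2,000-byte threshold are all made up for illustration.

```python
def flag_large_changes(revisions, threshold=2000):
    """Return (timestamp, user, delta) for edits that change the page size
    by at least `threshold` bytes. `revisions` must be ordered oldest first."""
    flagged = []
    for prev, curr in zip(revisions, revisions[1:]):
        delta = curr["size"] - prev["size"]
        if abs(delta) >= threshold:
            flagged.append((curr["timestamp"], curr["user"], delta))
    return flagged


# Hypothetical history: a large removal followed by a full restoration.
sample_history = [
    {"timestamp": "2024-11-05T21:00:00Z", "user": "EditorA", "size": 48000},
    {"timestamp": "2024-11-05T21:05:00Z", "user": "EditorA", "size": 48120},
    {"timestamp": "2024-11-05T21:10:00Z", "user": "EditorB", "size": 41900},
    {"timestamp": "2024-11-05T21:14:00Z", "user": "EditorA", "size": 48120},
    {"timestamp": "2024-11-05T21:30:00Z", "user": "EditorC", "size": 48300},
]

for timestamp, user, delta in flag_large_changes(sample_history):
    print(f"{timestamp} {user} {delta:+d} bytes")
```

A removal followed minutes later by an equal-sized addition, as in the sample, is the kind of pattern worth following up on the talk page. Size alone proves nothing, though: a large change can just as easily be a legitimate rewrite.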

Moreover, these lessons apply elsewhere. Social media platforms face similar challenges: how to balance free expression with safety, how to verify truth amid noise. Wikipedia’s experiments offer valuable insights. Its failures teach us what doesn’t work. Its successes show what’s possible when communities commit to shared standards.

What causes edit wars on Wikipedia?

Edit wars occur when editors disagree strongly on content and repeatedly revert each other’s changes instead of discussing alternatives. Common triggers include biased sourcing, rapid updates during breaking news, and ideological differences on sensitive topics like politics or culture.

How does Wikipedia ensure neutrality?

Wikipedia enforces neutrality through its Neutral Point of View (NPOV) policy, requiring articles to represent significant viewpoints fairly without editorial bias. Editors rely on reliable sources, engage in talk page discussions, and seek consensus before making controversial changes.

Can anyone edit Wikipedia?

Yes, technically anyone can edit Wikipedia without creating an account. However, anonymous edits are scrutinized more heavily, and certain pages may be protected, limiting edits to registered users or administrators to prevent vandalism and disputes.

What happens when editors cannot agree?

When editors cannot reach consensus, they may request mediation from experienced volunteers or administrators. In severe cases, pages become fully protected, allowing only admins to make changes until stability returns. Disputes are documented on talk pages for transparency.

Are Wikipedia articles trustworthy during elections?

Generally yes, but caution is advised during live events. Wikipedia implements strict guidelines for election coverage, delaying final results until official certification and relying on major wire services. Always check recent edits and talk pages for context.