The Arms Race Between Editors and Vandals

Imagine you are a volunteer editor on Wikipedia, the free online encyclopedia and one of the most visited websites in the world. You spend hours verifying sources for an article about a historical event. Suddenly, an anonymous user wipes out your work, replaces it with nonsense, or inserts promotional content for a product they sell. This is not just annoying; it undermines the core mission of providing reliable, neutral information to the world. To stop this, Wikipedia relies on a layered system of blocks, bans, and technical filters. But vandals adapt. They use proxies, create new accounts, and rotate their IP addresses to evade detection. The result is a constant arms race between the community's enforcers and bad actors.

The problem of block evasion is critical for the integrity of any large-scale collaborative project. If bad actors can easily bypass restrictions, the platform becomes unusable for constructive editors. Understanding how these evasions work, and how they are stopped, requires looking at the technical infrastructure behind the scenes: IP address ranges, proxy servers, and the software tools administrators use.

How Blocks Work on Wikipedia

When an administrator blocks a user, they prevent a specific identifier from editing pages. The most common identifier is an IP address, the numeric address every internet connection uses; several devices on one home or school network often share a single public address. For home users, this address often changes because Internet Service Providers (ISPs) assign addresses dynamically. If you edit from your living room today, your IP address might be different tomorrow.

Administrators can block a single IP address, but this is rarely effective against determined vandals. Instead, they often block an IP range. An IP range is a group of addresses assigned to a specific organization, such as a university, a company, or an ISP. By blocking the entire range, administrators prevent anyone using those addresses from editing. This is useful when a school network is being abused for vandalism during class hours. However, it also stops legitimate students who want to contribute. Balancing security with accessibility is a major challenge.
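The collateral-damage trade-off comes down to simple arithmetic on address ranges. The sketch below uses Python's standard `ipaddress` module to show how many addresses a range block covers and how an editor's address is tested against it. The school network and the helper `is_covered` are hypothetical; `198.51.100.0/24` is a documentation-only range, not a real institution.

```python
from __future__ import annotations

import ipaddress

# Hypothetical range block on a school network. 198.51.100.0/24 is reserved
# for documentation (RFC 5737), so it stands in for a real allocation here.
blocked_range = ipaddress.ip_network("198.51.100.0/24")


def is_covered(ip: str, network: ipaddress.IPv4Network | ipaddress.IPv6Network) -> bool:
    """Return True if the editor's address falls inside the blocked range."""
    # Mixing IPv4 and IPv6 is safe: `in` simply returns False.
    return ipaddress.ip_address(ip) in network


# Collateral grows fast: each bit removed from the prefix doubles the range.
for prefix in (32, 24, 16):
    net = ipaddress.ip_network(f"198.51.100.0/{prefix}", strict=False)
    print(f"/{prefix}: {net} covers {net.num_addresses} address(es)")

print(is_covered("198.51.100.42", blocked_range))  # True: inside the /24
print(is_covered("198.51.101.7", blocked_range))   # False: just outside it
```

A /24 already sweeps in 256 addresses, so every vandal and every well-meaning student behind that network is affected equally.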

| Block Type | Target | Typical Duration | Common Use Case |
|---|---|---|---|
| Single IP Block | One specific IP address | Hours to Indefinite | Isolated vandalism from a static connection |
| Range Block | A block of IP addresses (e.g., /24) | Days to Months | Abuse from schools, companies, or ISPs |
| User Account Block | A registered username | Indefinite | Sockpuppetry or persistent policy violations |
| Proxy Block | Known open proxy IPs | Indefinite | Preventing anonymous abuse via third-party services |

The Role of Open Proxies in Evasion

An open proxy is a server that allows anyone on the internet to route their traffic through it. This hides the user's real IP address, making them appear as if they are connecting from the proxy's location. For a vandal trying to evade a block, this is a golden ticket. They can connect to a proxy in another country, and their edits will look like they come from that foreign IP address.

Wikipedia actively fights against open proxies. The platform maintains a list of known open proxies and automatically blocks them. These lists are updated regularly using data from external databases and community reports. When a proxy is detected, it is added to the global blocklist. However, new proxies appear every day. Bad actors rent cheap virtual private servers (VPS) or use residential proxy networks to stay one step ahead. Residential proxies are particularly tricky because they use real people's internet connections, making them look like normal users.
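A proxy blocklist that mixes single addresses and whole ranges, and that is refreshed over time, can be modeled in a few lines. This is a minimal sketch under stated assumptions: the `ProxyBlocklist` class, its `source` labels, and the optional expiry date are all hypothetical, and MediaWiki's real block storage works differently. An expiry of `None` stands for an indefinite block.

```python
from __future__ import annotations

import ipaddress
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class ProxyEntry:
    network: ipaddress.IPv4Network | ipaddress.IPv6Network
    expires: datetime | None  # None means the block is indefinite
    source: str               # e.g. "external database" or "community report"


class ProxyBlocklist:
    """Hypothetical in-memory blocklist; not MediaWiki's implementation."""

    def __init__(self) -> None:
        self._entries: list[ProxyEntry] = []

    def add(self, cidr: str, source: str, expires: datetime | None = None) -> None:
        # A bare address such as "203.0.113.5" becomes a /32 network.
        network = ipaddress.ip_network(cidr, strict=False)
        self._entries.append(ProxyEntry(network, expires, source))

    def prune(self, now: datetime) -> None:
        # Periodic cleanup: drop entries whose expiry has passed.
        self._entries = [e for e in self._entries if e.expires is None or e.expires > now]

    def is_blocked(self, ip: str, now: datetime) -> bool:
        addr = ipaddress.ip_address(ip)
        return any(
            addr in e.network and (e.expires is None or e.expires > now)
            for e in self._entries
        )


now = datetime(2024, 1, 1, tzinfo=timezone.utc)
blocklist = ProxyBlocklist()
blocklist.add("203.0.113.5", source="community report")  # indefinite
blocklist.add("192.0.2.0/24", source="external database", expires=now + timedelta(days=30))

print(blocklist.is_blocked("192.0.2.77", now))                        # True
print(blocklist.is_blocked("192.0.2.77", now + timedelta(days=31)))   # False: expired
print(blocklist.is_blocked("203.0.113.5", now + timedelta(days=999)))  # True: indefinite
```

Storing networks rather than individual addresses lets one entry cover an entire hosting provider's range, which matters when bad actors rent many servers from the same provider.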

The battle against proxies is not just about technology; it is about trust. Legitimate users in countries with heavy internet censorship sometimes need proxies to access Wikipedia at all. Blocking too aggressively can cut off these readers and editors. Administrators must carefully distinguish between malicious proxies and necessary access tools. This requires nuanced judgment and ongoing monitoring.

Detecting Sockpuppets and Multi-Account Abuse

Another form of evasion is creating multiple accounts, known as sockpuppets. A sockpuppet is a fake identity used to deceive others. On Wikipedia, users might create several accounts to vote in discussions, promote a biased viewpoint, or harass other editors. While having multiple accounts is not always forbidden, using them to mislead the community is strictly prohibited.

Detecting sockpuppets is challenging because users can vary their writing style, the times they edit, and their topics of interest. However, there are subtle clues. CheckUser is a specialized tool available to a small group of trusted volunteers. It lets them see whether two accounts have edited from the same IP addresses or with the same browser details, such as the user agent string that identifies the browser version and operating system. Clearing cookies does not change these details, so they often stay consistent across accounts run by one person.
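Matching technical details across accounts is, at heart, a grouping problem. The sketch below hashes each account's attributes into a short identifier and reports accounts that share one. It is hypothetical throughout: the attribute names, the `fingerprint` and `shared_fingerprints` helpers, and the sample data are invented, and the CheckUser tool itself shows raw data to a human rather than computing hashes like this.

```python
import hashlib
from collections import defaultdict


def fingerprint(attrs: dict[str, str]) -> str:
    """Hash browser/network attributes into a short, stable identifier.

    Sorting the keys makes the result independent of dictionary order.
    """
    canonical = "|".join(f"{key}={attrs[key]}" for key in sorted(attrs))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()[:16]


def shared_fingerprints(accounts: dict[str, dict[str, str]]) -> list[list[str]]:
    """Return groups of two or more accounts whose attributes hash identically."""
    groups: defaultdict[str, list[str]] = defaultdict(list)
    for name, attrs in accounts.items():
        groups[fingerprint(attrs)].append(name)
    return [sorted(names) for names in groups.values() if len(names) > 1]


UA_LINUX = "Mozilla/5.0 (X11; Linux x86_64; rv:121.0) Gecko/20100101 Firefox/121.0"
UA_WINDOWS = "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36"

accounts = {
    "EditorA": {"ip": "198.51.100.23", "user_agent": UA_LINUX},
    "EditorB": {"user_agent": UA_LINUX, "ip": "198.51.100.23"},  # same data, other order
    "EditorC": {"ip": "203.0.113.9", "user_agent": UA_WINDOWS},
}
print(shared_fingerprints(accounts))  # [['EditorA', 'EditorB']]
```

An exact hash is deliberately strict: a single browser update changes it, and a shared family or library computer can produce innocent matches. That is why a match is treated as a clue for human judgment, not as proof.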

For example, if three different accounts all edit the same obscure article within minutes of each other and share the same technical details, they are likely controlled by the same person. CheckUsers report their conclusions, usually without disclosing the private data behind them, and the community weighs those findings alongside behavioral evidence. If sockpuppetry is confirmed, the associated accounts are blocked. This process protects the integrity of consensus-building on the platform.
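The timing clue in this example can be checked mechanically. This sketch finds pairs of different accounts that edited the same page within a chosen window; the `Edit` record, the `close_in_time` helper, the page names, and the five-minute window are all illustrative assumptions rather than any real Wikipedia tool.

```python
from datetime import datetime, timedelta
from itertools import combinations
from typing import NamedTuple


class Edit(NamedTuple):
    account: str
    page: str
    time: datetime


def close_in_time(edits: list[Edit], window: timedelta) -> set[tuple[str, str]]:
    """Pairs of different accounts that edited the same page within `window`."""
    by_page: dict[str, list[Edit]] = {}
    for edit in edits:
        by_page.setdefault(edit.page, []).append(edit)

    pairs: set[tuple[str, str]] = set()
    for page_edits in by_page.values():
        # Compare every pair of edits on the page (fine for a small sample).
        for a, b in combinations(page_edits, 2):
            if a.account != b.account and abs(a.time - b.time) <= window:
                first, second = sorted((a.account, b.account))
                pairs.add((first, second))
    return pairs


t0 = datetime(2024, 3, 1, 14, 0)
edits = [
    Edit("EditorA", "Obscure Village", t0),
    Edit("EditorB", "Obscure Village", t0 + timedelta(minutes=3)),
    Edit("EditorC", "Obscure Village", t0 + timedelta(minutes=6)),
    Edit("EditorD", "Popular Topic", t0 + timedelta(minutes=1)),
]
print(sorted(close_in_time(edits, window=timedelta(minutes=5))))
# [('EditorA', 'EditorB'), ('EditorB', 'EditorC')]
```

On a busy page many unrelated editors overlap by chance, so coincidences on obscure pages carry more weight. Combining such timing pairs with shared technical details is what turns weak signals into a convincing case.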

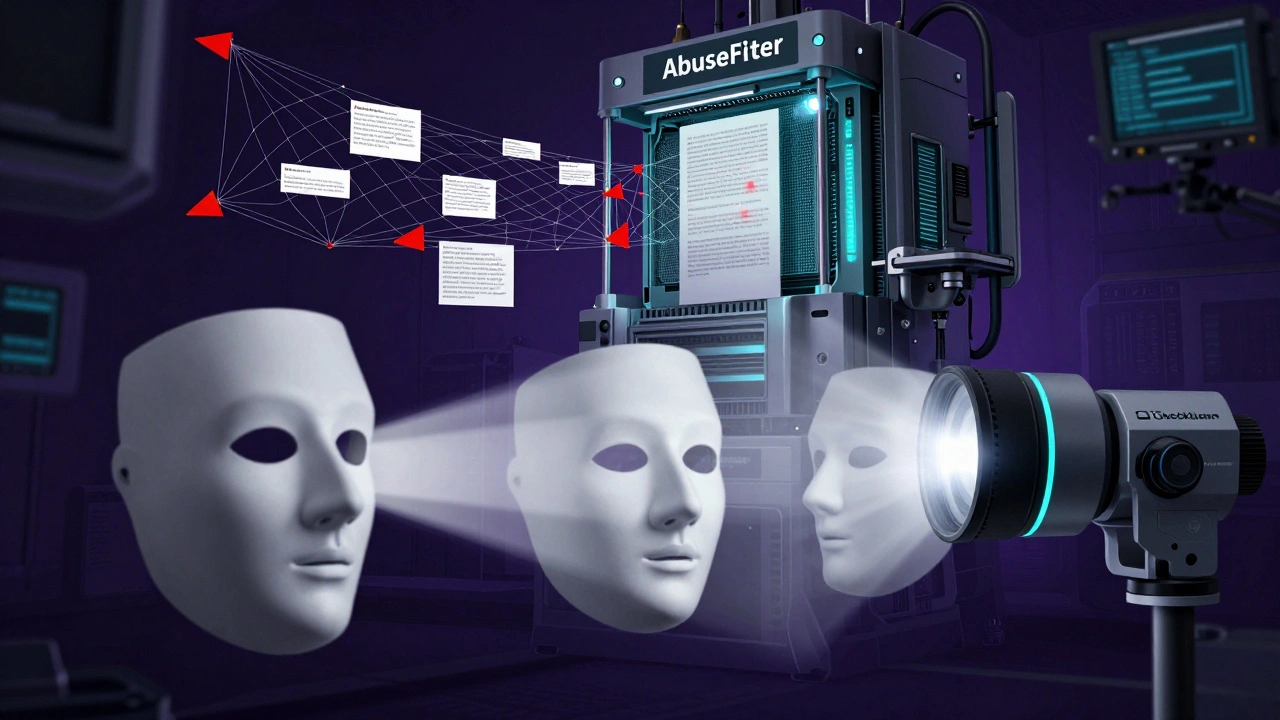

Automated Tools and Abuse Filters

Human reviewers cannot catch every instance of evasion, so Wikipedia also relies on automated systems. AbuseFilter is a MediaWiki extension that checks edits in real time against rules written by trusted users, looking for patterns associated with vandalism. For instance, if an edit contains a lot of capital letters, links to suspicious websites, or removes large sections of text, a filter may flag it.
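The three example patterns translate naturally into small checks. AbuseFilter rules are written in the extension's own rule language, not Python, so the following is only an analogue: the function names, the domain list, and every threshold (20 letters, 70% capitals, half of a 1,000-byte page) are made up for illustration.

```python
import re

# Hypothetical spam domains (".example" is reserved for documentation).
SUSPICIOUS_DOMAINS = {"cheap-pills.example", "spam-shop.example"}
LINK_RE = re.compile(r"https?://([^/\s]+)", re.IGNORECASE)


def caps_ratio(text: str) -> float:
    """Fraction of letters in `text` that are upper case."""
    letters = [c for c in text if c.isalpha()]
    if not letters:
        return 0.0
    return sum(c.isupper() for c in letters) / len(letters)


def check_edit(old_size: int, new_size: int, added_text: str) -> list[str]:
    """Return the names of the toy rules an edit trips."""
    hits: list[str] = []

    # Rule 1, shouting: at least 20 letters, more than 70% of them capitals.
    letter_count = sum(c.isalpha() for c in added_text)
    if letter_count >= 20 and caps_ratio(added_text) > 0.7:
        hits.append("shouting")

    # Rule 2: a link whose host is on the suspicious list.
    domains = {d.lower().removeprefix("www.") for d in LINK_RE.findall(added_text)}
    if domains & SUSPICIOUS_DOMAINS:
        hits.append("suspicious link")

    # Rule 3: the edit removes at least half of a page of 1,000+ bytes.
    removed = old_size - new_size
    if old_size >= 1000 and removed >= old_size / 2:
        hits.append("large removal")

    return hits


print(check_edit(5000, 5030, "THIS ARTICLE IS TOTALLY WRONG AND STUPID"))  # ['shouting']
print(check_edit(8000, 300, ""))  # ['large removal']
print(check_edit(2000, 2100, "See https://www.cheap-pills.example/offer for details"))  # ['suspicious link']
print(check_edit(3000, 3030, "NATO, UNESCO, OPEC, ASEAN, NAFTA"))  # ['shouting'], a false positive
```

The last call shows how easily a crude rule misfires: a harmless list of acronyms looks exactly like shouting to a capital-letter ratio.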

These filters can take various actions. They might warn the user, log the edit for later review, or prevent the edit from being saved at all. Administrators constantly tweak these rules to reduce false positives, cases where a legitimate edit is mistakenly flagged. For example, a good-faith editor adding a list of acronyms such as NATO, UNESCO, and OPEC might trip a rule meant to catch vandals typing in all capitals. Finding the right balance is key to keeping the system effective without frustrating contributors.
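When several filters match the same edit, something has to decide which response wins. One simple policy, sketched here as an assumption rather than a description of AbuseFilter's internals, is to order the actions by severity and apply the strictest one. The `Action` enum, the filter names, and their configured actions are hypothetical.

```python
from __future__ import annotations

from enum import IntEnum


class Action(IntEnum):
    """Filter responses, ordered by severity so max() picks the strictest."""
    LOG = 1       # record the edit for later review, let it save
    WARN = 2      # show the editor a warning before saving
    DISALLOW = 3  # refuse to save the edit


# Hypothetical per-filter configuration; names and choices are illustrative.
FILTER_ACTIONS: dict[str, Action] = {
    "large removal": Action.LOG,
    "shouting": Action.WARN,
    "suspicious link": Action.DISALLOW,
}


def decide(matched_filters: list[str]) -> Action | None:
    """Return the strictest configured action, or None if the edit saves normally."""
    actions = [FILTER_ACTIONS[name] for name in matched_filters if name in FILTER_ACTIONS]
    return max(actions) if actions else None


for matched in ([], ["large removal"], ["shouting", "suspicious link"]):
    action = decide(matched)
    print(matched, "->", action.name if action is not None else "save normally")
```

Keeping a rule at `LOG` until its log shows few false positives, and only then raising it to `WARN` or `DISALLOW`, is one cautious way to act on the tuning described above.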

In addition to AbuseFilter, bots patrol the feed of recent changes. These automated scripts scan new edits for common errors, spam links, and obvious vandalism around the clock, providing a first line of defense. Some, such as ClueBot NG on the English Wikipedia, revert the most obvious vandalism within seconds; less certain cases are left to human patrollers, who can then investigate further. This combination of automation and human oversight makes Wikipedia resilient against coordinated attacks.
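The split between automatic action and human review can be expressed as two thresholds on a vandalism score. In this sketch, the scoring model is assumed (real patrol bots use their own classifiers and settings), and the `triage` helper, the revision IDs, and the default thresholds of 0.95 and 0.6 are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PatrolDecision:
    rev_id: int
    action: str  # "revert", "flag for humans", or "ignore"


def triage(scores: dict[int, float], revert_at: float = 0.95,
           flag_at: float = 0.6) -> list[PatrolDecision]:
    """Sort scored edits into automatic reverts, human review, and no action.

    `scores` maps a revision ID to a hypothetical vandalism probability in [0, 1].
    """
    if not 0.0 <= flag_at <= revert_at <= 1.0:
        raise ValueError("expected 0 <= flag_at <= revert_at <= 1")
    decisions = []
    for rev_id, score in sorted(scores.items()):
        if score >= revert_at:
            action = "revert"           # only the most certain cases are automatic
        elif score >= flag_at:
            action = "flag for humans"  # uncertain: a volunteer decides
        else:
            action = "ignore"
        decisions.append(PatrolDecision(rev_id, action))
    return decisions


for d in triage({101: 0.99, 102: 0.72, 103: 0.05}):
    print(d.rev_id, d.action)
# 101 revert
# 102 flag for humans
# 103 ignore
```

Setting the revert threshold high keeps the bot from reverting good-faith edits, at the cost of sending more borderline cases to human volunteers.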

Community Enforcement and Global Bans

Technology alone cannot solve block evasion. The heart of Wikipedia’s enforcement lies in its community. Volunteers discuss problematic behavior on dedicated noticeboards, gather evidence, and decide on appropriate sanctions. This consensus-based process is designed to keep decisions transparent and fair.

In severe cases, a user can be globally banned, either through a community discussion on Meta-Wiki or by the Wikimedia Foundation's Trust and Safety team. A global ban prevents the person from editing any Wikimedia project, including every Wikipedia language edition. Global bans are reserved for individuals who engage in harassment, threats, or systematic disruption across multiple projects. Community global bans are decided in open discussions where editors from any project can comment. Once a ban is in place, the person's accounts can be locked globally and their IP addresses blocked across projects, which makes evasion much harder, though not impossible.

Local administrators play a crucial role in implementing these bans. They monitor local activity, respond to reports, and enforce policies consistently. Their efforts keep the encyclopedia clean and trustworthy. Without this dedicated group of volunteers, Wikipedia would struggle to maintain its quality standards.

Challenges in Modern Enforcement

As technology evolves, so do the methods of evasion, and new challenges emerge regularly. One growing concern is the use of artificial intelligence to generate vandalism: AI tools can produce coherent but misleading text, making bias or fabrication harder to detect. Another issue is undisclosed paid editing. Some public relations firms edit Wikipedia on behalf of clients without disclosing it, violating the site’s conflict-of-interest guidelines and the Wikimedia Terms of Use, which require paid contributors to disclose their employer or client.

To combat these trends, Wikipedia continues to refine its tools and policies. Research into machine learning models helps identify subtle patterns of abuse. Community education campaigns teach editors how to spot manipulation and report it effectively. Collaboration with academic institutions provides insights into best practices for managing large-scale online communities.

Despite these advancements, no system is perfect. There will always be bad actors looking for loopholes. The goal is not to eliminate all risk but to manage it effectively. By staying vigilant and adapting to new threats, Wikipedia ensures that its content remains reliable and accessible to everyone.

What is an IP range block?

An IP range block restricts editing from a group of IP addresses typically assigned to a single organization like a school or ISP. It is used when many users from that network are causing problems.

Why does Wikipedia block open proxies?

Open proxies hide a user's true identity, making it easy for vandals to evade blocks. Blocking them helps protect the encyclopedia from anonymous abuse while maintaining accountability.

How do CheckUsers detect sockpuppets?

CheckUsers analyze technical data such as shared IP addresses and browser details like user agent strings. Consistent patterns across multiple accounts suggest they are controlled by the same person.

Can I appeal a block on Wikipedia?

Yes, most blocks can be appealed. Blocked users usually post an unblock request on their own talk page explaining their situation, and an administrator reviews it; other channels exist if talk page access has been removed. Appeals are judged on new evidence or changed circumstances.

What is AbuseFilter?

AbuseFilter is an automated system that checks edits for signs of vandalism or spam. It can warn users, log edits, or prevent saves based on predefined rules set by administrators.