Imagine you are searching for a critical piece of information. You have two options: a polished, corporate-run database that hides its decision-making process behind closed doors, or a messy, community-driven project where every edit, argument, and policy change is visible to anyone with an internet connection. For years, most people assumed the polished option was superior because it looked more professional. But as we move through 2026, that assumption is crumbling. The real competitive advantage in the world of online encyclopedias is governance transparency.

Wikipedia, the free-content online encyclopedia written by volunteers around the world, has turned this radical openness into its strongest asset. While competitors like Baidu Baike, the Chinese online encyclopedia run by Baidu, or Fandom, a wiki hosting network for fan-written encyclopedias, rely on opaque algorithms or centralized editorial control, Wikipedia’s strength lies in its ability to show its work. This isn't just about ethics; it is a strategic moat in an era where users are deeply skeptical of hidden agendas.

The Crisis of Trust in Digital Information

We live in a time where "fake news" is not just a buzzword but a daily reality. Users are tired of being manipulated by search engine results that prioritize paid placements over accuracy. When you click on a result from a proprietary platform, you are trusting a black box. You don't know why that answer appeared first. Was it accurate? Or was it sponsored?

This uncertainty creates a massive opportunity for platforms that offer visibility into their operations. Information asymmetry, a situation where one party in a transaction has more or better information than the other, has been the standard for decades. Google knows what it ranks; you don't. Facebook knows why your post was suppressed; you don't. Wikipedia flips this script. Every article has a history tab. You can see who changed a sentence, when they changed it, and, where the editor left a summary, why. If a user disagrees with a claim, they can read the discussion page where editors debated the evidence. This level of provenance tracking, the practice of documenting the origin and history of data or content, builds a type of trust that money cannot buy.
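The history tab described above is also readable by machines through the MediaWiki Action API. The sketch below is a minimal illustration under stated assumptions: it only builds a query URL and summarizes a response of the shape that API returns with `formatversion=2`. The helper names `history_url` and `summarize_revisions` are hypothetical, and `SAMPLE_RESPONSE` is made-up data used so the example runs without network access.

```python
from urllib.parse import urlencode

API_ENDPOINT = "https://en.wikipedia.org/w/api.php"


def history_url(title: str, limit: int = 5) -> str:
    """Build an Action API URL asking for the latest revisions of a page."""
    params = {
        "action": "query",
        "prop": "revisions",
        "titles": title,
        # Who edited, when, and the edit summary (the "why").
        "rvprop": "timestamp|user|comment",
        "rvlimit": limit,
        "format": "json",
        "formatversion": 2,
    }
    return f"{API_ENDPOINT}?{urlencode(params)}"


def summarize_revisions(payload: dict) -> list[str]:
    """Turn a revisions response into one 'when | who | why' line per edit."""
    lines = []
    for page in payload["query"]["pages"]:
        for rev in page.get("revisions", []):
            comment = rev.get("comment") or "(no summary)"
            lines.append(f'{rev["timestamp"]} | {rev["user"]} | {comment}')
    return lines


# Made-up sample in the response shape, for illustration only.
SAMPLE_RESPONSE = {
    "query": {
        "pages": [
            {
                "title": "Example",
                "revisions": [
                    {
                        "timestamp": "2026-01-02T10:00:00Z",
                        "user": "EditorA",
                        "comment": "fix date, per cited source",
                    },
                    {
                        "timestamp": "2026-01-01T09:00:00Z",
                        "user": "EditorB",
                        "comment": "",
                    },
                ],
            }
        ]
    }
}

if __name__ == "__main__":
    print(history_url("Example"))
    for line in summarize_revisions(SAMPLE_RESPONSE):
        print(line)
```

Fetching the URL with any HTTP client and passing the decoded JSON to `summarize_revisions` would give the same who, when, and why view that the history tab shows in the browser.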

Transparency as a Feature, Not Just a Policy

Most companies talk about transparency in their annual reports. They hold press conferences. It is marketing. For Wikipedia, transparency is the product itself. The platform’s core mechanism is collaborative editing, a process where multiple authors contribute to the creation and maintenance of a document. This model requires a robust system of checks and balances. When a major controversy arises, such as the editing of a politician’s biography during an election, the entire community watches. Corrections often happen in minutes, not months. The public nature of these corrections serves as proof of the system’s resilience.

Consider the difference between Wikipedia and Britannica Online, the digital edition of the general-knowledge Encyclopædia Britannica. Britannica relies on credentialed experts. Their authority comes from their credentials. Wikipedia relies on verifiable sources. Its authority comes from the process. In 2026, users increasingly prefer process-based authority because credentials can be faked, but a trail of cited sources is harder to fabricate at scale. This shift makes Wikipedia’s transparent governance a direct competitive advantage against traditional, expert-gated models.

The Role of Community Governance

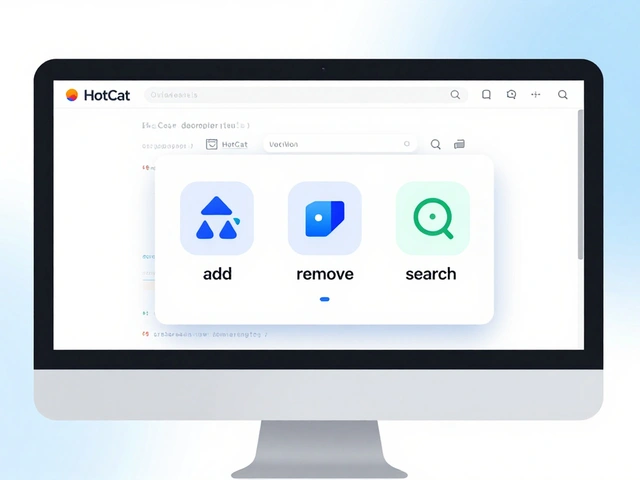

Who runs Wikipedia? No one person does. It is governed by a complex ecosystem of policies, guidelines, and customs developed by its editors. This system is known as community governance, a method of management where decisions are made collectively by the members of a group. While it can seem chaotic to outsiders, it is highly effective because it is self-correcting. If a rule becomes outdated, the community discusses it, works toward consensus on changes, and updates the policy. All of this happens in public forums.

This contrasts sharply with centralized moderation, the approach used by social media platforms, where a small team of employees decides what content is allowed. When Twitter or Facebook bans a user, the reasoning is often vague. Appeals are handled by bots or underpaid contractors. On Wikipedia, if an editor is blocked, they can appeal the decision to a neutral third party, and the entire conversation is archived for everyone to read. This fairness reduces long-term resentment and fosters a loyal contributor base. Loyalty is a scarce resource in the attention economy.

Algorithmic Opacity vs. Human Visibility

A major trend in 2026 is the rise of AI-generated content. Many new platforms use large language models to generate summaries and articles instantly. These platforms are fast, but they are also opaque. You cannot trace how the AI arrived at its conclusion. It might be hallucinating facts. It might be biased by its training data. This is the problem of algorithmic opacity: the lack of visibility into how automated systems make decisions.

Wikipedia remains human-edited. Yes, it uses bots to clean up formatting, but the substantive content is curated by humans who cite sources. This distinction is crucial. As users become more aware of AI risks, the value of human-verified content increases. Wikipedia’s transparency allows users to verify claims themselves. You can click the citation, read the original study, and decide for yourself. This empowers the user in a way that AI-generated summaries never will. In a market flooded with synthetic content, genuine human curation backed by transparent processes becomes a premium feature.

| Platform | Governance Model | Transparency Level | Trust Mechanism |
|---|---|---|---|
| Wikipedia | Community-led | High (public edits/discussions) | Process verification |
| Britannica Online | Expert-led | Low (editorial decisions hidden) | Credential authority |
| Fandom | Hybrid (admin + corporate) | Medium (edit logs visible) | Brand association |
| AI Summarizers | Algorithmic | Very Low (black box) | Convenience and speed |

Economic Sustainability Through Trust

You might wonder how transparency pays the bills. Wikipedia does not sell ads. It does not track users. It relies on donations. This model works because donors trust the mission. When you donate to the Wikimedia Foundation, you are supporting a specific, transparent goal: keeping the encyclopedia free and accessible. There is no risk that your donation will be used to target you with personalized ads.

This economic model is resilient. Ad-supported platforms are vulnerable to privacy regulations and changing consumer sentiment. As laws like GDPR and CCPA tighten, companies face higher compliance costs and user backlash. Wikipedia’s non-profit status and transparent financial reporting insulate it from these pressures. Its revenue is predictable, and its expenses are publicly audited. This financial clarity reinforces the brand’s integrity, creating a virtuous cycle where trust drives donations, which fund stability, which sustains trust.

Challenges to Transparent Governance

It is not all perfect. Open governance has drawbacks. It can be slow. Consensus-building takes time. Bad actors can exploit the system by creating sock puppet accounts to manipulate discussions. Sock puppetry, the use of multiple fake identities to influence online discussions, is a constant threat. However, the community has developed sophisticated tools to detect and counter these threats. CheckUser tools allow trusted volunteers to identify coordinated abuse. The key is that these tools are used within a framework of accountability. Abuse investigations are documented. Decisions to block users are reviewed. The system is imperfect, but it is honest about its imperfections.
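The article does not describe how CheckUser works internally, so the following is only a toy illustration of the general idea behind detecting coordinated accounts: look for several accounts that share the same technical origin. The function name `flag_shared_origin`, the `origin` fingerprint, and the sample data are all hypothetical.

```python
from collections import defaultdict


def flag_shared_origin(edits, min_accounts=2):
    """Group accounts by a shared origin fingerprint (hypothetical signal).

    `edits` is an iterable of (account, origin) pairs. Returns a dict that
    maps each origin used by at least `min_accounts` distinct accounts to
    the sorted list of those accounts.
    """
    accounts_by_origin = defaultdict(set)
    for account, origin in edits:
        accounts_by_origin[origin].add(account)
    return {
        origin: sorted(accounts)
        for origin, accounts in accounts_by_origin.items()
        if len(accounts) >= min_accounts
    }


# Made-up sample: two accounts share origin "net-1", a third stands alone.
sample_edits = [
    ("AccountA", "net-1"),
    ("AccountB", "net-1"),
    ("AccountC", "net-2"),
    ("AccountA", "net-1"),
]
flagged = flag_shared_origin(sample_edits)
```

A shared origin is a lead, not proof: a school or office network can put many unrelated people behind the same fingerprint. That is why a heuristic like this only makes sense inside the documented, reviewable process the paragraph above describes.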

Competitors cannot replicate this honesty easily. A company that admits its algorithm made a mistake looks weak. A community that admits a policy failed looks mature. This cultural difference is significant. In 2026, users reward maturity and humility over polished perfection. They want partners, not masters.

The Future of Platform Competition

As we look ahead, the battle for information dominance will not be won by speed alone. It will be won by credibility. Platforms that hide their methods will struggle to retain user loyalty. Those that embrace transparency will thrive. Wikipedia has already proven that a decentralized, transparent model can scale globally. It serves hundreds of millions of users daily. Its success is a testament to the power of open governance.

For other platforms, the lesson is clear. You do not need to become Wikipedia to benefit from its insights. You can adopt elements of transparency. Publish your editorial guidelines. Show your revision history. Explain your ranking algorithms. These steps may seem small, but they signal respect for the user. In a crowded digital landscape, respect is the ultimate competitive advantage.
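For a platform that wants to "show your revision history," a minimal sketch might look like the following: an append-only log that records who changed a text, when, and why, and can produce a diff between any two versions. The class names `Revision` and `RevisionLog` are hypothetical, and a real system would add persistent storage and access control.

```python
import difflib
from dataclasses import dataclass, field
from datetime import datetime, timezone


@dataclass(frozen=True)
class Revision:
    author: str
    summary: str  # the stated reason for the change
    text: str
    timestamp: datetime


@dataclass
class RevisionLog:
    revisions: list[Revision] = field(default_factory=list)

    def commit(self, author: str, summary: str, text: str) -> Revision:
        """Append a new revision; earlier revisions are never modified."""
        rev = Revision(author, summary, text, datetime.now(timezone.utc))
        self.revisions.append(rev)
        return rev

    def diff(self, older: int, newer: int) -> list[str]:
        """Return a unified diff between two revisions, by index."""
        before = self.revisions[older].text.splitlines()
        after = self.revisions[newer].text.splitlines()
        return list(
            difflib.unified_diff(
                before,
                after,
                fromfile=f"rev{older}",
                tofile=f"rev{newer}",
                lineterm="",
            )
        )


log = RevisionLog()
log.commit("alice", "create article", "The sky is green.")
log.commit("bob", "correct colour, per cited source", "The sky is blue.")
changes = log.diff(0, 1)
```

Publishing the output of `diff` alongside each revision's author and summary gives readers the who, when, and why for every change.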

Why is transparency important for online encyclopedias?

Transparency builds trust by allowing users to verify information and understand how decisions are made. In an era of misinformation, seeing the process behind content creation helps users distinguish reliable sources from biased or fabricated ones.

How does Wikipedia's governance differ from Britannica's?

Wikipedia uses a community-driven model where anyone can edit and discuss content publicly. Britannica relies on a small team of credentialed experts whose editorial decisions are not publicly visible. Wikipedia prioritizes process transparency, while Britannica prioritizes credential authority.

Can transparency lead to slower content updates?

Yes, consensus-building can take longer than top-down editorial decisions. However, Wikipedia’s large volunteer base often offsets this delay. Additionally, the extra time can improve accuracy and reduce the risk of publishing biased or incorrect information.

What is sock puppetry in the context of Wikipedia?

Sock puppetry is the creation of fake accounts to manipulate discussions or voting outcomes. Wikipedia combats this with CheckUser tools and community oversight, ensuring that abuse is identified and addressed transparently.

How does Wikipedia fund its operations without ads?

Wikipedia relies on donations from individuals, foundations, and corporations. Its transparent financial reporting and non-profit status build donor confidence, creating a sustainable revenue model independent of advertising markets.