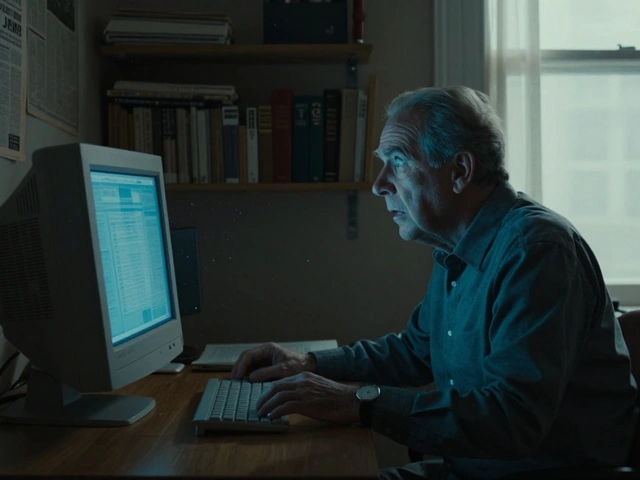

Wikipedia is one of the most used sources of information in the world. But it’s also one of the most criticized for being biased. Not because it’s intentionally misleading, but because the people who write it are human. And humans bring their blind spots, cultural assumptions, and unspoken preferences into every edit. The challenge? Fixing that bias without throwing out the core rule that keeps Wikipedia trustworthy: Neutral Point of View.

What Neutral Point of View Really Means

Neutral Point of View (NPOV) isn’t about being boring or avoiding opinions. It’s about presenting all significant viewpoints fairly, proportionally, and without editorializing. If a topic has multiple well-documented perspectives, you don’t pick one as "correct." You show what each side says, backed by reliable sources. The goal isn’t to tell readers what to think. It’s to give them the tools to think for themselves.

For example, if you’re writing about climate change, you don’t say "climate change is real" and leave it at that. You cite scientific consensus from the IPCC, then acknowledge minority viewpoints that exist in public discourse, even if those views are rejected by experts, while making it clear how widely they’re held. That’s NPOV. Not neutrality as in "no stance," but neutrality as in "fair representation."

Where Bias Actually Shows Up

Bias on Wikipedia doesn’t usually come from editors trying to push an agenda. It shows up quietly, in what’s missing.

- Articles about Western history are often longer, more detailed, and better sourced than those about non-Western cultures.

- Biographies of women and people of color are less likely to exist, or are shorter and lack citations.

- Topics tied to marginalized communities (like Indigenous land rights or LGBTQ+ history) are sometimes flagged as "controversial" and locked down, while similar content about mainstream subjects stays open.

- Reliance on English-language sources means global perspectives get drowned out.

A 2023 study by the Wikimedia Foundation found that only 19% of biographies on English Wikipedia were about women, despite women making up roughly half the global population. That’s not an accident. It’s a pattern.

This isn’t bias in the sense of malice. It’s bias in the sense of neglect. The system rewards edits that are visible, easy to verify, and fit familiar templates. That means well-documented, English-language, Western-centric topics get more attention. Everything else slips through the cracks.

Fixing Bias Without Breaking NPOV

You can’t fix bias by declaring one side "right" and deleting the other. That violates NPOV. But you can fix it by doing three things:

- Expand coverage: if a topic lacks representation, add it. Not by forcing a viewpoint, but by adding verified facts from credible sources that have been ignored.

- Improve sourcing: replace outdated, narrow, or Western-only references with global, peer-reviewed, or community-published material. A 2021 analysis showed that articles citing sources from non-English-speaking countries were 40% more likely to include diverse perspectives.

- Balance proportion: if 90% of the article is about one perspective and 10% is about another, but reliable sources don’t split anywhere near that way, that’s not neutral. It’s skewed. Adjust space based on how widely each view is held, not on how loud it is.
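The proportion check in the last point comes down to simple arithmetic. The sketch below is only an illustration: the function name `weight_gaps`, the viewpoint labels, and every number in it are hypothetical, and Wikipedia has no official formula for weighing viewpoints. It assumes an editor has counted the words an article gives to each view and the reliable sources that support each view, then compares the two shares.

```python
def weight_gaps(article_words, source_counts):
    """Return, per viewpoint, article share minus source share in percentage points.

    Both arguments map a viewpoint label to a count. A positive gap means the
    article gives that viewpoint more space than the surveyed sources do.
    """
    total_words = sum(article_words.values())
    total_sources = sum(source_counts.values())
    if total_words == 0 or total_sources == 0:
        raise ValueError("need at least one word and one source")
    gaps = {}
    for view in sorted(set(article_words) | set(source_counts)):
        article_share = 100 * article_words.get(view, 0) / total_words
        source_share = 100 * source_counts.get(view, 0) / total_sources
        gaps[view] = round(article_share - source_share, 1)
    return gaps


# Hypothetical counts, for illustration only.
article_words = {"view_a": 900, "view_b": 100}
source_counts = {"view_a": 12, "view_b": 8}
print(weight_gaps(article_words, source_counts))
# prints {'view_a': 30.0, 'view_b': -30.0}
```

Here a 90/10 split in the text against a 60/40 split in the sources leaves a 30-point gap. Treat a gap like that as a prompt to reread the sources, not as a verdict: counting sources is a crude stand-in for how widely a view is actually held.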

Take the article on "colonialism." A biased version might focus only on economic benefits claimed by colonial powers. A neutral version includes those claims, but also documents resistance, cultural erasure, and long-term impacts, all with citations from historians on all sides. The key isn’t to remove the colonial narrative. It’s to give due weight to the narratives it silenced.

Tools and Strategies That Work

Wikipedia editors have built tools to help with this. Not because they’re perfect, but because they’re practical.

- WikiProject Women in Red: a volunteer group that creates biographies of notable women who were left out. They don’t argue about whether women deserve coverage. They just write the articles, cite reliable sources, and let NPOV do the rest.

- Content Translation Tool: lets editors translate well-written articles from other language Wikipedias into English. This brings in perspectives from India, Brazil, Nigeria, and beyond that English editors might never find on their own.

- Dispute Resolution Pages: if an edit is challenged as biased, editors are guided to find consensus through talk pages, not edit wars. The goal isn’t to win. It’s to find what sources support.

These tools work because they don’t fight NPOV. They use it as a foundation. They say: "Here’s a gap. Let’s fill it with facts, not opinions. Let’s let the sources speak."

Common Mistakes to Avoid

Even well-intentioned editors mess this up.

- Adding "balance" where there’s no real debate: You don’t give equal space to climate change deniers and climate scientists. That’s not neutral. That’s false equivalence. NPOV requires proportionality. If 97% of experts agree, say so. Mention dissent only if it’s documented and notable.

- Assuming "mainstream" means "white, Western, male": Just because a viewpoint is common in U.S. media doesn’t mean it’s globally representative. Always ask: "Which sources are we using? Are they diverse?"

- Removing content because it’s "controversial": If a topic is controversial because it’s been ignored for decades, don’t delete it. Expand it. Add context. Let the sources guide you.

One editor tried to delete a section on Indigenous land acknowledgments from a university article, calling it "political." The talk page response was simple: "The university itself publishes these acknowledgments. They’re not opinion. They’re official policy. Cite them. Don’t remove them."

What Success Looks Like

Success isn’t a perfect article. It’s an article that gets better over time.

The article on "Feminism in Japan" started in 2015 as a three-sentence stub. Today, it’s a 12,000-word deep dive with citations from Japanese scholars, translated interviews, legal documents, and feminist journals from Tokyo, Osaka, and Okinawa. It still has debates on the talk page. But every edit is grounded in published sources. No one is pushing an agenda. They’re just adding what’s documented.

That’s the model. Not perfection. Progress.

What You Can Do

You don’t need to be an expert. You don’t need to be a historian. You just need to care about what’s missing.

- Search for "red links." Those are topics that don’t have articles yet. If you find one that’s notable, write it.

- Check the "citation needed" tags. Find a reliable source to back up each tagged claim.

- Look at the "Talk" page of underrepresented topics. Ask: "What’s missing? What sources haven’t been used?"

- Use the Content Translation tool. Translate an article from another language. You’ll bring in perspectives you didn’t know existed.
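For editors comfortable with a little scripting, the red-link search in the first point can be partly automated. The sketch below is an assumption-laden illustration, not an official workflow: the helper names `red_link_query_url` and `missing_titles` are hypothetical, and the sample response is hand-written rather than real data. It relies on the MediaWiki Action API's `generator=links` query, which in `formatversion=2` output marks pages that don't exist with `"missing": true`.

```python
from urllib.parse import urlencode

API_URL = "https://en.wikipedia.org/w/api.php"


def red_link_query_url(title, limit=50):
    """Build a query URL that lists the pages linked from `title`."""
    params = {
        "action": "query",
        "generator": "links",
        "titles": title,
        "gpllimit": limit,
        "format": "json",
        "formatversion": 2,
    }
    return API_URL + "?" + urlencode(params)


def missing_titles(response):
    """Return the titles of linked pages that the response marks as missing."""
    pages = response.get("query", {}).get("pages", [])
    return sorted(p["title"] for p in pages if p.get("missing"))


# Hand-written sample in the shape of a formatversion=2 response (not real data).
sample = {
    "query": {
        "pages": [
            {"pageid": 1, "ns": 0, "title": "Existing page"},
            {"ns": 0, "title": "Hypothetical missing page", "missing": True},
        ]
    }
}
print(red_link_query_url("Feminism in Japan", limit=10))
print(missing_titles(sample))
```

The sketch only builds the URL and parses a response. It doesn't fetch anything or follow the API's `continue` paging, so a real script would need both. A missing page is also only a candidate: it still has to meet the notability guidelines before it deserves an article.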

Wikipedia doesn’t need more editors who think alike. It needs more editors who ask: "Who’s not here? What aren’t we seeing?"

Can I remove biased content if it’s offensive?

No, not just because it’s offensive. Wikipedia doesn’t remove content based on offensiveness. It removes content based on verifiability and neutrality. If a claim is false, unsupported, or violates policy (like original research), then it can be removed. But if a viewpoint is documented in reliable sources, even if it’s offensive, you must represent it fairly, with context. The goal is to inform, not censor.

Does NPOV mean I can’t use terms like "racism" or "colonialism"?

No. Those are factual terms used in academic, legal, and historical contexts. You can use them if they’re supported by sources. For example, if a scholar describes a policy as "colonial," you can write that. But you can’t say "colonialism was evil." That’s a value judgment. Instead, say "many historians argue that colonialism caused lasting economic and cultural damage," and cite them.

What if a source is biased? Should I still use it?

Yes, if it’s reliable. Bias doesn’t mean unreliability. A newspaper with a political slant can still report facts accurately. The key is to use multiple sources. If one source says "X happened," and two others confirm it, you can include it. If only one source claims something controversial, attribute the claim to that source and mention that it’s a minority view. The goal is to show what sources say, not to judge them.

How do I know if a topic is notable enough for a Wikipedia article?

Notability means there’s significant coverage in reliable, independent sources. It doesn’t mean fame or popularity. For example, a local activist might be notable if they’re covered in three different academic journals or major news outlets. Check Wikipedia’s notability guidelines for your topic category. If you can find at least two or three solid sources, it’s likely notable.

Why do some articles get locked while others don’t?

Articles are locked when they’re subject to persistent edit wars or vandalism, not because they’re controversial. A well-sourced article on a sensitive topic can stay open if editors follow policy. Locking is a temporary tool, not a judgment on the topic’s validity. The real fix is better sourcing and discussion, not restriction.

Wikipedia’s strength isn’t that it’s perfect. It’s that it’s open. And every edit, every citation, every quiet addition to an overlooked article is a step toward a more complete picture of the world. You don’t need to change the whole system. Just change one article. That’s how bias gets unmade.