Key Takeaways for Researchers

- Wikipedia provides a high-volume, diverse dataset that reduces the 'WEIRD' (Western, Educated, Industrialized, Rich, and Democratic) sample bias common in classic lab studies, although its editors are not a random sample either.

- Using the Wikipedia API allows for longitudinal analysis of how concepts evolve over time.

- Replication requires mapping original study variables to available Wikipedia metrics like edit frequency or link density.

- Open science practices increase the transparency and credibility of updated findings.

Why Classical Samples Fail Today

Most of the 'classic' studies we cite were conducted in the mid-to-late 20th century. Take the Stanford Prison Experiment (1971) or early behavioral economics tests. They often relied on a handful of participants in a controlled lab. The problem is that human behavior changes, and the demographics of those early samples were incredibly narrow. When we talk about the Replication Crisis, we're talking about the failure of many published findings to produce the same results when the original methodology is repeated. By shifting the lens to a digital commons, we can see if those behavioral patterns still exist when the sample size jumps from 30 people to millions of contributors.

Wikipedia isn't just a place to check facts; it's a giant behavioral laboratory. Every single edit, every dispute on a talk page, and every translation into another language is a data point. If a classic study claimed that people prioritize certain types of information over others, we can test that by analyzing which topics get the most attention and how they are structured across different language editions of the site.

Turning Wikipedia into a Research Lab

To start replicating a study, you first need to identify the core variable. If a study was about social influence, you might look at how a single influential editor can steer the narrative of a page. If it was about cognitive bias, you could analyze the 'recency effect' in how information is updated on trending pages. You aren't just reading articles; you're analyzing the process of knowledge creation.

The primary tool here is the Wikipedia API, which provides a programmatic way to extract history, revisions, and metadata. For work at a larger scale, Wikimedia Dumps let you download the entire database for offline analysis with tools like Python or R. Either route lets you track a specific concept's growth over ten years, which is something a traditional lab study rarely achieves.
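As a rough illustration of the API route, the sketch below builds a revision-history query and parses the JSON that comes back. The endpoint and parameter names follow the MediaWiki Action API; the function names, the selected `rvprop` fields, and the User-Agent string are illustrative assumptions rather than a prescribed setup.

```python
import json
import urllib.parse
import urllib.request

# English Wikipedia endpoint of the MediaWiki Action API.
API_URL = "https://en.wikipedia.org/w/api.php"


def build_revision_query(title, limit=50, continue_token=None):
    """Return query parameters for one page's revision history."""
    params = {
        "action": "query",
        "prop": "revisions",
        "titles": title,
        "rvprop": "ids|timestamp|user|size|sha1",  # fields chosen for this sketch
        "rvlimit": str(limit),
        "rvdir": "newer",  # oldest revision first
        "format": "json",
        "formatversion": "2",
    }
    if continue_token:
        params["rvcontinue"] = continue_token
    return params


def parse_revisions(payload):
    """Extract (revisions, continuation token) from a formatversion=2 reply."""
    pages = payload.get("query", {}).get("pages", [])
    revisions = pages[0].get("revisions", []) if pages else []
    next_token = payload.get("continue", {}).get("rvcontinue")
    return revisions, next_token


def fetch_revisions(title, limit=50,
                    user_agent="replication-sketch/0.1 (contact: you@example.org)"):
    """Fetch one batch of revisions over HTTP (placeholder User-Agent)."""
    query = urllib.parse.urlencode(build_revision_query(title, limit))
    request = urllib.request.Request(
        f"{API_URL}?{query}", headers={"User-Agent": user_agent}
    )
    with urllib.request.urlopen(request, timeout=30) as response:
        return parse_revisions(json.load(response))
```

Calling `fetch_revisions("Groupthink")` would return the first batch of revisions plus a token; passing that token back as `continue_token` to `build_revision_query` is how you would page through a longer history.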

| Feature | Classic Lab Study | Wikipedia Replication |
|---|---|---|
| Sample Size | Small (usually 20-100) | Massive (Millions of contributors) |
| Demographics | Narrow (often US college students) | Global and diverse |
| Observation | Active/Interventional | Naturalistic/Observational |
| Timeframe | Short-term snapshot | Decade-long longitudinal data |

Step-by-Step Process for Data Replication

Replicating a study isn't as simple as searching for a keyword. It requires a structured approach to ensure the new data actually maps to the original theory. If you're trying to see if a theory on 'groupthink' still applies, follow these steps:

- Isolate the Hypothesis: Strip the original study down to its simplest form. Example: "Groups tend to reach consensus faster when there is a strong dominant leader."

- Define the Proxy: Since you can't interview Wikipedia editors, find a digital equivalent. A 'dominant leader' might be an editor with a high volume of reverts or a long history of administrative power on a specific page.

- Extract the Dataset: Use the API to pull the edit history of 50 different controversial pages. Look for patterns where a few users dominate the narrative.

- Run the Analysis: Compare the speed of consensus (how long it takes for a page to stop being edited) against the level of editor dominance.

- Validate Against Controls: Compare your findings with a control group, such as non-controversial pages, to make sure the pattern isn't just a coincidence.
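The proxy and analysis steps above can be sketched in a few lines. This is only one possible operationalization: "dominance" is taken to be the share of edits made by the single most active editor, and "consensus" is approximated as the first point where a page goes a set number of days without edits. The function names and the 30-day threshold are assumptions made for illustration.

```python
from collections import Counter
from datetime import datetime


def parse_ts(timestamp):
    """Parse the ISO 8601 UTC timestamps used in Wikipedia revision data."""
    return datetime.strptime(timestamp, "%Y-%m-%dT%H:%M:%SZ")


def dominance_share(revisions):
    """Share of a page's edits made by its single most active editor."""
    if not revisions:
        raise ValueError("need at least one revision")
    counts = Counter(rev["user"] for rev in revisions)
    return max(counts.values()) / len(revisions)


def days_until_quiet(revisions, quiet_days=30):
    """Days from the first edit to the last edit before the first quiet gap.

    A quiet gap is a pause of at least `quiet_days` between two edits
    (an assumed stand-in for "consensus reached"). If no such gap
    exists, the full span of the history is returned.
    """
    if not revisions:
        raise ValueError("need at least one revision")
    times = sorted(parse_ts(rev["timestamp"]) for rev in revisions)
    for earlier, later in zip(times, times[1:]):
        if (later - earlier).days >= quiet_days:
            return (earlier - times[0]).days
    return (times[-1] - times[0]).days


def summarize(pages):
    """Compute both metrics for a {title: revisions} mapping."""
    return [
        {
            "title": title,
            "dominance": dominance_share(revisions),
            "days_until_quiet": days_until_quiet(revisions),
        }
        for title, revisions in pages.items()
    ]
```

Running `summarize` once on the controversial pages and once on the control pages gives two tables of the same shape, which keeps the final comparison step straightforward.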

Common Pitfalls and How to Avoid Them

One big mistake is ignoring the 'power law' of contributions. A tiny fraction of editors do the vast majority of the work. A simple average across edits mostly reflects that small core, while a simple average across editors mostly reflects one-time contributors, and neither represents the community as a whole. Weight or stratify your data by user activity level to get an accurate picture.
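To see how concentrated contributions are before averaging anything, a quick check like the following can help. It is a minimal sketch: the Gini coefficient and top-share are standard concentration measures, but the activity bands and their thresholds (10 and 100 edits) are hypothetical cut-offs picked for illustration.

```python
import math
from collections import defaultdict


def gini(edit_counts):
    """Gini coefficient of edits per editor (0 means perfectly equal)."""
    values = sorted(edit_counts)
    n, total = len(values), sum(values)
    if n == 0 or total == 0:
        return 0.0
    weighted = sum(rank * value for rank, value in enumerate(values, start=1))
    return 2 * weighted / (n * total) - (n + 1) / n


def top_share(edit_counts, fraction=0.1):
    """Share of all edits made by the most active `fraction` of editors."""
    values = sorted(edit_counts, reverse=True)
    k = max(1, math.ceil(fraction * len(values)))
    return sum(values[:k]) / sum(values)


def activity_band(edit_count):
    """Assign an editor to a band; thresholds are illustrative assumptions."""
    if edit_count < 10:
        return "casual"
    if edit_count < 100:
        return "regular"
    return "core"


def mean_by_band(editors):
    """Average a metric per band, given (edit_count, metric) pairs."""
    grouped = defaultdict(list)
    for edit_count, metric in editors:
        grouped[activity_band(edit_count)].append(metric)
    return {band: sum(vals) / len(vals) for band, vals in grouped.items()}
```

Reporting a metric per band, rather than as a single pooled mean, makes it visible when a result is driven entirely by the core editors.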

Another trap is survivorship bias. The current version of a page shows only what survived, not the thousands of edits that were reverted along the way. To truly replicate a study on human error or bias, you have to look at that reverted content, which the public revision history preserves (revisions that administrators have deleted outright are generally not publicly viewable). This is where the real behavioral data lives: in the mistakes and the corrections.
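One common way to recover which edits did not survive is identity-revert detection: if a revision's content hash matches an earlier revision, everything in between was undone. The sketch below assumes each revision is a dict with `revid` and `sha1` keys, listed oldest first, as returned when `sha1` is requested from the API; the function name and the `radius` default of 15 are assumptions for illustration.

```python
def find_reverted(revisions, radius=15):
    """Return the revids that were undone by a later identity revert.

    `revisions` must be ordered oldest first. A revert is detected when
    a revision's sha1 equals that of an earlier revision with between
    1 and `radius` revisions in between.
    """
    last_seen = {}  # sha1 -> index of the most recent revision with it
    reverted = set()
    for index, rev in enumerate(revisions):
        sha1 = rev.get("sha1")
        if sha1 is None:  # hash can be hidden on suppressed revisions
            continue
        previous = last_seen.get(sha1)
        if previous is not None:
            between = index - previous - 1
            if 1 <= between <= radius:
                reverted.update(
                    r["revid"] for r in revisions[previous + 1:index]
                )
        last_seen[sha1] = index
    return reverted
```

This only catches exact restorations of an earlier state. Partial rewrites that undo someone's contribution by hand would need a text-diff approach instead.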

Lastly, be careful with selection bias. People who edit Wikipedia are not a random sample of the global population; they are people who enjoy editing Wikipedia. While this is still better than a group of 20 students in a basement, you must acknowledge that your 'digital humans' have specific traits: they are generally more tech-savvy and opinionated than the average person.

The Role of Open Science in Modern Validation

The beauty of using a platform like Wikipedia is that it aligns naturally with open science principles. In the old days, researchers kept their data in private spreadsheets. Today, if you replicate a study using Wikipedia, your source data is already public. If you also publish your code, anyone in the world can run your script, check your API calls, and see if they get the same result.

This transparency leaves far less room to 'p-hack' or cherry-pick data to fit a desired outcome, because selective choices are easier for others to spot. When the dataset is a public record of millions of interactions, the results are much harder to fake. We are moving toward a future where 'truth' in social science is determined by large-scale digital evidence rather than the prestige of the university where the study was conducted.

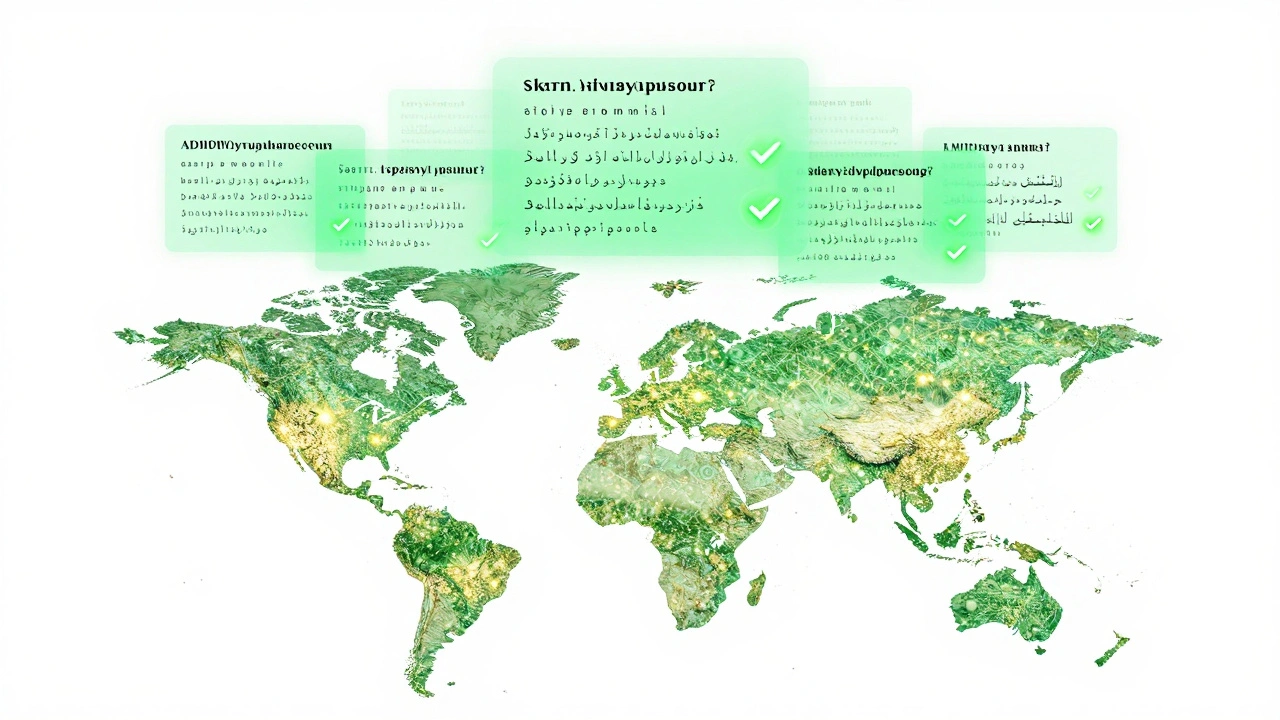

Looking Beyond the English Wikipedia

If you want to take your research to the next level, stop looking only at the English version. One of the most powerful ways to test a supposedly universal human theory is to borrow from cross-cultural psychology and see if it holds up across cultures. Does the same behavioral pattern emerge in the Japanese Wikipedia as it does in the Spanish one?

By comparing different language editions, you can begin to separate broadly shared human tendencies from cultural quirks. If a classic study on memory or categorization holds true across ten different language communities on Wikipedia, you have good evidence of something fundamental about the human mind. This is the gold standard of replication: finding a result that is consistent across geography, language, and time.
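A cross-language comparison could start along these lines. Each language edition has its own API endpoint, and the `langlinks` property lists a page's counterparts in other editions. The helper names and the simple sign-agreement measure at the end are assumptions for illustration, not an established statistic; a real analysis would use a proper meta-analytic method.

```python
def api_endpoint(lang):
    """API endpoint for a language edition, e.g. 'ja' or 'es'."""
    return f"https://{lang}.wikipedia.org/w/api.php"


def build_langlinks_query(title, limit=500):
    """Query parameters listing a page's counterparts in other editions."""
    return {
        "action": "query",
        "prop": "langlinks",
        "titles": title,
        "lllimit": str(limit),
        "format": "json",
        "formatversion": "2",
    }


def parse_langlinks(payload):
    """Map language code to page title from a langlinks response."""
    pages = payload.get("query", {}).get("pages", [])
    links = pages[0].get("langlinks", []) if pages else []
    # Older response formats store the title under "*" instead of "title".
    return {entry["lang"]: entry.get("title", entry.get("*")) for entry in links}


def share_agreeing(effect_by_lang):
    """Fraction of editions whose effect points in the majority direction.

    `effect_by_lang` maps language code to a signed effect estimate that
    you computed separately for each edition. Zero effects agree with
    neither direction.
    """
    values = list(effect_by_lang.values())
    if not values:
        raise ValueError("need at least one language edition")
    positive = sum(1 for value in values if value > 0)
    negative = sum(1 for value in values if value < 0)
    return max(positive, negative) / len(values)
```

The dictionary returned by `parse_langlinks` tells you which title to request from each edition's endpoint, so the same per-page metrics can be computed edition by edition.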

Is Wikipedia data reliable enough for academic publishing?

Yes, but not as a source of 'fact.' It is reliable as a record of human behavior. Academics use it to study how people collaborate, how misinformation spreads, and how knowledge is categorized. The reliability comes from the volume and the transparency of the edit logs, not necessarily the content of the articles themselves.

Do I need to be a programmer to do this?

While knowing Python or R helps immensely for large datasets, there are third-party tools and browser extensions that allow you to export Wikipedia history to CSV files. For smaller-scale replications, these tools are plenty.

What is the 'WEIRD' bias mentioned?

WEIRD stands for Western, Educated, Industrialized, Rich, and Democratic. It refers to the fact that most psychological research has been done on people from these backgrounds, making the results less applicable to the global population. Wikipedia's global user base helps break this cycle.

How do I handle the massive size of Wikimedia Dumps?

Don't try to open them in Excel. Use specialized tools like Pywikibot (formerly known as PyWikipedia), a streaming XML parser, or database systems like PostgreSQL to query the data. If you're just starting, use the API to pull specific pages rather than downloading the entire site.
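If you do work with a dump, the key idea is to stream it rather than load it into memory. A minimal sketch using only the Python standard library might look like this, assuming a bz2-compressed XML history dump in the standard MediaWiki export layout (`page` elements containing a `title` and `revision` children). The function names are illustrative.

```python
import bz2
import xml.etree.ElementTree as ET


def local_name(tag):
    """Strip the XML namespace, which varies between export versions."""
    return tag.rsplit("}", 1)[-1]


def iter_revision_counts(stream):
    """Yield (title, revision count) per page without loading the file."""
    title, count = None, 0
    for _event, elem in ET.iterparse(stream, events=("end",)):
        name = local_name(elem.tag)
        if name == "title":
            title = elem.text
        elif name == "revision":
            count += 1
            elem.clear()  # drop revision text to keep memory use low
        elif name == "page":
            yield title, count
            title, count = None, 0
            elem.clear()


def iter_dump(path):
    """Open a .bz2 dump file and stream its per-page revision counts."""
    with bz2.open(path, "rb") as stream:
        yield from iter_revision_counts(stream)
```

Because `iter_dump` is a generator, you can stop after the first few thousand pages while prototyping, then let it run over the whole file once the analysis is settled.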

Can this method actually disprove a classic study?

It can provide strong evidence that a study's findings don't generalize to a larger, more diverse population. While it might not 'disprove' the original lab result (which happened in a specific setting), it can show that the theory isn't as universal as previously thought.

Next Steps for Your Research

If you're a student or a researcher, start small. Pick one classic study with a simple hypothesis. Try to find three 'proxies' on Wikipedia that relate to the original variables. Once you've mapped those out, use a tool like the Wikipedia API to pull a small sample of data. Don't aim for a full paper immediately; aim for a proof-of-concept that shows the digital data reflects (or contradicts) the original lab findings. From there, you can scale up to millions of data points and contribute to the movement of open, verifiable science.