When you hear the phrase "peer review," your mind probably jumps to academic journals. You imagine a stack of papers being shuffled between professors in ivory towers, with months of silence before a decision arrives. It feels exclusive, slow, and expensive. But there is another side to this story that most people overlook. Wikipedia is a free online encyclopedia that relies on community-driven editing and rigorous oversight processes to maintain content quality. While it isn't a traditional journal, its internal mechanisms for verifying information operate much like a continuous, real-time peer review system.

The confusion often stems from mixing up two different things: editing an article on Wikipedia versus publishing research *about* Wikipedia. If you are a researcher studying how knowledge spreads online, you might want to publish your findings in a journal. If you are trying to add new scientific data to an existing Wikipedia page, you are engaging in a different kind of verification process. Both paths require understanding how credibility is built in the digital age.

Understanding the Myth of Traditional Peer Review

Traditional academic peer review has become a bottleneck for science. According to recent studies, the average time from submission to publication in high-impact journals can exceed six months. During this time, valuable insights sit in limbo. This delay costs researchers funding opportunities and slows down public understanding of critical issues, such as health crises or climate change.

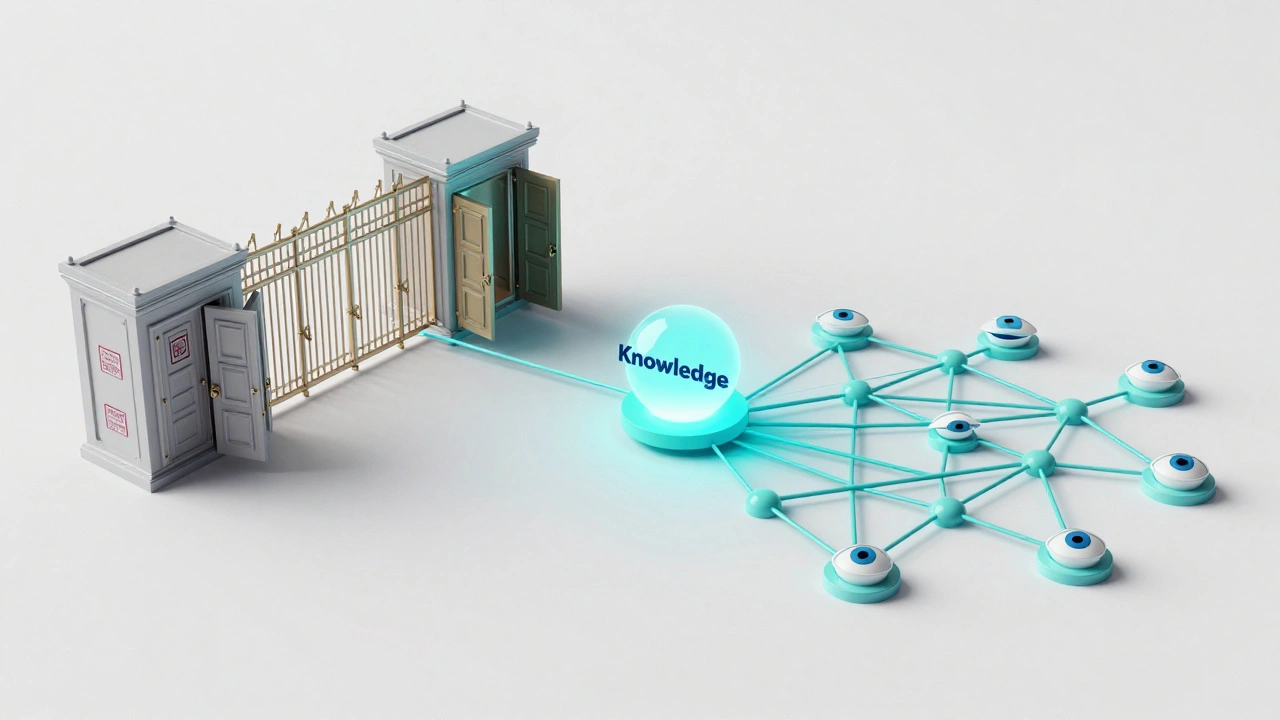

In contrast, Wikipedia, which is hosted by the Wikimedia Foundation, operates on a decentralized model in which volunteers monitor changes and enforce editorial guidelines without central gatekeepers. There is no single editor-in-chief who says yes or no. Instead, thousands of editors watch specific topics they care about. When someone adds a claim to a page, these watchers can check the sources right away. If the source is weak, the edit is often reverted within minutes. On actively watched pages, this creates a form of continuous scrutiny that can be more intense than the occasional glance of a busy academic reviewer.

However, this speed comes with risks. The lack of formal credentials means anyone can contribute. To counter this, the community relies heavily on verifiability rather than originality. You cannot publish new ideas on Wikipedia; you can only summarize what reputable sources have already said. This distinction is crucial for researchers trying to understand the platform's role in the ecosystem of knowledge.

The Role of Scholarly Journals in Wikipedia Studies

If your goal is to publish original research about Wikipedia (analyzing editor behavior, mapping knowledge gaps, or evaluating citation quality, for example), you need to go through traditional channels. Several respected journals publish work in this area. PLOS ONE, an open-access journal covering diverse scientific disciplines, including digital collaboration and information science, has featured significant work on Wikipedia dynamics. Similarly, Nature Human Behaviour has highlighted research exploring social dynamics, cognitive biases, and collective intelligence in online communities.

These journals apply standard peer review protocols. Your manuscript goes out to two or three anonymous experts who critique your methodology, statistical analysis, and conclusions. This process ensures that claims about Wikipedia’s impact are backed by solid evidence. For example, a study claiming that Wikipedia improves student learning must demonstrate measurable outcomes using controlled experiments, not just anecdotes.

One major benefit of publishing in these venues is visibility. Researchers searching for reliable data on digital literacy will find your work indexed in databases like PubMed or Scopus. This gives your findings legitimacy beyond the tech-savvy crowd who naturally gravitate toward Wikipedia discussions.

Preprints and Open Science Movements

Some researchers choose to bypass traditional journals entirely by using preprint servers. Platforms like arXiv, which lets authors share unpublished manuscripts in physics, mathematics, and computer science before formal peer review, enable immediate dissemination of findings. In fields related to information technology and social computing, posting a preprint lets other scientists comment publicly, creating a transparent record of feedback.

This approach aligns well with the ethos of open access. Many scholars argue that paywalls hinder the spread of knowledge about tools like Wikipedia, which itself is freely available. By sharing their work openly, researchers invite broader participation in validating their results. However, preprints carry a caveat: they haven’t undergone formal vetting. Readers must exercise caution when citing them, especially if those citations eventually make their way into Wikipedia articles themselves.

A growing trend involves combining both methods. Authors post their paper as a preprint to gain early traction, then submit it to a peer-reviewed journal later. This hybrid strategy maximizes reach while maintaining academic rigor. It reflects a shift in how we think about authority: not as something granted solely by institutions, but as something earned through transparency and community engagement.

Quality Control Mechanisms Within Wikipedia

Let’s circle back to what happens inside Wikipedia itself. How does the site prevent misinformation? The answer lies in its robust set of policies and enforcement tools. One key mechanism is the use of talk pages. Every article has a discussion space where editors debate content changes. These conversations often mirror academic discourse, complete with references to policy guidelines and external sources.

Another vital component is the network of bots and scripts that automatically flag suspicious edits. Tools like ORES (Objective Revision Evaluation Service) score each edit to predict whether it is likely to be constructive or damaging. These machine learning models help prioritize human review efforts, allowing experienced volunteers to focus on complex disputes rather than obvious errors.
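To make the idea of score-based prioritization concrete, here is a minimal sketch in Python. It does not call ORES or reproduce its API: the `ScoredEdit` class, the `damaging_prob` field, and both thresholds are hypothetical, chosen only to show how a model's score could route edits into different review queues.

```python
from dataclasses import dataclass


@dataclass
class ScoredEdit:
    rev_id: int           # hypothetical revision identifier
    damaging_prob: float  # hypothetical model score in [0, 1]


def triage(edits, auto_flag=0.9, needs_review=0.5):
    """Sort edits by risk and split them into three queues.

    The thresholds are illustrative assumptions, not values used
    by any real Wikipedia tool.
    """
    buckets = {"flag": [], "review": [], "pass": []}
    # Highest-risk edits first, so each queue is already prioritized.
    for edit in sorted(edits, key=lambda e: e.damaging_prob, reverse=True):
        if edit.damaging_prob >= auto_flag:
            buckets["flag"].append(edit.rev_id)
        elif edit.damaging_prob >= needs_review:
            buckets["review"].append(edit.rev_id)
        else:
            buckets["pass"].append(edit.rev_id)
    return buckets


if __name__ == "__main__":
    sample = [ScoredEdit(101, 0.95), ScoredEdit(102, 0.20), ScoredEdit(103, 0.60)]
    print(triage(sample))
```

In a setup like this, human reviewers would work through the "flag" queue first and the "review" queue second, which is the sense in which a model can focus volunteer attention without making the final decision itself.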

Contentious topics receive extra attention through structured dispute resolution processes. Articles marked as "protected" restrict who can edit them: depending on the protection level, editing may be limited to established accounts or to administrators. High-profile subjects, such as political figures or medical conditions, undergo periodic audits by specialized task forces. This layered defense system mimics the checks and balances found in scientific publishing, albeit adapted for a volunteer-driven environment.

| Feature | Academic Journal | Wikipedia |
|---|---|---|
| Gatekeeping | Centralized editors | Distributed community |
| Speed | Months to years | Minutes to hours |
| Cost | Often includes fees | Free |
| Anonymity | Double-blind common | Pseudonymous usernames |
| Originality | Required | Prohibited (no original research) |
| Correction Process | Errata notes | Immediate reversion |

Challenges Facing Researchers in This Field

Studying Wikipedia presents unique hurdles. First, the dataset is massive and constantly changing. Capturing a snapshot of all edits requires sophisticated data engineering skills. Second, interpreting user motivations can be tricky. Are editors driven by altruism, ego, or hobbyist interests? Disentangling these factors demands careful survey design and behavioral analysis.

Third, there’s the issue of bias. Despite best efforts, certain topics remain underrepresented due to language barriers or cultural preferences. Researchers must account for these systemic inequalities when drawing conclusions about global knowledge production. Ignoring them leads to skewed interpretations that could misinform policymakers relying on your work.

Finally, ethical considerations loom large. Conducting experiments involving real users raises questions about consent and privacy. Even though Wikipedia contributions are public, aggregating personal data across multiple accounts can violate core principles of responsible research. Navigating these constraints requires close collaboration with legal advisors and ethics boards.
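As a small illustration of the first hurdle, the sketch below shows one way to request and flatten a page's recent revision history using the public MediaWiki Action API (`action=query` with `prop=revisions`). The helper names, the `User-Agent` string, and the choice of fields are assumptions made for this example. It retrieves a single batch only, so a full edit history would require following the API's continuation mechanism or working from the public database dumps.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request, urlopen

API_URL = "https://en.wikipedia.org/w/api.php"


def build_revisions_url(title, limit=50):
    """Build a query URL for the most recent revisions of one page."""
    params = {
        "action": "query",
        "prop": "revisions",
        "titles": title,
        "rvprop": "ids|timestamp|user|comment",
        "rvlimit": limit,
        "format": "json",
    }
    return f"{API_URL}?{urlencode(params)}"


def parse_revisions(payload):
    """Flatten the nested JSON response into a list of revision rows."""
    rows = []
    for page in payload.get("query", {}).get("pages", {}).values():
        for rev in page.get("revisions", []):
            rows.append({
                "title": page.get("title"),
                "revid": rev.get("revid"),
                "timestamp": rev.get("timestamp"),
                "user": rev.get("user"),
            })
    return rows


def fetch_revisions(title, limit=50):
    """Fetch one batch of revisions (network call; no continuation handling)."""
    # The User-Agent value is a placeholder; identify your own project here.
    request = Request(
        build_revisions_url(title, limit),
        headers={"User-Agent": "wiki-research-sketch/0.1 (example)"},
    )
    with urlopen(request) as response:
        return parse_revisions(json.load(response))
```

Keeping URL construction and parsing separate from the network call makes the parsing logic easy to test against saved responses, which matters when the underlying data keeps changing. Note that the `user` field is exactly the kind of information the ethical concerns above apply to.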

Building Credibility Through Transparency

To succeed in either arena, whether publishing research about Wikipedia or contributing to its content, transparency is non-negotiable. Cite your sources meticulously. Explain your methods clearly. Acknowledge limitations openly. These practices build trust over time, whether you’re addressing fellow academics or casual readers scrolling through an article.

Remember that credibility isn’t static. It evolves based on how consistently you uphold standards. In the world of open knowledge, reputation matters more than ever. A single lapse in accuracy can damage your standing permanently. Conversely, sustained excellence earns recognition that transcends individual projects.

As digital platforms continue reshaping how we create and consume information, understanding the interplay between traditional scholarship and collaborative innovation becomes essential. Whether you aim to influence policy, improve educational resources, or simply satisfy curiosity, mastering the nuances of peer review in all its forms empowers you to participate meaningfully in the conversation.

Can I publish my own research directly on Wikipedia?

No, Wikipedia strictly prohibits original research. All content must be based on previously published, reliable sources. If you have unpublished findings, you should first submit them to a peer-reviewed journal or preprint server before referencing them elsewhere.

Is Wikipedia considered a credible source for academic writing?

Generally, no. Most universities advise against citing Wikipedia directly because its content can change rapidly and lacks formal authorship. Instead, use the references listed at the bottom of articles to locate the underlying sources, such as books or journal articles.

How long does peer review take for journals focused on digital media?

Timelines vary widely depending on the journal. Some open-access publications offer expedited reviews within weeks, while others may take several months. Always check the specific journal’s stated average processing times before submitting.

What makes Wikipedia different from other encyclopedias?

Unlike traditional encyclopedias written by assigned experts, Wikipedia is collaboratively edited by volunteers worldwide. Its openness allows rapid updates but also necessitates strict adherence to sourcing rules to maintain reliability.

Are there costs associated with publishing research about Wikipedia?

It depends on the journal. Many open-access journals charge Article Processing Charges (APCs), which can range from hundreds to thousands of dollars. However, some institutions provide waivers or subsidies to support researchers without funding.