Imagine typing your name into a search bar. Instead of your professional bio or recent achievements, you see accusations of crimes you didn’t commit, leaked private addresses, or malicious edits designed to ruin your reputation. For some people, this isn't a hypothetical nightmare; it's their reality on Wikipedia, the world's most visited encyclopedia. While the platform is a marvel of collaborative knowledge, it has become a battleground for coordinated harassment campaigns. This is where safety journalism, a specialized form of reporting that investigates how digital platforms handle user abuse, becomes critical.

Covering harassment on Wikipedia is not just about writing a story; it’s about understanding a complex ecosystem of volunteers, bots, and policy loopholes. If you are a journalist looking to report on online safety, you need to know how to navigate the "backstage" of Wikipedia. You need to understand who pulls the strings, how bad actors exploit the system, and what the Wikimedia Foundation does, or fails to do, to protect its users. This guide breaks down the mechanics of Wikipedia moderation so you can produce accurate, impactful safety journalism.

The Hidden Mechanics of Wikipedia Moderation

To report on Wikipedia effectively, you first have to demystify how it works. Unlike traditional encyclopedias with paid editors, Wikipedia relies on a volunteer hierarchy. At the bottom are ordinary editors, many of them anonymous or pseudonymous, who edit pages. Above them are "admins," volunteers granted extra tools after a community review, who can delete pages, block accounts and IP addresses, and protect articles from vandalism. Above this volunteer hierarchy, but largely outside its day-to-day decisions, sits the Wikimedia Foundation, the non-profit organization that hosts the site and employs staff to manage legal issues and server infrastructure.

The problem? The community runs the show. Decisions about what stays on a page and who gets banned are made by consensus among these volunteers, not by a corporate moderation team. This creates a unique challenge for journalists. When someone claims they were harassed on Wikipedia, there is no central customer service ticket to review. Instead, you have to dig through public logs, discussion pages, and arbitration cases. Understanding this structure is the first step in safety journalism. It explains why accountability is often slow and why victims frequently feel ignored.

Identifying Coordinated Harassment Campaigns

One of the most dangerous threats on Wikipedia is not random vandalism, but organized attacks. These often involve "sock puppets": multiple fake accounts operated by one person, sometimes coordinated with other people, to push a specific narrative or harass a target. A classic example is the targeting of women in tech or politics. Editors might create dozens of accounts to argue against including a woman’s work in her biography, citing dubious sources or attacking her character.

As a journalist, your job is to spot these patterns. Look for:

- Sudden spikes in negative edits: Does a page receive dozens of controversial changes within hours?

- Repetitive arguments: Are different users making the exact same points in discussion threads?

- IP address clusters: Do multiple accounts appear to edit from the same IP address range?
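The first signal is the easiest to quantify. As a minimal sketch, assuming you have already collected a page's revision timestamps (for instance, from its history page), the Python below counts edits per clock hour and flags hours at or above a threshold. The function names and the default threshold of 10 edits per hour are hypothetical choices for illustration, not a Wikipedia tool or standard. A count alone cannot tell you whether edits are negative, so treat flagged hours as leads to read, not conclusions.

```python
from collections import Counter
from datetime import datetime


def hourly_edit_counts(timestamps):
    """Count edits per clock hour (each timestamp truncated to the hour)."""
    return Counter(t.replace(minute=0, second=0, microsecond=0) for t in timestamps)


def flag_edit_spikes(timestamps, threshold=10):
    """Return (hour, count) pairs, oldest first, for hours with >= threshold edits.

    The threshold is a hypothetical cut-off for illustration; a real analysis
    would compare it against the page's usual level of activity.
    """
    counts = hourly_edit_counts(timestamps)
    return sorted((hour, n) for hour, n in counts.items() if n >= threshold)


if __name__ == "__main__":
    # Synthetic timestamps made up for this example, not real page history:
    # two quiet edits on one day, then 12 edits within a single hour.
    quiet = [datetime(2024, 5, 1, 9, 15), datetime(2024, 5, 1, 14, 40)]
    burst = [datetime(2024, 5, 2, 22, m) for m in range(0, 60, 5)]
    for hour, n in flag_edit_spikes(quiet + burst):
        print(hour.isoformat(), n)  # prints "2024-05-02T22:00:00 12"
```

Fixed hourly buckets can split a burst that straddles the top of the hour; a sliding time window would catch those cases at the cost of slightly more code.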

Tools like CheckUser, a special permission granted to trusted volunteers to investigate potential sock puppets, can reveal these connections. However, the underlying technical data, such as IP addresses, is confidential under Wikimedia's privacy policy, and checkusers cannot share it with outsiders, including journalists. What you can usually see are the public outcomes: sockpuppet investigation pages and block logs that record the conclusions. Safety journalism requires patience here; you cannot simply demand data. Build trust with experienced editors who can explain how these processes work, and use publicly available evidence to build your case.

The Role of the Arbitration Committee

When conflicts escalate beyond what regular admins can handle, they go to the Arbitration Committee (ArbCom), often described as Wikipedia’s highest court. ArbCom handles serious disputes, including bans for harassment, bias, or disruptive behavior. Most of its decisions are public and detailed, offering a goldmine for journalists. By reading past arbitration cases, you can identify recurring themes in how the community handles abuse.

However, ArbCom is also a point of contention. Critics argue that the committee is too insular, dominated by long-time editors who may share blind spots regarding modern forms of harassment, such as dog-whistling or subtle bias. Your reporting should explore whether ArbCom’s rulings actually protect vulnerable users or if they inadvertently shield powerful, toxic editors. Interview former arbitrators, current members, and those who have been sanctioned. Ask them directly: "Did the process feel fair? Did it stop the harassment?" These personal narratives bring human stakes to technical policies.

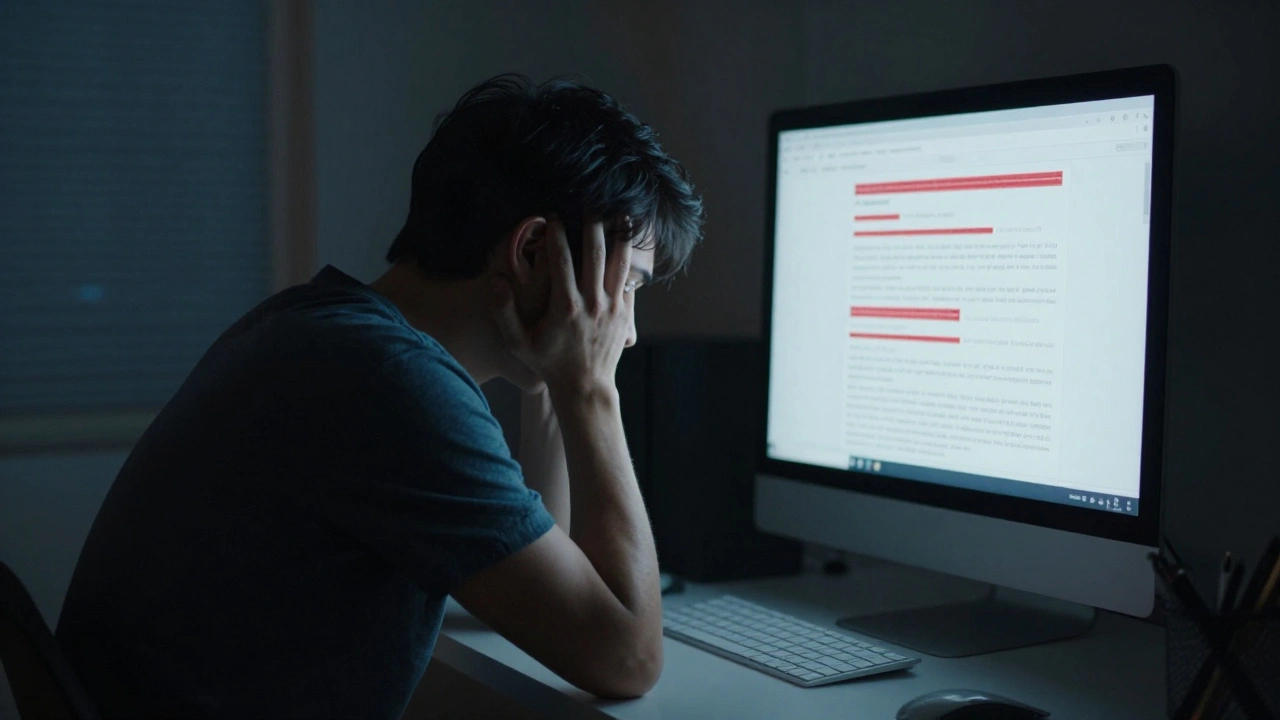

Interviewing Victims and Perpetrators

Safety journalism is deeply human. Behind every edit war is a person whose life has been impacted. When interviewing victims of Wikipedia harassment, focus on the real-world consequences. Did they lose job opportunities? Did they experience mental health struggles? Did they have to change their social media privacy settings out of fear? These stories highlight why Wikipedia’s internal policies matter to the broader public.

Equally important, though harder, is engaging with the alleged perpetrators. Many bad actors operate anonymously. In cases where identities are known, approach them with journalistic rigor. Give them a chance to respond to allegations. Sometimes, what looks like harassment is a genuine disagreement over facts. Other times, it’s malicious intent. Your role is to distinguish between the two without becoming an advocate for either side. Maintain neutrality while exposing harmful behaviors.

Policy Gaps and Platform Responsibility

A core tenet of safety journalism is holding platforms accountable. The Wikimedia Foundation often argues that it is neutral and that content decisions belong to the community. But this stance has limits. When harassment involves doxxing (publishing private information) or threats of violence, the Foundation must act. Investigate how quickly it responds to such reports. Does it remove harmful content promptly? Does it ban repeat offenders?

Compare Wikipedia’s approach to other platforms like Facebook or Twitter. Those companies have dedicated teams monitoring abuse at scale. Wikipedia relies on volunteers. Is this model sustainable in an era of increasing online toxicity? Explore alternative models, such as paid moderation roles or AI-driven detection tools. Present these options fairly, weighing the benefits of automation against the risks of censorship. Your analysis should help readers understand the trade-offs involved in keeping an open encyclopedia safe.

| Feature | Wikipedia | Commercial Social Media |
|---|---|---|
| Moderation Staff | Volunteer-based | Paid employees + AI |
| Decision Process | Community consensus | Corporate policy enforcement |
| Transparency | High (public logs) | Low (private algorithms) |
| Response Time | Variable (hours to days) | Fast (minutes to hours) |
| Accountability | Internal appeals | Customer support tickets |

Best Practices for Reporting

If you’re new to covering Wikipedia, start small. Follow a few high-profile edit wars. Read the talk pages where editors debate changes. Notice the tone: is it civil or hostile? Join relevant communities, such as WikiProjects focused on the gender gap or on LGBTQ+ topics, to understand the culture. Building rapport with editors will give you insights that outsiders miss.

Always verify your sources. Wikipedia allows anyone to edit, which means misinformation can spread quickly. Cross-check claims with independent, reliable sources. Don’t rely solely on what’s written on the page. Use the "View History" tab to see who changed what and when. This metadata is crucial for establishing timelines and identifying suspicious activity.
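The same history data is available programmatically through the MediaWiki Action API (`api.php`, with `action=query` and `prop=revisions`), which helps when a timeline spans hundreds of edits. The sketch below assumes English Wikipedia's endpoint and uses only Python's standard library; the function names and the placeholder contact address are hypothetical, and only `fetch_history` makes a live network request.

```python
import json
import urllib.parse
import urllib.request
from datetime import datetime

API_URL = "https://en.wikipedia.org/w/api.php"


def build_history_url(title, limit=50):
    """Build an Action API query for a page's most recent revisions."""
    params = {
        "action": "query",
        "prop": "revisions",
        "titles": title,
        "rvprop": "timestamp|user|comment",
        "rvlimit": str(limit),
        "format": "json",
        "formatversion": "2",  # pages come back as a list, not a dict
    }
    return API_URL + "?" + urllib.parse.urlencode(params)


def parse_history(data):
    """Turn an API response into (utc_timestamp, user, comment) tuples, newest first."""
    rows = []
    for page in data.get("query", {}).get("pages", []):
        for rev in page.get("revisions", []):
            when = datetime.strptime(rev["timestamp"], "%Y-%m-%dT%H:%M:%SZ")
            # Usernames or edit summaries can be hidden; the keys are then absent.
            rows.append((when, rev.get("user", "(hidden)"), rev.get("comment", "")))
    return rows


def fetch_history(title, limit=50, contact="you@example.org"):
    """Fetch and parse a page's recent revisions (makes a network request)."""
    request = urllib.request.Request(
        build_history_url(title, limit),
        # Wikimedia asks API clients to send a descriptive User-Agent with contact info.
        headers={"User-Agent": f"history-timeline-sketch/0.1 ({contact})"},
    )
    with urllib.request.urlopen(request, timeout=30) as response:
        return parse_history(json.load(response))
```

A call such as `fetch_history("Example article", limit=100)` returns at most `limit` of the newest revisions; longer histories would need the API's `continue` parameter, which this sketch does not handle. Timestamps are in UTC, which matters when matching edits against events in other time zones.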

Finally, be mindful of your own impact. Publishing details about a harassment campaign can sometimes amplify the harm. Consider anonymizing victims unless they explicitly consent to being named. Focus on systemic issues rather than sensationalizing individual drama. Your goal is to improve the platform, not just to expose its flaws.

What is safety journalism?

Safety journalism is a branch of reporting that focuses on how digital platforms design, implement, and enforce rules to protect users from harm, including harassment, hate speech, and misinformation. It examines the intersection of technology, policy, and human behavior.

How can I find evidence of harassment on Wikipedia?

Start by examining the "Talk" pages associated with disputed articles. Look for patterns in user contributions, such as multiple accounts arguing the same point. Review public block and deletion logs and check for any open arbitration cases related to the topic. Tools like CheckUser can help, but access is restricted to trusted volunteers, and the underlying data is not shared publicly.

Who makes the final decision on bans?

In most cases, experienced volunteer administrators decide on blocks based on community guidelines, and longer bans can also be imposed by community consensus. Serious or complex disputes are escalated to the Arbitration Committee, a group of elected volunteers who issue binding rulings. Wikimedia Foundation staff generally do not intervene in content disputes, though they can step in when legal issues or serious safety threats arise.

Is Wikipedia safe for marginalized groups?

While Wikipedia has improved its policies, many marginalized groups still face significant challenges. Research and editor accounts suggest that women, particularly women of color, are more likely to have their contributions reverted or to be subjected to biased criticism. Safety journalism highlights these disparities and pushes for better protection mechanisms.

How does Wikipedia compare to other platforms in handling abuse?

Wikipedia relies heavily on volunteer moderation, leading to slower response times but higher transparency. Commercial platforms use paid teams and AI for faster action but offer less insight into their decision-making processes. Each model has trade-offs between speed, fairness, and accountability.