Wikipedia is the go-to source for quick facts. Millions of people rely on it every day - for homework, work projects, or just curiosity. But here’s the thing: anyone can edit it. That openness is its strength. It’s also its weakness.

How Vandalism Slips Through

Wikipedia doesn’t have a team of fact-checkers sitting over every edit. Instead, it uses a mix of bots, volunteer editors, and community flags. Most vandalism is caught within minutes. But some sneaks through - sometimes for days, even years.

Take the case of the Wikipedia page for the 2008 U.S. presidential election. In October 2008, someone changed Barack Obama’s birthplace from Hawaii to Kenya. The edit stayed live for over four months. It wasn’t just a typo. It was a politically motivated lie, carefully worded to look plausible. Hundreds of thousands of people saw it before it was corrected. Why did it survive? Because the edit used real-looking citations and mimicked Wikipedia’s tone. It didn’t scream "fake." It whispered.

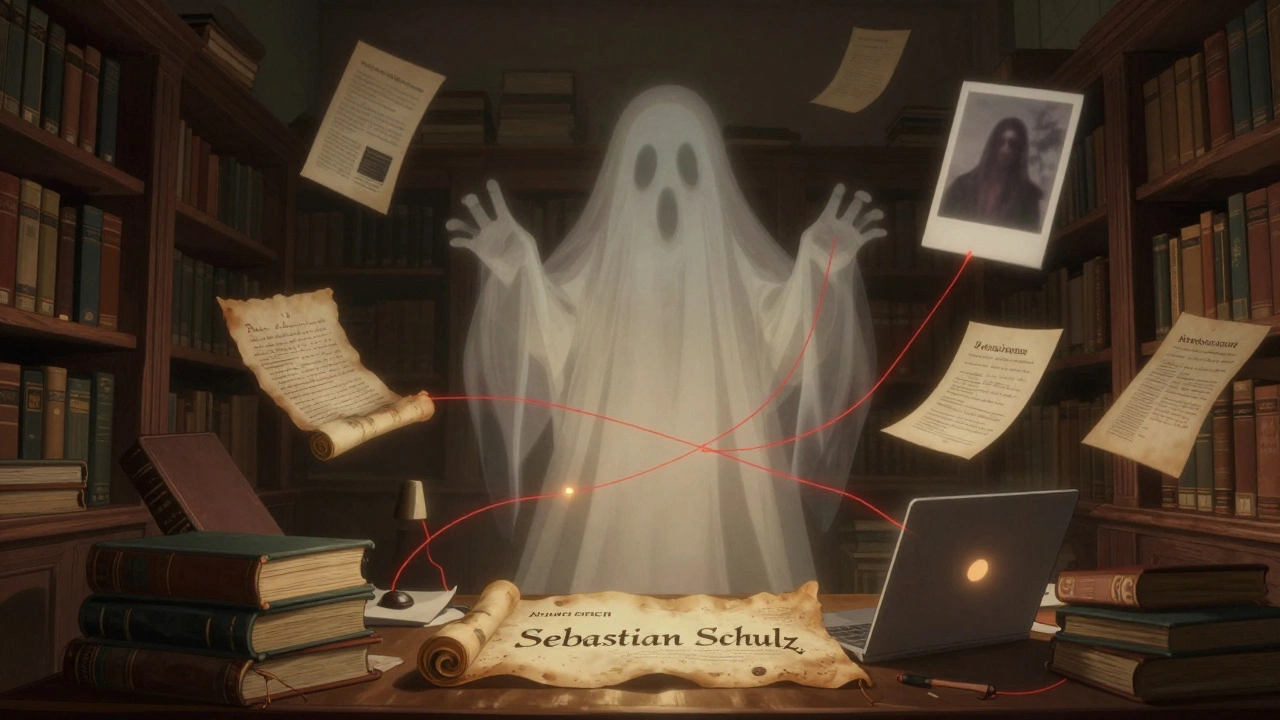

The Longest-Running Hoax

One of the most famous cases is the fake biography of Sebastian Schulz. In 2005, a user created a page claiming Schulz was a German computer scientist who worked on early internet protocols. The page gave him fake publications, fake affiliations, even a fake photo. No one questioned it. For over 15 years, Schulz appeared in academic databases, research papers, and even a German university’s website. It wasn’t until 2021 that someone noticed his "publications" were all cited by the same two users - both of whom had edited Schulz’s page.

How did it last so long? Because nobody looked hard enough. People assumed Wikipedia had been vetted. They trusted the format. The structure. The font.

Corporate Sabotage

Not all vandalism comes from trolls. Sometimes it comes from companies.

In 2013, a PR firm hired to manage the online reputation of a pharmaceutical company edited the Wikipedia page for a rival drug. They added false claims that the competitor’s medication caused liver damage. The edit was subtle: a single sentence buried under a "side effects" section. It was cited to a blog post - not a peer-reviewed journal. The edit stayed up for three weeks. When it was finally caught, the firm was exposed. But not before the misinformation spread to news sites, forums, and even medical discussion boards.

Wikipedia doesn’t ban corporate edits outright. It just requires paid editors to disclose who they work for. But many don’t disclose. And when they edit, they often hide behind vague usernames like "HealthResearchGroup123."

The Celebrity Myth

One of the most bizarre cases involved the actor John Travolta. In 2010, someone edited his Wikipedia page to say he had died in a plane crash. The edit included a fake quote from a "family spokesperson" and linked to a non-existent news article. It stayed live for 18 hours. During that time, Twitter exploded. News aggregators picked it up. A few local blogs even ran headlines.

Why did it spread? Because people didn’t check the source. They saw "Wikipedia" and assumed it was official. The edit had the right tone - calm, factual, slightly somber. It didn’t look like a prank. It looked like breaking news.

When Wikipedia Gets It Right

It’s easy to focus on the failures. But Wikipedia’s self-correction system works more often than not. The average vandalism edit lasts less than five minutes. Bots detect obvious nonsense - like "Wikipedia is stupid" - in under 30 seconds.

What’s more impressive is how quickly the community reacts. In 2022, a user edited the page for the 2022 FIFA World Cup to claim Qatar had won the tournament. The edit was flagged by a bot within 12 seconds. Within three minutes, three volunteer editors reverted it. One of them left a comment: "Nice try. But the final was France vs. Argentina. You might want to rewatch it."

Wikipedia’s strength isn’t perfection. It’s accountability. Every edit is tracked. Every change has a history. If you look closely, you can see the whole story - who changed what, when, and why.

Why It Still Matters

Wikipedia isn’t the final word. But for most people, it’s the first word. And that’s why vandalism matters.

When false information stays up - even for a few hours - it gets copied. It gets shared. It becomes part of the public memory. A student writes a paper using a fake fact. A journalist cites a corrupted entry. A parent believes a lie because it’s on a "trusted" site.

That’s not just a glitch. It’s a risk.

Wikipedia’s system works because people care. Thousands of volunteers spend hours reviewing edits, chasing sources, and undoing nonsense. But no system is foolproof. Not even one with millions of eyes.

What You Can Do

You don’t need to be an editor to help. Here’s how:

- Check the edit history - Click "View history" on any Wikipedia page. If the last edit was made by a new user with no other edits, be cautious.

- Look at citations - Are they from reputable sources? Or just blogs, forums, or personal websites?

- Compare with other sources - If it sounds too wild, Google it. See what other reliable sites say.

- Fix it or flag it - Open "View history" and undo the bad edit yourself, or describe the problem on the article’s talk page. It takes a minute. And it helps.
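
The history check in the list above can also be done programmatically. The following is a minimal sketch, assuming the public MediaWiki API endpoint for English Wikipedia and its `prop=revisions` query. The function names, the sample response, and the edit-count threshold are all hypothetical and only illustrate the idea; the sketch builds the request URL and reads a hand-written response rather than making a network call.

```python
from urllib.parse import urlencode

# Public MediaWiki API endpoint for English Wikipedia.
API_ENDPOINT = "https://en.wikipedia.org/w/api.php"


def history_url(title, limit=5):
    """Build a query URL for the most recent revisions of one page."""
    params = {
        "action": "query",
        "prop": "revisions",
        "titles": title,
        "rvprop": "timestamp|user|comment",
        "rvlimit": limit,
        "format": "json",
        "formatversion": 2,
    }
    return f"{API_ENDPOINT}?{urlencode(params)}"


def latest_edit(response):
    """Return the newest revision from a parsed API response."""
    # With formatversion=2, "pages" is a list and revisions come newest first.
    return response["query"]["pages"][0]["revisions"][0]


def deserves_a_second_look(editor_edit_count, threshold=10):
    """Hypothetical rule of thumb: be cautious if the editor has few edits."""
    return editor_edit_count < threshold


# Hand-written sample shaped like an API response; the values are invented.
sample = {
    "query": {
        "pages": [
            {
                "title": "Example",
                "revisions": [
                    {"user": "NewUser123", "timestamp": "2024-01-01T12:00:00Z", "comment": ""},
                    {"user": "LongTimeEditor", "timestamp": "2023-12-30T08:15:00Z", "comment": "fix citation"},
                ],
            }
        ]
    }
}

edit = latest_edit(sample)
print(history_url("Example"))
print(edit["user"], edit["timestamp"])
print(deserves_a_second_look(editor_edit_count=1))
```

The editor's edit count is passed in by hand here. In practice it would have to come from a second lookup (the API's user query is one likely route), and a low count is only a reason to read more carefully, not proof of vandalism.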

Wikipedia isn’t broken. But it’s not magic. It’s a mirror of the internet - sometimes brilliant, sometimes chaotic. Understanding that helps you use it better.

Can Wikipedia be trusted at all?

Yes - but not blindly. Wikipedia is reliable for general facts that have been reviewed by many editors. For topics like history, science, and major events, it’s often accurate. But for breaking news, niche subjects, or controversial topics, always double-check. The more recent the edit, the higher the risk.

Why don’t they just lock all pages?

Locking pages would defeat Wikipedia’s purpose. The whole point is to let anyone contribute. If only experts could edit, it would become slow, expensive, and disconnected from real-world knowledge. Instead, Wikipedia uses a layered defense: bots catch obvious nonsense, volunteers monitor changes, and the community debates edits. It’s messy - but it works.

How often does vandalism go unnoticed?

Rarely - but it happens. Studies show that less than 0.1% of edits are malicious. Of those, 95% are fixed within an hour. But the remaining 5% - the sneaky ones - can last days or weeks. These are the ones that spread. They’re not random. They’re targeted, well-written, and designed to look legitimate.
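
To put those percentages in absolute terms, here is a small worked calculation. It takes the figures quoted above at face value (0.1% treated as an upper bound) and applies them to a hypothetical batch of one million edits; the batch size and the function name are assumptions made only for illustration.

```python
from fractions import Fraction


def slow_to_fix(total_edits,
                malicious_share=Fraction(1, 1000),    # 0.1% of edits, upper bound
                fixed_within_hour=Fraction(95, 100)):  # 95% of malicious edits
    """Return (malicious edits, malicious edits still live after an hour)."""
    malicious = total_edits * malicious_share
    lingering = malicious * (1 - fixed_within_hour)
    return malicious, lingering


# Hypothetical batch size, chosen only to make the numbers easy to read.
malicious, lingering = slow_to_fix(1_000_000)
print(int(malicious), int(lingering))  # prints: 1000 50
```

So out of a million edits, at most about a thousand would be malicious, and roughly fifty of those would still be live an hour later.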

Are there any famous cases of vandalism that still exist?

No major known cases remain active today. Wikipedia’s community is too active for a well-known hoax to stay up. But some edits from years ago still show up in cached versions of pages, or in third-party sites that copied outdated content. Always check the current version on wikipedia.org - not a cached copy.

Can I get banned for editing Wikipedia?

Only if you’re deliberately spreading misinformation or spamming. Honest mistakes are fine. In fact, Wikipedia encourages new editors. But if you repeatedly make false edits - especially to high-profile pages - you’ll be blocked. The system is designed to protect accuracy, not punish newcomers.