Imagine it is 3:00 AM on a Friday in Madison. A massive earthquake hits somewhere in California. Within minutes, videos flood the timeline on X and TikTok. By the time the sun rises, three different stories have emerged. One is true; the other two are fabrications generated by sophisticated algorithms. As an editor watching the feed, your job isn't just to report what happened; it's to prove it happened before you type a single word.

This is the reality of modern information curation. The speed of digital communication often outpaces traditional journalism. When users open a wiki page during a crisis, they expect rigor. That rigor starts with Social Media Verification, defined here as the systematic process of authenticating digital content found on platforms to confirm its origin, location, and integrity. We are not guessing; we are proving. Below, we break down exactly how reliable platforms handle these incoming tides of data.

The Cost of Unverified Information

In the past, news organizations had gatekeepers. You needed credentials to publish. Now, anyone with a smartphone acts as a reporter. While this democratizes storytelling, it introduces significant risks for public resources. If a false claim enters a stable reference source, it can take days, sometimes weeks, to undo the damage. The reputational cost of hosting unverified breaking news is high.

Consider the phenomenon known as the "Streisand effect." When you delete misinformation, attention spikes around it. Conversely, if you update quickly with verified facts, trust solidifies. The difference lies in the evidence trail. Editors now need to act like detectives rather than scribes. They require a toolkit that goes beyond reading captions.

Visual Forensics and Image Analysis

Pictures tell stories, but they can also lie. By early 2026, image manipulation has become seamless. Simple filters aren't enough to catch deepfakes anymore. You need to look under the hood.

- Reverse Image Search: This remains the bedrock of verification. Uploading an image to a search engine shows whether it has appeared online before. Many "breaking" photos turn out to be archival images from previous events.

- Metadata Inspection: Files carry hidden data. GPS coordinates, camera models, and creation timestamps can be embedded in the file as EXIF metadata. Platforms often strip this metadata on upload, but original files shared via direct messages may retain it.

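- Error Level Analysis: This technique re-saves an image at a known compression level and highlights regions whose compression error differs from the rest of the picture. Such inconsistencies can point to areas that were altered, though they are a lead to investigate rather than proof.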
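As an illustration of the metadata step, EXIF stores GPS positions as degrees, minutes, and seconds plus a hemisphere letter, which must be converted to signed decimal degrees before they can be checked on a map. The sketch below assumes the values have already been read out of the file by an EXIF viewer; the function name `dms_to_decimal` is hypothetical and not part of any library.

```python
def dms_to_decimal(dms, ref):
    """Convert (degrees, minutes, seconds) and a hemisphere letter
    ("N", "S", "E", "W") to signed decimal degrees."""
    ref = ref.upper()
    if ref not in ("N", "S", "E", "W"):
        raise ValueError("ref must be one of N, S, E, W")
    degrees, minutes, seconds = (float(v) for v in dms)
    if not (0 <= minutes < 60 and 0 <= seconds < 60):
        raise ValueError("minutes and seconds must be in [0, 60)")
    value = degrees + minutes / 60 + seconds / 3600
    # Southern and western hemispheres are negative by convention.
    return -value if ref in ("S", "W") else value


# Example values as an EXIF viewer might display them (illustrative only).
lat = dms_to_decimal((43, 4, 23.16), "N")
lon = dms_to_decimal((89, 24, 4.32), "W")
print(round(lat, 4), round(lon, 4))
```

If the resulting coordinates fall far from the claimed location, that is a reason to dig further, not a verdict: metadata can be edited as easily as pixels.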

Geolocation and Satellite Verification

Sometimes, you don't know where a video was taken. Claimed locations are easy to fake, but the physical world leaves clues. Shadows, vegetation, road signs, and building angles are hard to replicate perfectly.

Editors cross-reference landmarks against satellite maps. For example, if a video claims to show a protest in Paris but the cobblestone texture and streetlamp style match Berlin, the claim is suspect. In 2026, augmented reality overlays on mapping tools help pinpoint exact angles relative to the sun based on timestamped shadows.

| Environmental Indicator | What It Reveals |
|---|---|
| Vegetation Type | Identify specific tree species or seasonal changes unique to regions. |
| Street Signage | Font styles and language on public signs often differ between cities. |
| Solar Position | Shadow direction reveals cardinal orientation at specific times of day. |
| License Plates | Format and color schemes indicate country or state of origin. |
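The Solar Position row rests on simple geometry, which a short sketch can make concrete. It assumes flat ground, a vertical object of known height, and a shadow measured in the same units; both function names are hypothetical. The resulting elevation and azimuth can then be compared with what a sun-position calculator reports for the claimed place and time.

```python
import math


def sun_elevation_deg(object_height, shadow_length):
    """Sun elevation above the horizon, from a vertical object's
    height and the length of its shadow on flat ground."""
    if object_height <= 0 or shadow_length <= 0:
        raise ValueError("height and shadow length must be positive")
    return math.degrees(math.atan2(object_height, shadow_length))


def sun_azimuth_from_shadow(shadow_bearing_deg):
    """A shadow points directly away from the sun, so the sun's
    compass bearing is the shadow's bearing rotated by 180 degrees."""
    return (shadow_bearing_deg + 180.0) % 360.0


# A 2 m signpost casting a 2 m shadow that points north-east (bearing 45).
print(sun_elevation_deg(2.0, 2.0))
print(sun_azimuth_from_shadow(45.0))
```

A sun in the south-west at roughly 45 degrees elevation is consistent with an afternoon in the northern mid-latitudes; a video claimed to be filmed at dawn would not match.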

Video Authenticity in the Age of AI

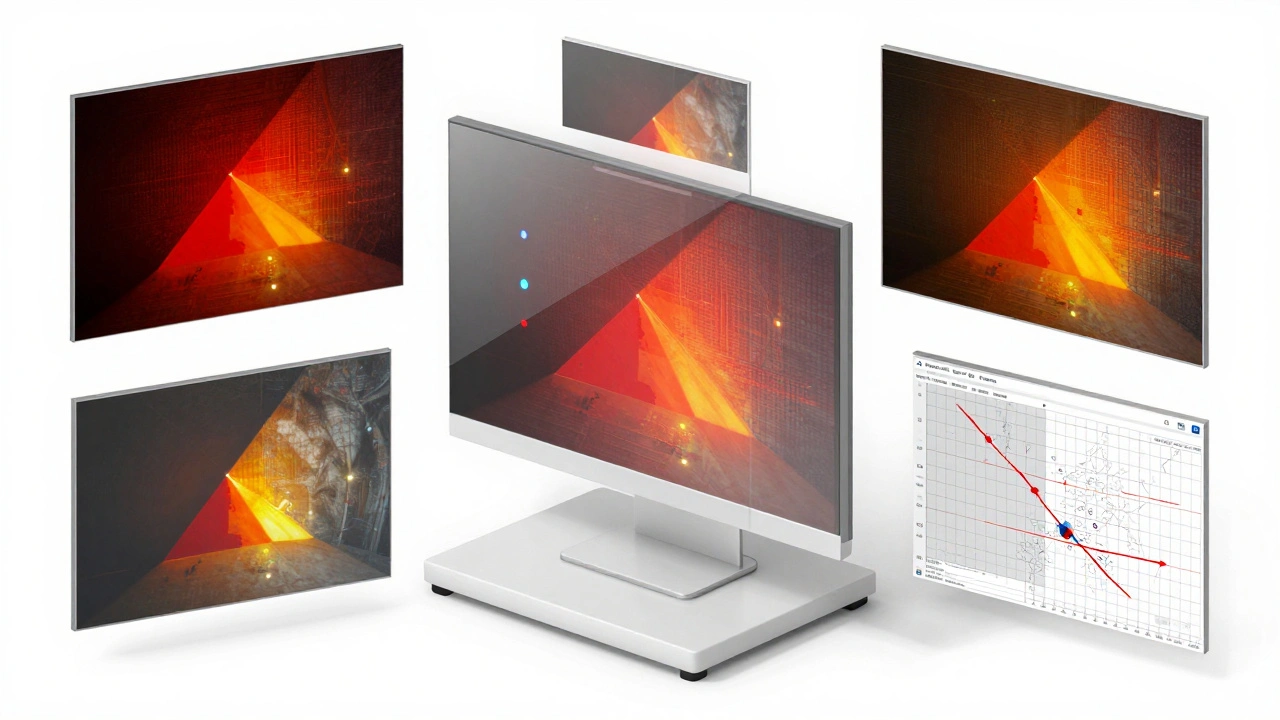

By 2026, text-to-video generation is ubiquitous. Distinguishing real events from rendered simulations requires checking for artifacts. Human physiology is complex. In genuine footage, eyes blink, fingers move naturally, and reflections in glasses stay consistent.

AI-generated video often fails at consistency. Hands might merge, backgrounds might flicker, or lips might fall out of sync with the audio. Dedicated forensic software scans footage frame by frame to detect digital noise patterns that render engines leave behind. These patterns act like a digital fingerprint. If the noise looks synthetic, the content is flagged for review.

Furthermore, audio analysis plays a huge role. Real recordings contain background noise: wind, distant traffic, the hum of electrical equipment. Clean studio-quality audio attached to a chaotic street scene is a red flag. It suggests the sound was added later to enhance the narrative.
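One rough way to quantify "too clean" is to estimate a clip's noise floor, meaning the level of its quietest stretches. The sketch below is a simplified illustration, not a forensic tool: it assumes the audio has already been decoded to a list of floats between -1.0 and 1.0, and the function names, window size, and quantile are all hypothetical choices.

```python
import math


def window_rms(samples, window):
    """RMS level of each non-overlapping window of `window` samples."""
    levels = []
    for start in range(0, len(samples) - window + 1, window):
        chunk = samples[start:start + window]
        levels.append(math.sqrt(sum(s * s for s in chunk) / window))
    return levels


def noise_floor_dbfs(samples, window=1024, quantile=0.1):
    """Estimate the noise floor as the mean RMS of the quietest
    windows, in decibels relative to full scale (1.0)."""
    levels = sorted(window_rms(samples, window))
    if not levels:
        raise ValueError("need at least one full window of samples")
    quiet = levels[:max(1, int(len(levels) * quantile))]
    floor = sum(quiet) / len(quiet)
    # Clamp so that digital silence maps to -200 dB instead of -infinity.
    return 20 * math.log10(max(floor, 1e-10))


silent = [0.0] * 8192                                       # digital silence
hum = [0.01 * ((i % 7) - 3) / 3 for i in range(8192)]       # constant low hum
print(noise_floor_dbfs(silent), noise_floor_dbfs(hum))
```

An outdoor recording whose quietest moments sit near digital silence deserves a closer look. Any cutoff would have to be calibrated against known-genuine clips from similar devices, since noise suppression in phones can also produce very quiet passages.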

Source Evaluation and Corroboration

A single witness does not make a fact. Reliable standards require multiple independent accounts. If ten accounts post the same story but all share the same network connection or IP address range, that may indicate a coordinated disinformation campaign rather than ten witnesses.

Corroboration means finding independent confirmation. Does a local official confirm the damage? Does a weather agency report seismic activity? Cross-referencing social media claims with institutional records builds a chain of custody. Without this triangulation, even viral posts remain rumors.
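Network details such as IP ranges are typically not visible to volunteer editors, so a public proxy for coordination is near-identical wording posted by several accounts in a short burst. The following is a minimal sketch under that assumption; the function names, the three-account threshold, and the ten-minute window are hypothetical and would need tuning.

```python
import re
from collections import defaultdict
from datetime import datetime, timedelta


def normalize(text):
    """Lowercase, drop URLs and punctuation, collapse whitespace."""
    text = re.sub(r"https?://\S+", "", text.lower())
    text = re.sub(r"[^\w\s]", "", text)
    return " ".join(text.split())


def find_copy_paste_clusters(posts, min_accounts=3,
                             window=timedelta(minutes=10)):
    """posts: iterable of (account, posted_at, text) tuples.
    Returns (normalized_text, sorted_accounts) for every text shared
    by at least `min_accounts` accounts within `window`."""
    groups = defaultdict(list)
    for account, posted_at, text in posts:
        groups[normalize(text)].append((posted_at, account))
    clusters = []
    for text, items in groups.items():
        items.sort()
        accounts = {account for _, account in items}
        span = items[-1][0] - items[0][0]
        if len(accounts) >= min_accounts and span <= window:
            clusters.append((text, sorted(accounts)))
    return clusters


t0 = datetime(2026, 1, 9, 3, 5)
posts = [
    ("acct_a", t0, "Bridge collapsed!!! https://example.com/a"),
    ("acct_b", t0 + timedelta(minutes=1), "BRIDGE COLLAPSED"),
    ("acct_c", t0 + timedelta(minutes=2), "bridge collapsed."),
    ("acct_d", t0 + timedelta(minutes=3), "Felt strong shaking downtown"),
]
print(find_copy_paste_clusters(posts))
```

A flagged cluster is a reason to count those accounts as one source, not ten. Genuine witnesses also repost each other, so the output is a prompt for manual review.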

The Workflow for 2026 Editors

Modern verification happens fast but follows a strict chain. It isn't about posting first; it is about publishing accurately.

- Initial Triage: Identify the platform and account reputation. Is this a bot farm or a local journalist?

- Evidence Gathering: Download raw files whenever possible to preserve metadata.

- Tool Analysis: Run through forensic tools for reverse search and anomaly detection.

- Community Review: Post preliminary findings on discussion boards for peer critique.

- Citation Logging: Save snapshots of the content with timestamps for future reference.
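The citation logging step can be sketched as a small record that ties a saved snapshot to a capture time and a cryptographic hash, so that later reviewers can confirm the file has not changed. This is an illustrative layout only; the field names and both function names are assumptions, not an established archive format.

```python
import hashlib
import json
from datetime import datetime, timezone


def make_snapshot_record(content, source_url, captured_at=None):
    """Build a log entry for a saved copy of a post or media file.
    `content` is the raw bytes of the downloaded file."""
    captured_at = captured_at or datetime.now(timezone.utc)
    return {
        "source_url": source_url,
        "captured_at": captured_at.isoformat(),
        "sha256": hashlib.sha256(content).hexdigest(),
        "size_bytes": len(content),
    }


def matches_record(content, record):
    """True if `content` is byte-for-byte the file that was logged."""
    return hashlib.sha256(content).hexdigest() == record["sha256"]


raw = b"raw bytes of the downloaded video"   # stand-in for a real file
record = make_snapshot_record(raw, "https://example.org/post/1")
print(json.dumps(record, indent=2))
print(matches_record(raw, record))
```

Hashing the raw download, rather than a re-encoded copy, matters because any re-save changes the bytes and therefore the hash.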

Wikimedia communities operate on a consensus model. Even with perfect technical proof, the community must agree on the interpretation. This social layer adds security against bad actors trying to manipulate technical tools.

Handling Uncertainty and Gray Areas

Sometimes, you cannot verify everything immediately. In those cases, transparency wins. Note the uncertainty in the article draft. Use qualifiers like "according to initial reports" until confirmed. Do not present speculation as absolute fact.

There is also the legal aspect. Some content might be protected by privacy laws or terms of service. Respecting those boundaries while investigating the truth is crucial. Attribute the work to the original uploader where permission allows.

How long does verification take during a breaking event?

Time varies by complexity. Simple image checks take minutes. Full geolocation and forensic analysis can take hours. During fast-moving crises, updates happen in stages as more evidence becomes available.

What tools are essential for verifying social media content?

Essential tools include reverse image search engines, EXIF viewers, frame extraction software, and geolocation mapping applications. Some of these are available as browser extensions.

Can AI reliably detect deepfakes in 2026?

AI detection has improved significantly but is not infallible. Automated flags assist human experts, but human review remains the final safeguard against sophisticated generative media.

Why is crowd-sourcing verification risky?

Crowds may lack training or motivation. Bad actors can weaponize large groups to spread false narratives. Professional oversight is necessary to validate community findings.

Does copyright law restrict verification techniques?

Verification usually qualifies as fair use for educational or informational purposes. However, republishing full copyrighted works without transformation can be legally problematic in some jurisdictions.