Open any random list of notable people on Wikipedia, the world's largest free online encyclopedia, written collaboratively by volunteers, and you will likely see a room full of men. This isn't an accident; it’s a structural flaw that has persisted since Wikipedia's early years. While millions of articles exist, many notable people, especially women, have no article at all or only a short stub. This is where WikiProject Women in Red, a collaborative effort within Wikipedia dedicated to creating and expanding articles about notable women, comes into play.

The initiative didn't start as a grand campaign. It was founded in 2015 by Wikipedia editors Roger Bamkin and Rosie Stephenson-Goodknight, and its name comes from a simple observation: many links to women in existing articles were colored red instead of blue. On Wikipedia, a blue link means the article exists; a red link means it doesn’t. These "red links" represent gaps in our collective knowledge. Women in Red turned these gaps into a mission: find the red links, write the articles, and turn them blue.

Why the Gender Gap Exists

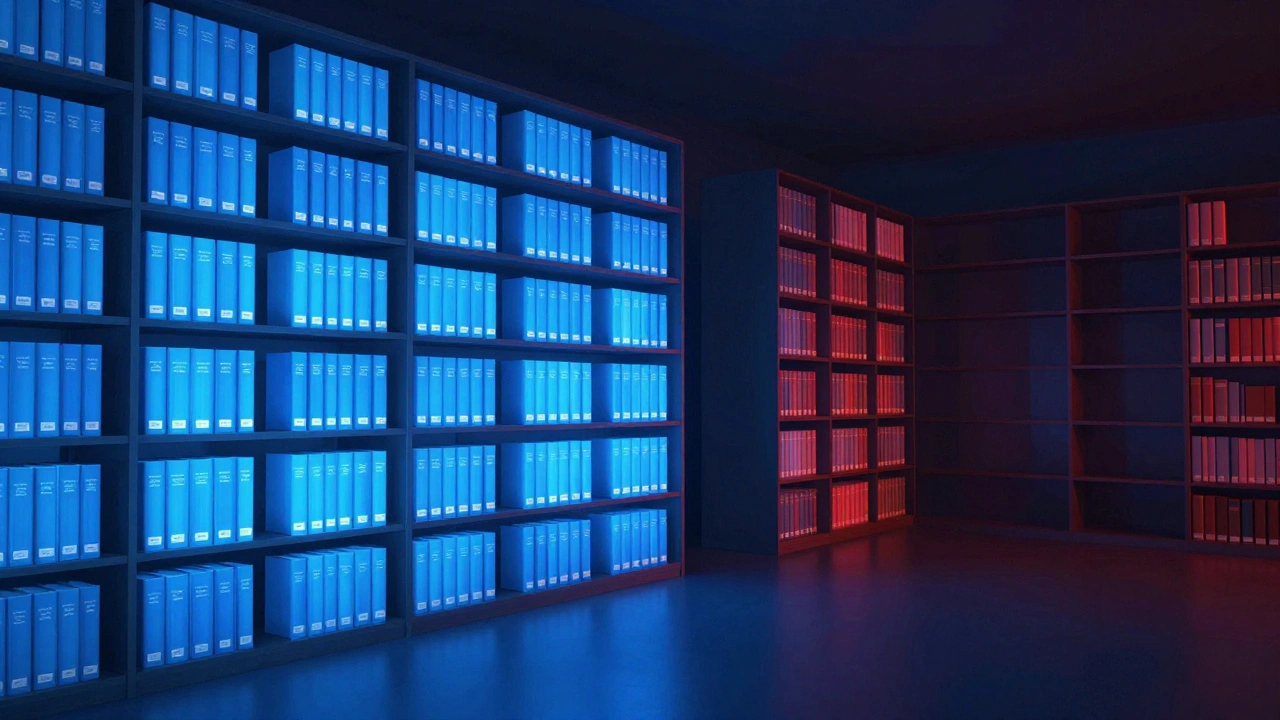

To fix the problem, we have to understand why it started. The gap isn't just about who edits Wikipedia; it’s about what Wikipedia counts as "notable." The platform relies on published, reliable sources such as mainstream news outlets, academic journals, and books. Historically, these sources covered men’s achievements far more than women’s. If a woman never received significant coverage in such sources, she often failed the notability threshold required for her own article.

This creates a feedback loop. Editors look for reliable sources to write an article. They can't find any because the media ignored the subject, so they don't write the article, and the next editor who tries hits the same wall. Breaking this cycle requires looking beyond mainstream media. It means digging through local archives, university records, oral histories, and specialized databases to show that a woman’s work was significant, even if the New York Times never mentioned her.

How Women in Red Operates

Unlike some large-scale campaigns that rely on paid staff, Women in Red is run entirely by volunteers. It operates as a "task force" within the broader Wikipedia ecosystem. You don't need to be an expert historian or a professional writer to join. You just need to care about accurate representation. The project provides a structured way for new and experienced editors to contribute without feeling overwhelmed.

The workflow is straightforward but effective:

- Identify Red Links: Editors scan existing articles (like lists of Nobel Prize winners or heads of state) for red links pointing to women who lack their own pages.

- Research Reliably: Volunteers hunt down verifiable information using diverse sources, ensuring the content meets Wikipedia’s strict neutrality and sourcing standards.

- Draft and Publish: Articles are drafted, often reviewed by fellow editors for accuracy and tone, and then published to the main site.

One of the project's most effective tools is the Red Link Challenge, a monthly competition in which participants create articles from red links to earn points and prizes. This gamifies the process and encourages friendly competition among editors. Participants aim to create as many high-quality articles as they can in a month. It transforms solitary editing into a community event, fostering mentorship between veteran editors and newcomers.

The Impact Beyond Article Counts

Since its inception, the impact has been measurable. Thousands of articles have been created or significantly expanded. But the numbers only tell part of the story. The real value lies in changing the narrative of history. When you create an article for a female scientist from the 19th century, you aren't just adding text to a database. You are correcting the historical record for students, researchers, and curious readers worldwide.

Consider the effect on search engines. Google and other platforms often pull snippets directly from Wikipedia. If a woman doesn't have a Wikipedia page, she is far less likely to appear prominently in search results. By filling these gaps, Women in Red helps make women’s contributions visible in the digital age. This visibility matters for young girls looking for role models and for academics tracing how ideas developed and who contributed to them.

Challenges and Criticisms

No movement is without friction. Women in Red faces challenges common to all Wikipedia projects: vandalism, biased opposition, and burnout. Some critics argue that focusing solely on women overlooks intersectional issues, such as the underrepresentation of women of color or LGBTQ+ individuals. In response, the project has evolved. It and its partner groups now host editathons focused on Black women, Indigenous leaders, or women in STEM fields, aiming for a more inclusive approach to closing the gap.

Another challenge is the quality versus quantity debate. Critics worry that rushing to create articles might lead to poorly sourced or biased entries. To counter this, Women in Red emphasizes rigorous peer review. Articles are often checked by multiple editors who make sure that every claim is backed by a credible source. This slows the process down slightly but makes it far more likely that the final product withstands scrutiny.

Getting Involved: A Practical Guide

If you want to help, you don't need to rewrite your resume. Here is how you can start contributing today:

- Create an Account: Go to Wikipedia and sign up. An account gives you a user page and a talk page, and lets you develop drafts before publishing them as articles. Note that drafts are publicly viewable, not private.

- Visit the Project Page: Navigate to the WikiProject Women in Red page. There, you will find current challenges, lists of needed articles, and experienced editors who can act as mentors.

- Start Small: Don't try to write a biography of Marie Curie; that page already exists. Look for "stubs" (short articles that need expansion) or red links in areas like local politicians or university professors.

- Learn Sourcing: Spend time learning how to cite sources correctly. Use libraries, JSTOR, and official institutional websites. Avoid blogs or social media posts unless they are primary sources from the subject themselves.

Mentorship is key. Most successful editors started by asking questions. Don't be afraid to post on the project’s talk page if you’re unsure about a source or a formatting rule. The community is generally welcoming to those who show good faith and a willingness to learn.

The Future of Digital Equity

As we move further into the 2020s, the importance of digital literacy and equitable representation grows. Artificial intelligence systems are trained on data scraped from the web. If Wikipedia remains skewed toward male perspectives, AI models can inherit and amplify those biases. Closing the gender gap on Wikipedia is not just about fairness; it’s about ensuring the integrity of the data that powers future technologies.

Women in Red serves as a blueprint for other underrepresented groups. Its methods (identifying gaps, mobilizing volunteers, and enforcing strict quality control) can be applied to improve coverage of non-Western cultures, disabled individuals, and working-class heroes. The goal is not just to add names, but to build a more complete, nuanced, and truthful picture of humanity.

| Year | Milestone | Significance |
|---|---|---|
| 2015 | Launch of Women in Red | Established the framework for turning red links into blue ones. |
| 2017 | First Global Red Link Challenge | Gamified editing, attracting thousands of new contributors worldwide. |
| 2019 | Expansion to Non-English Wikipedias | Localized efforts began in Spanish, French, and German editions. |
| 2023 | Focus on Intersectionality | Dedicated sprints for women of color and LGBTQ+ figures increased diversity. |
| 2026 | Integration with AI Tools | Use of AI-assisted drafting tools to speed up research while maintaining human oversight. |

The journey to close the gender gap is ongoing. It requires patience, persistence, and a commitment to truth. Every article created is a small victory against erasure. By participating in Women in Red, you become part of a global movement to ensure that history reflects everyone, not just the privileged few.

Do I need to be an expert to edit Wikipedia?

No, you do not need to be an expert. You just need to be able to find reliable sources and summarize them neutrally. Wikipedia values verifiability over authority. If you can cite a reputable book or article, you can write the entry.

What is a "red link" on Wikipedia?

A red link is a hyperlink to a page that does not yet exist. Clicking it typically takes you to a page where you can start creating the article. Blue links indicate that the page already exists.

Is Women in Red only for women?

Absolutely not. Anyone can participate. In fact, having a diverse group of editors helps reduce bias. Men, non-binary individuals, and people of all backgrounds are encouraged to join and contribute.

How long does it take to create an article?

It varies widely. A simple stub might take an hour, while a complex biography with extensive research could take weeks. The Red Link Challenge encourages participants to create multiple shorter articles in a month.

Can my article be deleted?

Yes, if it fails to meet notability guidelines or lacks reliable sources. However, experienced editors in Women in Red often review drafts beforehand to minimize this risk. If deleted, you can usually appeal or revise the content.

Does Women in Red work outside the English Wikipedia?

Yes. Similar initiatives exist in many language editions, including Spanish, French, German, and Arabic. They often collaborate on global editathons to improve representation across languages.

What types of sources are acceptable?

Acceptable sources include peer-reviewed journals, reputable news organizations, published books, and official institutional records. Social media, blogs, and self-published content are generally not considered reliable secondary sources.

How does this affect AI and search results?

Search engines and AI models frequently use Wikipedia data. Adding accurate articles about women helps make AI training data more balanced, which can reduce gender bias in automated responses and search rankings.