Quick Takeaways for Data Explorers

- Topic modeling finds patterns, not just keywords, letting you see conceptual links between seemingly unrelated articles.

- Latent Dirichlet Allocation (LDA) is the most widely used classic method for discovering these hidden themes.

- Preprocessing (removing "stop words") is the most critical step to avoid getting results that are just common grammar.

- Clustering reveals "knowledge gaps" where Wikipedia lacks a formal category for a recurring theme.

How Topic Modeling Actually Works

To get this right, you have to stop thinking about documents as a string of sentences and start seeing them as a mixture of topics. If you have an article on "Quantum Computing," it isn't just one topic. It is a mix of physics, computer science, and perhaps a bit of history.

The most common way to do this is Latent Dirichlet Allocation (LDA), a generative statistical model that explains sets of observations through unobserved groups. LDA assumes every document is a blend of topics and every topic is a blend of words. For example, if the word "algorithm" frequently appears alongside "neuron" and "weight," LDA groups those words into a single topic. The model only gives that topic a number; a human reading its top words would recognize it as "Neural Networks."
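To make the "blend of topics, blend of words" idea concrete, here is a minimal sketch of LDA fitted by collapsed Gibbs sampling, using only the Python standard library. The function name `toy_lda`, the hyperparameter values `alpha` and `beta`, and the four tiny documents are all assumptions made up for illustration; for real work you would reach for a library such as Gensim or scikit-learn instead.

```python
import random
from collections import Counter

def toy_lda(docs, num_topics, iterations=200, alpha=0.1, beta=0.01, seed=0):
    """Collapsed Gibbs sampling for LDA on already-tokenized documents."""
    rng = random.Random(seed)
    vocab_size = len({w for doc in docs for w in doc})
    # Count tables: topic use per document, word use per topic, topic totals.
    doc_topic = [[0] * num_topics for _ in docs]
    topic_word = [Counter() for _ in range(num_topics)]
    topic_total = [0] * num_topics
    # Start by giving every word occurrence a random topic.
    assignments = []
    for d, doc in enumerate(docs):
        z_doc = []
        for w in doc:
            k = rng.randrange(num_topics)
            z_doc.append(k)
            doc_topic[d][k] += 1
            topic_word[k][w] += 1
            topic_total[k] += 1
        assignments.append(z_doc)
    for _ in range(iterations):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                k = assignments[d][i]
                # Take the current assignment out of the counts.
                doc_topic[d][k] -= 1
                topic_word[k][w] -= 1
                topic_total[k] -= 1
                # Topic weight = (how much this document uses the topic)
                #              * (how much the topic uses this word).
                weights = [
                    (doc_topic[d][t] + alpha)
                    * (topic_word[t][w] + beta)
                    / (topic_total[t] + vocab_size * beta)
                    for t in range(num_topics)
                ]
                k = rng.choices(range(num_topics), weights=weights)[0]
                assignments[d][i] = k
                doc_topic[d][k] += 1
                topic_word[k][w] += 1
                topic_total[k] += 1
    # Each row of theta is one document's topic mixture and sums to 1.
    theta = [
        [(c + alpha) / (len(doc) + num_topics * alpha) for c in counts]
        for doc, counts in zip(docs, doc_topic)
    ]
    top_words = [
        [w for w, c in tw.most_common(3) if c > 0] for tw in topic_word
    ]
    return theta, top_words

# Made-up toy documents, already tokenized.
docs = [
    ["algorithm", "neuron", "weight", "neuron", "weight"],
    ["neuron", "weight", "algorithm", "weight"],
    ["qubit", "entanglement", "algorithm", "qubit"],
    ["entanglement", "qubit", "qubit", "algorithm"],
]
theta, top_words = toy_lda(docs, num_topics=2)
for row in theta:
    print([round(p, 2) for p in row])
print(top_words)
```

Each printed row is one document's topic mixture, and `top_words` lists the most frequent words assigned to each topic. Note that the output contains no labels: deciding that a topic "is" Neural Networks remains a human judgment.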

Why does this matter for Wikipedia? Because the site is built by humans. Humans are biased. We create categories based on how we think, not necessarily how the information relates. By using LDA on a dump of Wikipedia text, you can find "shadow categories": clusters of information that are logically connected but aren't linked by a single category page.

Step-by-Step: Finding Clusters in Wikipedia Data

You can't just feed a raw Wikipedia page into a model. If you do, the model will tell you that the most important topic in the world is the word "the." Here is how you actually execute the process.

- Data Acquisition: Use a Wikipedia database dump, a very large compressed XML file containing the full text of every article. Loading it all at once requires a lot of RAM, so either stream it article by article or use a distributed system like Apache Spark.

- Text Cleaning (Preprocessing): This is where the magic happens. You need to strip out HTML tags and wiki markup and perform "tokenization" (breaking sentences into words). You must also remove Stop Words (common words like "and," "is," and "of") because they carry almost no topical meaning.

- Lemmatization: You turn "running," "runs," and "ran" into the base form "run." This prevents the model from thinking these are three different concepts.

- Vectorization: The computer can't read words, so you turn them into numbers. Most researchers use a "Bag of Words" approach (raw word counts) or TF-IDF (Term Frequency-Inverse Document Frequency), which highlights words that are frequent in a specific document but rare across the whole site. Classic LDA is defined over raw word counts, so TF-IDF weighting is more often paired with methods like NMF.

- Running the LDA Model: You choose the number of topics (K) you want to find. If you pick K=100, the model will force the data into 100 clusters. If you pick K=1000, the clusters become much more specific.
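The cleaning and vectorization steps above can be sketched with the Python standard library alone. Everything named here is an assumption for illustration: the tiny `STOP_WORDS` set, the helper names `clean` and `tf_idf`, and the three sample pages. Lemmatization is left out because it usually relies on a library such as NLTK or spaCy, and the TF-IDF formula shown is just one common variant.

```python
import math
import re
from collections import Counter

# Tiny illustrative stop-word list; real projects use a much longer one.
STOP_WORDS = {"the", "a", "an", "and", "is", "of", "in", "to", "are", "on"}

def clean(raw_text):
    """Strip HTML tags, lowercase, tokenize, and drop stop words."""
    no_tags = re.sub(r"<[^>]+>", " ", raw_text)
    tokens = re.findall(r"[a-z]+", no_tags.lower())
    return [t for t in tokens if t not in STOP_WORDS]

def tf_idf(docs_tokens):
    """Return one {term: weight} dict per document."""
    n_docs = len(docs_tokens)
    # Document frequency: in how many documents does each term appear?
    doc_freq = Counter(term for tokens in docs_tokens for term in set(tokens))
    weighted = []
    for tokens in docs_tokens:
        counts = Counter(tokens)  # this Counter is the bag of words
        weighted.append({
            term: (count / len(tokens)) * math.log(n_docs / doc_freq[term])
            for term, count in counts.items()
        })
    return weighted

# Made-up sample pages standing in for cleaned Wikipedia articles.
pages = [
    "<p>The qubit is the basic unit of quantum computing.</p>",
    "<p>The neuron is the basic unit of a neural network.</p>",
    "<p>Quantum computing and the neural network are research fields.</p>",
]
tokens = [clean(p) for p in pages]
weights = tf_idf(tokens)
print(tokens[0])
print(max(weights[0], key=weights[0].get))
```

In the first page, "qubit" gets the highest weight because it appears in no other page, while shared words like "basic" and "unit" are discounted.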

Comparing Topic Modeling Techniques

LDA isn't the only tool in the shed. Depending on whether you need speed, accuracy, or deep semantic meaning, you might pick a different path.

| Method | Core Logic | Best Use Case | Main Downside |
|---|---|---|---|
| LDA | Probabilistic word distribution | General discovery of themes | Needs manual "K" topic setting |
| NMF | Linear algebra (matrix factorization) | Smaller datasets, faster speed | Less flexible than LDA |
| BERTopic | Transformer embeddings + HDBSCAN | Capturing actual meaning/context | Requires high GPU power |
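To show what "matrix factorization" means for the NMF row, here is a rough sketch that factors a small document-term matrix using the classic multiplicative update rules. The matrix `V`, its imagined column terms, and the helper names are all made up for illustration; a library implementation (for example in scikit-learn) would be the practical choice.

```python
import random

def matmul(A, B):
    return [[sum(a * b for a, b in zip(row, col)) for col in zip(*B)]
            for row in A]

def transpose(M):
    return [list(col) for col in zip(*M)]

def frobenius_error(V, W, H):
    """Squared reconstruction error between V and W x H."""
    WH = matmul(W, H)
    return sum((v - wh) ** 2
               for rv, rwh in zip(V, WH) for v, wh in zip(rv, rwh))

def nmf(V, num_topics, iterations=200, seed=0, eps=1e-9):
    """Factor V (docs x terms) into W (docs x topics) and H (topics x terms)."""
    rng = random.Random(seed)
    n_docs, n_terms = len(V), len(V[0])
    W = [[rng.random() + 0.1 for _ in range(num_topics)] for _ in range(n_docs)]
    H = [[rng.random() + 0.1 for _ in range(n_terms)] for _ in range(num_topics)]
    for _ in range(iterations):
        # H <- H * (W^T V) / (W^T W H), element-wise
        Wt = transpose(W)
        num, den = matmul(Wt, V), matmul(matmul(Wt, W), H)
        H = [[h * n / (d + eps) for h, n, d in zip(rh, rn, rd)]
             for rh, rn, rd in zip(H, num, den)]
        # W <- W * (V H^T) / (W H H^T), element-wise
        Ht = transpose(H)
        num, den = matmul(V, Ht), matmul(W, matmul(H, Ht))
        W = [[w * n / (d + eps) for w, n, d in zip(rw, rn, rd)]
             for rw, rn, rd in zip(W, num, den)]
    return W, H

# Made-up counts: rows are documents, columns are four imagined terms.
V = [
    [3, 2, 0, 0],
    [2, 3, 0, 0],
    [0, 0, 3, 2],
    [0, 0, 2, 3],
]
W, H = nmf(V, num_topics=2)
for row in H:
    print([round(x, 2) for x in row])
print(round(frobenius_error(V, W, H), 4))
```

Each row of `H` is a topic expressed as term weights, and each row of `W` says how strongly a document uses each topic. Unlike LDA, these are non-negative weights rather than probabilities.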

The "Aha!" Moment: Discovering Hidden Clusters

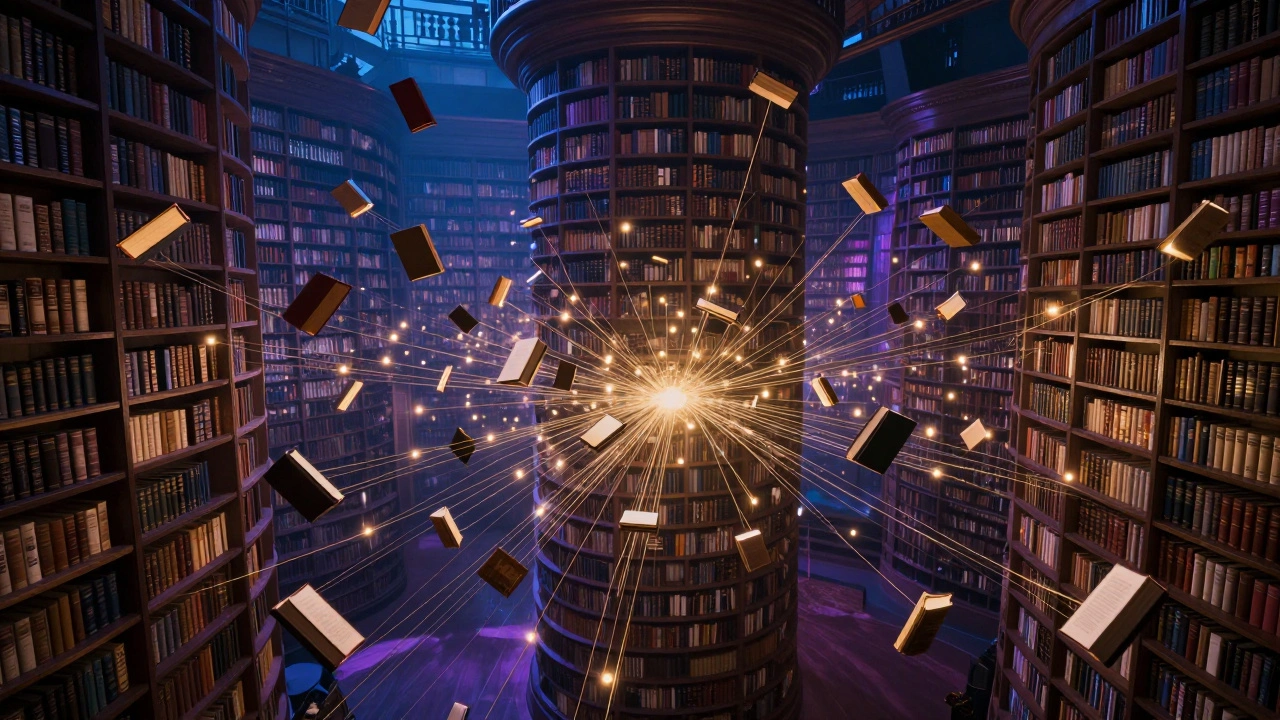

When you run these models on Wikipedia, you start seeing things that are invisible to the naked eye. For example, a researcher might find a cluster of articles that all mention "carbon capture," "vertical farming," and "lab-grown meat." On Wikipedia, these might be in different categories (Agriculture, Environmental Science, Biotechnology). But the topic model groups them together because they all share a semantic core: "Future Sustainability Technology."

This is a goldmine for academic research. It allows you to map the evolution of a field. If you analyze Wikipedia dumps from 2010 versus 2026, you can see exactly when "Artificial Intelligence" shifted from a niche computer science topic to a dominant cultural theme that overlaps with ethics, law, and art.

Another fascinating use is finding "orphaned" content. Sometimes, the model identifies a strong cluster of documents that has no central "Hub" page. This tells you exactly where Wikipedia needs a new summary article to tie those disparate pages together, effectively guiding the community toward creating more structured knowledge.

Common Pitfalls to Avoid

Topic modeling isn't a magic button. If you just plug in the data and hit "run," you'll likely get garbage. The most common mistake is ignoring the Coherence Score. Coherence is a metric that tells you if the words in a topic actually make sense together. If your coherence score is low, your "topics" are just random collections of words, and you need to adjust your preprocessing or the number of topics (K).
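As a rough illustration of what a coherence score measures, the sketch below computes a UMass-style coherence for one topic: it rewards topic words that appear together in the same documents. The function name, the toy corpus, and the two word lists are assumptions for demonstration, and the sketch assumes every topic word occurs in at least one document. Libraries such as Gensim ship tested coherence implementations that you would normally use instead.

```python
import math

def umass_coherence(topic_words, docs_tokens):
    """UMass-style coherence for one topic; higher means more coherent.

    topic_words should be ordered from most to least probable.
    """
    doc_sets = [set(tokens) for tokens in docs_tokens]

    def doc_count(*words):
        # Number of documents that contain all of the given words.
        return sum(all(w in s for w in words) for s in doc_sets)

    score = 0.0
    for m in range(1, len(topic_words)):
        for l in range(m):
            score += math.log(
                (doc_count(topic_words[m], topic_words[l]) + 1)
                / doc_count(topic_words[l])
            )
    return score

# Made-up tokenized corpus.
corpus = [
    ["neuron", "weight", "algorithm"],
    ["neuron", "weight", "layer"],
    ["qubit", "entanglement", "algorithm"],
    ["qubit", "entanglement", "gate"],
]
coherent = ["neuron", "weight", "layer"]
scattered = ["neuron", "qubit", "gate"]
print(umass_coherence(coherent, corpus))
print(umass_coherence(scattered, corpus))
```

The first word list scores higher because its words share documents, while "neuron" never appears alongside "qubit" or "gate."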

Another trap is the "Over-clustering" problem. If you set K too high, the model will split a single cohesive topic into several tiny, confusing ones. For instance, instead of one topic for "European History," you might get separate, overlapping topics for "18th Century France," "Napoleonic Wars," and "Viennese Diplomacy." The key is to find the "elbow point" where adding more topics no longer significantly improves the model's fit.

The Future of Semantic Discovery

We are moving away from simple word counting toward context-aware methods from Natural Language Processing (NLP), the branch of AI concerned with how computers process human language. Modern tools like BERTopic use Transformers, the architecture behind GPT, to understand that "bank" in a financial context is different from "bank" in a river context.

When you apply these to Wikipedia, the clusters become incredibly precise. You aren't just finding words that appear together; you're finding concepts that *mean* the same thing. This transforms Wikipedia from a collection of pages into a living, multi-dimensional map of human knowledge.

Do I need to be a coder to do topic modeling on Wikipedia?

You don't need to be a software engineer, but you do need a basic understanding of Python and a few libraries like Gensim or Scikit-learn. There are also some no-code tools, but for the scale of Wikipedia, a script is usually necessary to handle the massive data dumps.

How do I know if the number of topics (K) is correct?

The best way is to use a coherence plot. You run the model for various values of K (e.g., 10, 20, 50, 100) and plot the coherence score. The point where the score starts to plateau or drop is usually your optimal number of topics.
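One simple way to turn that plot into a rule is to stop at the first K where the next step adds almost nothing. The sketch below is only one possible heuristic: the function name `pick_k`, the `min_gain` threshold, and the scores are all made up to show the mechanics. Real scores would come from training and scoring one model per K.

```python
def pick_k(coherence_by_k, min_gain=0.01):
    """Return the first K after which coherence improves by less than min_gain."""
    ks = sorted(coherence_by_k)
    for current, nxt in zip(ks, ks[1:]):
        if coherence_by_k[nxt] - coherence_by_k[current] < min_gain:
            return current
    # Coherence was still climbing at the largest K that was tried.
    return ks[-1]

# Made-up scores purely for illustration, not real measurements.
example_scores = {10: 0.38, 20: 0.45, 50: 0.52, 100: 0.525, 200: 0.49}
print(pick_k(example_scores))
```

If the function returns the largest K you tried, the curve has not flattened yet and it is worth testing larger values.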

Why is preprocessing so important?

Because topic models are based on frequency. If you leave in words like "the," "and," or "is," the model will identify these as the most important "topics" simply because they appear most often, drowning out the actual meaningful content.

Is LDA better than BERTopic?

It depends. LDA is faster and works well on very large datasets where you just want to find general themes. BERTopic often produces more coherent topics because its embeddings capture context and semantics, but it requires significantly more computing power (ideally GPUs).

Can I use this to find fake information on Wikipedia?

Not directly, but it helps. If a group of articles starts clustering around a topic that has no basis in established literature or other reliable sources, it can act as a red flag for editors to investigate potential coordinated misinformation campaigns.

What to Try Next

If you have successfully found clusters in Wikipedia, the next logical step is Sentiment Analysis. Once you know *what* people are writing about, you can analyze *how* they feel about it. Are the articles in the "Climate Change" cluster becoming more urgent or more pessimistic over time? Combining topic modeling with sentiment tracking gives you a full picture of the cultural shift in human knowledge.

Alternatively, try Network Analysis. Use the links between the articles in your discovered clusters to see which page acts as the "bridge" between two different topics. This is how you find the true influencers of information within the encyclopedia.
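A first pass at spotting bridge pages can be as simple as counting how many other clusters each page links into. In the sketch below, the page titles, cluster labels, and links are hypothetical, as is the helper name `bridge_scores`. A fuller analysis would likely use a graph library such as NetworkX and a measure like betweenness centrality.

```python
from collections import defaultdict

def bridge_scores(links, cluster_of):
    """Count, for each page, how many distinct other clusters it connects to."""
    reached = defaultdict(set)
    for source, target in links:
        if source in cluster_of and target in cluster_of:
            if cluster_of[source] != cluster_of[target]:
                # A cross-cluster link counts for both endpoints.
                reached[source].add(cluster_of[target])
                reached[target].add(cluster_of[source])
    return {page: len(clusters) for page, clusters in reached.items()}

# Hypothetical cluster assignments from a topic model.
cluster_of = {
    "Carbon capture": "climate",
    "Vertical farming": "agriculture",
    "Crop rotation": "agriculture",
    "Lab-grown meat": "food",
}
# Hypothetical links between those pages.
links = [
    ("Vertical farming", "Carbon capture"),
    ("Vertical farming", "Lab-grown meat"),
    ("Vertical farming", "Crop rotation"),
]
scores = bridge_scores(links, cluster_of)
print(max(scores, key=scores.get), scores)
```

Pages that never link outside their own cluster do not appear in the result, so the highest-scoring pages are your bridge candidates.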