Quick Takeaways on Trusting the Wiki

- The Strength of Numbers: High-traffic pages are usually more accurate because thousands of eyes are watching for errors.

- Verification is King: The site isn't a primary source; it's a map leading you to reliable citations.

- Bias is Real: Because the editor base is skewed (mostly male, Western, English-speaking), some topics have a natural tilt.

- The "Edit War" Cycle: Conflict over "truth" often leads to a more balanced, neutral point of view over time.

The Engine Under the Hood: How it Actually Works

To understand if Wikipedia is reliable, you have to stop thinking of it as a book and start thinking of it as a process. Wikipedia is a multilingual online encyclopedia written collaboratively by volunteers. Unlike a traditional Britannica volume, where a small group of experts decides what is true, the Wiki relies on a concept called consensus.

When a user adds a claim, it isn't automatically accepted as "true." It stays only if it's backed by a reliable source; this is the core of the Verifiability policy. If you write "The sky is neon green" and can't point to a peer-reviewed journal or a reputable news outlet saying so, an experienced editor will tag it "citation needed" or delete it, often within minutes. This creates a self-correcting loop: the most popular pages are the most scrutinized and, therefore, usually the most reliable.

The Great Vandalism Panic

We've all seen the jokes about people changing a celebrity's death date or adding insults to a politician's bio. This is known as vandalism. But here is the secret: the sheer number of people watching the site keeps this noise small, because most vandalism is spotted and reverted quickly. The Wikimedia Foundation (the non-profit that runs the site's infrastructure) and the volunteer community have built a variety of tools to stop it.

They use bots (automated scripts that scan for common swear words or massive deletions) and a system of user permissions. If you're a brand-new account or an anonymous IP address, you can't edit "protected" pages, like the one for the current US President. These high-stakes pages require an established editing history; on English Wikipedia, the widely used extended-confirmed protection level requires an account at least 30 days old with at least 500 edits. This layered security means that while a prank might survive for ten minutes, it rarely survives the hour.
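To make the bot idea concrete, here is a minimal rule-based sketch covering the two signals mentioned above: newly added offensive words and large deletions. It is a toy under stated assumptions, not how Wikipedia's real anti-vandalism bots work; the word list `BLOCKLIST`, the 50% cutoff `MAX_DELETION_RATIO`, and the function `flag_edit` are all hypothetical.

```python
import re

# Hypothetical word list; a real filter would use a much larger, curated list.
BLOCKLIST = {"idiot", "stupid", "loser"}

# Assumed threshold: flag edits that remove more than half of the page.
MAX_DELETION_RATIO = 0.5


def flag_edit(old_text: str, new_text: str) -> list[str]:
    """Return the reasons (if any) this toy filter would flag an edit."""
    reasons = []

    # Rule 1: blocklisted words that appear in the new version but not the old one.
    old_words = set(re.findall(r"[a-z']+", old_text.lower()))
    new_words = set(re.findall(r"[a-z']+", new_text.lower()))
    added_bad = (new_words - old_words) & BLOCKLIST
    if added_bad:
        reasons.append("added blocklisted words: " + ", ".join(sorted(added_bad)))

    # Rule 2: a massive deletion relative to the previous version.
    if old_text:
        ratio = (len(old_text) - len(new_text)) / len(old_text)
        if ratio > MAX_DELETION_RATIO:
            reasons.append(f"removed {ratio:.0%} of the page")

    return reasons


if __name__ == "__main__":
    page = "Paris is the capital and largest city of France."
    print(flag_edit(page, page + " Paris is stupid."))  # -> ['added blocklisted words: stupid']
    print(flag_edit(page, "lol"))  # -> ['removed 94% of the page']
```

Rule-based checks like these are cheap but crude: they miss subtle vandalism and can flag legitimate edits (for example, a quotation that contains a blocklisted word), which is one reason human reviewers stay in the loop.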

| Feature | Wikipedia | Traditional (e.g., Britannica) |
|---|---|---|
| Update Speed | Near instantaneous | Years (Print) / Months (Digital) |
| Author Identity | Anonymous/Pseudonymous Volunteers | Named Subject Matter Experts |
| Verification | Crowdsourced consensus | Editorial board review |
| Cost | Free (Donation-based) | Subscription/Purchase |

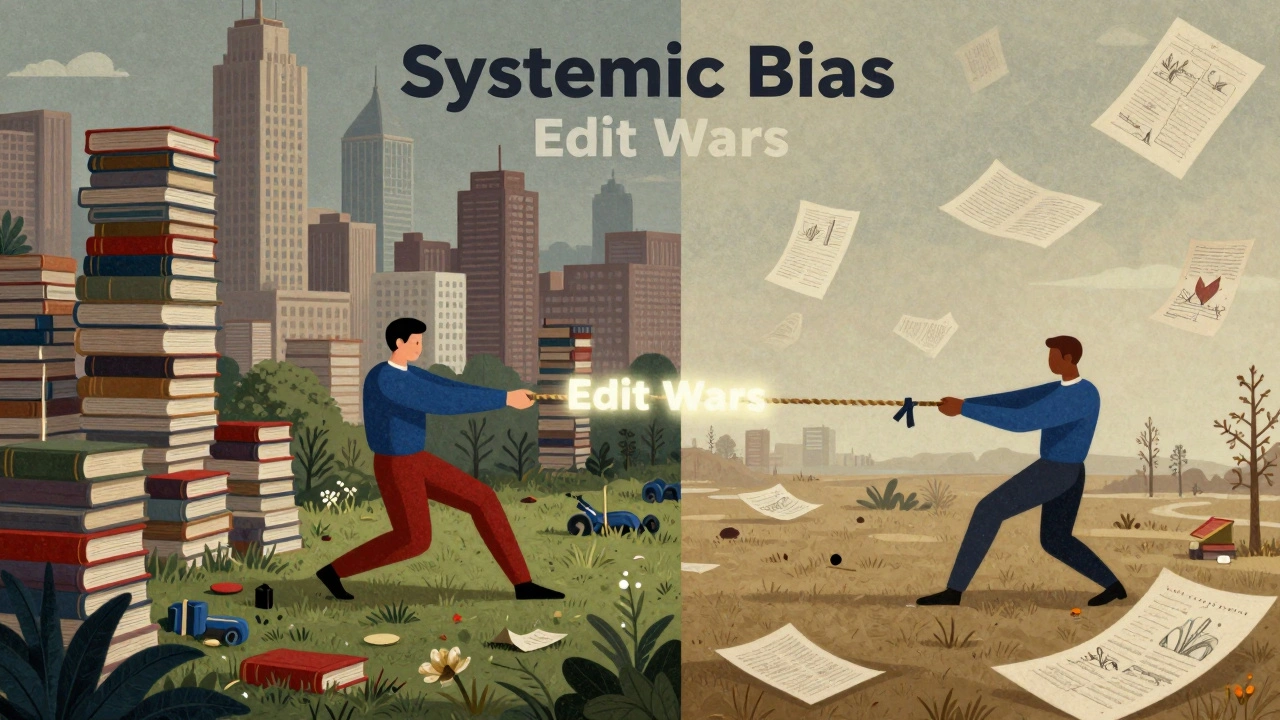

The Dark Side: Systemic Bias and Governance

While blatant lies are easy to catch, systemic bias is much harder to fight. This isn't about one person lying; it's about who is doing the writing. If 80% of the editors are from North America and Europe, the "neutral" tone of the site will naturally reflect a Western perspective. This leads to a phenomenon where a minor historical event in the UK might have a 5,000-word page, while a massive cultural shift in Southeast Asia is barely a paragraph.

This creates governance conflicts. When two groups of editors disagree on how to frame a controversial topic (say, the Israeli-Palestinian conflict or the ethics of climate change), they can end up in an edit war, where the two sides repeatedly overwrite each other's changes. To resolve this, the community uses "Talk pages" to debate the wording. A Talk page is basically a digital town hall where editors may argue over a single adjective for three weeks until they reach a compromise. It's tedious, but it's the main way the community enforces the Neutral Point of View (NPOV) standard.

Why Your Professor Still Says "Don't Use Wikipedia"

If the site is so self-correcting, why is it still banned in many classrooms? The problem isn't necessarily the content, but the nature of the source. In academia, you are expected to cite the original material: the study, the legal document, or the eyewitness account. Wikipedia is a tertiary source; it's a summary of a summary.

Using Wikipedia as your final destination is a mistake because you're trusting the editor's interpretation of the source. However, using it as a discovery tool is a pro move. The real gold is in the "References" section at the bottom. By clicking those links, you move from the crowdsourced summary to the actual evidence. The most effective way to use the site is to let it guide you to the experts, then read the experts yourself.

The Trust Metric: How to Spot a Reliable Page

Not all pages are created equal. If you're unsure whether to trust a specific entry, look for these red flags and green lights:

- The Talk Page: Click the "Talk" tab. If it's a ghost town, the page isn't being scrutinized. If there are 50 pages of heated debate, it means the community is actively refining the truth.

- Citation Density: A reliable page looks like a forest of little blue numbers. If a page makes bold claims without any superscript numbers, be skeptical.

- The "Warning" Banner: Look for banners at the top that say "This article needs additional citations" or "The neutrality of this article is disputed." These are honest admissions of imperfection.

- Version History: Check the "View history" tab. A page that has been edited 10,000 times by 500 different people is generally more reliable than one edited twice by one person.
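The checklist can also be read as a rough scoring heuristic. The sketch below sorts a page's signals into green lights and red flags. The `PageStats` fields, the `assess` function, and every numeric cutoff (one citation per 100 words, 1,000 edits, 100 editors) are illustrative assumptions rather than Wikipedia policy; you would fill in the numbers by hand from the article, its Talk tab, and its View history tab.

```python
from dataclasses import dataclass


@dataclass
class PageStats:
    """Hypothetical hand-collected summary of the checklist signals."""
    talk_page_posts: int   # activity on the Talk page
    word_count: int        # length of the article body
    citation_count: int    # superscript reference markers
    warning_banners: int   # e.g. "This article needs additional citations"
    total_edits: int       # from "View history"
    distinct_editors: int  # from "View history"


def assess(page: PageStats) -> tuple[list[str], list[str]]:
    """Sort the checklist signals into (green lights, red flags).

    All thresholds are illustrative assumptions, not Wikipedia rules.
    """
    green, red = [], []

    # The Talk page: no discussion means nobody is scrutinizing the article.
    if page.talk_page_posts == 0:
        red.append("Talk page is a ghost town")
    else:
        green.append("Talk page shows active scrutiny")

    # Citation density: references per 100 words (assumed cutoff of 1).
    density = 100 * page.citation_count / max(page.word_count, 1)
    if density >= 1:
        green.append(f"{density:.1f} citations per 100 words")
    else:
        red.append(f"only {density:.1f} citations per 100 words")

    # Warning banners are the community's own admission of problems.
    if page.warning_banners:
        red.append(f"{page.warning_banners} warning banner(s) at the top")

    # Version history: many edits by many people (assumed cutoffs).
    if page.total_edits >= 1000 and page.distinct_editors >= 100:
        green.append("long, many-handed version history")
    elif page.distinct_editors <= 1:
        red.append("edited by a single person")

    return green, red
```

No single signal settles the question; a page carrying a disputed-neutrality banner can still be well cited, so treat the output as prompts for a closer look rather than a verdict.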

The Future of Truth in the Age of AI

With the rise of Large Language Models (LLMs) and AI-generated content, Wikipedia's role is changing. AI often "hallucinates," making up facts that sound confident. Because Wikipedia has a strict requirement for citations, it serves as a critical guardrail. Many AI models are actually trained on Wikipedia data because of its structured nature and consensus-driven accuracy.

The danger now is automated vandalism: AI bots designed to subtly tilt the narrative of a page over months, rather than crashing it in seconds. The fight for reliability is moving from "stopping the prankster" to "detecting the algorithm." This means the community will likely need more human oversight, not less, to ensure that crowdsourced knowledge remains a reflection of reality rather than of a programmed bias.

Can I use Wikipedia for a college paper?

Generally, no. Most professors forbid it because it is a tertiary source and can be changed by anyone. However, you should use it to find the original sources cited at the bottom of the page. Use Wikipedia to find the lead, then cite the original research or book mentioned in the references.

Who decides what is true on Wikipedia?

No single person decides. What the article says is determined by consensus among editors, guided by the Verifiability policy. If a claim is backed by reliable, independent sources, it is generally accepted. If editors disagree, they debate it on the Talk page until they agree on neutral wording.

What is an "Edit War"?

An edit war happens when two or more editors repeatedly change a piece of information back and forth because they disagree on the facts or the framing. When this happens, the page may be "locked" by an administrator to force the parties to resolve the conflict on the Talk page.

How does Wikipedia prevent fake news?

It combines automated bots that detect common vandalism patterns with human patrollers and administrators ("admins") who monitor recent changes. The most important defense is the citation requirement: if a claim cannot be verified by a reputable source, it is typically removed quickly.

Is Wikipedia biased?

Yes, it can be. Systemic bias occurs because the volunteer editor base is not a perfect cross-section of the global population. This means Western and English-speaking perspectives are often over-represented, while voices from the Global South may be under-represented.