Key Takeaways for Navigating Digital Trust

- Verification is shifting from trusting a source to trusting a traceable process.

- Transparency requires open-sourcing the logic behind how knowledge is curated.

- Accountability means having a clear path to correct errors and penalize intentional falsehoods.

- Hybrid models combining human expertise with AI auditing are the current gold standard.

The Death of the Single Source of Truth

For decades, we relied on a few giant pillars of knowledge. You had the encyclopedia, the nightly news, and maybe a few trusted textbooks. Today, knowledge is fragmented. It lives in decentralized wikis, social media threads, and AI chat interfaces. This shift has created a massive gap in how we handle trust. A trust framework is a set of standards, policies, and technical tools used to ensure that digital information is accurate, reliable, and traceable. When there is no single editor-in-chief for the internet, we need a system that verifies the data itself, not just the person posting it.

Think about how you use a map app. You don't necessarily trust the company, but you trust the aggregate data from millions of users. That's a form of distributed trust. However, applying that to complex knowledge, like medical advice or geopolitical history, is much harder. You can't simply 'crowdsource' the truth when a majority of people might be wrong about a scientific fact.

Verification: Moving Beyond the Blue Checkmark

We tried to solve trust with 'verification badges,' but those became status symbols you could buy. Real verification needs to be deeper. One of the most promising paths is Content Credentials, a technical standard that attaches metadata to digital content to show its origin and history of edits. This is essentially a digital nutrition label: if an image or a claim carries Content Credentials, you can see where it came from and whether it was altered by an AI tool.
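To make the "nutrition label" idea concrete, here is a minimal sketch of a provenance log that travels with a piece of content. It is hypothetical and greatly simplified: it is not the Content Credentials manifest format or any real SDK, real manifests are also cryptographically signed (omitted here), and the tool names `camera-app` and `generative-fill` are made up for illustration.

```python
import hashlib
from dataclasses import dataclass, field


def sha256_hex(data: bytes) -> str:
    """Hex SHA-256 digest used to identify each version of the content."""
    return hashlib.sha256(data).hexdigest()


@dataclass
class ProvenanceLog:
    """Hypothetical edit history attached to one piece of content.

    Real Content Credentials manifests are also cryptographically signed;
    this sketch omits signing to keep the idea visible.
    """
    steps: list = field(default_factory=list)

    def record(self, action: str, tool: str, content: bytes) -> None:
        # Store what was done, which tool did it, and the resulting content hash.
        self.steps.append({"action": action, "tool": tool,
                           "content_hash": sha256_hex(content)})

    def matches(self, content: bytes) -> bool:
        # The file in hand should be exactly the last recorded version.
        return bool(self.steps) and self.steps[-1]["content_hash"] == sha256_hex(content)

    def touched_by(self, tools: set) -> bool:
        # Did any step use one of the given tools (for example, AI editors)?
        return any(step["tool"] in tools for step in self.steps)


original = b"raw photo bytes"
edited = b"photo bytes after generative fill"

log = ProvenanceLog()
log.record("captured", "camera-app", original)
log.record("edited", "generative-fill", edited)

print(log.matches(edited))                   # True
print(log.matches(original))                 # False
print(log.touched_by({"generative-fill"}))   # True
```

The design choice worth noting is that each step stores a hash of the content rather than the content itself, so the label stays small while still pinning down exactly which version it describes.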

Another heavy hitter in this space is Cryptographic Proofs, which are mathematical methods used to verify that a piece of data has not been tampered with since its creation. By using these, a knowledge base can prove that a quoted passage matches a specific, signed government document without the user having to manually hunt for the original PDF. It turns trust into a math problem rather than a gut feeling.
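The simplest building block behind these proofs is a cryptographic hash. The sketch below assumes the publisher posts a SHA-256 digest of its document through a channel the reader already trusts; the document text is placeholder content. Note that a bare hash only proves the bytes are unchanged, not who published them; signatures add that second property.

```python
import hashlib


def fingerprint(document: bytes) -> str:
    """Return a SHA-256 digest: a short, fixed-length fingerprint of the bytes."""
    return hashlib.sha256(document).hexdigest()


def is_unaltered(document: bytes, published_digest: str) -> bool:
    """True if the document hashes to the digest the publisher announced."""
    return fingerprint(document) == published_digest


original = b"Placeholder report: figure in table 2 is 4.1"
published = fingerprint(original)  # hypothetically posted by the publisher

tampered = b"Placeholder report: figure in table 2 is 4.7"
print(is_unaltered(original, published))  # True
print(is_unaltered(tampered, published))  # False
```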

| Method | How it Works | Strength | Weakness |
|---|---|---|---|
| Reputation Systems | Users rate the accuracy of contributors. | Fast, community-driven. | Prone to 'echo chambers.' |
| Source Attribution | Linking directly to primary documents. | High transparency. | Requires user effort to verify. |
| Algorithmic Auditing | AI checks other AI for hallucinations. | Scalable and instant. | Can miss subtle nuances. |
| Blockchain Notarization | Timestamping data on a ledger. | Immutable history. | High energy/complexity. |
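The "immutable history" in the last row comes from chaining: each entry records the hash of the one before it. The sketch below is a hypothetical, single-machine hash chain that shows only that tamper-evidence property; real blockchains add replication and a consensus mechanism among many parties, which is where most of the energy and complexity cost comes from.

```python
import hashlib
import json
import time
from typing import Optional


def _hash_entry(entry: dict) -> str:
    # Canonical JSON (sorted keys) so the same entry always hashes the same way.
    return hashlib.sha256(json.dumps(entry, sort_keys=True).encode()).hexdigest()


class NotaryLedger:
    """Append-only, hash-chained log: a simplified stand-in for a blockchain."""

    GENESIS = "0" * 64

    def __init__(self):
        self.blocks = []

    def notarize(self, data_digest: str, timestamp: Optional[float] = None) -> dict:
        entry = {
            "index": len(self.blocks),
            "timestamp": time.time() if timestamp is None else timestamp,
            "data_digest": data_digest,
            "prev_hash": self.blocks[-1]["hash"] if self.blocks else self.GENESIS,
        }
        entry["hash"] = _hash_entry(entry)  # hash covers every field above
        self.blocks.append(entry)
        return entry

    def verify(self) -> bool:
        # Recompute every hash and check each link back to the previous block.
        prev = self.GENESIS
        for block in self.blocks:
            body = {k: v for k, v in block.items() if k != "hash"}
            if block["prev_hash"] != prev or _hash_entry(body) != block["hash"]:
                return False
            prev = block["hash"]
        return True


ledger = NotaryLedger()
ledger.notarize(hashlib.sha256(b"claim A").hexdigest(), timestamp=1.0)
ledger.notarize(hashlib.sha256(b"claim B").hexdigest(), timestamp=2.0)
print(ledger.verify())  # True

# A quiet rewrite of an old record breaks the chain.
ledger.blocks[0]["data_digest"] = hashlib.sha256(b"edited claim A").hexdigest()
print(ledger.verify())  # False
```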

Transparency and the 'Black Box' Problem

Transparency isn't just about showing your sources; it's about showing your work. Most AI models are 'black boxes.' They give you an answer, but they can't tell you *why* they chose that specific phrasing or which piece of data tipped the scale. To fix this, we are seeing a move toward Retrieval-Augmented Generation, often called RAG, which is a technique that forces an AI to retrieve specific, cited documents before generating a response. Instead of the AI guessing based on its training, it acts like a librarian who finds three books and summarizes them for you.
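The "librarian" step can be sketched without any AI model at all. In this hypothetical example, retrieval is crude keyword overlap (most real RAG systems use vector search over embeddings), and the generation step is replaced by simply quoting the retrieved passages with citation markers; the corpus and document IDs are invented for illustration.

```python
import re

# Hypothetical stop-word list so common words don't count as matches.
STOPWORDS = {"a", "an", "the", "of", "in", "is", "was", "when", "what", "for"}


def tokenize(text: str) -> set:
    return {w for w in re.findall(r"[a-z0-9]+", text.lower()) if w not in STOPWORDS}


def retrieve(query: str, corpus: dict, k: int = 3) -> list:
    """Rank documents by shared query terms and return the top-k document IDs."""
    q = tokenize(query)
    scored = [(len(q & tokenize(text)), doc_id) for doc_id, text in corpus.items()]
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: (-pair[0], pair[1]))
    return [doc_id for _, doc_id in scored[:k]]


def answer_with_citations(query: str, corpus: dict, k: int = 3) -> str:
    doc_ids = retrieve(query, corpus, k)
    if not doc_ids:
        return "No supporting documents found."
    # Placeholder for generation: a real system would hand these passages to a
    # language model and require it to cite them; here we just quote them.
    return " ".join(f"{corpus[d]} [{d}]" for d in doc_ids)


corpus = {
    "doc-1": "The Peace of Westphalia was signed in 1648.",
    "doc-2": "Mount Everest is the highest mountain above sea level.",
    "doc-3": "Westphalia is a region in northwestern Germany.",
}
question = "When was the Peace of Westphalia signed?"
print(answer_with_citations(question, corpus, k=2))
```

Because every sentence in the output carries a document ID, a reader can check the claim against the source instead of trusting the interface.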

But transparency also applies to humans. When a wiki page is edited, we can see the history. But we rarely see the *intent*. Why was this change made? Was it a correction based on a new study, or was it a subtle attempt to shift a political narrative? True transparency requires a link between the edit and the evidence. If you change a date in a biography, the system should prompt you for a source before the edit even goes live.
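A source-before-publish rule is easy to express as a gate in the edit pipeline. The sketch below is hypothetical: the `Edit` fields, the set of "factual" fields, and the status strings are illustrative assumptions, not the behavior of any existing wiki software. It rejects factual changes that lack a source and holds any edit that lacks a stated reason, which captures the link between edit, evidence, and intent described above.

```python
from dataclasses import dataclass
from typing import Optional

# Hypothetical set of fields where a change must be backed by evidence.
FACTUAL_FIELDS = {"birth_date", "death_date", "population"}


@dataclass
class Edit:
    page: str
    field_name: str
    old_value: str
    new_value: str
    source_url: Optional[str] = None
    rationale: Optional[str] = None  # the "why" behind the change


def review_edit(edit: Edit) -> str:
    """Decide whether an edit can go live under a source-first policy."""
    if edit.field_name in FACTUAL_FIELDS and not edit.source_url:
        return "rejected: factual change needs a source"
    if not edit.rationale:
        return "held: please explain why this change was made"
    return "published"


e = Edit("Example biography", "birth_date", "1901-05-02", "1902-05-02")
print(review_edit(e))  # rejected: factual change needs a source
```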

Accountability: Who Pays for the Lie?

Accountability is the hardest piece of the puzzle because the internet is largely anonymous. If an AI provides a hallucinated medical dose that harms someone, who is responsible? The developer? The user who prompted it? The data source that provided the wrong info? We are currently seeing the rise of Digital Accountability Frameworks, which are legal and technical structures that assign liability for misinformation based on the role of the entity in the information chain.

A practical example is the 'Knowledge Bounty' system. Imagine a platform where experts are paid to debunk falsehoods. If a piece of information is proven wrong by a verified expert, the original poster (or the AI company) loses staked reputation or currency. This creates a financial or social cost for being wrong, which is one of the most direct ways to curb the flood of low-quality content. When there is no cost to lying, lying becomes the most efficient way to get attention.

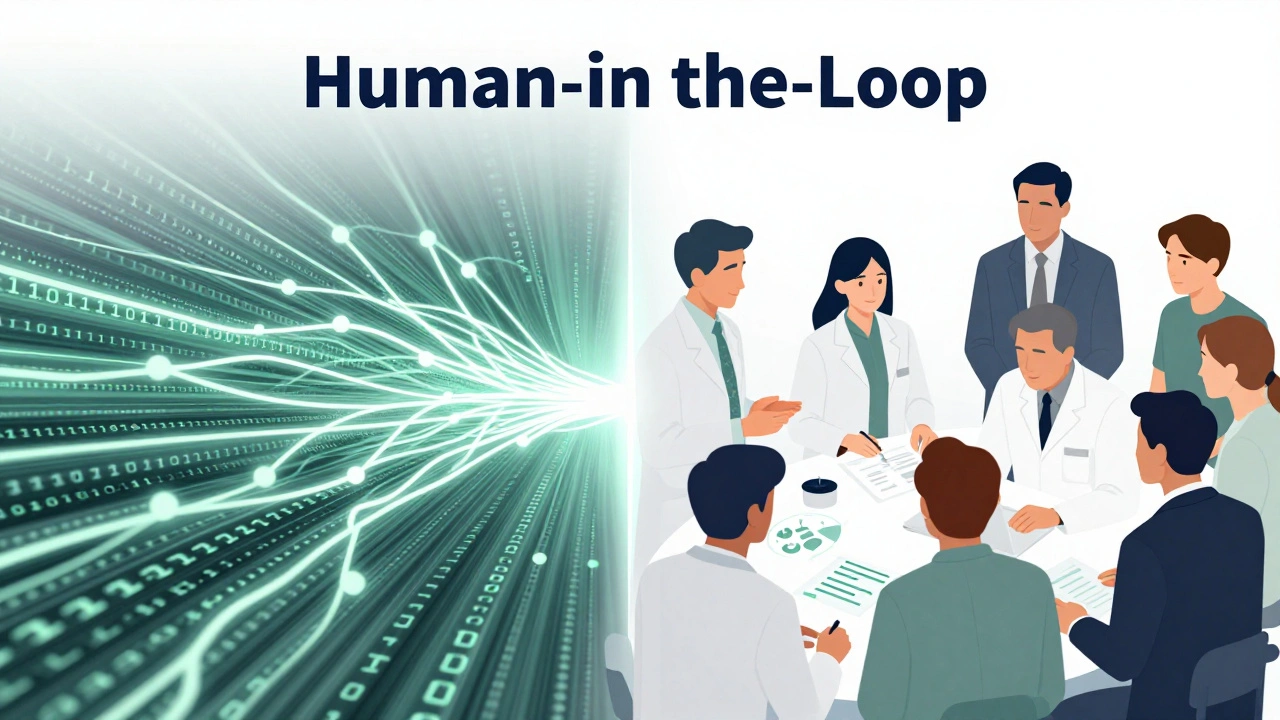

The Human Element in a Machine World

We can't automate truth. Truth is often messy, contextual, and subject to interpretation. This is where Human-in-the-Loop (HITL) systems come in. These are processes where AI handles the bulk of data processing, but humans provide the final layer of verification and ethical judgment. For instance, an AI might scan 10,000 medical papers to find a trend, but a board of doctors must sign off on the conclusion before it's published as 'knowledge.'
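The sign-off rule in that example can be sketched as a simple approval gate. This is a hypothetical illustration: the reviewer names, the model label, and the two-approval quorum are assumptions chosen for the example. The AI output starts as a draft, and only members of the review board can move it toward publication.

```python
from dataclasses import dataclass, field


@dataclass
class DraftConclusion:
    text: str
    generated_by: str  # e.g. the model that scanned the papers
    approvals: set = field(default_factory=set)
    status: str = "pending_review"


class ReviewBoard:
    """Human sign-off layer: nothing is published without a quorum of experts."""

    def __init__(self, reviewers: set, required: int):
        self.reviewers = reviewers
        self.required = required

    def approve(self, draft: DraftConclusion, reviewer: str) -> None:
        if reviewer not in self.reviewers:
            raise PermissionError(f"{reviewer} is not on the review board")
        draft.approvals.add(reviewer)
        if len(draft.approvals) >= self.required:
            draft.status = "published"


board = ReviewBoard({"dr_a", "dr_b", "dr_c"}, required=2)
draft = DraftConclusion("Trend X appears across the scanned papers", "model-v1")
board.approve(draft, "dr_a")
print(draft.status)  # pending_review
board.approve(draft, "dr_b")
print(draft.status)  # published
```

Storing approvals as a set means one reviewer clicking "approve" twice still counts once toward the quorum.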

The goal isn't to replace humans with a perfect algorithm, but to use algorithms to clear the noise so humans can focus on the nuance. If an AI can filter out 99% of the obvious lies, a human expert can spend their time debating the remaining 1% where the truth is actually contested. That's where real knowledge grows: not in the agreement, but in the argued-out correction.
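That division of labor amounts to a triage step. In this sketch, each claim is assumed to arrive with a score from some hypothetical classifier estimating how likely it is to be true, and the 0.05 cutoff is an arbitrary example value. Obvious falsehoods are filtered out, and the rest go to humans with the most contested claims (scores nearest 0.5) at the front of the queue.

```python
def triage(scored_claims, obvious_false_below=0.05):
    """Split (claim, score) pairs into auto-filtered claims and a human queue."""
    filtered = [claim for claim, p in scored_claims if p < obvious_false_below]
    queue = [(claim, p) for claim, p in scored_claims if p >= obvious_false_below]
    # Most contested first: scores nearest 0.5 are where the model is least sure.
    queue.sort(key=lambda pair: abs(pair[1] - 0.5))
    return filtered, [claim for claim, _ in queue]


claims = [("claim A", 0.01), ("claim B", 0.52), ("claim C", 0.90), ("claim D", 0.02)]
filtered, for_humans = triage(claims)
print(filtered)    # ['claim A', 'claim D']
print(for_humans)  # ['claim B', 'claim C']
```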

Practical Steps for Personal Knowledge Verification

While the world builds these big frameworks, you can use a few rules of thumb to protect yourself from misinformation. First, practice 'lateral reading.' Instead of staying on one page to see if it looks professional, open five new tabs and see what other independent sources say about that same claim. If only one site is reporting a 'miracle cure,' it's probably a scam.
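The "only one site" test can be approximated by counting distinct domains among the pages reporting a claim. This is a rough, hypothetical heuristic with example URLs: different domains are not always independent (syndicated or copied stories share one origin), and subdomains of the same outlet count separately here.

```python
from urllib.parse import urlparse


def independent_sources(urls) -> int:
    """Count distinct domains reporting a claim (a rough proxy for independence)."""
    domains = set()
    for url in urls:
        host = urlparse(url).hostname or ""
        if host.startswith("www."):
            host = host[len("www."):]
        if host:
            domains.add(host)
    return len(domains)


def lateral_check(urls, minimum: int = 2) -> str:
    if independent_sources(urls) >= minimum:
        return "corroborated"
    return "single-source: treat with suspicion"


reports = ["https://www.miracle-cure.example/a", "https://miracle-cure.example/b"]
print(lateral_check(reports))  # single-source: treat with suspicion
```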

Second, check for the 'citation trail.' A link to another blog post is not a source; it's just another person's opinion. A link to a peer-reviewed study or a primary legal document is a source. If you can't find the original document that started the claim, treat the information as a rumor, not a fact.
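Following a citation trail is a walk through a link graph that stops at a primary source, a dead end, or a loop. The sketch below simplifies by assuming each page cites exactly one other page; the page names, the `kinds` labels, and the set of "primary" kinds are hypothetical.

```python
# Hypothetical labels for what counts as a primary source.
PRIMARY_KINDS = {"peer_reviewed_study", "legal_document", "official_dataset"}


def trace_citation(start, links, kinds, max_hops=10):
    """Follow 'cites' links until a primary source, a dead end, or a loop.

    links: page -> the page it cites; kinds: page -> source type.
    Returns (verdict, page where the trail ended).
    """
    seen = set()
    current = start
    for _ in range(max_hops):
        if kinds.get(current) in PRIMARY_KINDS:
            return "fact-backed", current
        if current in seen or current not in links:
            return "rumor", current  # loop or dead end: no original found
        seen.add(current)
        current = links[current]
    return "rumor", current


links = {"blog-post": "news-article", "news-article": "study-123"}
kinds = {"blog-post": "blog", "news-article": "news", "study-123": "peer_reviewed_study"}
print(trace_citation("blog-post", links, kinds))  # ('fact-backed', 'study-123')
```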

Can we ever truly trust AI-generated knowledge?

Trust shouldn't be binary. You shouldn't 'trust' or 'distrust' AI; instead, you should trust the verification process surrounding it. When an AI uses RAG (Retrieval-Augmented Generation) to provide direct citations to reputable sources, the trust moves from the AI's 'brain' to the evidence it provides. Trust the evidence, not the interface.

What is the difference between transparency and verification?

Transparency is about seeing how the clock works (showing the data, the algorithms, and the edits). Verification is about proving the clock is telling the right time (comparing the data against a known truth or a primary source). You can have transparency without verification. For example, an AI can be transparent about why it lied, but that doesn't make the lie true.

How do cryptographic proofs actually help with trust?

They create a tamper-evident digital fingerprint. If a government agency publishes a report and signs it with a cryptographic key, any single character change in that document will break the signature. As long as the signing key hasn't been stolen and you check against the agency's genuine public key, this lets you know with very high confidence that the document you are reading is exactly what was published, preventing subtle 'stealth edits' that change a fact's meaning.
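The "one character breaks it" behavior can be demonstrated with Python's standard library. One substitution to state plainly: agencies would use public-key signatures (such as Ed25519 or RSA), where anyone can verify with a public key but only the agency can sign. The standard library does not include those, so this sketch uses HMAC, where signer and verifier share one secret key. It shows the tamper-detection property only, not public verifiability. The key and report text are placeholders.

```python
import hashlib
import hmac


def sign(document: bytes, key: bytes) -> str:
    # HMAC-SHA256 tag: a keyed fingerprint of the exact bytes.
    return hmac.new(key, document, hashlib.sha256).hexdigest()


def verify(document: bytes, key: bytes, signature: str) -> bool:
    # compare_digest avoids leaking information through timing differences.
    return hmac.compare_digest(sign(document, key), signature)


key = b"demo-key-not-for-real-use"
report = b"Section 4: the program was funded in 2021."
sig = sign(report, key)

stealth_edit = b"Section 4: the program was funded in 2022."
print(verify(report, key, sig))        # True
print(verify(stealth_edit, key, sig))  # False
```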

Will these frameworks make the internet more censored?

There is a risk that 'truth' becomes defined by whoever controls the verification framework. However, the goal of decentralized trust frameworks, like open-source protocols and blockchain notarization, is to remove the middleman. The idea is to move away from 'Trust us because we are the authority' to 'Trust this because the evidence is mathematically verifiable by anyone.'

What is a 'Knowledge Bounty' in a real-world scenario?

Imagine a platform like Wikipedia, but with a financial layer. If a user posts a claim that is later proven false by a verified expert (backed by primary evidence), the original poster's 'trust score' drops, and a portion of their staked collateral is paid to the expert who corrected it. This incentivizes accuracy over speed or sensationalism.
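The settlement rule described above can be sketched in a few lines. Everything here is hypothetical: the `Account` fields, the 50% slash fraction, and the 10-point trust penalty are example parameters, not taken from any real platform. The penalty only applies when the correction is backed by primary evidence.

```python
from dataclasses import dataclass


@dataclass
class Account:
    name: str
    trust_score: float
    stake: float
    balance: float = 0.0


def settle_bounty(poster: Account, expert: Account, proven_with_primary_evidence: bool,
                  slash_fraction: float = 0.5, trust_penalty: float = 10.0) -> float:
    """Move part of the poster's stake to the expert when a claim is debunked."""
    if not proven_with_primary_evidence:
        return 0.0  # no penalty without primary evidence
    payout = poster.stake * slash_fraction
    poster.stake -= payout
    poster.trust_score = max(0.0, poster.trust_score - trust_penalty)
    expert.balance += payout
    return payout


poster = Account("poster", trust_score=80.0, stake=100.0)
expert = Account("expert", trust_score=95.0, stake=0.0)
print(settle_bounty(poster, expert, proven_with_primary_evidence=True))  # 50.0
```

Requiring primary evidence before any stake moves is what keeps the bounty from rewarding whoever shouts "false" first.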

What's Next for Your Digital Diet

If you're feeling overwhelmed by the noise, start by auditing your sources. Are you getting your news from a curated feed that only tells you what you already believe? Try seeking out 'adversarial' information (reputable sources that disagree with your view) and apply the verification steps mentioned above. The future of knowledge isn't about finding a perfect, unbiased source; it's about developing the skill to triangulate the truth between multiple, imperfect sources.