Every minute, someone edits a Wikipedia page. Not a bot. Not a paid staffer. A volunteer: a real person, sitting at home, on a lunch break, or after work, trying to keep one of the world’s largest knowledge bases accurate, neutral, and free of spam. These volunteers aren’t just contributors. They’re moderators, enforcers, referees, and sometimes, the only thing standing between Wikipedia and chaos.

Who Are These Volunteers?

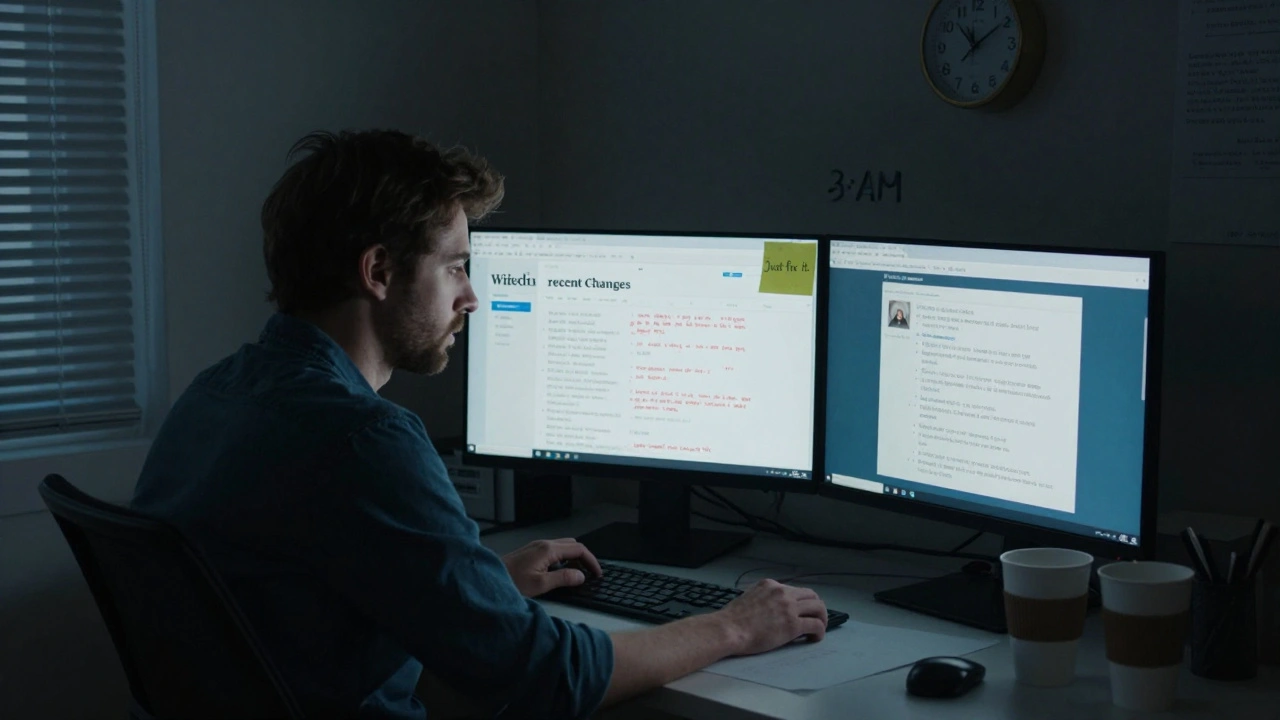

Wikipedia doesn’t have a corporate moderation team. It has about 70,000 active editors who make at least five edits per month. Among them, roughly 1,500 hold administrative tools: permissions to block users, delete pages, or protect articles from editing. These aren’t hired roles. Admins are elected by the community, often after years of quiet, consistent work. Many started as readers who noticed a typo and fixed it. Then they saw a vandalism edit. Then they started reverting it. Then they started patrolling recent changes. Then they got tired.

The average admin spends 8-12 hours a week on moderation tasks. That’s not a part-time job. That’s a second job. It’s unpaid, and for many it’s also unacknowledged and emotionally draining.

The Weight of Moderation

Imagine this: You wake up, open Wikipedia, and spend your morning undoing 40 vandalism edits. You block a user who’s been harassing editors for weeks. You spend an hour arguing in a talk page thread about whether a celebrity’s biography should mention a controversial relationship. You get a personal message from someone you blocked: "You’re a fascist. I hope you die." You report it. Nothing happens. The system doesn’t notify you when the same person creates a new account and starts again.

This isn’t hypothetical. It’s daily for hundreds of volunteers. A 2023 study by the Wikimedia Foundation found that 68% of active admins reported feeling "frequently overwhelmed" by their responsibilities. Nearly half said they had considered quitting in the past year. The workload isn’t just about volume. It’s about emotional labor. You’re policing behavior, mediating disputes, and absorbing hostility, all while being told you’re "just a volunteer."

Why Burnout Hits Hard

Burnout doesn’t come from working too many hours. It comes from working too many hours with no recognition, no support, and no exit strategy.

Wikipedia’s culture celebrates self-reliance. "Just fix it," they say. But what if you’re the one who’s been fixing it for five years? What if you’re the one who’s been the target of the same troll’s attacks? What if you’ve been the only one to notice a pattern of harassment and no one else steps in?

There’s no HR department. No mental health resources. No paid time off. No manager to talk to. Volunteers are expected to handle everything, from edit wars to death threats, using only a wiki interface and a community consensus process that often takes weeks to resolve a single issue.

And when they do step away? Often, they’re replaced by someone else who doesn’t know the history. The same mistakes repeat. The same conflicts flare up. The same people get burned out again.

What Support Exists, and What Doesn’t

Wikipedia does have some support structures. There’s the Administrator Intervention Against Vandalism (AIV) page. There’s the Arbitration Committee for serious disputes. There are user warning templates and automated tools like ClueBot NG that revert obvious vandalism. But these are tools, not systems.

Most volunteers rely on informal networks: a few trusted editors they message privately, a Discord channel, a subreddit. These aren’t official. They’re fragile. If someone leaves the community, those networks vanish.

There’s no centralized mentorship program. No onboarding for new admins. No training on conflict de-escalation. No psychological safety guidelines. Volunteers are expected to learn by doing, and by suffering.

Compare this to other platforms. Reddit has moderators with access to staff. YouTube has community guidelines teams. Even TikTok has a reporting flow with human review. Wikipedia? You’re on your own.

Who’s Leaving, and Why

The decline in active editors isn’t just about interest. It’s about exhaustion. Between 2007 and 2025, the number of active editors dropped by 40%. The median tenure of an admin has fallen from 5.2 years to 2.8 years. Why? Because the system doesn’t adapt.

Younger editors are more likely to leave. They’re more aware of mental health, more skeptical of unpaid labor, and less willing to tolerate toxicity. Many who start as students or researchers leave after their project ends. Others leave because they’ve been attacked, ignored, or gaslit by other editors who claim they’re "just following policy."

The most common reason for leaving? "I’m tired of being the adult in the room."

What Could Change

Wikipedia could survive without change. But it wouldn’t thrive. And it might not last.

Here’s what could help:

- Formalized mental health support: a confidential, volunteer-run counseling line staffed by trained moderators.

- Reduced workload per admin: limiting the number of tools any one person can hold, and rotating responsibilities.

- Automated escalation: when a user makes 10+ harmful edits in a day, trigger a human review, not just a bot flag.

- Recognition and documentation: publicly acknowledging contributions, not just with badges, but with stories, interviews, and archived impact reports.

- Community-trained mediators: volunteers who specialize in de-escalating disputes, not just enforcing rules.

None of these require money. They require will. And that’s the real bottleneck.
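
To make the automated escalation idea concrete, here is a minimal sketch of the rule from the list above: count flagged edits per user over a rolling day and hand the case to a human once the count reaches 10. Everything in it is an assumption for illustration. The `EscalationTracker` class, its method names, and the exact threshold and window are hypothetical, and nothing here reflects how Wikipedia's existing bots or tools actually work.

```python
from collections import defaultdict, deque
from datetime import datetime, timedelta

# Hypothetical values taken from the "10+ harmful edits in a day" suggestion.
HARMFUL_EDIT_THRESHOLD = 10
WINDOW = timedelta(days=1)


class EscalationTracker:
    """Counts flagged edits per user and says when a human should review.

    Assumes flagged edits are recorded in chronological order per user.
    """

    def __init__(self, threshold=HARMFUL_EDIT_THRESHOLD, window=WINDOW):
        self.threshold = threshold
        self.window = window
        # Maps a username to the timestamps of that user's flagged edits.
        self._flagged = defaultdict(deque)

    def record_flagged_edit(self, user, when):
        """Record one flagged edit; return True if the user should be escalated."""
        timestamps = self._flagged[user]
        timestamps.append(when)
        # Discard flags that have fallen out of the rolling window.
        while timestamps and when - timestamps[0] >= self.window:
            timestamps.popleft()
        return len(timestamps) >= self.threshold
```

A caller would feed this from whatever already flags edits, then route a `True` result to a human review queue instead of stopping at the bot flag. The rolling window matters: a user who made a handful of bad edits last week doesn't get escalated for one more today.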

The Bigger Picture

Wikipedia is one of the last great public knowledge projects built entirely on volunteer labor. It’s not perfect. It’s not always fair. But it’s real. And it’s fragile.

When you use Wikipedia, you’re not just consuming information. You’re relying on the emotional resilience of strangers who’ve given up hours of their lives to keep it clean. That’s not sustainable. Not without support.

The question isn’t whether Wikipedia can survive without better moderation systems. It’s whether we, as users, care enough to demand them.

Why doesn’t Wikipedia pay its moderators?

Wikipedia was built on the idea that knowledge should be free, and so should the labor of the people who maintain it. The Wikimedia Foundation, which runs Wikipedia, is a nonprofit that relies on donations. Paying moderators would require a massive shift in funding, governance, and philosophy. While some have proposed small stipends or grants for long-term contributors, the community remains divided. Many fear paid staff would undermine neutrality. Others argue that unpaid labor is unsustainable and unfair. Right now, the model depends on volunteers who can afford to give their time.

How do volunteers deal with harassment?

Volunteers can block users, report abuse to the Arbitration Committee, or use the "Administrator Intervention Against Vandalism" page for urgent cases. But there’s no direct line to human support. Most rely on informal networks: private messages, trusted editors, or third-party platforms like Discord. The system is reactive, not preventive. Many report that harassment is ignored if it doesn’t violate a specific policy, even if it’s clearly abusive. There’s no standardized process for emotional or psychological harm.

Is Wikipedia’s moderation system broken?

It’s not broken. It’s under-resourced. The system works well for simple edits and clear vandalism. But it struggles with nuanced disputes, coordinated harassment, and emotional burnout. The tools are outdated. The process is slow. The support is nonexistent. Many volunteers say the system was designed for a different era, when contributors were mostly academics with time to spare. Today, the same rules apply, but the people applying them are exhausted, unpaid, and overworked.

Can AI help reduce the workload?

Yes, but not enough. ClueBot NG automatically reverts obvious vandalism, and semi-automated tools like Huggle help human patrollers review flagged edits quickly. But AI can’t judge tone, context, or intent. It can’t tell the difference between a well-meaning edit that’s slightly biased and a malicious one. It can’t mediate a heated argument on a talk page. It can’t offer emotional support. AI helps with volume, but not with the human parts of moderation, where most of the burnout happens.

What can regular users do to help?

Be respectful. Don’t assume bad faith. If you see someone being harassed, say something. Even a simple "Thanks for your work" on their talk page helps. Report abuse properly. Don’t just revert edits. Explain why. Support new editors. And if you’re a long-term user, consider mentoring someone else. The health of Wikipedia doesn’t depend on a few overworked admins. It depends on everyone who uses it.