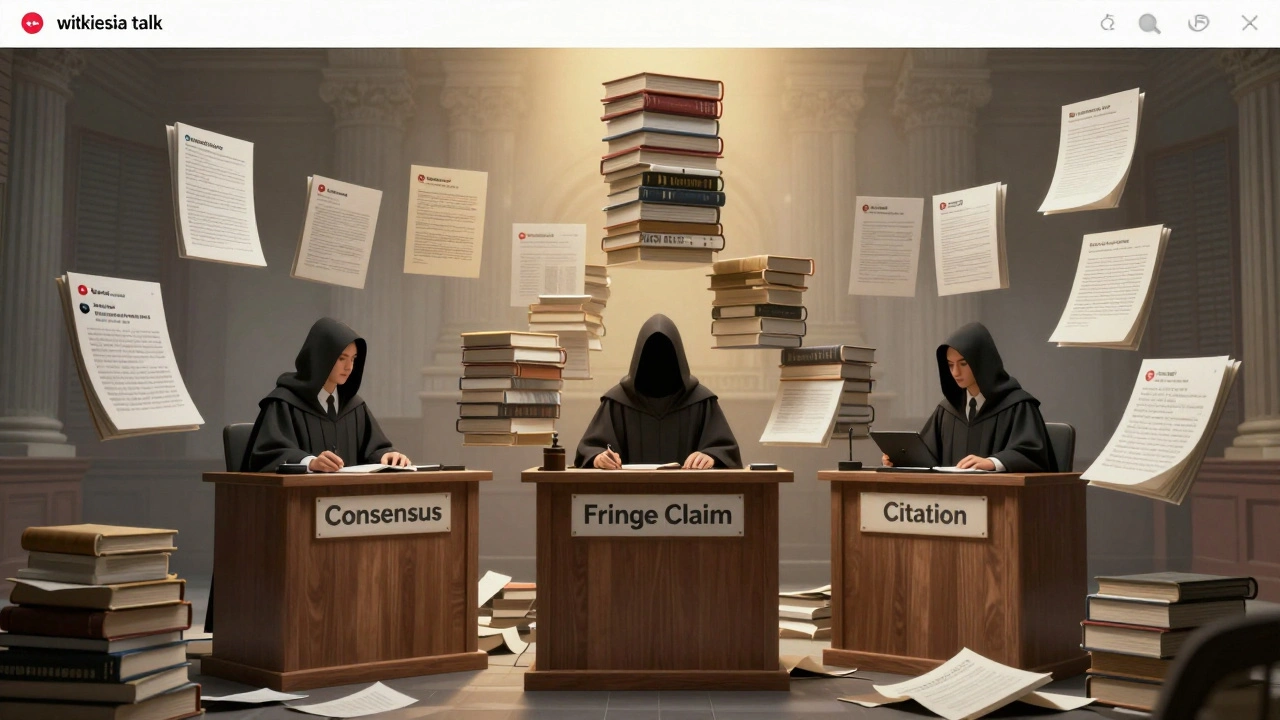

Wikipedia runs on rules. Not laws, not government mandates - just a handful of core policies that volunteers agree to follow. Two of them are sacred: NPOV (Neutral Point of View) and Verifiability. But what happens when they pull in opposite directions? When one rule says you must stay neutral, and the other says you can’t include anything unless it’s backed by solid sources? That’s where real editing battles begin.

What NPOV Really Means - And What It Doesn’t

NPOV isn’t about false balance. It’s not about giving equal space to every opinion. It’s about representing all significant viewpoints fairly, proportionally, and without editorial bias. If 95% of climate scientists agree that human activity is driving global warming, then NPOV doesn’t require you to give 50% of the article to climate skeptics. It requires you to present the scientific consensus accurately - and mention dissenting views only if they’re held by a meaningful minority with published evidence.

Here’s the catch: NPOV doesn’t ask whether a view is true. It asks how prominently reliable sources cover it, a principle Wikipedia calls due weight. A fringe theory backed by one blog post and a YouTube video doesn’t qualify. But if that same theory was published in a peer-reviewed journal, even if it’s widely criticized, NPOV says: mention it - but also mention the criticism.

Verifiability: The Gatekeeper of Facts

Verifiability is simpler: if readers can’t check it against a reliable published source, it doesn’t belong. Not because it’s false. Not because it’s controversial. But because Wikipedia isn’t a place for original research or personal belief. It’s a summary of what reliable sources have already said.

That means no hearsay. No anonymous forum posts. No unverified tweets. Even if something is true - if no reputable source has documented it, it gets deleted. This policy keeps Wikipedia from becoming a rumor mill. But it also creates blind spots. What about emerging topics? What about underreported communities? What about facts that exist but aren’t yet in academic journals?

Case Study 1: The Gender Identity Debate

In 2022, editors on the Wikipedia page for Gender identity clashed over whether to describe gender identity as a “social construct” or a “biological reality.” One group cited psychology journals and LGBTQ+ advocacy organizations. Another cited medical textbooks and religious institutions that defined gender strictly by sex assigned at birth.

NPOV said: include both. Verifiability said: only include what’s published in reliable sources. The conflict? Many of the sources backing the “biological reality” view were from non-peer-reviewed outlets, or had clear ideological agendas. Editors spent months reviewing each source. Some were rejected. Others were accepted with heavy context. The final version didn’t say gender is purely biological or purely social. It said: “Most academic sources define gender identity as distinct from biological sex, with social and cultural influences playing a significant role. Some religious and political groups hold alternative views, which are documented in [sources].”

It wasn’t pretty. It wasn’t quick. But it worked because editors stuck to the rules - not their opinions.

Case Study 2: The 2020 U.S. Election Claims

After the 2020 U.S. presidential election, Wikipedia became a battleground for claims of widespread voter fraud. Thousands of edits were made to the 2020 United States presidential election page, pushing unsubstantiated allegations. NPOV seemed to demand inclusion - “some people believe this.” But Verifiability said: show me the source.

Most claims came from partisan websites, social media posts, or unverified affidavits. None were backed by courts, election officials, or credible news organizations. Editors didn’t remove the claims because they were “false.” They removed them because they were unverifiable. Meanwhile, the official results from all 50 states - certified by bipartisan boards - were cited with primary documents. The page stayed neutral by reflecting what authoritative sources confirmed, not what loud voices claimed.

Over 12,000 edits were reverted in 30 days. No one won. But Wikipedia did.

Case Study 3: The “Alternative Medicine” Page

For years, editors fought over whether to call acupuncture “effective” or “pseudoscience.” NPOV said: present both sides. Verifiability said: what do reputable medical journals say?

A 2021 meta-analysis from the Journal of the American Medical Association found acupuncture had no clinically meaningful effect beyond placebo for chronic pain. But a 2020 report from the World Health Organization listed it as a “recognized treatment” for 100+ conditions. Conflict? Not really. The solution? The page now says: “While some organizations, including the WHO, have endorsed acupuncture for certain conditions, systematic reviews in peer-reviewed medical journals generally find no significant benefit beyond placebo effect.”

The key? They didn’t say “some say X, others say Y.” They said: here’s what the evidence says - and here’s where the disagreement comes from.

How Editors Actually Resolve These Conflicts

There’s no magic formula. But there are patterns:

- Reliable sources always win. Peer-reviewed journals, government reports, major news outlets - these are the gold standard.

- Proportion matters. If 90% of experts agree, the article should reflect that. Not 50-50.

- Context is everything. A claim without its criticism is misleading. A criticism without its source is noise.

- Don’t trust your gut. Trust the citations.
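The first two patterns can be sketched in a few lines of Python. This is only an illustration, not how Wikipedia editors actually work: the `Source` type, the `RELIABLE_KINDS` categories, and the `due_weight` function are all hypothetical names, and counting sources is a crude stand-in for the judgment editors apply when weighing prominence in reliable sources.

```python
from dataclasses import dataclass

# Hypothetical source categories treated as reliable, for illustration only.
RELIABLE_KINDS = {"peer_reviewed", "government_report", "major_news"}


@dataclass(frozen=True)
class Source:
    title: str
    kind: str       # e.g. "peer_reviewed", "blog", "social_media"
    viewpoint: str  # label for the position the source supports


def due_weight(sources):
    """Return each viewpoint's share among reliable sources only."""
    # Verifiability step: drop anything not backed by a reliable source.
    reliable = [s for s in sources if s.kind in RELIABLE_KINDS]
    if not reliable:
        return {}
    # NPOV step: weight each viewpoint by its share of what remains.
    counts = {}
    for s in reliable:
        counts[s.viewpoint] = counts.get(s.viewpoint, 0) + 1
    total = len(reliable)
    return {view: n / total for view, n in counts.items()}


# Made-up data: nine consensus papers, one dissenting paper, five blog posts.
sources = (
    [Source(f"Journal article {i}", "peer_reviewed", "consensus") for i in range(9)]
    + [Source("Dissenting paper", "peer_reviewed", "dissent")]
    + [Source(f"Blog post {i}", "blog", "fringe") for i in range(5)]
)
weights = due_weight(sources)
print(weights)  # {'consensus': 0.9, 'dissent': 0.1}
```

In this toy data the five blog posts never reach the weighting step, so the fringe view gets no share at all, while the dissenting paper keeps a 10% share rather than half the article.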

Wikipedia’s conflict resolution system is messy. It relies on hundreds of volunteers arguing over tiny edits. But it works because the rules are clear - even when they’re hard to apply.

Why This Matters Beyond Wikipedia

Wikipedia isn’t just an encyclopedia. It’s a mirror of how society handles truth. When you search for “is climate change real?” - 80% of users click on the top result. That’s Wikipedia. If its policies break down, so does public trust in shared facts.

NPOV and Verifiability aren’t just rules. They’re a social contract. They say: we won’t let loud voices drown out evidence. We won’t let bias slip in under the guise of fairness. We’ll only include what’s been vetted - even if it’s boring.

That’s why Wikipedia survives. Not because it’s perfect. But because its editors keep returning to the same two questions: Can you verify it? And have you represented the significant viewpoints fairly?

What Happens When the Rules Break?

They don’t break. They get applied more carefully.

When a conflict arises, editors don’t pick a side. They go to the policy pages. They check citation guidelines. They consult the Wikipedia:Neutral point of view and Wikipedia:Verifiability pages - not their own beliefs. They use talk pages. They request third opinions. Sometimes they open a request for comment, and when a dispute turns into a conduct problem, they escalate to arbitration.

And when all else fails? They wait. They let time pass. They let more sources emerge. Sometimes, a claim that seemed controversial today becomes accepted science tomorrow. And Wikipedia waits - because that’s what verifiability demands.