Imagine typing a query into your favorite search engine and landing on an encyclopedia article that is confidently wrong. Not just slightly off, but fundamentally incorrect, yet presented with the authority of a verified source. This isn't a hypothetical scenario from a dystopian novel; it is a growing reality. Encyclopedias are digital repositories of structured knowledge that rely on either human consensus or automated systems for accuracy, and the way we verify truth online is shifting beneath our feet. For decades, the gold standard was community-driven editing, with thousands of volunteers policing content through debate and citation. Now, a new contender has emerged: algorithmic output. Artificial intelligence can generate, edit, and verify text at speeds no human team can match. But speed doesn't always equal accuracy.

The core tension here is between two distinct models of quality assurance, the process of ensuring that information meets specific standards of accuracy, reliability, and relevance before publication. On one side, you have the messy, slow, but deeply nuanced world of human review. On the other, you have the pristine, instantaneous, but potentially brittle world of machine-generated content. As platforms compete for dominance in the attention economy, understanding which model serves the reader better is critical. Are we trading depth for convenience? Or are we finally escaping the biases of human editors?

The Human Element: How Community Review Works

To understand why community review has held the fort for so long, you have to look at how it actually functions. Take Wikipedia, a free, web-based, collaborative encyclopedia that allows users to create and edit articles collectively. It operates on a model of radical openness. Anyone can edit most pages at any time. This sounds chaotic, but it relies on a robust system of checks and balances. When someone adds false information, other users, often experts in that specific field, spot it and revert it. This creates a dynamic equilibrium where obvious errors are corrected rapidly, often within minutes.

The strength of this model lies in its diversity of perspective. A single editor might miss a nuance, but a hundred editors from different backgrounds, cultures, and professional fields will catch it. This collective intelligence helps mitigate individual bias. If an editor tries to push a political agenda, others will flag it, discuss it on talk pages, and enforce neutral point of view policies. The result is a product that reflects a broad consensus rather than a single opinion.

However, this system is not without flaws. It requires significant time and effort. Disputes can drag on for weeks, leaving articles in a state of flux. More importantly, it suffers from coverage gaps. Topics that interest niche communities get polished to perfection, while obscure but important subjects might remain poorly sourced or incomplete simply because no one cares enough to fix them. Human energy is finite, and community review cannot scale infinitely.

The Machine Approach: Algorithmic Output and AI

Enter algorithmic output: content generated or curated by computer programs using predefined rules or machine learning models. With the rise of large language models (LLMs) and advanced natural language processing, platforms can now generate encyclopedic entries instantly. These algorithms analyze vast datasets to identify patterns, extract facts, and synthesize coherent narratives. The promise is seductive: comprehensive coverage, zero latency, and consistent formatting across millions of topics.

Unlike human editors, algorithms do not get tired, bored, or biased by personal experience, at least not in the same way. They can process thousands of sources in seconds, cross-referencing data points to ensure consistency. For straightforward factual queries, like "What is the capital of France?" or "When was the iPhone released?", algorithmic output is nearly flawless. It provides immediate answers without the friction of navigating edit wars or checking revision histories.

But here is the catch: algorithms lack true understanding. They predict the next likely word in a sequence based on statistical probability, not semantic truth. This leads to a phenomenon known as hallucination, where the AI generates plausible-sounding but entirely fabricated information. An algorithm might confidently state that a non-existent treaty was signed in 1995 because the pattern of words around "treaty" and "1995" appears frequently in its training data. Without a human in the loop to fact-check, these errors propagate quickly and widely.
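To make the "next likely word" idea concrete, here is a deliberately tiny sketch. It is not how a real LLM works internally (those use neural networks over tokens, not raw word counts), and the corpus, `train_bigrams`, and `predict_next` are all invented for illustration. It only shows the underlying point: a model that picks the most frequent continuation has no notion of whether that continuation is true.

```python
from collections import Counter, defaultdict


def train_bigrams(corpus):
    """Count how often each word follows another in the toy corpus."""
    counts = defaultdict(Counter)
    for sentence in corpus:
        words = sentence.lower().split()
        for prev, nxt in zip(words, words[1:]):
            counts[prev][nxt] += 1
    return counts


def predict_next(counts, word):
    """Return the most frequent follower of `word`, or None if unseen."""
    followers = counts.get(word.lower())
    if not followers:
        return None
    return followers.most_common(1)[0][0]


# Hypothetical training text: invented sentences, not real data.
corpus = [
    "the treaty was signed in 1995",
    "the accord was signed in 1995",
    "the charter was signed in 1995",
    "the pact was signed in 1987",
]
model = train_bigrams(corpus)

# The model answers "1995" for any "signed in ..." prompt, including
# the pact that this same corpus says was signed in 1987.
print(predict_next(model, "in"))
```

The prediction is driven purely by frequency: three of the four toy sentences end in 1995, so that is what comes back, whichever agreement is being asked about.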

Platform Competition: The Battle for Trust

This divergence in quality assurance methods is driving intense platform competition, the rivalry between digital services to capture user attention, data, and market share. Traditional encyclopedia platforms are under pressure to modernize. Users want instant answers, not links to lengthy articles they have to read themselves. Search engines and AI assistants are stepping in to fill this gap, providing summarized, algorithmically generated responses directly in the search results.

The stakes are high. Trust is the currency of the internet. If users perceive that algorithmic outputs are unreliable, they will retreat to trusted human-curated sources. Conversely, if human-curated sources seem outdated or inaccessible, users will embrace the convenience of AI, regardless of the risks. Platforms are now racing to hybridize their approaches. Some are using AI to draft initial articles, which humans then review. Others are using AI to detect vandalism in real-time, enhancing the efficiency of community review.

This competition forces both sides to improve. Human-led platforms are adopting better tools to streamline editing and reduce friction. Algorithmic platforms are investing heavily in reinforcement learning from human feedback (RLHF) to align their outputs with factual accuracy and ethical standards. The winner won't necessarily be the one with the purest model, but the one that best balances speed with reliability.

| Feature | Community Review | Algorithmic Output |
|---|---|---|
| Speed | Slow (hours to days) | Instant (milliseconds) |
| Scalability | Limited by human resources | Virtually unlimited |
| Nuance Handling | High (context-aware) | Low (pattern-based) |
| Error Type | Bias, vandalism, omission | Hallucination, fabrication |
| Trust Mechanism | Transparency and consensus | Proprietary algorithms and reputation |

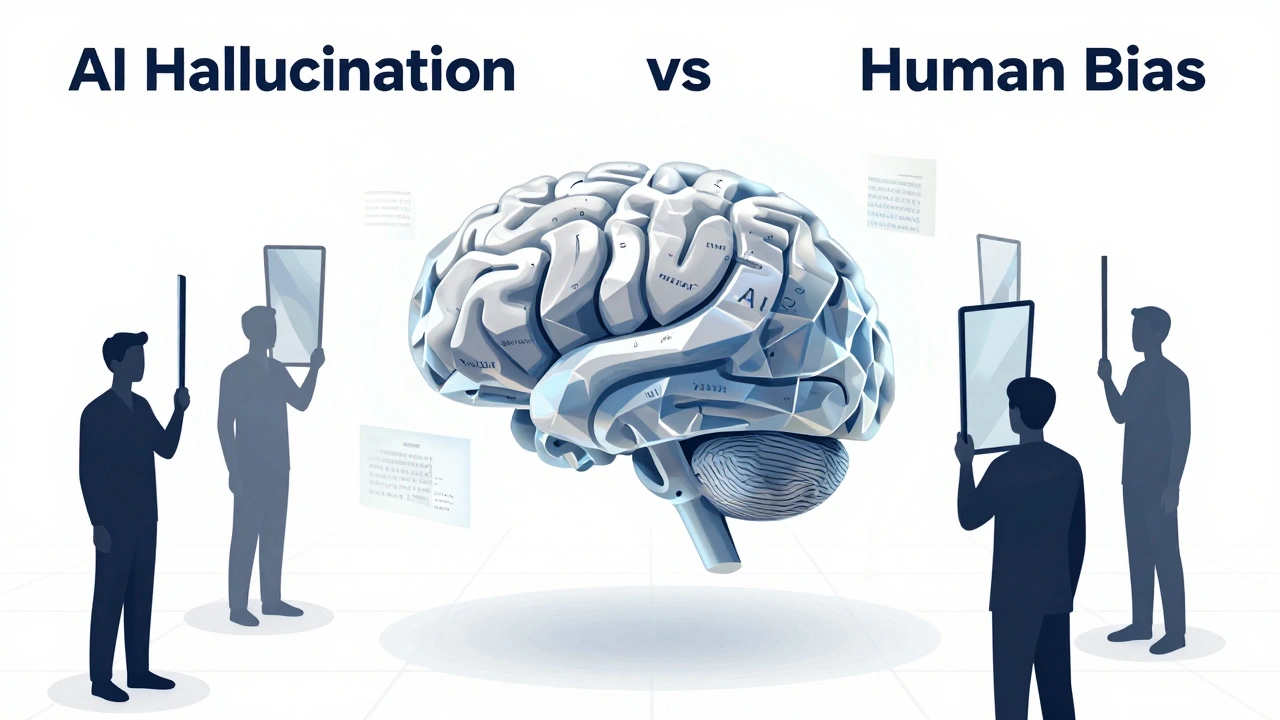

The Risk of Hallucination vs. Bias

When evaluating these systems, it is crucial to distinguish between the types of errors they produce. Community review is prone to bias. Editors may unconsciously favor certain viewpoints, leading to systemic imbalances in coverage. For example, historical events involving marginalized groups might be underrepresented if the editor base lacks diversity. Vandalism is another risk, though it is usually caught quickly. The key advantage is that these errors are visible. You can see who edited what, when, and why. This transparency allows for accountability.

Algorithmic output, by contrast, hides its errors behind a veil of confidence. Hallucinations are insidious because they sound authoritative. An AI might invent a quote from a famous scientist or misattribute a discovery to the wrong person. Because the algorithm does not "know" it is lying, it cannot correct itself without external intervention. Furthermore, algorithms inherit biases from their training data. If the internet contains more negative articles about a certain demographic, the AI will reflect that imbalance, amplifying stereotypes rather than challenging them. Unlike human editors, algorithms do not have moral compasses; they only have mathematical weights.

Hybrid Models: The Future of Knowledge

The most promising path forward is not choosing one side over the other, but integrating them. Hybrid models leverage the strengths of both community review and algorithmic output. Imagine a system where AI drafts an initial summary of a topic, citing sources automatically. Human editors then step in to verify the citations, add nuance, and ensure neutrality. This reduces the burden on volunteers, allowing them to focus on high-value tasks like resolving disputes and covering complex topics.

Platforms are already experimenting with this. Some use AI to flag potential issues in articles, such as missing citations or conflicting statements, alerting human editors to take action. Others use AI to translate articles, expanding access to global audiences while maintaining human oversight for cultural context. The goal is to create a symbiotic relationship where machines handle the heavy lifting of data processing, and humans provide the judgment and empathy that machines lack.
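As a rough illustration of the flagging idea, the sketch below marks sentences that carry no citation so a human editor can look at them first. It is a simple heuristic, not any platform's actual tooling: the function name `flag_uncited` is hypothetical, and it assumes citations appear as bracketed numbers such as `[1]` placed before the sentence's closing punctuation.

```python
import re

# Assumption: citations look like [1], [23], and so on.
CITATION = re.compile(r"\[\d+\]")


def flag_uncited(text):
    """Return the sentences in `text` that contain no citation marker."""
    # Naive sentence split: break after ., ! or ? followed by whitespace.
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    return [s for s in sentences if s and not CITATION.search(s)]


# Invented draft text for demonstration only.
draft = "The bridge opened in 1932 [1]. It spans 500 meters."
for sentence in flag_uncited(draft):
    print("Needs a source:", sentence)
```

A check like this only points at where a human should look. It cannot tell whether a cited source actually supports the claim, which is the part that still needs an editor's judgment.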

This approach also addresses the scalability issue. By automating routine tasks, platforms can expand their coverage without sacrificing quality. Niche topics that previously languished due to lack of interest can now receive basic coverage from AI, which can then be refined by enthusiasts over time. The result is a more inclusive and comprehensive knowledge base.

Practical Tips for Readers

As a consumer of information, you need to adapt your habits. Do not trust any single source blindly, whether it is written by a human or generated by an AI. Here are some practical steps to verify information:

- Check the sources: Look for citations and references. If an article claims something extraordinary, it should link to credible primary sources. If there are no links, be skeptical.

- Cross-reference: Compare the information with other reputable sources. If multiple independent sources agree, the likelihood of accuracy increases.

- Look for transparency: Prefer platforms that show their edit history or explain how their algorithms work. Transparency builds trust.

- Be aware of dates: Information changes rapidly. Check when the article was last updated. Outdated information can be misleading, even if it was once accurate.

- Question the tone: Be wary of overly definitive language, especially on controversial topics. Nuanced discussions are often more reliable than absolute statements.

By adopting a critical mindset, you protect yourself from misinformation and contribute to a healthier information ecosystem. Your skepticism drives platforms to improve their quality assurance processes.
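The cross-referencing tip can be sketched as a simple tally: collect what each source says and see how many agree. The function name `agreement` and the source names and values below are made up for illustration. Note the important caveat that agreement only means something if the sources are independent; three sites copying the same mistake would still score as full agreement.

```python
from collections import Counter


def agreement(claims):
    """Given {source: reported value}, return (most common value, share agreeing)."""
    if not claims:
        return None, 0.0
    tally = Counter(claims.values())
    value, votes = tally.most_common(1)[0]
    return value, votes / len(claims)


# Hypothetical sources and values, not real data.
claims = {
    "source_a": "1889",
    "source_b": "1889",
    "source_c": "1887",
}
value, share = agreement(claims)
print(f"Most common answer: {value} ({share:.0%} of sources)")
```

A low share is a cue to dig into primary sources rather than a verdict on which answer is right.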

Conclusion: Who Wins?

The battle between community review and algorithmic output is not a zero-sum game. Both models have vital roles to play in the future of encyclopedias. Community review brings depth, nuance, and accountability. Algorithmic output brings speed, scale, and consistency. The platforms that succeed will be those that find the right balance, leveraging technology to enhance human judgment rather than replace it. As readers, we must remain vigilant, demanding transparency and accuracy from all sources. In the end, quality assurance is not just a technical challenge; it is a societal responsibility.

Is Wikipedia still relevant in the age of AI?

Yes, Wikipedia remains highly relevant because it offers transparency and human-curated content. While AI can provide quick answers, Wikipedia’s detailed edit history and community oversight make it a trusted source for complex topics. Many AI systems actually use Wikipedia as part of their training data.

Can algorithms completely replace human editors?

Unlikely. Algorithms lack true understanding and moral judgment. They can handle factual data well but struggle with nuance, context, and bias. Human editors are essential for verifying sources, resolving disputes, and ensuring content is fair and balanced.

What is AI hallucination in encyclopedias?

AI hallucination refers to instances where an artificial intelligence generates false or nonsensical information that sounds plausible. In encyclopedias, this could mean inventing quotes, dates, or events. It is a major risk of relying solely on algorithmic output without human verification.

How do I know if an encyclopedia entry is trustworthy?

Look for clear citations, transparent edit histories, and updates from recent dates. Cross-reference the information with other reputable sources. If the platform explains its quality assurance process, such as showing who edited the content or how the AI was trained, it is generally more trustworthy.

What is a hybrid model in knowledge platforms?

A hybrid model combines AI and human efforts. AI might draft initial content or flag potential errors, while human editors review, refine, and verify the information. This approach aims to achieve the speed and scale of algorithms with the accuracy and nuance of human judgment.