Quick Facts About Edit Filters

- They act as a first line of defense against automated bots and trolls.

- Filters can either warn a user or block the edit entirely.

- Most filters look for "red flag" patterns, like excessive profanity or mass deletions.

- They help human moderators focus on complex disputes rather than obvious spam.

How the Filtering System Actually Works

At its core, an edit filter is a set of "if-then" rules. When you hit that "Save changes" button, your edit doesn't go straight to the page. Instead, it passes through a series of checks. If your edit matches a specific pattern (say, you're trying to delete 90% of a page's content), the system flags it. This process happens in milliseconds, long before a human editor even knows something is wrong.
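To make the "if-then" idea concrete, here is a minimal sketch in Python. It is not how any real wiki implements its filters; the `Edit` and `Rule` classes, the `check_edit` function, and the choice of "warn" as the action are all assumptions made for illustration. Only the 90% deletion threshold comes from the example above.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Edit:
    old_text: str  # page content before the edit
    new_text: str  # page content the user is trying to save


@dataclass
class Rule:
    name: str
    condition: Callable[[Edit], bool]  # the "if" part
    action: str                        # the "then" part, e.g. "warn" or "block"


def removed_fraction(edit: Edit) -> float:
    """Fraction of the old page length that the edit removes (0.0 if none)."""
    if not edit.old_text:
        return 0.0
    shrink = len(edit.old_text) - len(edit.new_text)
    return max(shrink, 0) / len(edit.old_text)


# Hypothetical rule set: flag edits that remove 90% or more of a page.
RULES = [
    Rule("mass-deletion", lambda e: removed_fraction(e) >= 0.9, "warn"),
]


def check_edit(edit: Edit) -> Optional[Rule]:
    """Return the first rule the edit matches, or None if it passes."""
    for rule in RULES:
        if rule.condition(edit):
            return rule
    return None
```

Each rule pairs a condition with an action, so adding a new check means appending one more `Rule` rather than changing the checking loop.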

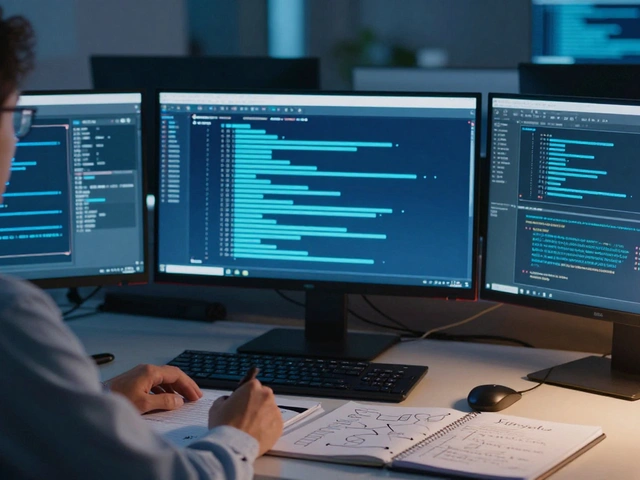

The system uses Regular Expressions (often called Regex), which are search patterns used to match character combinations in text. For example, a filter might be programmed to look for common swear words or specific phrases used by known spam networks. If the Regex finds a match, the system can either stop the edit with a hard block or trigger a warning that asks the user, "Are you sure this is helpful?"
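As a rough illustration of the warn-or-block decision, the sketch below uses Python's standard `re` module. The two patterns are placeholders invented for this example (real filters rely on community-maintained lists), and the `classify` function and its return values are assumptions as well.

```python
import re

# Placeholder patterns, invented for this example.
# \b marks a word boundary, so "heck" does not match inside "heckler".
BLOCK_PATTERN = re.compile(r"\b(?:click here for free prizes)\b", re.IGNORECASE)
WARN_PATTERN = re.compile(r"\b(?:darn|heck)\b", re.IGNORECASE)


def classify(added_text: str) -> str:
    """Return "block", "warn", or "allow" for the text an edit adds."""
    if BLOCK_PATTERN.search(added_text):
        return "block"   # hard stop: the edit is rejected
    if WARN_PATTERN.search(added_text):
        return "warn"    # soft stop: ask the user to confirm
    return "allow"
```

The word-boundary anchors matter here: without them, a naive pattern would flag harmless words that merely contain a flagged string.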

Filters also differ in scope. Some apply to every single page on the site, while others are written to match only specific high-traffic pages, like those of current political figures. This tiered approach ensures that a strict filter on a controversial page doesn't accidentally stop a helpful edit on a page about 18th-century pottery.
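The scoping idea can be sketched in a few lines. Everything here is hypothetical: the `Filter` class, the filter names, and the page titles are made up to show how a site-wide filter and a page-specific filter might coexist.

```python
from dataclasses import dataclass
from typing import FrozenSet, List, Optional


@dataclass(frozen=True)
class Filter:
    name: str
    # None means the filter applies to every page;
    # otherwise it applies only to the listed page titles.
    pages: Optional[FrozenSet[str]] = None

    def applies_to(self, page_title: str) -> bool:
        return self.pages is None or page_title in self.pages


def filters_for(page_title: str, filters: List[Filter]) -> List[str]:
    """Names of the filters that should run for an edit to this page."""
    return [f.name for f in filters if f.applies_to(page_title)]


# Hypothetical configuration.
FILTERS = [
    Filter("profanity"),  # runs everywhere
    Filter("strict-sourcing", frozenset({"Example Politician"})),
]
```

With this configuration, an edit to "Example Politician" runs both filters, while an edit to a pottery article runs only the site-wide one.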

The Battle Against Vandalism and Spam

Vandalism on Wikipedia comes in many forms. There is "blatant vandalism," where someone just types nonsense. Then there is "sophisticated vandalism," where an editor makes a subtle, plausible-looking change that is actually false, intended to mislead readers. While filters struggle with the subtle lies, they are absolute champions at stopping the blatant stuff.

Consider the impact of bots. Without filters, a single script could rewrite 10,000 pages in seconds. By implementing rate limits and pattern detection, the platform can stop these automated programs from wreaking havoc. For instance, if an account creates 50 pages in two minutes, the filter can flag this as non-human behavior and freeze the account immediately.
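A sliding-window counter is one common way to build this kind of rate limit. The sketch below is an assumption about how such a check could look, not a description of the platform's actual code. The `RateLimiter` class is hypothetical, and its defaults (50 actions, 120 seconds) simply echo the example above.

```python
from collections import defaultdict, deque
from typing import Deque, Dict


class RateLimiter:
    """Allow at most `max_actions` per account within a sliding time window."""

    def __init__(self, max_actions: int = 50, window_seconds: float = 120.0):
        self.max_actions = max_actions
        self.window_seconds = window_seconds
        self._events: Dict[str, Deque[float]] = defaultdict(deque)

    def allow(self, account: str, now: float) -> bool:
        """Record an action at time `now` (in seconds) and say whether it is allowed."""
        events = self._events[account]
        # Drop timestamps that have fallen out of the window.
        while events and now - events[0] >= self.window_seconds:
            events.popleft()
        if len(events) >= self.max_actions:
            return False  # over the limit: caller can throttle or freeze the account
        events.append(now)
        return True
```

Passing `now` in explicitly (instead of reading the clock inside the method) keeps the logic easy to test. A caller would typically supply `time.monotonic()`.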

The system also targets Spam. This usually involves adding promotional links to low-quality websites. Filters look for specific URL patterns or the sudden insertion of keywords like "best cheap loans" or "buy followers." By catching these at the gateway, the site avoids the need for massive cleanup projects after the damage is already done.
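Here is a small sketch of what "URL patterns plus keywords" might look like in practice. The keyword list reuses the two phrases mentioned above; the blocked domain `spam.example`, the deliberately simple `URL_RE` pattern, and the `spam_signals` function are all assumptions for illustration.

```python
import re
from typing import List
from urllib.parse import urlparse

# Deliberately simple URL matcher; real-world URL detection is messier.
URL_RE = re.compile(r"https?://[^\s<>\]]+", re.IGNORECASE)

SPAM_KEYWORDS = ("best cheap loans", "buy followers")  # phrases from the text above
BLOCKED_DOMAINS = {"spam.example"}                     # hypothetical blocklist


def spam_signals(added_text: str) -> List[str]:
    """List the spam indicators found in the text an edit adds."""
    signals = []
    lowered = added_text.lower()
    for keyword in SPAM_KEYWORDS:
        if keyword in lowered:
            signals.append(f"keyword:{keyword}")
    for url in URL_RE.findall(added_text):
        host = (urlparse(url).hostname or "").lower()
        # Match the blocked domain itself and any of its subdomains.
        if any(host == d or host.endswith("." + d) for d in BLOCKED_DOMAINS):
            signals.append(f"domain:{host}")
    return signals
```

Returning a list of signals rather than a single yes/no lets the caller decide how many indicators justify a warning versus an outright block.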

| Filter Type | Trigger Example | Action Taken | Primary Target |
|---|---|---|---|
| Pattern-Based | Profanity or Slurs | Hard Block | Blatant Vandalism |
| Quantitative | Deleting 500+ words | Warning/Review | Mass Erasure |
| Behavioral | 10 edits in 1 second | Account Lock | Malicious Bots |
| Contextual | New IP address adding links | Pending Review | Commercial Spam |

The Human Element: Moderation and Overrides

No matter how smart a filter is, it will occasionally make a mistake. This is called a "false positive." Maybe you're writing a medical article about a specific disease and use a word that the filter thinks is a slur. In these cases, the human element is vital. Administrators and experienced editors have the power to override filters and review flagged content.

The relationship between the software and the human is symbiotic. When a filter blocks a weird new type of attack, human editors analyze the blocked edits to see if they need to create a new filter. It's a constant arms race. As vandals get smarter, using techniques like adding invisible characters to bypass regex, the moderators update the rules to keep up.
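The invisible-character trick is easy to demonstrate, and so is one possible countermeasure: normalize the text before matching. The sketch below uses Python's standard `unicodedata` module. The `normalize` helper and the sample pattern are assumptions, and the cleaned text is meant only for matching, never for saving back to the page, since some scripts use these characters legitimately.

```python
import re
import unicodedata

PATTERN = re.compile(r"buy followers", re.IGNORECASE)  # sample pattern


def normalize(text: str) -> str:
    """Return a copy of `text` suitable for pattern matching only."""
    # NFKC folds look-alike forms (such as full-width letters) to plain ones.
    text = unicodedata.normalize("NFKC", text)
    # Drop "format" characters (Unicode category Cf), which include
    # zero-width spaces and joiners that render as nothing.
    return "".join(ch for ch in text if unicodedata.category(ch) != "Cf")


# A zero-width space and a zero-width joiner hidden inside the phrase.
evasive = "bu\u200by foll\u200dowers"
print(PATTERN.search(evasive) is None)                 # True: raw text slips past
print(PATTERN.search(normalize(evasive)) is not None)  # True: normalized text is caught
```

This closes one gap, but it is only one move in the arms race: look-alike letters from other alphabets, for instance, would need a separate mapping.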

There is also the concept of autoconfirmed users. If you've had an account for a while and made a certain number of successful edits, the system trusts you more. You might be able to bypass filters that would stop a brand-new account or an anonymous user editing from an IP address. This encourages people to create accounts and contribute legitimately while keeping the "barrier to entry" high for trolls.
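The trust check itself is simple to sketch. The thresholds below (4 days, 10 edits) match the values commonly cited for English Wikipedia, but treat them as assumptions, since each wiki can configure its own. The `User` class and both functions are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

# Assumed thresholds; individual wikis may configure different values.
MIN_ACCOUNT_AGE = timedelta(days=4)
MIN_EDIT_COUNT = 10


@dataclass
class User:
    registered_at: Optional[datetime]  # None for anonymous (IP) editors
    edit_count: int = 0


def is_autoconfirmed(user: User, now: datetime) -> bool:
    """True if the account is old enough and has made enough edits."""
    if user.registered_at is None:
        return False
    old_enough = now - user.registered_at >= MIN_ACCOUNT_AGE
    return old_enough and user.edit_count >= MIN_EDIT_COUNT


def should_run_filter(user: User, now: datetime, exempts_autoconfirmed: bool) -> bool:
    """Skip a filter only if it exempts autoconfirmed users and this user qualifies."""
    return not (exempts_autoconfirmed and is_autoconfirmed(user, now))
```

Note that the exemption is per filter: a filter that does not exempt autoconfirmed users still runs for everyone.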

Protecting the Integrity of Information

Why does this matter? Because information integrity is the main reason people trust the site. If a user visits a page and sees a joke or a lie, they stop trusting the entire platform. The filters don't just protect text; they protect the brand's reputation for reliability. By scrubbing the "noise" of vandalism, the platform ensures that the signal (actual knowledge) remains clear.

This system also prevents "edit wars" from spiraling out of control. When a topic becomes a battleground for political arguments, filters can be tightened to ensure that only highly trusted editors can make changes. This stops a page from flipping between two opposing viewpoints every five minutes, which would make the site unusable for a casual reader.

Furthermore, these tools allow the community to scale. The volume of data is simply too large for a manual approach. By automating the obvious blocks, the community can spend its energy on Peer Review and sourcing, which are the real drivers of quality. The filters handle the "trash," leaving the intellectuals to handle the "truth."

Common Pitfalls and Filter Limitations

Despite their utility, filters aren't perfect. One major issue is "over-blocking." If a filter is too aggressive, it can discourage new, well-meaning editors. If a student tries to improve a paragraph and gets a pop-up telling them their edit looks like vandalism, they might just give up and leave. Balancing security with accessibility is a constant struggle for the developers.

Another gap is the "slow drip" attack. A sophisticated vandal won't delete a page; they'll change one word here and one date there across a hundred different pages. Because each individual edit looks normal, it doesn't trigger the quantitative filters. Detecting these requires higher-level Algorithmic Detection and human vigilance.
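One way to look for a slow drip is to stop judging edits one at a time and aggregate them per account instead. The sketch below is a rough heuristic under invented assumptions: the `EditRecord` fields, the 20-character cutoff for a "small" edit, and the `slow_drip_suspects` function are all hypothetical, and only the hundred-page figure comes from the text above. It will also flag legitimate typo-fixers, so its output is a list for human review, not a verdict.

```python
from collections import defaultdict
from typing import Dict, Iterable, List, NamedTuple, Set


class EditRecord(NamedTuple):
    account: str
    page: str
    chars_changed: int  # size of the change, in characters


def slow_drip_suspects(
    edits: Iterable[EditRecord],
    small_edit_max: int = 20,  # assumed cutoff for a "small" edit
    min_pages: int = 100,      # "a hundred different pages"
) -> List[str]:
    """Accounts that made small edits on at least `min_pages` distinct pages."""
    pages_touched: Dict[str, Set[str]] = defaultdict(set)
    for edit in edits:
        if edit.chars_changed <= small_edit_max:
            pages_touched[edit.account].add(edit.page)
    return sorted(
        account
        for account, pages in pages_touched.items()
        if len(pages) >= min_pages
    )
```

Counting distinct pages rather than total edits is the key design choice: fifty small edits to a single article look like ordinary copyediting, while one small edit on each of a hundred articles is the pattern described above.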

Finally, there is the risk of filter bias. Since humans write the rules, those rules can reflect the biases of their creators. While the community works hard to keep filters objective, deciding what counts as "spam" or "vandalism" can be subjective, especially in multilingual environments where a word might be an insult in one dialect but a common term in another.

Do edit filters stop all vandalism?

No, they don't. Filters are great at stopping obvious patterns and bot attacks, but they can't detect subtle factual errors or "slow drip" vandalism where changes are small enough to seem natural. That's why human moderators are still essential.

Can a regular user create these filters?

Generally, no. Creating or modifying filters requires specific administrative privileges. This prevents malicious users from creating filters that block legitimate information or target specific viewpoints.

What happens if my legitimate edit is blocked?

If you encounter a false positive, you can usually provide a reason for your edit in the warning box or contact an administrator. Once your account is established enough to become autoconfirmed, you can often bypass some of these filters.

How do filters know what is a "bad" word?

They use lists of keywords and Regular Expressions (Regex) that match the patterns of known slurs, profanity, or common spam phrases. These lists are updated regularly by the community to keep up with new internet slang.

Are edit filters used on other websites?

Yes, almost any site with user-generated content uses similar logic. Reddit uses AutoModerator, and social media platforms use AI filters to catch hate speech or spam, although the specific implementation varies by platform.

Next Steps for Contributors

If you're looking to help protect the encyclopedia, you don't need to write code for filters. The best thing you can do is become an active editor. By building a history of helpful, sourced edits, you earn the trust of the system and the community. This allows you to move past the restrictive filters and help monitor pages that are currently under "heavy protection."

If you notice a pattern of vandalism that seems to be slipping through the cracks, report it on the relevant community noticeboard. Providing examples of how the vandals are bypassing the current system helps the technical team refine the Regex and create more effective barriers for everyone.