Quick Takeaways: The Chaos of Consensus

- The Core Conflict: Most fights stem from the tension between "Neutral Point of View" (NPOV) and the personal biases of editors.

- Governance Evolution: Wikipedia moved from a loose group of volunteers to a complex hierarchy of administrators and bureaucrats.

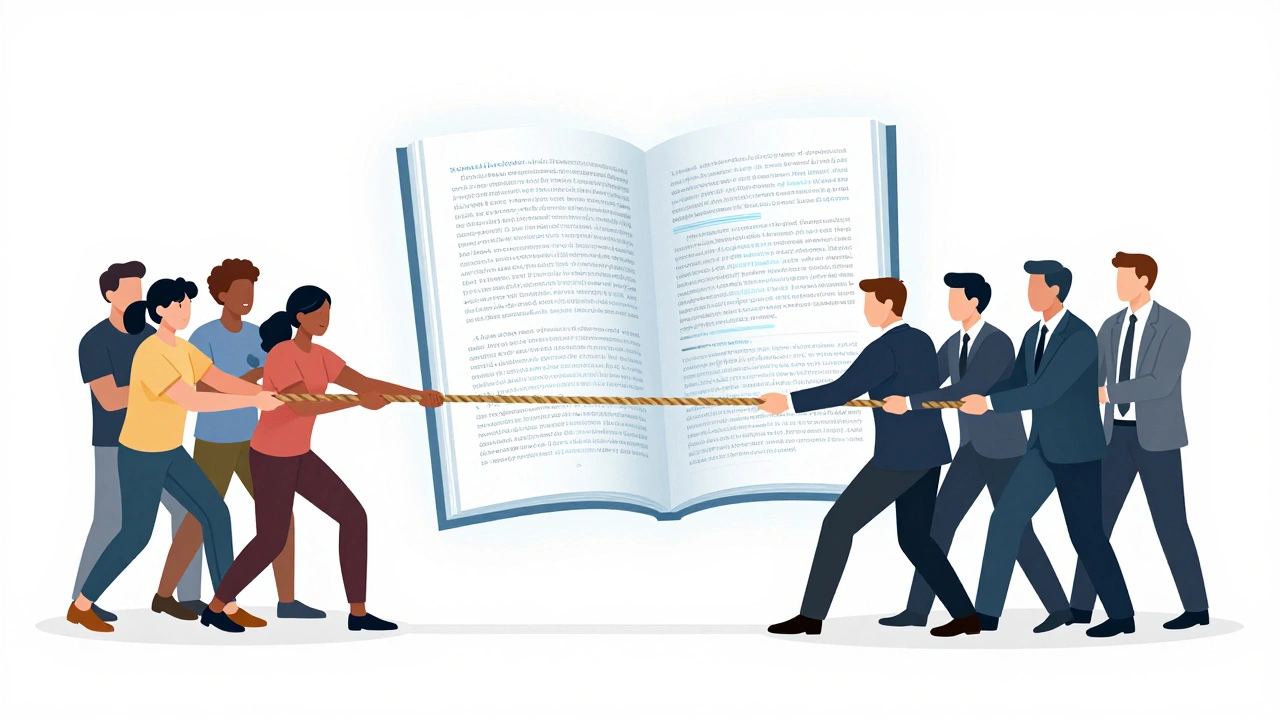

- The Power Struggle: There is a constant tug-of-war between the Wikimedia Foundation (the legal arm) and the volunteer community.

- The "Edit War" Cycle: Disagreements often spiral into repetitive changes, requiring a third-party "lock" on the page.

The Early Days and the Birth of NPOV

In the beginning, Wikipedia was a wild west. When Wikipedia, a free, multilingual online encyclopedia written collaboratively by volunteers, launched in 2001, the rules were thin. The community quickly realized that without a shared standard for truth, the site would just be a collection of opinions. This led to the creation of the Neutral Point of View (NPOV) policy, which requires articles to be written without bias and to represent all significant views fairly.

But here is the problem: who decides what is "neutral"? In the early 2000s, this sparked the first major governance crises. Editors fought over whether a neutral tone meant "middle of the road" or "following the most reliable source." For example, if five sources say the earth is round and one says it's flat, NPOV doesn't mean giving equal space to both; it means representing each view in proportion to its support in reliable sources. This distinction between "weight" and "balance" is where most Wikipedia conflicts begin. When two editors disagree on the weight of an argument, they don't just argue; they engage in an edit war, where one person deletes a sentence and the other puts it back seconds later.

The Great Schism: Community vs. Foundation

As the site grew, the Wikimedia Foundation (WMF) stepped in as the legal and financial backbone. The WMF is the non-profit organization that manages the servers and fundraising for Wikipedia. For years, there was a silent agreement: the WMF handles the money and the servers, and the volunteers handle the content.

This peace broke during various "governance shocks." One of the biggest clashes occurred over the role of paid editors. The community generally hates the idea of someone being paid to scrub a celebrity's image or a company's record. However, as the site became a primary source for Google search results, the pressure to allow professional editors grew. The tension reached a boiling point when the WMF tried to implement more formal structures to handle disputes, which volunteers saw as an attempt to corporatize the project. The community responded by insisting that the "Wiki way" (consensus through endless discussion on talk pages) was the only legitimate way to govern.

The War of the Experts: Verifiability vs. Original Research

One of the most sophisticated conflicts in Wikipedia's history is the fight over Verifiability. To prevent people from just making things up, the site adopted a rule: you cannot add original research. Every claim must be backed by a reliable, third-party source.

This sounds great on paper, but it created a "source war." If a reputable newspaper publishes a mistake, and an editor tries to correct it using their own direct knowledge of the event, the other editors will often remove the correction because it isn't "verifiable" via a secondary source. This creates a weird paradox where Wikipedia can be "wrong" even when the community knows the truth, simply because no "acceptable" source has written it down yet. This has led to massive blowouts in scientific and medical articles, where PhDs argue with hobbyists over which academic journal constitutes a "gold standard" source.

| Model | Who Holds Power? | Decision Method | Main Weakness |
|---|---|---|---|
| Early Consensus | Any Editor | Informal Agreement | Slow, prone to edit wars |
| Bureaucratic | Administrators/Sysops | Policy Enforcement | Perceived as "elitist" |
| Foundation-led | WMF Board | Corporate Strategy | Disconnect from users |

The Battle for Political Truth: Regional Conflicts

Nowhere are the conflicts more vicious than in political articles. In regions with high geopolitical tension, Wikipedia pages become proxy wars. Take the conflicts over territorial disputes in Asia or Eastern Europe. Editors from opposing countries often spend years in a state of "cold war," carefully wording sentences to avoid triggering a mass-reversion from the other side.

To fight this, the community created Protected Pages, which are articles that require a certain level of editor experience or administrator approval to change. But protection often just moves the fight to the "Talk" pages. These discussion forums can become thousands of messages long, where editors dissect single commas for weeks. It's a form of digital diplomacy that looks more like a UN summit than an encyclopedia project. The conflict usually isn't about the facts, since both sides tend to agree on what happened; it's about the *narrative* and which adjective is used to describe the event.

The 2020s: Bot Wars and Algorithmic Governance

In recent years, the conflict has shifted from humans fighting humans to humans fighting bots. Bots are automated scripts designed to perform repetitive tasks, such as fixing typos or reverting vandalism. While they keep the site clean, they've introduced a new layer of controversy: the "Bot War."

When a bot is programmed with a specific bias (intentionally or not), it can overwrite human edits in milliseconds. This has led to a feeling of alienation among new editors who find their contributions instantly deleted by a script. The governance struggle now involves deciding how much autonomy we should give to AI. If an algorithm can determine what a "reliable source" is better than a human, do we let it lock the page? This is the current frontier of Wikipedia's internal struggle: balancing the efficiency of automation with the human nuance of a community-driven project.

Common Pitfalls in Wiki Governance

If you've ever tried to edit a controversial page, you've probably hit these walls. Understanding them helps explain why the site feels so combative in some areas:

- The "Bold" Fallacy: New users are told to be "bold," but when they change a high-profile page, they are often met with immediate aggression from "power users" who feel a sense of ownership over the topic.

- The Consensus Trap: Because everything requires consensus, some articles never actually get updated. They stay stuck in a version from 2012 because no one can agree on the new wording.

- Administrator Bias: Admins are volunteers. While they are supposed to be neutral, they are humans with their own political and social leanings, which can lead to accusations of "power tripping."

What is an edit war exactly?

An edit war happens when two or more editors repeatedly undo each other's changes to a page. This usually occurs when there is a fundamental disagreement about a fact or the wording of a section. To stop this, administrators will eventually "lock" or protect the page, forcing the editors to resolve their dispute on a talk page before any more changes can be made.
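The revert cycle described above can be sketched in a few lines of Python. This is a minimal illustration, not how Wikipedia's own tooling works: the `Revision` type, the `find_reverts` and `looks_like_edit_war` helpers, and the `min_reverts=3` threshold are all hypothetical names and values chosen for the example. It treats any edit that restores a previously seen version of the page as a revert.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Revision:
    editor: str
    text: str  # full page text after this edit (a simplification for the sketch)


def find_reverts(history):
    """Return revisions that restore a version of the page seen earlier."""
    seen = set()
    reverts = []
    previous_text = None
    for rev in history:
        # Restoring older text counts as a revert; re-saving the
        # current text unchanged does not.
        if rev.text in seen and rev.text != previous_text:
            reverts.append(rev)
        seen.add(rev.text)
        previous_text = rev.text
    return reverts


def looks_like_edit_war(history, min_reverts=3):
    """Flag a history with repeated reverts by at least two editors.

    min_reverts is an assumed threshold chosen for illustration only.
    """
    reverts = find_reverts(history)
    editors = {rev.editor for rev in reverts}
    return len(reverts) >= min_reverts and len(editors) >= 2


history = [
    Revision("alice", "The event was a protest."),
    Revision("bob", "The event was a riot."),
    Revision("alice", "The event was a protest."),
    Revision("bob", "The event was a riot."),
    Revision("alice", "The event was a protest."),
]
print(looks_like_edit_war(history))  # True
```

Comparing full page text keeps the sketch short. A real history would more likely compare content hashes and restrict the count to a time window.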

Who actually owns Wikipedia?

No one "owns" the content. The Wikimedia Foundation owns the trademarks and the servers, but the content is licensed under Creative Commons. The power to change the content resides with the community of volunteers, although administrators have special tools to block users or protect pages.

How does the "Neutral Point of View" policy work in practice?

NPOV doesn't mean the editor has to be neutral; it means the *article* must be. Instead of saying "The politician is a liar," an NPOV approach is to say "Critics, including X and Y, have accused the politician of lying." This shifts the focus from the editor's opinion to the documented views of others.

Why can't I just use my own expertise to fix a page?

Because of the "No Original Research" rule. Wikipedia values verifiability over truth. If you are a world-renowned expert on a topic but cannot find a published, third-party source that supports your claim, the community will likely remove your edit. You are encouraged to contribute to the sources (journals, books) first, and then cite those sources on Wikipedia.

What happens if a user is banned?

Bans are usually issued for "vandalism" or repeated violations of community guidelines. A user can be blocked from a specific page, or globally banned from the entire site. Most bans have an appeal process through the User Rights or Arbitration Committee.

What to do next

If you're looking to contribute without getting caught in a governance war, start with "stub" articles, which are short pages that need basic expansion. These are rarely contested and allow you to build a reputation. If you do find yourself in a conflict, avoid the urge to keep reverting the change. Instead, move to the "Talk" tab and provide a link to a high-quality source. In the world of Wikipedia, a link to a peer-reviewed paper is the only currency that actually matters.