Have you ever opened a Wikipedia page’s history and found a gap? Not a missing page - but an edit that used to be visible, then vanished without a trace? That’s not a glitch. It’s oversight.

Wikipedia oversight is a quiet but powerful tool used by a tiny group of trusted editors to remove sensitive information from public view. It doesn’t delete pages. It doesn’t block users. It makes specific revisions, usernames, or comments invisible to almost everyone - even other admins. Only a handful of people on Earth can see what’s been suppressed, and they’re bound by strict rules.

What Exactly Is Wikipedia Oversight?

Oversight is a technical function granted to a small number of experienced Wikipedia editors - fewer than 100 worldwide. These are not regular admins. They’re vetted volunteers with years of trusted contributions and a history of handling sensitive situations. Their job isn’t to censor opinions. It’s to remove personal, dangerous, or legally risky data that violates Wikipedia’s core policies.

When oversight is applied, it doesn’t erase the edit from Wikipedia’s servers. The data still exists in the database. But it’s hidden from public view. Regular users, even logged-in editors, can’t see it. Only other oversighters and a few trusted staff at the Wikimedia Foundation can access it. This isn’t secrecy for secrecy’s sake. It’s a legal and ethical shield.
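One way to picture "hidden, not erased" is a visibility flag stored next to each revision. The sketch below is a toy model, not Wikipedia's actual code: MediaWiki, the software Wikipedia runs on, tracks revision visibility with a similar bitfield, but the class names, function names, and bit values here are assumptions made up for illustration.

```python
from dataclasses import dataclass
from enum import IntFlag


class Hidden(IntFlag):
    """Which parts of a revision are hidden (illustrative bit values)."""
    NONE = 0
    TEXT = 1
    COMMENT = 2
    USER = 4
    RESTRICTED = 8  # hidden from regular admins too, i.e. suppressed


@dataclass
class Revision:
    rev_id: int
    user: str
    comment: str
    text: str
    hidden: Hidden = Hidden.NONE


def suppress(rev: Revision, parts: Hidden) -> None:
    """Flag parts of a revision as suppressed; the stored data is untouched."""
    rev.hidden |= parts | Hidden.RESTRICTED


def public_view(rev: Revision) -> dict:
    """What an ordinary reader would see in the page history."""
    placeholder = "(hidden)"
    return {
        "rev_id": rev.rev_id,
        "user": placeholder if rev.hidden & Hidden.USER else rev.user,
        "comment": placeholder if rev.hidden & Hidden.COMMENT else rev.comment,
        "text": placeholder if rev.hidden & Hidden.TEXT else rev.text,
    }


# Hypothetical revision: the text and edit summary get hidden, the username stays.
rev = Revision(1001, "ExampleUser", "added phone number", "call 555-0100")
suppress(rev, Hidden.TEXT | Hidden.COMMENT)
print(public_view(rev))
```

The point of the design is that `suppress` only flips bits. The original text is still sitting in `rev.text`, which is why suppressed material can be audited or restored later.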

When Do Suppression Requests Happen?

Suppression isn’t used lightly. It’s triggered only when specific criteria are met. Here are the three most common reasons:

- Personal information leaks - Real names, home addresses, phone numbers, or medical records posted without consent. For example, if someone accidentally pastes a stranger’s Social Security number into a talk page, oversight removes it immediately.

- Threats and harassment - Death threats, doxxing, or targeted abuse directed at editors, subjects, or third parties. If a user posts a private email address along with a warning like “I’ll find you,” oversight hides the entire revision.

- Copyright violations with legal risk - Private documents, unreleased media, or confidential internal communications that could lead to lawsuits. This includes leaked corporate emails or unpublished personal letters.

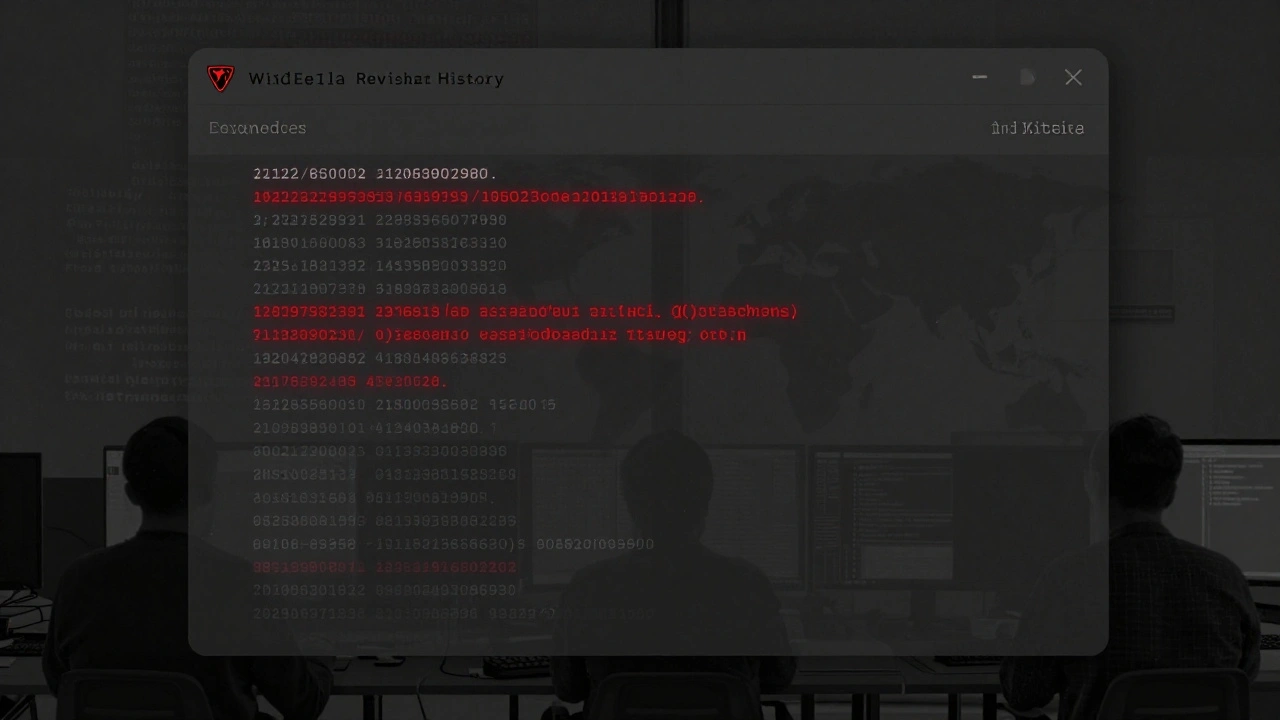

These aren’t theoretical cases. In 2024, the Wikimedia Foundation confirmed 217 oversight actions globally. Of those, 68% involved personal data exposure, 22% involved threats, and 10% involved copyright-sensitive material.
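To turn those percentages into rough head counts, a quick calculation helps. This is only arithmetic on the figures quoted above; the variable names are illustrative, and because the percentages are rounded, the per-category counts are approximations.

```python
# Figures quoted in the article: 217 actions, split 68% / 22% / 10%.
total_actions = 217
shares = {
    "personal data": 0.68,
    "threats": 0.22,
    "copyright-sensitive": 0.10,
}

# Approximate number of actions per category, rounded to whole actions.
approx_counts = {
    reason: round(total_actions * share) for reason, share in shares.items()
}

for reason, count in approx_counts.items():
    print(f"{reason}: about {count}")
```

That works out to roughly 148, 48, and 22 actions. The three add up to 218 rather than 217, which is just rounding in the published percentages.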

How Is a Suppression Request Made?

It starts with a report. An editor - maybe a regular volunteer or a flagged user - notices something that violates policy. They don’t delete it. They don’t argue about it. They file a formal request on a private page called Wikipedia:Oversight/Requests.

The request includes:

- The exact revision ID (a unique number assigned to every edit)

- The reason for suppression (citing Wikipedia policy)

- Proof - screenshots, links, or timestamps

Then, one of the dozen or so active oversighters reviews it. They don’t vote. They don’t debate. They decide based on policy, not opinion. If the request meets the criteria, they apply suppression within hours. If not, they decline it - often with a detailed explanation.
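The three required pieces of a request can be modeled as a small record with a completeness check. This is a minimal sketch of the checklist described above, not a real Wikipedia form or API; `SuppressionRequest` and `missing_fields` are hypothetical names.

```python
from dataclasses import dataclass, field


@dataclass
class SuppressionRequest:
    revision_id: int                                    # the exact edit to hide
    reason: str                                         # the policy being cited
    evidence: list[str] = field(default_factory=list)   # links, timestamps, etc.

    def missing_fields(self) -> list[str]:
        """Return the names of required fields that are empty or invalid."""
        missing = []
        if self.revision_id <= 0:
            missing.append("revision_id")
        if not self.reason.strip():
            missing.append("reason")
        if not self.evidence:
            missing.append("evidence")
        return missing


# A complete request versus one that only says "this is bad" in spirit.
complete = SuppressionRequest(
    123456, "non-public personal information", ["timestamp of the edit"]
)
vague = SuppressionRequest(123456, "  ", [])

print(complete.missing_fields())
print(vague.missing_fields())
```

A request with an empty list from `missing_fields` is only complete, not approved. Whether it meets the policy threshold is still a human decision.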

There’s no public appeal process. No forum. No petition. The decision is final. That’s intentional. Too many voices in the room would make oversight useless.

What Can’t Be Suppressed?

Oversight isn’t a magic eraser for bad content. It doesn’t remove:

- Controversial opinions - even if they’re offensive

- Unpopular edits - even if they’re wrong

- Disputed facts - even if they’re false

- Libelous claims - unless they include private data

For example, if someone adds “John Doe is a convicted criminal” to a page - and that’s false - oversight won’t touch it. That’s a content dispute. It goes to the talk page, then to arbitration. But if that same edit includes John Doe’s home address, phone number, and a threat like “I’ll find you,” then oversight kicks in.

Wikipedia doesn’t protect truth. It protects privacy and safety. That’s the line.

Who Can See Suppressed Content?

Only two groups:

- Oversighters - The 50-100 trusted editors with the technical tool. They’re scattered across the globe - from Canada to South Africa to Japan. They’re volunteers, not employees.

- Wikimedia Foundation staff - A small team of legal and technical staff who can access suppressed data for audits, legal compliance, or security investigations.

Even other admins - the ones who block vandals or delete pages - can’t see suppressed edits. That’s a hard boundary. It’s not about trust. It’s about minimizing risk. The fewer people who can see hidden data, the less chance it leaks.
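The access rule boils down to a short allow-list. The sketch below assumes a simplified set of roles taken from this article; the role names and the `can_view` function are illustrative, not Wikipedia's real permission system.

```python
from enum import Enum


class Role(Enum):
    READER = "reader"
    EDITOR = "editor"
    ADMIN = "admin"
    OVERSIGHTER = "oversighter"
    WMF_STAFF = "wmf_staff"


# Only these two groups may see suppressed revisions (per the list above).
CAN_VIEW_SUPPRESSED = frozenset({Role.OVERSIGHTER, Role.WMF_STAFF})


def can_view(role: Role, is_suppressed: bool) -> bool:
    """Anyone can view a normal revision; suppressed ones need an allowed role."""
    return (not is_suppressed) or role in CAN_VIEW_SUPPRESSED


for role in Role:
    print(role.value, can_view(role, is_suppressed=True))
```

Notice that `Role.ADMIN` is deliberately left off the allow-list. Keeping the list short is the whole idea.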

Every time an oversighter uses the tool, it’s logged. Not publicly. But internally. There’s a digital trail. If someone abuses the power, it’s traceable. And it’s happened before. In 2022, one oversighter was stripped of privileges after using suppression to hide criticism of a friend. The system has checks.

Why Does This System Exist?

Wikipedia is built on openness. But openness without boundaries is dangerous. In 2017, a Wikipedia editor’s real name was leaked in a dispute. Within hours, they received death threats. Their workplace found out. They lost their job.

Oversight exists because Wikipedia learned the hard way: public editing can have real-world consequences. A single unredacted email, a misplaced phone number, a careless mention of a minor’s name - these aren’t just editing errors. They’re human risks.

The system isn’t perfect. Critics say it’s too secretive. Supporters say it’s the only thing keeping editors safe. The truth? It’s a necessary compromise. You can’t have total transparency and total safety at the same time. Wikipedia chose safety - for its people.

Is Oversight Censorship?

Some call it censorship. But that’s misleading. Censorship removes ideas. Oversight removes data.

Wikipedia still allows you to debate whether a person is a hero or a villain. You can argue about their actions, their motives, their legacy. But you can’t publish their private medical records, their child’s school name, or their home address. That’s not censorship. That’s basic ethics.

Think of it like this: If you write a news article about a celebrity, you can say they’re a bad parent. But you can’t print their kid’s birth certificate. Oversight is the rule that stops the birth certificate from appearing.

What Happens After Suppression?

After suppression, the edit is gone from public view. But it’s not gone from history.

The revision ID stays in the system. The edit is still recorded in the server logs. If law enforcement requests it - with a valid warrant - the Wikimedia Foundation can hand over the suppressed data. Oversight doesn’t mean deletion. It means restricted access.

Some edits are restored later. If the threat fades - say, a doxxed number was posted by accident and the person isn’t in danger anymore - an oversighter can lift the suppression. It’s rare, but it happens.

Mostly, though, suppressed content stays hidden. Forever.

Can anyone request suppression on Wikipedia?

Yes - any registered user can submit a suppression request on the private oversight page. But requests must include specific details: the revision ID, the policy violated, and evidence. Requests without this information are ignored. You can’t just say “this is bad.” You have to prove it meets the legal or safety criteria.

Are suppression requests ever denied?

Yes - and often. About 30% of suppression requests are declined. Common reasons: the content doesn’t contain personal data, it’s a content dispute rather than a privacy issue, or the request lacks proof. Oversighters don’t act on emotion. They follow policy. If it doesn’t meet the threshold, it stays public.

Can I see what’s been suppressed?

No - not unless you’re an oversighter or a Wikimedia Foundation staff member. Even administrators with full editing rights can’t view suppressed content. This is intentional. The fewer people who can access hidden data, the lower the risk of leaks or misuse.

Does oversight violate Wikipedia’s transparency principles?

It seems to, but it doesn’t. Transparency on Wikipedia means openness to public editing and discussion - not public access to private data. Oversight protects the people who make Wikipedia possible. Without it, editors would stop contributing out of fear. The system balances openness with human safety.

How often is oversight used?

Around 200-250 suppression actions occur each year globally. Most are on English Wikipedia, but oversighters handle requests across all language editions. Usage has stayed steady since 2020 - not rising, not falling. That suggests the system is working as designed: rare, targeted, and effective.