Imagine a script that deletes thousands of spam pages in seconds. Now imagine that same script accidentally wiping out a decade of verified historical data. This is the high-stakes reality of admin bots on Wikipedia, the free online encyclopedia. These are not just regular bots; they are automated accounts with full administrative powers, the same rights held by human administrators (often called "sysops" or "admins"). They can block users, delete pages, and protect articles from edit wars. Because their actions are far-reaching and laborious to undo at scale, the community treats them with extreme caution.

If you are curious about how Wikipedia maintains its integrity while allowing automation, understanding the strict boundaries around these powerful tools is essential. The system relies on a delicate balance between efficiency and human oversight. Let’s look at what these bots can actually do, who watches them, and why the rules exist.

What Defines an Admin Bot?

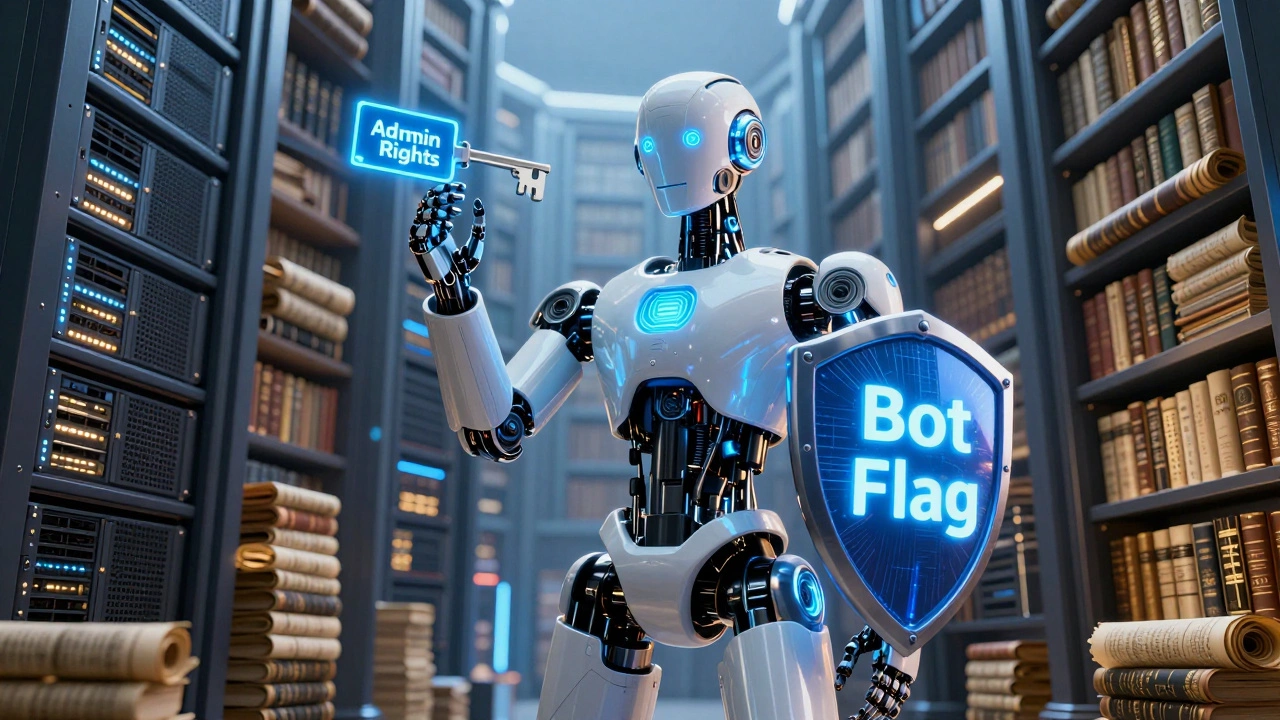

To understand the oversight, we first need to define the entity. An administrative bot is a software program operated by a trusted user that has been granted both bot status and administrator rights. Regular bots handle small tasks like fixing typos or adding categories. Admin bots, however, operate in the realm of enforcement and maintenance.

- Bot Flag: Allows edits to be hidden from recent changes to avoid cluttering user feeds.

- Admin Rights: Grants the ability to delete pages, block users, and change page protection settings.
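
The two-part definition above can be sketched in a few lines of Python. This is a minimal illustration, not an official tool: it assumes the standard MediaWiki group names `bot` and `sysop` and the read-only `list=users` API query, and the helper names `build_groups_query` and `is_admin_bot` as well as the account name `ExampleBot` are hypothetical.

```python
from typing import Iterable
from urllib.parse import urlencode

# MediaWiki group names: "bot" carries the bot flag, "sysop" carries admin rights.
BOT_GROUP = "bot"
ADMIN_GROUP = "sysop"


def build_groups_query(username: str,
                       api_url: str = "https://en.wikipedia.org/w/api.php") -> str:
    """Build a read-only API URL that lists the groups of one account.

    The function only builds the URL; it performs no network request.
    """
    params = {
        "action": "query",
        "list": "users",
        "ususers": username,
        "usprop": "groups",
        "format": "json",
    }
    return f"{api_url}?{urlencode(params)}"


def is_admin_bot(groups: Iterable[str]) -> bool:
    """An admin bot, in this article's sense, holds both groups at once."""
    group_set = set(groups)
    return BOT_GROUP in group_set and ADMIN_GROUP in group_set


# "ExampleBot" is a made-up account name used only for illustration.
print(build_groups_query("ExampleBot"))
print(is_admin_bot(["user", "bot", "sysop"]))  # True
print(is_admin_bot(["user", "bot"]))           # False
```

Keeping the check as a pure function over a list of group names means it can be exercised without touching a live wiki.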

Not every admin runs a bot. In fact, most administrators perform their duties manually through the web interface. Admin bots are typically reserved for large-scale cleanup operations that would take a human weeks to complete. For example, when a sockpuppetry investigation uncovers hundreds of fake accounts linked to a single bad actor, a bot can block them all in one pass.

The Strict List of Allowed Tasks

You might think that if a tool is useful, it should be allowed. On Wikipedia, utility does not equal permission. The community has drawn hard lines around what an admin bot can execute automatically. These tasks generally fall into three categories: speed-critical maintenance, repetitive enforcement, and data correction.

| Allowed Automated Tasks | Prohibited or Manual-Only Tasks |
|---|---|
| Mass-deletion of obvious spam (e.g., copy-pasted ads) | Blocking IP ranges without specific justification |
| Protecting pages during active vandalism spikes | Banning users based on complex behavioral analysis |
| Reverting obvious test edits across multiple namespaces | Deletion of controversial content without discussion |
| Updating template links after site-wide technical changes | Arbitration enforcement requiring legal interpretation |

The key principle here is predictability. If the outcome of the bot's action is fully predictable and aligns with existing policy, automation is often approved. If there is even a slight chance of nuance being missed, the task must remain manual. For instance, deleting a page that is an unambiguous, verbatim copyright violation is comparatively straightforward for a bot. Deciding whether a biography is notable enough to stay requires human judgment.
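
The predictability test can be written down as a simple decision rule. The sketch below is an illustration of the reasoning only, not a real Wikipedia policy engine; the `Task` fields and the `may_automate` function are hypothetical names chosen for this example.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Task:
    name: str
    outcome_is_predictable: bool  # the same input always leads to the same action
    covered_by_policy: bool       # an existing policy explicitly allows the action
    needs_judgment: bool          # notability, neutrality, behavioral analysis, etc.


def may_automate(task: Task) -> bool:
    """A task is a candidate for automation only when all three tests pass."""
    return (
        task.outcome_is_predictable
        and task.covered_by_policy
        and not task.needs_judgment
    )


spam_cleanup = Task("delete copy-pasted ads", True, True, False)
notability_call = Task("decide if a biography is notable", False, True, True)

print(may_automate(spam_cleanup))     # True
print(may_automate(notability_call))  # False
```

Note that passing the rule only makes a task eligible; approval still rests with the community.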

The Oversight Mechanism: Who Watches the Watchers?

No admin bot operates in a vacuum. The oversight structure on Wikipedia is multi-layered, designed to catch errors before they cause permanent damage. This process involves several distinct roles and checks.

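Bots/Requests for Approval (BRFA)

Before an account receives the bot flag, its operator must file a public request for approval (on English Wikipedia, at Bots/Requests for approval). The operator explains exactly what the code will do, and other editors, including members of the Bot Approvals Group, review the logic, often after a supervised trial run. If the community finds flaws, the request is rejected. This step ensures transparency from day one.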

Administrator Oversight

Even after approval, the bot is monitored. Administrators regularly check logs for unusual patterns. If a bot starts deleting legitimate pages due to a coding error, other admins can intervene immediately. Any administrator can block the bot account to stop it, and bureaucrats can remove its bot flag or admin rights if the problem persists.
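
One way to picture "checking logs for unusual patterns" is a sliding-window rate check over the timestamps of a bot's logged actions. The following is a minimal sketch under stated assumptions: the class name `ActionRateMonitor` and the thresholds are hypothetical, timestamps are plain seconds supplied in non-decreasing order, and nothing here reflects an actual Wikipedia monitoring tool.

```python
from collections import deque


class ActionRateMonitor:
    """Flags a bot that performs too many actions inside a sliding time window."""

    def __init__(self, max_actions: int, window_seconds: float) -> None:
        self.max_actions = max_actions
        self.window_seconds = window_seconds
        self._timestamps = deque()

    def record(self, timestamp: float) -> bool:
        """Record one logged action; return True if the bot should be paused for review."""
        self._timestamps.append(timestamp)
        # Drop entries that have fallen out of the window.
        while timestamp - self._timestamps[0] > self.window_seconds:
            self._timestamps.popleft()
        return len(self._timestamps) > self.max_actions


# Hypothetical threshold: more than 3 deletions within 60 seconds triggers a review.
monitor = ActionRateMonitor(max_actions=3, window_seconds=60)
for t in (0, 10, 20, 30):
    print(t, monitor.record(t))  # only the fourth action, at t=30, returns True
```

A check like this does not decide whether the deletions were wrong; it only signals that a human should look before the bot continues.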

CheckUser and Oversight Teams

For sensitive cases involving privacy or harassment, specialized teams step in. The CheckUser team investigates sock puppets but rarely uses bots for this due to privacy concerns. The Oversight team handles private information removal, a task almost never automated due to the high risk of accidental exposure.

Risks and Historical Failures

Why are the rules so strict? Because mistakes happen. History provides sobering examples of when automation went wrong.

In 2009, a bot intended to clean up references accidentally deleted valid citations from thousands of articles. It took days to restore the content. In another incident, a poorly configured script blocked entire IP ranges belonging to universities, preventing students from editing during exam periods. These incidents highlight that code is only as good as the testing behind it.

The primary risks include:

- False Positives: Deleting good-faith edits because they match a spam pattern.

- Over-Broad Blocks: Accidentally blocking legitimate users sharing an IP address.

- Logic Errors: Code bugs that escalate minor issues into major disruptions.

To mitigate these risks, operators are required to run tests in sandbox environments before deploying live. They must also provide clear documentation on how to contact them if something goes wrong.
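
Two of these safeguards, a dry-run mode and a guard against over-broad blocks, are easy to sketch with Python's standard `ipaddress` module. This is an illustrative sketch only: `plan_block` and the limit `MAX_ADDRESSES_PER_BLOCK` are hypothetical, the function never contacts a wiki, and the addresses come from reserved example ranges.

```python
import ipaddress

# Hypothetical limit: refuse to cover more than 256 addresses in a single block.
MAX_ADDRESSES_PER_BLOCK = 256


def plan_block(cidr: str, dry_run: bool = True) -> str:
    """Describe what a block request would do; this function never touches a live wiki."""
    network = ipaddress.ip_network(cidr, strict=False)
    if network.num_addresses > MAX_ADDRESSES_PER_BLOCK:
        return (
            f"REFUSED {network}: {network.num_addresses} addresses "
            f"exceeds limit of {MAX_ADDRESSES_PER_BLOCK}"
        )
    prefix = "DRY RUN" if dry_run else "WOULD SUBMIT"
    return f"{prefix} block {network}"


print(plan_block("192.0.2.0/28"))  # DRY RUN block 192.0.2.0/28
print(plan_block("10.0.0.0/16"))   # REFUSED 10.0.0.0/16: 65536 addresses exceeds limit of 256
```

Defaulting `dry_run` to `True` means an operator has to opt in explicitly before anything leaves the sandbox, which mirrors the test-first requirement described above.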

How to Request or Challenge an Admin Bot

If you are an experienced editor considering running an admin bot, the path is clear but rigorous. You must demonstrate technical competence and a deep understanding of community policies. Start by discussing your idea on the Village Pump, the main forum for general discussion. Then, submit a formal request for bot status.

If you notice a bot behaving erratically, do not ignore it. You can:

- Contact the bot operator directly via their talk page.

- Report the issue to the Administrator Noticeboard.

- Use the "rollback" function if the bot’s edits are visible and reversible.

The community encourages vigilance. A healthy skepticism protects the encyclopedia from both malicious actors and well-meaning but flawed automation.

The Future of Automation on Wikipedia

As artificial intelligence advances, the line between simple scripts and complex AI blurs. Some propose using machine learning to detect vandalism more accurately. However, the core principle remains unchanged: humans must retain final authority over significant decisions. The goal is not to replace admins but to free them from tedious tasks so they can focus on complex disputes and content quality.

For now, admin bots remain a niche tool, governed by strict consensus. Their existence proves that Wikipedia values efficiency, but never at the cost of reliability. The oversight mechanisms ensure that while machines may pull the triggers, humans always hold the keys.

Can any Wikipedia admin run a bot?

No. Having admin rights does not automatically grant bot status. An admin must separately apply for the bot flag through the bot approval process (on English Wikipedia, Bots/Requests for approval). The community reviews each application individually, ensuring that only reliable and transparent scripts are approved.

What happens if an admin bot makes a mistake?

If an admin bot makes a mistake, other admins can quickly reverse its actions or disable its permissions. The operator is usually expected to fix the code and explain the error. Serious or repeated errors can lead to the revocation of both bot and admin privileges.

Are admin bots used to censor content?

No. Admin bots are strictly prohibited from making subjective content judgments. They are limited to objective tasks like removing spam, protecting pages from vandalism, or enforcing clear-cut policies. Any decision regarding the notability or neutrality of content must be made by humans.

How can I report a suspicious bot?

You can report a suspicious bot by leaving a message on the Administrator Noticeboard or contacting the bot operator directly. If the bot is causing immediate harm, you can also alert other active admins on the Village Pump. The community takes reports of erratic automation seriously.

Do admin bots work on all language versions of Wikipedia?

Yes, but each language version has its own independent approval process. A bot approved on English Wikipedia must reapply for status on Spanish, German, or any other language edition. Policies and cultural norms vary significantly across different communities.