Wikipedia runs on trust. Millions of edits arrive every day from anonymous users and registered editors alike, and they are checked and cleaned up by a small group of volunteers with special powers: Wikipedia administrators. But what happens when those with the most power make mistakes or, worse, abuse their access? That’s where an admin tools audit comes in.

What Are Wikipedia Admin Tools?

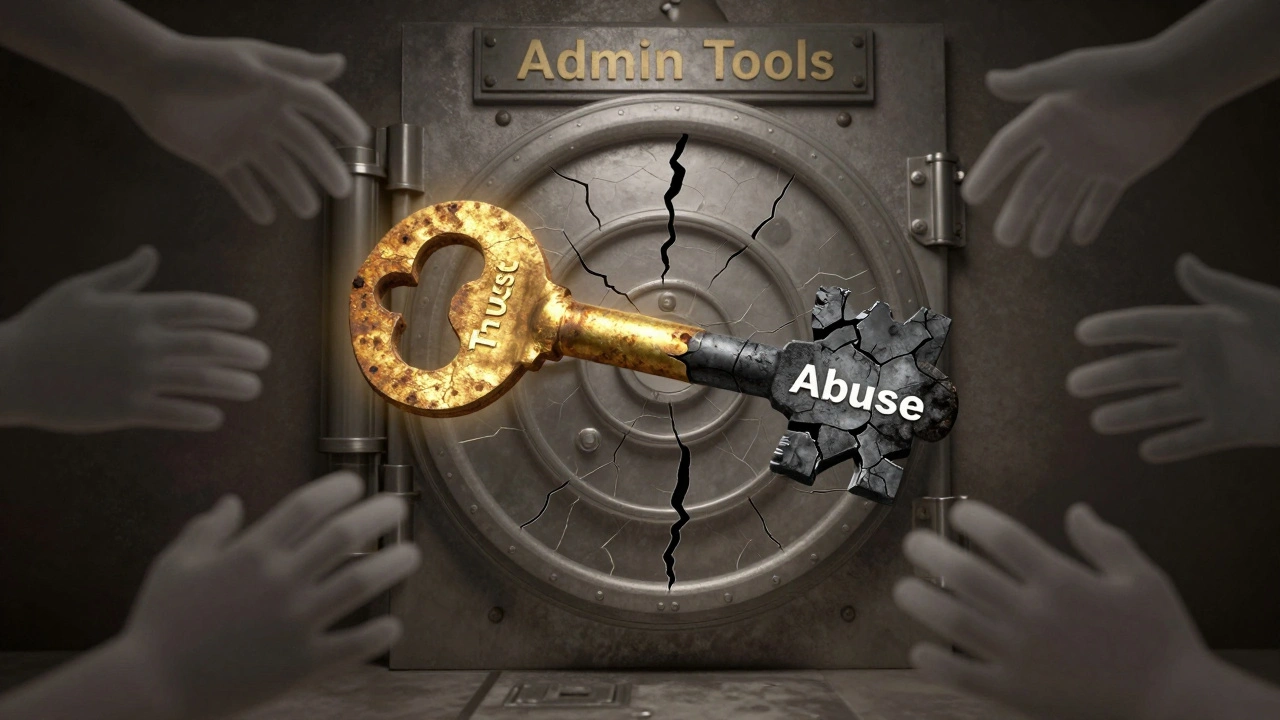

Admin tools aren’t a single feature. They’re a set of permissions granted to trusted users who’ve proven their consistency, judgment, and commitment to Wikipedia’s rules. These tools let admins delete pages, block users, protect articles from editing, and restore old versions of content. Without them, Wikipedia couldn’t handle spam, vandalism, or edit wars. But with them comes risk.

Think of it like giving someone the keys to a bank vault. You need someone trustworthy. But if that person gets lazy, angry, or corrupt, the damage can be huge. A single admin can wipe out years of work in seconds. They can lock out editors for months. They can hide controversial edits from public view. And because Wikipedia’s structure is decentralized, there’s no central HR department or IT team watching over them.

Why Audits Happen

Wikipedia doesn’t have a police force. It has community oversight. When a pattern of questionable behavior emerges, such as a flood of deletions, repeated blocks with no clear basis in policy, or edits that favor a specific viewpoint, the community can demand an audit.

These audits aren’t random. They’re triggered by:

- Complaints from multiple users about an admin’s actions

- Discrepancies between the admin’s stated reasons and their actual edits

- Tools being used in ways that contradict Wikipedia’s core principles

- Reports from trusted volunteers who monitor admin activity

The audit process is public. It happens on Wikipedia’s own discussion pages. Anyone can read the logs. Anyone can comment. The audit doesn’t just look at what the admin did; it looks at why they did it. Was it policy? Bias? Burnout? Malice?

How the Audit Works

An admin tools audit starts with a formal request on the Administrator Noticeboard or a dedicated Admin Review page. Once approved, a team of experienced editors, usually 3 to 5, gathers data.

They pull logs from:

- Deletion logs: Which pages were deleted? Were they valid targets?

- Block logs: Who was blocked? For how long? What was the reason?

- Protection logs: Were articles locked unnecessarily?

- Undo and revert patterns: Are edits being reversed without discussion?

- Communication logs: Did the admin respond to complaints? Were they respectful?
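Every log listed above is public, so the raw data can also be pulled programmatically. The sketch below shows one way to do it, assuming the English Wikipedia endpoint and the MediaWiki Action API's `list=logevents` module (`leuser` filters by the account that performed the action, `letype` by log type). The helper names and the `ExampleAdmin` account are hypothetical; only the API parameters come from MediaWiki.

```python
import json
import urllib.parse
import urllib.request
from collections import Counter

# Assumption: English Wikipedia; other editions use https://<lang>.wikipedia.org/w/api.php.
API_URL = "https://en.wikipedia.org/w/api.php"


def build_log_params(user: str, log_type: str, limit: int = 500) -> dict:
    """Query parameters for list=logevents, filtered to one performer and one log type."""
    return {
        "action": "query",
        "list": "logevents",
        "leuser": user,        # account that performed the logged actions
        "letype": log_type,    # e.g. "delete", "block", "protect"
        "lelimit": str(limit),
        "format": "json",
        "formatversion": "2",
    }


def fetch_log_events(user: str, log_type: str) -> list[dict]:
    """Fetch every log entry of one type performed by `user`, following API continuation."""
    params = build_log_params(user, log_type)
    events: list[dict] = []
    while True:
        url = API_URL + "?" + urllib.parse.urlencode(params)
        # Wikimedia asks API clients to identify themselves with a descriptive User-Agent.
        request = urllib.request.Request(url, headers={"User-Agent": "admin-audit-sketch/0.1"})
        with urllib.request.urlopen(request) as response:
            data = json.load(response)
        events.extend(data.get("query", {}).get("logevents", []))
        if "continue" not in data:
            return events
        # The "continue" object carries the token needed to request the next page.
        params.update(data["continue"])


def summarize(events: list[dict]) -> Counter:
    """Count entries by (type, action), e.g. ("delete", "delete") vs ("delete", "restore")."""
    return Counter((event.get("type"), event.get("action")) for event in events)
```

For instance, `summarize(fetch_log_events("ExampleAdmin", "delete"))` would tally that account's deletions against its restorations. A count is only a starting point; auditors still read each entry against policy.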

They compare this data against Wikipedia’s official policies. For example:

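- Speedy deletions must meet one of the Criteria for Speedy Deletion (CSD); anything else goes through proposed deletion (PROD) or an Articles for Deletion discussion

- Blocks must prevent ongoing disruption rather than punish past behavior, and must be justified under the blocking policy

- Protection should be used to stop persistent vandalism or edit warring, never to lock in one side of a content dispute the admin is involved in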
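Some of this comparison can be pre-screened before anyone reads entries one by one. The sketch below is a hypothetical heuristic, not an official Wikipedia tool. It flags deletions whose log summary names no recognizable deletion process (a speedy-deletion code such as `G11` or `A7`, a PROD, or an Articles for Deletion discussion) and blocks logged with no reason at all. It assumes entries shaped like MediaWiki `logevents` output (`type`, `action`, `comment`). A flag means "look closer," not "violation."

```python
import re

# Hypothetical heuristic: a deletion summary "cites a process" if it mentions a
# speedy-deletion code (letter G, A, R, F, C or U plus one or two digits), the CSD
# shortcut, PROD, AfD, or "Articles for deletion". Real summaries vary widely,
# and case-insensitive matching will also catch some unrelated tokens.
PROCESS_PATTERN = re.compile(
    r"\b(?:[GARFCU]\d{1,2}|CSD|PROD|AfD|Articles for deletion)\b",
    re.IGNORECASE,
)


def flag_entries(events: list[dict]) -> list[dict]:
    """Return copies of log entries that deserve a closer human look, with a reason."""
    flagged = []
    for event in events:
        # Hidden or missing summaries are treated as empty.
        comment = (event.get("comment") or "").strip()
        if event.get("type") == "delete" and event.get("action") == "delete":
            if not PROCESS_PATTERN.search(comment):
                flagged.append({**event, "why": "deletion summary cites no deletion process"})
        elif event.get("type") == "block" and event.get("action") == "block":
            if not comment:
                flagged.append({**event, "why": "block has no stated reason"})
    return flagged
```

A summary like "not notable" gets flagged because it names no criterion, which is exactly the kind of entry a human auditor would then check against the notability guidelines.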

If the audit finds violations, the outcome isn’t punishment; it’s correction. The admin might lose tools temporarily. They might be required to take a break. Or, if the behavior is severe and repeated, they could be stripped of admin status permanently.

Real Examples of Admin Tool Misuse

In 2023, an audit revealed that one admin had deleted over 200 pages in a single month. Most were not vandalism; they were legitimate articles on obscure topics, written by new editors. The admin claimed they were "not notable." But the audit found that 87% of those pages met Wikipedia’s notability guidelines. The admin had been using deletion as a way to control content they personally disliked.

Another case involved an admin who blocked 12 users over six months for "edit warring." But the audit showed that in 9 of those cases, the blocked users were correcting factual errors. The admin had been reverting corrections and then blaming the users for "disrupting" the article. This wasn’t enforcement; it was censorship.

These aren’t rare. They’re symptoms of a system that relies on human judgment without enough checks.
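The second case follows a pattern that can be checked mechanically: an admin reverts someone, then blocks that same person. Wikipedia’s policy on involved administrators says admins shouldn’t use their tools in disputes they are party to. The sketch below assumes the auditor has already extracted two hypothetical lists (the admin’s reverts, recording whose edit was undone, where, and when; and the admin’s blocks) and uses an arbitrary seven-day window. The data shapes and the window are illustrative choices, not part of any Wikipedia process.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class Revert:
    page: str
    reverted_user: str  # the editor whose change the admin undid
    when: datetime


@dataclass
class Block:
    blocked_user: str
    reason: str
    when: datetime


def involved_blocks(reverts: list[Revert], blocks: list[Block],
                    window: timedelta = timedelta(days=7)) -> list[tuple[Block, list[str]]]:
    """Blocks preceded, within `window`, by the same admin reverting the blocked user.

    Each hit is returned with the sorted pages where that revert happened.
    A hit suggests the admin may have been involved; it does not prove misuse.
    """
    results = []
    for block in blocks:
        pages = sorted({
            r.page for r in reverts
            if r.reverted_user == block.blocked_user
            and block.when - window <= r.when <= block.when
        })
        if pages:
            results.append((block, pages))
    return results
```

Each hit still needs a human to read the reverted edits; in the case above, that reading is what showed the blocked users had been fixing factual errors.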

What Keeps Admins in Check?

Wikipedia’s system works because of transparency. Every action is logged. Every decision is public. You can see who deleted what, when, and why. You can see who blocked whom and for how long. This isn’t a secret system; it’s an open one.

But transparency alone isn’t enough. That’s why there are:

- CheckUsers: A small group of highly trusted users, usually admins, who can see the IP addresses behind accounts and use them to detect sockpuppets. They’re the only ones with access to that private data, and their use of it is logged and heavily audited.

- Arbitration Committee: A panel of experienced editors elected by the community (15 seats on the English Wikipedia) who handle the most serious disputes. They can suspend admins, impose restrictions, or ban users entirely.

- Community Oversight: Regular editors who monitor admin behavior daily. They flag anomalies. They call out inconsistencies. They keep the system honest.

There’s no corporate board. No CEO. No lawsuit. Just a community that refuses to let power go unchecked.

The Balance Between Power and Trust

Wikipedia doesn’t need perfect admins. It needs accountable ones. The goal isn’t to eliminate admin tools; it’s to make sure they’re used fairly.

Studies from the University of Oxford in 2024 found that Wikipedia’s audit system reduces abusive admin behavior by 68% within six months of implementation. The system works because it’s slow, public, and community-driven. There’s no automation. No AI. Just people, reading logs, asking questions, and holding each other to the rules.

But the system is under strain. More editors are leaving. Fewer new admins are being appointed. The workload is falling on a shrinking group. That makes the audits even more critical. Without them, the risk of abuse grows.

What You Can Do

You don’t have to be an admin to help. If you notice something odd-like a pattern of deletions, or a user being blocked repeatedly without explanation-report it. Go to the Administrator Noticeboard. Write a clear, factual post. Link the logs. Don’t accuse. Just ask: "Is this consistent with policy?"
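Direct links to the logs make a noticeboard post much easier for others to verify. `Special:Log` accepts `type` and `user` filters in its URL, so a small helper can generate them. The sketch below assumes the English Wikipedia host; `log_link`, `report_lines`, and `ExampleAdmin` are hypothetical names.

```python
from urllib.parse import urlencode

# Assumption: English Wikipedia; other editions use their own host.
BASE_URL = "https://en.wikipedia.org/w/index.php"


def log_link(user: str, log_type: str) -> str:
    """URL of Special:Log showing actions of one type performed by `user`."""
    query = urlencode({"title": "Special:Log", "type": log_type, "user": user})
    return f"{BASE_URL}?{query}"


def report_lines(user: str, log_types=("delete", "block", "protect")) -> list[str]:
    """One wikitext bullet per log type, each an external link with a short label."""
    return [f"* [{log_link(user, t)} {t} log]" for t in log_types]


for line in report_lines("ExampleAdmin"):
    print(line)
```

Pasting these lines under a neutral question such as "Is this consistent with policy?" keeps the post factual and lets readers check every claim themselves.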

Wikipedia survives because ordinary people care enough to watch over it. You don’t need a special badge. You just need to look.

Can anyone request an admin tools audit?

Yes. Any registered editor can request an audit by posting on the Administrator Noticeboard or the Admin Review page. You need to provide specific examples-links to deleted pages, block logs, or edit histories. Vague complaints won’t trigger an audit. Concrete evidence will.

What happens if an admin loses their tools?

Losing admin tools doesn’t mean losing editing rights. The person can still edit articles, create pages, and participate in discussions like any other user. They just can’t delete, block, or protect content. Many admins return after a cooling-off period and earn their tools back by proving they’ve changed their behavior.

Are admin audits biased?

Audits are conducted by volunteer editors, so human bias can play a role. But the process is designed to minimize it. Auditors must cite Wikipedia policies, not personal opinions. Logs are public. Anyone can challenge their findings. Multiple auditors review each case. This reduces the chance of one person’s bias dominating the outcome.

How often are admin tools audited?

There’s no fixed schedule. Audits happen when there’s a reason, usually community concern. On average, about 15 to 20 audits are opened each year across all language versions of Wikipedia. Most result in no action, but around 30% lead to some form of restriction or warning.

Do admins know they’re being audited?

Yes. The audit process is transparent. The admin is notified immediately when a request is made. They’re invited to respond, explain their actions, and provide context. They can even submit evidence to defend themselves. The audit isn’t a trial; it’s a conversation.