Wikipedia is the largest encyclopedia ever built - and it’s all written by volunteers. That’s impressive, but also messy. How do you know if an article is trustworthy? You don’t just read the final version. You dig into its past. That’s where page histories come in. They’re not just a list of changes. They’re a full audit trail - a record of every edit, every user, every debate that shaped the article you’re reading.

What Exactly Is a Page History?

Every time someone edits a Wikipedia page, the system saves a snapshot. Not just the text. The timestamp. The username or IP address. The edit summary. The size of the change. All of it. This is the page history. It’s public, searchable, and, apart from rare cases where administrators hide a revision for legal or privacy reasons, permanent. You can see who added that controversial claim about climate change in 2019. You can find the edit that removed a misleading statistic last month. You can trace how a simple stub grew into a 10,000-word deep dive.
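The same metadata can be read programmatically. The sketch below assumes the public MediaWiki Action API on English Wikipedia and its `prop=revisions` query; the helper names (`history_query_url`, `parse_revisions`, `size_changes`) are made up for this example, and the code only builds the request and reads an already-decoded JSON response rather than making the network call itself.

```python
from urllib.parse import urlencode

# Public endpoint of the MediaWiki Action API for English Wikipedia.
API_URL = "https://en.wikipedia.org/w/api.php"


def history_query_url(title, limit=50):
    """Build a URL that asks the API for a page's most recent revisions."""
    params = {
        "action": "query",
        "prop": "revisions",
        "titles": title,
        # The metadata fields described above: who, when, why, and how big.
        "rvprop": "ids|timestamp|user|comment|size|sha1",
        "rvlimit": limit,
        "format": "json",
        "formatversion": 2,
    }
    return API_URL + "?" + urlencode(params)


def parse_revisions(payload):
    """Pull the revision list out of a decoded formatversion=2 JSON response."""
    pages = payload.get("query", {}).get("pages", [])
    return pages[0].get("revisions", []) if pages else []


def size_changes(revisions):
    """Map revid -> bytes added or removed, given revisions ordered oldest first."""
    changes = {}
    previous_size = None
    for rev in revisions:
        if previous_size is not None:
            changes[rev["revid"]] = rev["size"] - previous_size
        previous_size = rev["size"]
    return changes
```

By default the API returns the newest revisions first; adding `rvdir=newer` to the parameters should reverse that, which is the order `size_changes` expects.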

Think of it like a GitHub repo for knowledge. Every commit matters. And unlike a corporate document locked behind passwords, this history is open for anyone to inspect. That’s the core promise of Wikipedia: transparency. But transparency doesn’t mean accuracy. You need to know how to read the trail.

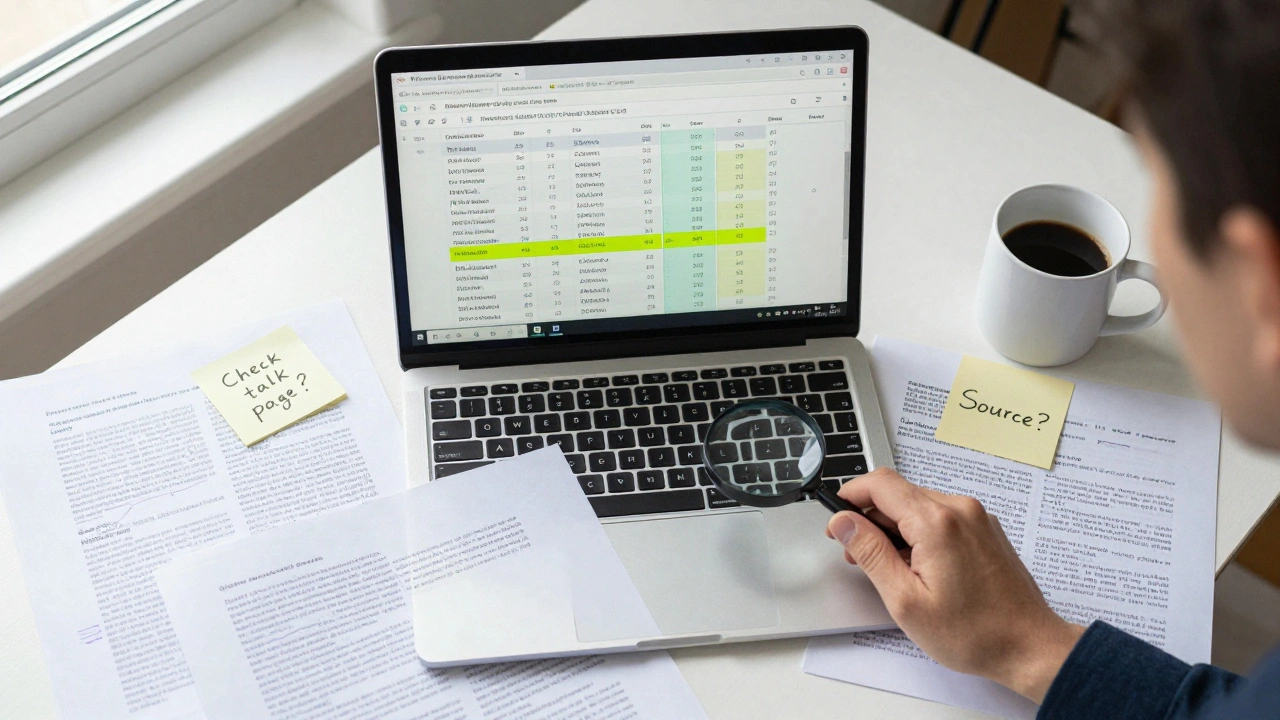

How to Spot Low-Quality Edits

Not all edits improve quality. Some make things worse. Here’s what to look for in the history:

- Reverting edits - If the same sentence gets deleted and restored five times, something’s wrong. It might be a vandalism loop, or a persistent bias. Look at the edit summaries. A one-off "fixing typo" is routine. A string of summaries like "removing false claim" on the same passage is a red flag that the content is disputed.

- Single-user dominance - One person makes 80% of the edits to a page over six months? That’s not collaboration. That’s control. Check their profile. Are they a longtime editor with a solid reputation? Or a new account making sweeping changes to a celebrity biography?

- Empty or vague edit summaries - A blank summary, or one that just says "Fixed", "Updated", or "Added info", tells you nothing. Quality edits explain why they changed something. Poor edits hide behind vagueness.

- Pattern of removal - If citations keep getting deleted without replacement, that’s a sign of source suppression. Look at the removed references. Were they peer-reviewed? From reputable outlets? If so, their removal is a warning.
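Three of these checks can be roughed out in code. This is a minimal sketch, not an established scoring method: it assumes a list of revision dicts ordered oldest first, with `revid`, `user`, `comment`, and `sha1` keys as returned by the MediaWiki API, and the `VAGUE_SUMMARIES` set is an illustrative guess rather than an official list. Reverts are approximated by spotting a content hash that reappears after the page had changed to something else.

```python
from collections import Counter

# Illustrative only: summaries treated as uninformative in this sketch.
VAGUE_SUMMARIES = {"", "fixed", "updated", "added info"}


def find_identity_reverts(revisions):
    """Return revids that restore the exact content of an earlier revision."""
    seen = set()
    previous = None
    reverts = []
    for rev in revisions:
        digest = rev.get("sha1")
        if digest is None:  # hash hidden or missing, so nothing to compare
            continue
        # Same hash as an older revision, but not simply an unchanged page.
        if digest in seen and digest != previous:
            reverts.append(rev["revid"])
        seen.add(digest)
        previous = digest
    return reverts


def top_editor_share(revisions):
    """Return (user, share of all edits) for the most active editor."""
    counts = Counter(rev.get("user", "(hidden)") for rev in revisions)
    if not counts:
        return None, 0.0
    user, edits = counts.most_common(1)[0]
    return user, edits / len(revisions)


def vague_summary_share(revisions):
    """Return the fraction of edits whose summary is blank or uninformative."""
    if not revisions:
        return 0.0
    vague = sum(
        1 for rev in revisions
        if rev.get("comment", "").strip().rstrip(".").lower() in VAGUE_SUMMARIES
    )
    return vague / len(revisions)
```

High numbers from any of these are a prompt to read the edits and the talk page, not a verdict on their own. A single dedicated editor can also be a sign of a well-maintained niche article.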

A 2023 study by the Wikimedia Foundation analyzed 1.2 million article histories. It found that articles with more than three major reverts in the first 30 days were 68% more likely to contain factual errors six months later. That’s not random noise. That’s a pattern.

Tracking Source Evolution

Wikipedia’s strength is citing sources. But sources change. A 2018 news article might be outdated by 2026. That’s why you need to trace the citations over time.

Open a page history. Compare an early revision with the current one and look at the References section of each. Now step back through the revisions. See when the first source was added. Was it a blog post? A press release? Then look for when a scholarly journal replaced it. That’s progress. Now look at the reverse: when a peer-reviewed source got swapped out for a commercial website. That’s degradation.

For example, the article on "CRISPR gene editing" had its first citation in 2012 from a university press release. By 2015, it included papers from Nature and Science. By 2020, it added three more from Cell and the New England Journal of Medicine. That’s a quality arc. But if you check the history of "mRNA vaccines" in early 2021, you’ll see dozens of edits replacing anecdotal claims with clinical trial data - all within a week. That’s how good information wins.
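To follow a citation trail without reading every diff by eye, one option is to compare which domains two revisions cite. The sketch below assumes you already have the raw wikitext of both revisions as strings. The regular expressions are deliberately rough and will miss sources cited without a URL (books, offline journals), and the function names are hypothetical.

```python
import re
from urllib.parse import urlparse

# Matches <ref> and <ref name="..."> openings, but not <references />.
REF_OPEN = re.compile(r"<ref[\s>]", re.IGNORECASE)
# Rough URL matcher that stops at whitespace and common wikitext delimiters.
URL = re.compile(r'https?://[^\s|\]}<>"]+')


def count_refs(wikitext):
    """Count footnote markers in a revision's wikitext."""
    return len(REF_OPEN.findall(wikitext))


def cited_domains(wikitext):
    """Return the set of hostnames that appear in URLs in the wikitext."""
    domains = set()
    for url in URL.findall(wikitext):
        host = urlparse(url).hostname
        if host:
            domains.add(host[4:] if host.startswith("www.") else host)
    return domains


def domain_changes(old_wikitext, new_wikitext):
    """Return (domains added, domains removed) between two revisions."""
    old, new = cited_domains(old_wikitext), cited_domains(new_wikitext)
    return sorted(new - old), sorted(old - new)
```

A blog domain disappearing while a journal domain appears is the "progress" pattern described above; the reverse is worth a closer look.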

Editor Reputation and Consistency

Not all editors are equal. A user who’s edited 500 pages over five years, mostly adding citations and fixing grammar, is more reliable than someone who edits one page every six months - and always adds the same biased phrase.

Open any editor’s contributions page - the "contribs" link next to their username in the page history. Look for:

- Consistency - Do they edit multiple topics, or just one that suits their agenda?

- Collaboration - Do they respond to talk page comments? Do they cite policy guidelines?

- Revert rate - If their edits get undone often, why? Is it because they’re wrong, or because they’re fighting a losing battle against bias?
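A rough profile of an editor can be computed from their contribution list. This sketch assumes a list of dicts shaped like the results of the API’s `list=usercontribs` query with `ucprop=title|tags`, and it assumes the wiki marks undone edits with the `mw-reverted` change tag. Both are assumptions to verify against the wiki you are auditing. The function name and the returned keys are invented for this example.

```python
def summarize_contributions(contribs):
    """Summarize breadth and revert rate from a list of contribution dicts."""
    total = len(contribs)
    if total == 0:
        return {"edits": 0, "distinct_pages": 0, "reverted_share": 0.0}
    # Assumption: an edit that was later undone carries the "mw-reverted" tag.
    reverted = sum(1 for c in contribs if "mw-reverted" in c.get("tags", []))
    pages = {c["title"] for c in contribs}
    return {
        "edits": total,
        "distinct_pages": len(pages),
        "reverted_share": reverted / total,
    }
```

The numbers only tell you where to look. A high revert share does not say whether the editor or the people reverting them had the better sources, so the talk page still matters.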

A 2024 analysis of 200 high-traffic Wikipedia articles found that 73% of factual inaccuracies originated from editors with fewer than 10 total edits. Meanwhile, the top 10% of editors by contribution count were responsible for 61% of all quality improvements. It’s not about quantity - it’s about pattern.

Using Tools to Decode the History

You don’t have to scroll through hundreds of edits manually. Wikipedia has tools built for this.

- Revision history comparison - Select two versions with the radio buttons and click "Compare selected revisions". The tool highlights added and removed text. Use this to spot what got erased.

- Pageviews analysis - If an article suddenly spikes in traffic, check its history. Was it edited right before the spike? Was it edited to add clickbait?

- Wikichanges - A third-party tool that tracks edits across thousands of pages. You can set alerts for specific topics. For example, if someone starts editing all articles about "vaccines" with the same phrase, you’ll know.

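- WikiTrust - An older research project that shaded text by an estimated trust level: the darker the orange background, the less established the text. It no longer appears to be maintained, so treat it as an illustration of the idea rather than a tool to rely on.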

These tools aren’t perfect. But they turn raw data into insight. You’re not guessing anymore. You’re auditing.
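If you have saved the text of two revisions, the same added-and-removed view can be approximated offline. This is a sketch using Python’s standard `difflib` module as a stand-in. It is not how Wikipedia computes its own diffs, and `removed_and_added` is a name chosen for this example.

```python
import difflib


def removed_and_added(old_text, new_text):
    """Return (removed lines, added lines) between two revision texts."""
    removed, added = [], []
    diff = difflib.ndiff(old_text.splitlines(), new_text.splitlines())
    for line in diff:
        if line.startswith("- "):    # present only in the old revision
            removed.append(line[2:])
        elif line.startswith("+ "):  # present only in the new revision
            added.append(line[2:])
    return removed, added
```

Reading the removed list first is a quick way to spot what got erased, for example a citation or a qualifying sentence.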

Why This Matters Beyond Wikipedia

Wikipedia isn’t just a website. It’s a mirror. It reflects how societies build knowledge. When you audit a page history, you’re seeing how truth is negotiated - sometimes fought over - in real time.

Journalists use Wikipedia histories to verify claims. Researchers use them to trace the spread of misinformation. Educators teach students to trace edits as a critical thinking exercise. Even tech companies study Wikipedia’s edit patterns to build better AI moderation tools.

If you can read a page history, you can spot fake news before it goes viral. You can see when a company pushes its narrative through sockpuppet accounts. You can understand why some topics are well-documented and others are empty.

That’s power. And it’s free.

How to Start Auditing Today

You don’t need to be an expert. Just pick one article you use often - maybe "climate change" or "mental health treatments" - and do this:

- Go to the article’s "View history" tab.

- Jump to the earliest edits. The history lists the newest edits first, so use the "oldest" link to reach the start.

- Look for the first major edit that added a citation. What was it?

- Find the passage that has been reverted most often. Read the talk page comments about it.

- Check the top three editors. Do they have long histories? Do they edit other pages?

- Compare the current version to the version from two years ago. What changed? Was it better or worse?
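Two of these steps, finding the top editors and picking the version from two years ago, can be sketched in a few lines. The code assumes revision dicts with `user` and `timestamp` keys, with timestamps in the ISO format the MediaWiki API returns (for example `2023-06-01T00:00:00Z`). The function names are hypothetical.

```python
from collections import Counter
from datetime import datetime

# Timestamp layout assumed for revision dicts, e.g. "2023-06-01T00:00:00Z".
TS_FORMAT = "%Y-%m-%dT%H:%M:%SZ"


def top_editors(revisions, n=3):
    """Return the n most active editors as (user, edit count) pairs."""
    counts = Counter(rev.get("user", "(hidden)") for rev in revisions)
    return counts.most_common(n)


def revision_as_of(revisions, cutoff):
    """Return the latest revision saved at or before cutoff, or None."""
    best, best_time = None, None
    for rev in revisions:
        saved = datetime.strptime(rev["timestamp"], TS_FORMAT)
        if saved <= cutoff and (best_time is None or saved > best_time):
            best, best_time = rev, saved
    return best
```

For the two-year comparison, pass a `cutoff` such as `datetime(2024, 1, 1)` and compare the revision it returns against the current one.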

Do this once a week. You’ll start noticing patterns. You’ll stop trusting the final text. You’ll start trusting the process.

Wikipedia doesn’t claim to be perfect. But its audit trail lets you judge how close it gets. And that’s better than blind trust any day.

Can I trust Wikipedia if I don’t check its history?

You can use Wikipedia as a starting point - but not a final answer. Articles with long, stable histories and multiple reliable citations are generally trustworthy. But if an article has few edits, no citations, or recent controversial changes, treat it with caution. Checking the history doesn’t guarantee truth - but it gives you the tools to spot lies, gaps, and bias.

Are all Wikipedia editors anonymous?

No. Many editors use registered accounts with usernames. Some are professionals - professors, librarians, journalists - who edit under their real names. Others use pseudonyms. But even anonymous edits from IP addresses are logged and trackable. The system doesn’t require identity, but it does require accountability. An IP address that keeps making bad edits can be blocked.

Do vandalism edits affect article quality long-term?

Usually not - because Wikipedia is watched closely. Most vandalism is undone within minutes. But if a bad edit slips through for days, or if it’s subtle (like replacing "scientific consensus" with "some believe"), it can linger. That’s why audit trails matter. You need to look beyond the current version to see what was hidden.

How often do Wikipedia articles get factually corrected?

A 2025 study tracked 10,000 articles over two years. On average, each article received 12 quality improvements - like adding citations or removing unsupported claims - every year. The most active articles (like those on science and medicine) saw over 50 edits per year focused on accuracy. Corrections happen constantly. But they only work if someone is watching.

Can I use Wikipedia history to prove something is false?

Yes, with one caveat: the history shows that a claim was corrected, while the proof itself lives in the sources that replaced it. If an article once claimed something false, and that claim was later removed with evidence, you can point to the edit history as a record of the correction. For example, if a page once said "vaccines cause autism," and that line was deleted in 2018 after multiple peer-reviewed studies were added, the history shows when the correction happened and which studies justified it. That’s not just editing - it’s evidence.
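Finding the exact edit that removed a claim can be automated once you have the text of each revision. The sketch below assumes a list of dicts ordered oldest first, each with a `revid` and a `text` key. The `text` key is a simplification for this example, since the API nests revision content more deeply, and `find_phrase_removals` is a hypothetical helper.

```python
def find_phrase_removals(revisions, phrase):
    """Return revids where phrase was in the previous revision but is gone."""
    needle = phrase.casefold()
    removals = []
    previously_present = False
    for rev in revisions:
        present = needle in rev["text"].casefold()
        if previously_present and not present:
            removals.append(rev["revid"])
        previously_present = present
    return removals
```

Several revids in the result mean the phrase was removed, restored, and removed again, which is itself a sign of a dispute worth reading about on the talk page.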