Key Takeaways

- Bots handle the "grunt work" like fixing links and deleting spam.

- Strict community rules prevent bots from causing accidental mass damage.

- Modern bots use machine learning to spot vandalism in real time.

- Human oversight remains the final word on content accuracy.

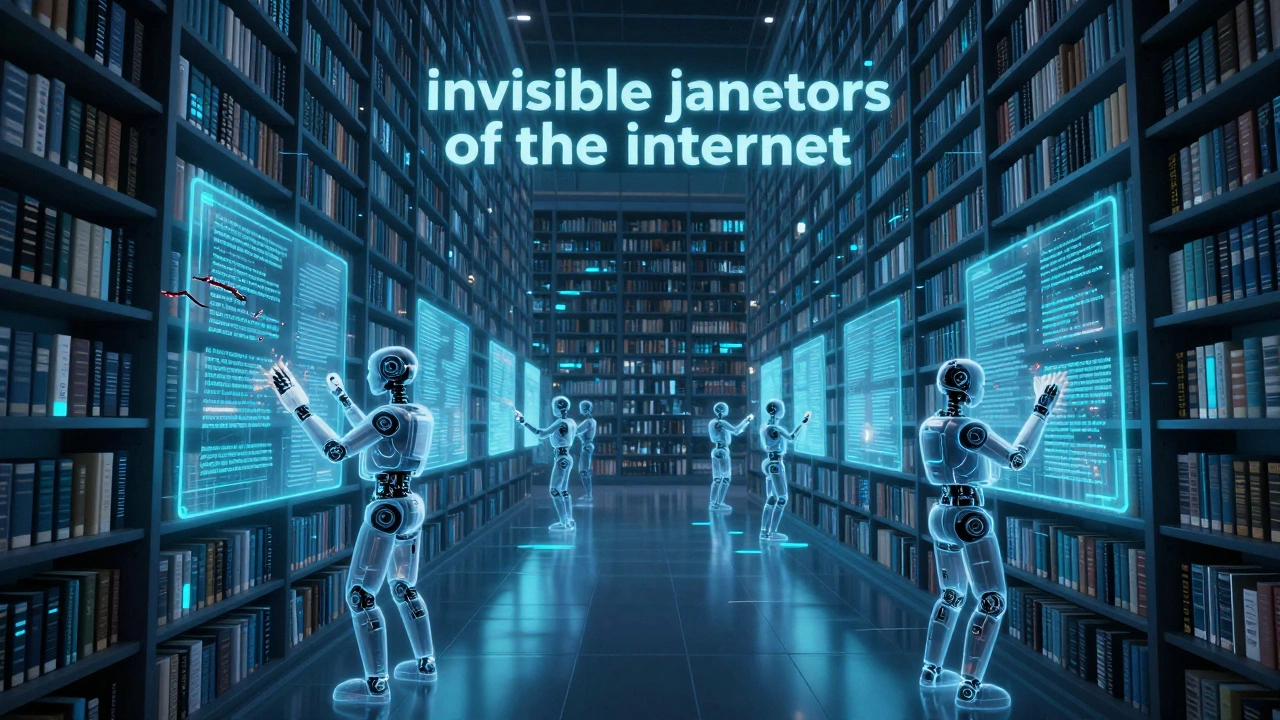

The Invisible Janitors of the Internet

Most people think of a bot as a chat interface or a social media spammer. But on Wikipedia, bots are more like invisible janitors. They don't write deep philosophical essays; they scrub the floors. They look for patterns. For example, if a bot sees a word like "color" used in a British English article where "colour" is the standard, it can swap thousands of instances across the site in seconds. A human would take weeks to do that and probably miss a few.

These tools operate via the edit module of the MediaWiki Action API (often just called the Edit API), which lets software submit changes to the site's servers without going through the browser interface. Instead of clicking "Edit" and typing in a browser, the bot sends a request for each page that says, in effect, "Change X to Y in this article," and it can repeat that across 500 articles in a short time. This efficiency is what allows Wikipedia to maintain a consistent style across its many language editions and millions of pages.
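As a rough illustration of the request described above, the sketch below prepares the form fields for one edit per page. The field names (`action`, `title`, `text`, `summary`, `bot`, `token`, `format`) follow the Action API's edit module; the helper names, the sample page, and the placeholder token are assumptions made up for this example, and nothing is actually sent over the network.

```python
import re


def replace_word(wikitext: str, old: str, new: str) -> str:
    """Replace whole-word, case-sensitive occurrences of `old` with `new`."""
    return re.sub(rf"\b{re.escape(old)}\b", new, wikitext)


def build_edit_request(title: str, new_text: str, summary: str, csrf_token: str) -> dict:
    """Build the POST fields for one action=edit request (hypothetical helper)."""
    return {
        "action": "edit",
        "title": title,
        "text": new_text,      # full replacement text for the page
        "summary": summary,    # edit summary shown in the page history
        "bot": "1",            # mark the edit as a bot edit (requires the bot right)
        "token": csrf_token,   # CSRF token obtained from the API after logging in
        "format": "json",
    }


# Hypothetical in-memory page standing in for text fetched from the wiki.
pages = {"Example article": "The color of the flag is red. Colorful banners hang nearby."}

requests_to_send = [
    build_edit_request(
        title,
        replace_word(text, "color", "colour"),
        "Spelling consistency",
        "PLACEHOLDER_TOKEN",
    )
    for title, text in pages.items()
]
```

A real bot would log in, fetch a token, and POST each dictionary to the wiki's `api.php` endpoint, usually with a delay between edits. Matching on whole words keeps "Colorful" untouched here, which hints at why even simple replacements need careful patterns.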

Fighting the Vandalism War

Vandalism is a constant threat. Whether it's a bored teenager changing a political leader's birth date to something offensive or a company trying to scrub a negative review from its corporate page, the attacks are relentless. To counter this, the English Wikipedia relies on ClueBot NG, a bot that uses machine learning to detect bad edits.

ClueBot NG doesn't just look for "bad words." It analyzes the pattern of the edit. If a brand-new account suddenly deletes 90% of an article's text and replaces it with a single sentence, the bot flags it as likely vandalism and reverts the change, often within seconds. It's like having a security guard who can watch every door in a giant building at once. Because it reacts so quickly, most readers never see the vandalism at all.
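To make the "pattern of the edit" idea concrete, here is a deliberately simple sketch that scores an edit using two signals from the example above: how much text was removed and how new the account is. This is not ClueBot NG's actual model, which is a trained classifier; the `Edit` class, the weights, and the threshold are all assumptions chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Edit:
    old_text: str
    new_text: str
    editor_edit_count: int  # edits the account had made before this one


def removed_fraction(edit: Edit) -> float:
    """Fraction of the original length that disappeared (0.0 if the page grew)."""
    if not edit.old_text:
        return 0.0
    shrink = len(edit.old_text) - len(edit.new_text)
    return max(0.0, shrink / len(edit.old_text))


def vandalism_score(edit: Edit) -> float:
    """Combine the two hand-picked signals into a score between 0 and 1."""
    # Assumed weights: brand-new accounts count fully, established ones much less.
    newness = 1.0 if edit.editor_edit_count < 10 else 0.2
    return removed_fraction(edit) * newness


def should_revert(edit: Edit, threshold: float = 0.8) -> bool:
    """Flag the edit when its score reaches the (assumed) threshold."""
    return vandalism_score(edit) >= threshold


# A new account replacing a 1,000-character article with 50 characters.
blanking = Edit(old_text="x" * 1000, new_text="y" * 50, editor_edit_count=0)
print(should_revert(blanking))  # prints: True
```

A fixed rule like this would misfire on legitimate large removals, which is one reason a learned model with many more features is used in practice.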

| Bot Type | Primary Goal | Example Task | Risk Level |
|---|---|---|---|
| Maintenance Bots | Formatting & Style | Fixing broken URL links | Low |
| Anti-Vandalism Bots | Content Protection | Reverting spam edits | Medium |
| Content Generation | Data Expansion | Creating lists of cities | High |
| Admin Bots | User Management | Blocking banned accounts | Medium |

The Strict Rules of Bot Deployment

You can't just write a script and let it loose on the encyclopedia. If someone wrote a buggy bot that accidentally deleted the first paragraph of every page, it would be a digital catastrophe. To prevent this, the community enforces a rigorous approval process, known on the English Wikipedia as Bots/Requests for approval. Before a bot goes live, the operator must explain exactly what the bot does, demonstrate its changes in a limited trial that reviewers can inspect, and get approval from the Bot Approvals Group, a set of experienced human reviewers.

Once approved, the bot is given a special flag. This tells the system that the account is an automated tool, so the bot's large volume of edits is hidden by default and doesn't flood the "recent changes" feed. If a bot starts acting up, an administrator can block the account and stop it immediately. This balance between automated speed and human judgment is the secret to Wikipedia's quality control.
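Besides being stopped from the outside, a bot can also check a stop signal itself before each edit, so its operator has a quick way to halt it. The following is a minimal sketch of that idea, not a description of any particular bot; `edit_page` and `is_enabled` are hypothetical callbacks (for example, `is_enabled` might read an on/off setting the operator controls).

```python
from typing import Callable, Iterable


def run_bot(
    titles: Iterable[str],
    edit_page: Callable[[str], None],
    is_enabled: Callable[[], bool],
) -> list[str]:
    """Edit pages one by one, stopping as soon as the stop signal is raised."""
    done = []
    for title in titles:
        if not is_enabled():  # checked before every edit, not just once at startup
            break
        edit_page(title)
        done.append(title)
    return done
```

Checking before every edit, rather than once per run, limits the damage to at most the edit already in progress when the switch is flipped.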

Handling Big Data and Content Generation

Sometimes, the goal isn't just cleaning up; it's building. There are thousands of small towns, chemical compounds, or astronomical objects that deserve an article but are too numerous for humans to write individually. This is where content generation bots come in. They use structured data from sources like Wikidata, a central database of facts, to build "stubs."

For instance, if Wikidata knows that "City X" is in "Country Y" and has a population of "10,000," a bot can automatically generate a basic sentence: "City X is a city in Country Y with a population of 10,000." It's not a masterpiece of literature, but it provides a foundation. A human editor can then come along later and add the nuance, history, and flavor that a machine simply can't produce.
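The city example above can be sketched as a small template function. The record below is a hand-written dictionary with made-up field names standing in for data a bot would fetch from Wikidata; real bots work with Wikidata's own property identifiers and usually add infoboxes, categories, and references as well.

```python
def build_stub(name: str, country: str, population: int) -> str:
    """Turn one structured record into a one-sentence stub."""
    # The `:,` format spec inserts thousands separators (10000 -> "10,000").
    return f"{name} is a city in {country} with a population of {population:,}."


# Hypothetical record; the keys are illustrative, not Wikidata property names.
record = {"name": "City X", "country": "Country Y", "population": 10000}

print(build_stub(**record))
# prints: City X is a city in Country Y with a population of 10,000.
```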

The Conflict Between Automation and Nuance

Bots are great at facts, but they are terrible at context. A bot might see a phrase that looks like a typo and "fix" it, not realizing that the phrase is actually a specific local dialect or a technical term in a niche field. This creates a tension between efficiency and accuracy.

This is why the MediaWiki software, the engine that powers Wikipedia, is designed to be highly transparent. Every single bot edit is logged. If a bot makes a mistake, any user can see who (or what) did it and undo the change. The bot doesn't get offended; it just gets its code updated to be more precise next time.

Can bots write entire Wikipedia articles?

Only basic ones. Bots can create "stubs" using data from Wikidata, but they cannot write comprehensive, nuanced articles because they lack the ability to synthesize complex information or provide critical analysis. Most detailed content is still written by humans.

Do bots replace human editors?

No, they complement them. Bots handle the boring, repetitive tasks (like fixing formatting or deleting spam) so that human editors can spend their time on actual research and writing.

How does Wikipedia stop a "rogue bot"?

Administrators have the power to immediately block any bot account. Additionally, the community monitors "Recent Changes" feeds, and if a bot begins making incorrect edits, it is flagged and stopped manually.

What is the difference between a bot and an AI like ChatGPT?

Most Wikipedia bots are based on strict "if-then" logic or specific machine learning models for pattern recognition. Unlike LLMs (Large Language Models), they aren't designed to "chat," and they don't generate free-form text that can contain hallucinations; they are designed to execute precise, predictable changes to pages.

Can I create my own Wikipedia bot?

Yes, but you must follow the community guidelines. You need to create a bot account, submit a formal request on the Bots/Requests for approval page, and show that your bot doesn't cause damage before it is allowed to edit live pages.

Next Steps for New Contributors

If you've noticed bot activity while editing and want to help, don't be afraid to revert a bot if it's wrong. Even the best scripts make mistakes. If you see a bot repeatedly failing at a task, you can leave a note on the talk page of the bot or its operator to raise the alarm.

For those who know how to code, exploring the MediaWiki API is a great way to contribute. Start by writing a small script that helps you find articles needing a specific citation style, then move up to the formal bot request process once your tool is stable.
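As one possible starting point, the sketch below scans wikitext for references that contain nothing but a bare URL, a common citation cleanup target. The choice of bare URLs as the "citation style" problem, the regular expression, and the sample pages are all assumptions for illustration; a real script would fetch page text through the API instead of using a hard-coded dictionary.

```python
import re

# Matches <ref>...</ref> pairs whose only content is a URL, optionally in [brackets].
# Self-closing tags such as <ref name="x" /> are skipped because "/" is excluded.
BARE_URL_REF = re.compile(
    r"<ref[^>/]*>\s*\[?https?://[^\s\]<]+\]?\s*</ref>",
    re.IGNORECASE,
)


def count_bare_url_refs(wikitext: str) -> int:
    """Count references that consist only of a bare URL."""
    return len(BARE_URL_REF.findall(wikitext))


def pages_needing_cleanup(pages: dict[str, str]) -> list[str]:
    """Return the titles (sorted) of pages with at least one bare-URL reference."""
    return sorted(t for t, text in pages.items() if count_bare_url_refs(text) > 0)


# Hypothetical sample pages standing in for text fetched from the wiki.
sample = {
    "Page A": "A fact.<ref>https://example.org/page</ref>",
    "Page B": "A fact.<ref>{{cite web |url=https://example.org |title=Example}}</ref>",
    "Page C": "No references here.",
}

print(pages_needing_cleanup(sample))  # prints: ['Page A']
```

A read-only report like this changes nothing on the wiki, which makes it a low-risk first project before attempting automated edits.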