Imagine a massive explosion in a city center or a sudden political coup. Within seconds, thousands of people flock to a single page to figure out what happened. In the first ten minutes, that page is usually a mess of rumors, tweets from unverified accounts, and sheer panic. But an hour later, it's a structured summary of facts. How does a site with no paid editors and a completely open-door policy manage to clean up the chaos without crashing into a pit of lies? The secret isn't a magical algorithm; it's a high-stress social system designed to survive the Wikipedia misinformation storm.

Quick Takeaways

- Rapid Response: Experienced editors, administrators, and bots lock pages and revert vandalism in real time.

- Source Hierarchy: Information is only as good as its citation; social media posts are generally ignored in favor of established news wires.

- Protective Layers: Pages can be restricted to prevent new or anonymous users from making edits during a crisis.

- Consensus Building: Conflict is resolved through public talk pages where evidence outweighs opinion.

The First Line of Defense: Page Protection

When a news event goes viral, a Wikipedia page becomes a target. You get "edit wars" where two groups fight over a narrative, or trolls intentionally adding jokes to a tragedy. To stop this, Wikipedia uses Page Protection, a mechanism that limits who can edit a specific entry.

If a page is under heavy attack, it might be moved to "Semi-protected" status. This means only users whose accounts are at least four days old and who have made at least ten edits can change the text. If the situation is truly volatile, such as during a high-profile election result, it can be moved to "Full protection," where only administrators can make changes. This creates a cooling-off period, giving editors time to verify the facts before they are blasted to millions of readers.
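To make the two tiers concrete, here is a minimal Python sketch of the rule as described above. It is a toy model, not MediaWiki's actual code (MediaWiki handles this through configurable user groups and permissions). The `User` and `Protection` types and the `can_edit` helper are hypothetical names chosen for illustration; the four-day and ten-edit thresholds are the ones stated in the text.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone
from enum import Enum
from typing import Optional


class Protection(Enum):
    NONE = "none"  # anyone, including anonymous editors
    SEMI = "semi"  # established (autoconfirmed) accounts only
    FULL = "full"  # administrators only


@dataclass
class User:
    registered_at: Optional[datetime]  # None models an anonymous (IP) editor
    edit_count: int = 0
    is_admin: bool = False


# Thresholds taken from the semi-protection rule described in the text.
MIN_ACCOUNT_AGE = timedelta(days=4)
MIN_EDITS = 10


def is_autoconfirmed(user: User, now: datetime) -> bool:
    """An account qualifies once it is old enough AND has enough edits."""
    if user.registered_at is None:
        return False
    old_enough = now - user.registered_at >= MIN_ACCOUNT_AGE
    return old_enough and user.edit_count >= MIN_EDITS


def can_edit(user: User, level: Protection, now: datetime) -> bool:
    """Decide whether `user` may edit a page at the given protection level."""
    if level is Protection.NONE:
        return True
    if level is Protection.SEMI:
        return user.is_admin or is_autoconfirmed(user, now)
    return user.is_admin  # Protection.FULL


if __name__ == "__main__":
    now = datetime(2024, 1, 10, tzinfo=timezone.utc)
    editors = {
        "anonymous": User(registered_at=None),
        "day-old account": User(now - timedelta(days=1), edit_count=3),
        "regular": User(now - timedelta(days=30), edit_count=250),
        "admin": User(now - timedelta(days=2000), edit_count=50_000, is_admin=True),
    }
    for level in Protection:
        allowed = [name for name, u in editors.items() if can_edit(u, level, now)]
        print(level.value, allowed)
    # none ['anonymous', 'day-old account', 'regular', 'admin']
    # semi ['regular', 'admin']
    # full ['admin']
```

Each step up in protection shrinks the pool of people who can touch the page, which is exactly the cooling-off effect described above.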

The War on Unreliable Sources

Wikipedia doesn't actually "do" journalism. It doesn't have reporters on the ground. Instead, it relies on Verifiability, the rule that information must be attributable to a reliable, published source. During a major news event, the temptation to cite a viral X (formerly Twitter) post is huge. However, Wikipedia's community accepts social media posts only in narrow contexts, such as a person's own statements about themselves, and almost never as proof of a complex fact.

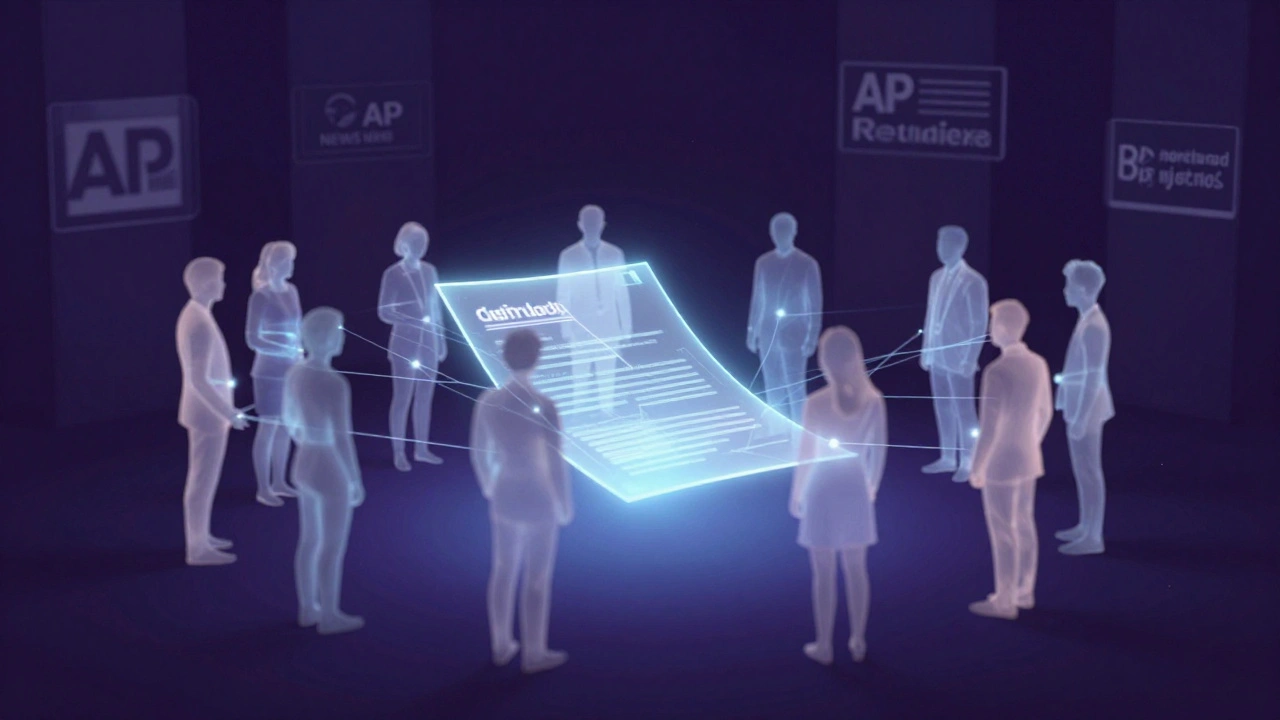

Editors prioritize "gold standard" sources. They look for reporting from the Associated Press or Reuters, agencies known for strict factual standards and speed. If a blogger claims a building collapsed but Reuters hasn't confirmed it, the claim is stripped out. This delay might make Wikipedia feel slower than a live feed, but it's exactly why the site remains trustworthy while social media descends into chaos.

| Source Type | Trust Level | Usage on Wikipedia |
|---|---|---|
| Wire Services (AP, Reuters) | Highest | Primary basis for breaking facts |
| Established National Press | High | Used for context and detailed reporting |
| Local News Outlets | Medium | Useful for geography, but cross-referenced |
| Social Media / Viral Posts | Low | Generally discarded unless citing a public figure |
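The table reads naturally as a ranking. The Python sketch below turns it into a filter for a single breaking claim, purely as an illustration: in practice editors make this call through judgment and discussion, not code. The `Trust` tiers, the `SOURCE_TIERS` labels, and `keep_claim` are hypothetical, and reading "cross-referenced" as "held until a higher-tier source confirms it" is an assumption.

```python
from enum import IntEnum


class Trust(IntEnum):
    LOW = 1      # social media / viral posts
    MEDIUM = 2   # local news outlets
    HIGH = 3     # established national press
    HIGHEST = 4  # wire services such as AP and Reuters


# Hypothetical labels for source categories, mapped onto the table's tiers.
SOURCE_TIERS = {
    "wire": Trust.HIGHEST,
    "national": Trust.HIGH,
    "local": Trust.MEDIUM,
    "social": Trust.LOW,
}


def keep_claim(sources: list[str], public_figure_statement: bool = False) -> bool:
    """Toy version of the table: keep a breaking claim only if a high-trust
    outlet backs it. Unknown source types (e.g. a blog) count as LOW."""
    tiers = [SOURCE_TIERS.get(s, Trust.LOW) for s in sources]
    if any(t >= Trust.HIGH for t in tiers):
        return True
    # The table's one carve-out: a social post may support a claim about
    # what a public figure said on their own account.
    return public_figure_statement and "social" in sources


# A blogger reports a collapse that no wire service has confirmed yet:
print(keep_claim(["blog"]))          # False: stripped out
print(keep_claim(["blog", "wire"]))  # True: a wire service now confirms it
print(keep_claim(["local"]))         # False: held until a higher tier confirms it
```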

The Invisible Army: Bot and Human Moderation

Humans are slow, but bots are fast. On English Wikipedia, a volunteer-run bot called ClueBot NG scores edits to articles as they are made. If someone changes "The President signed the bill" to something profane or nonsensical, ClueBot NG recognizes the pattern of vandalism and typically reverts the change within seconds. It doesn't have to "understand" the news; it just recognizes the signature of a troll.

While bots handle the obvious trash, human administrators and veteran editors handle the nuance. These experienced volunteers monitor the "Recent Changes" feed. When a major event breaks, they often form informal "strike teams" in the background, coordinating via IRC or Discord to keep the page stable. They aren't just deleting bad info; they are actively hunting for the most accurate updates to avoid the information vacuum that rumors usually fill.

Managing Conflict through Talk Pages

What happens when two reliable sources disagree? This is where the real battle for truth happens. Instead of just deleting each other's work, editors move the fight to the Talk page, a discussion forum attached to every article and reachable from the "Talk" tab at the top of the page.

On these pages, you'll find intense debates. One editor might argue that a specific death toll is accurate because it comes from a government agency, while another argues the agency is lying and points to an independent NGO report. They use a process called "consensus building." They don't vote on what is true; they agree on which source is more credible based on the site's established guidelines. This helps ensure that the final article represents a balanced view of the available evidence rather than the opinion of the loudest person in the room.

The Danger of 'Confirmation Bias' in Editing

Even with all these tools, Wikipedia isn't perfect. The biggest threat isn't actually a random troll, but "systemic bias." Because the editors are volunteers, they often have their own perspectives. During a political crisis, editors from one side of the spectrum might be more likely to trust sources that align with their worldview.

To fight this, Wikipedia encourages the use of Neutral Point of View (NPOV). This isn't about finding a "middle ground" (which can lead to false balance), but about describing the facts as they are reported without using loaded language. If a source calls someone a "freedom fighter" and another calls them a "terrorist," a good Wikipedia editor will write: "He is described by X as a freedom fighter and by Y as a terrorist." This shifts the focus from *what* the truth is to *who* is saying what.

Why does Wikipedia sometimes feel slow to update during a crisis?

Wikipedia prioritizes accuracy over speed. While a tweet can go viral in seconds, Wikipedia editors often wait for multiple reliable news outlets to confirm a fact before adding it. This "lag" is a deliberate feature to prevent the spread of misinformation.

Can anyone really be trusted to edit a news page?

While anyone can technically edit, the community doesn't trust every edit. Most breaking news pages are heavily monitored by veteran editors and protected by bots. If a new or anonymous user adds a fake claim, it is usually reverted quickly, either by a bot or by someone with a more established track record.

What is the difference between an editor and an administrator?

An editor is anyone who changes a page's content. An administrator is a trusted user with "technical tools," such as the ability to block users, protect pages from editing, and delete pages entirely. They act more like moderators than contributors.

How do editors handle government censorship during news events?

When governments try to scrub information or push a specific narrative, Wikipedia editors often rely on archived versions of websites (like the Wayback Machine) and reports from international human rights organizations to maintain an accurate record.

Do bots ever make mistakes and delete real news?

Yes, bots can occasionally trigger "false positives" by flagging legitimate edits as vandalism. However, humans constantly monitor these logs and can easily restore any accidentally deleted information.

What to do when you spot an error

If you're reading a page during a live event and see something that looks wrong, don't just ignore it. You have a few options depending on your comfort level:

- The "Talk" Tab: If the page is protected, go to the Talk tab and leave a note with a link to a reliable source proving the error. This is the most effective way to get the attention of an editor who has permission to make the change.

- Correct and Cite: If the page is open, fix the error and immediately add a citation. An edit without a source is almost always reverted; an edit with a source is likely to stay.

- Flagging: If you see blatant hate speech or harassment, use the "Report" tool, which allows admins to take fast action against the user.
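For the "Correct and Cite" option, a citation on English Wikipedia is usually written as a template such as `{{cite news}}` wrapped in `<ref>` tags. The hypothetical Python helper below assembles one from a few common parameters (`url`, `title`, `work`, `date`, `access-date`); the function name and example values are illustrative only, and the visual editor's Cite button can generate similar markup automatically.

```python
from datetime import date


def cite_news(url: str, title: str, work: str, published: date, accessed: date) -> str:
    """Build a wikitext <ref> that wraps a {{cite news}} template (hypothetical helper)."""
    params = {
        "url": url,
        "title": title,
        "work": work,
        "date": published.isoformat(),  # ISO dates such as 2024-05-01
        "access-date": accessed.isoformat(),
    }
    # A literal "|" would split a template parameter, so escape it as {{!}}.
    escaped = {key: value.replace("|", "{{!}}") for key, value in params.items()}
    body = " ".join(f"|{key}={value}" for key, value in escaped.items())
    return f"<ref>{{{{cite news {body}}}}}</ref>"


print(cite_news(
    "https://www.example.com/story",
    "Example headline",
    "Reuters",
    published=date(2024, 5, 1),
    accessed=date(2024, 5, 2),
))
# <ref>{{cite news |url=https://www.example.com/story |title=Example headline |work=Reuters |date=2024-05-01 |access-date=2024-05-02}}</ref>
```

Pasting a reference like this right after the corrected sentence is what turns "an edit without a source" into one that is likely to stay.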

The system works because it treats the encyclopedia as a living document. It assumes the first draft is wrong and that the truth emerges through the friction of a thousand people arguing over the evidence.