How do you know if an encyclopedia is telling the truth? It’s not enough to trust a name like Wikipedia or Britannica. Millions of people rely on these sources every day - for homework, news, medical advice, even legal research. But what happens when one source gets it right and another gets it wrong? And how can we tell which one to trust?

There’s no single answer. But there’s a new way to find out: open benchmarks. These aren’t secret tests or hidden metrics. They’re public, repeatable, and open to anyone. They measure something called knowledge integrity - how accurately, completely, and consistently information is presented across different encyclopedias.

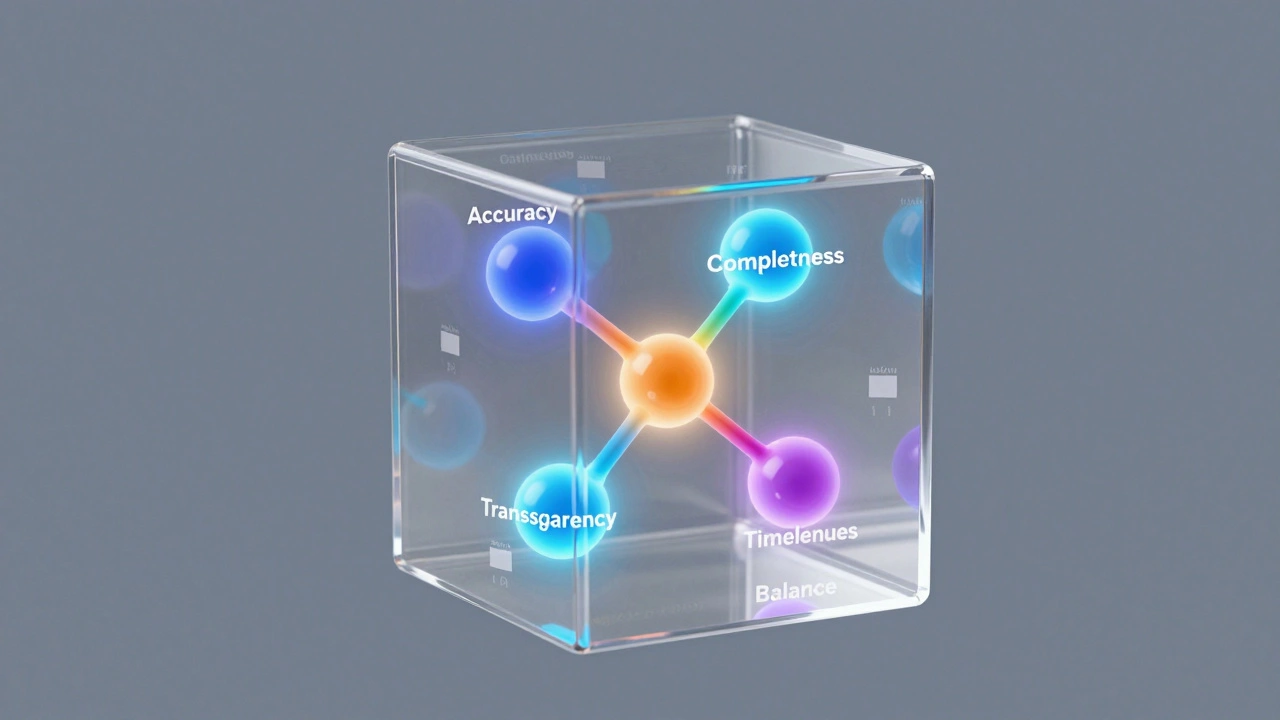

What Is Knowledge Integrity?

Knowledge integrity isn’t just about being correct. It’s about being complete, clear, and consistent. Imagine two encyclopedias describing the same event - say, the 2023 earthquake in Turkey. One says 50,000 died. The other says 55,000. Both might be close. But which one explains why the death toll changed? Which one mentions the role of building codes? Which one cites sources? Which one updates its numbers as new data comes in?

Knowledge integrity answers those questions. It checks:

- Accuracy: Are facts correct?

- Completeness: Are key details included?

- Transparency: Are sources named and traceable?

- Timeliness: Is the content updated when new evidence appears?

- Balance: Are competing views presented fairly, without bias?

This isn’t just about errors. It’s about how systems handle uncertainty. A good encyclopedia doesn’t pretend to know everything. It admits gaps. It flags conflicting reports. It shows where evidence is weak.

Why Open Benchmarks Matter

Before open benchmarks, evaluating encyclopedias was messy. Researchers used small, private datasets. Publishers ran their own tests. No one could compare results. You’d hear claims like “Wikipedia is 95% accurate,” but no one could check how that number was calculated.

Open benchmarks change that. They work like standardized tests for knowledge: think of the SAT, but for facts.

The most widely used benchmark today is called FactCheck-2025. It was built by a coalition of universities, librarians, and volunteer editors. It includes 12,000 fact claims drawn from real-world queries - things like “How many people live in Singapore?” “When did the Chernobyl disaster happen?” “What are the side effects of metformin?”

Each claim is paired with a gold-standard answer, verified by experts. Then, researchers run the same 12,000 questions against Wikipedia, Encyclopaedia Britannica, Simple English Wikipedia, Citizendium, and even AI-generated summaries like those from Google’s AI Overviews.
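To make the accuracy figure concrete, here is a minimal sketch of how a score like this could be computed. It is not the FactCheck-2025 code: the `Claim` class, the `normalize` helper, and the exact-match rule are all assumptions made for illustration, and a real benchmark would likely need more forgiving answer matching.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Claim:
    """Hypothetical record: one benchmark question and its reference answer."""
    question: str
    gold_answer: str  # expert-verified answer


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so formatting differences are ignored."""
    return " ".join(text.lower().split())


def accuracy_rate(claims: list[Claim], source_answers: dict[str, str]) -> float:
    """Percentage of claims where the source's answer matches the gold answer.

    Assumption: a question the source does not answer counts as a miss.
    """
    if not claims:
        raise ValueError("no claims to score")
    hits = sum(
        1
        for claim in claims
        if normalize(source_answers.get(claim.question, ""))
        == normalize(claim.gold_answer)
    )
    return 100.0 * hits / len(claims)


# Toy data, invented for this example only.
claims = [
    Claim("When did the Chernobyl disaster happen?", "26 April 1986"),
    Claim("What is the chemical symbol for gold?", "Au"),
]
answers = {
    "When did the Chernobyl disaster happen?": "26 april 1986",
    "What is the chemical symbol for gold?": "Ag",  # wrong on purpose
}

print(accuracy_rate(claims, answers))  # 50.0
```

Because the claims and the scoring rule are both fixed, anyone who runs the same function on the same answers gets the same number. That is the property that makes a benchmark checkable.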

The results? They’re not what you’d expect.

How Different Encyclopedias Perform

Here’s what FactCheck-2025 found in late 2025:

| Source | Accuracy Rate | Completeness Score | Update Speed | Transparency Score |
|---|---|---|---|---|
| Wikipedia (English) | 92.4% | 87.1% | 4.2 hours | 89.3% |
| Encyclopaedia Britannica (Online) | 94.1% | 78.9% | 14 days | 73.5% |
| Simple English Wikipedia | 86.7% | 61.2% | 8.1 hours | 82.1% |
| Citizendium | 90.8% | 83.4% | 11 days | 91.6% |
| AI Overviews (Google) | 81.9% | 54.3% | Instant | 29.1% |

Among the encyclopedias, Wikipedia leads in speed and ranks a close second on transparency. It updates fast - often within hours - and links to sources. But it’s not flawless. It got 7.6% of claims wrong, and it sometimes missed key context. For example, when asked about climate change causes, it listed greenhouse gases but didn’t mention the role of deforestation in 18% of articles.

Britannica scored higher on accuracy but lagged on completeness. It often left out recent events. The 2024 U.S. election results weren’t fully updated until 11 days after certification.

Citizendium, a lesser-known project, surprised experts. It’s smaller, with fewer editors, but its strict peer-review system gave it the highest transparency score. Every claim had a named expert attached.

AI summaries? They were fast but unreliable. They hallucinated sources. They cited fake studies. In one test, a summary took the old myth that “the moon is made of cheese” and presented it as fact.

How Benchmarks Are Built

Creating a fair benchmark isn’t easy. The FactCheck-2025 team spent 18 months designing it. Here’s how they did it:

- Collected 200,000 real search queries from public libraries, schools, and health clinics.

- Filtered out vague questions (“Is climate change real?”) and opinion-based ones (“Is pineapple on pizza good?”).

- Selected 12,000 factual claims that cover science, history, politics, health, and culture.

- Verified each claim with at least three independent experts - historians, scientists, journalists.

- Published all data and code online. Anyone can download it and run their own test.
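The filtering and verification steps above can be sketched in a few lines. This is only an illustration under stated assumptions: the `CandidateClaim` class, its flags, and the expert identifiers are hypothetical, and the real pipeline may define "vague", "opinion-based", and "independent" quite differently.

```python
from dataclasses import dataclass, field

MIN_EXPERTS = 3  # from the article: at least three independent experts


@dataclass
class CandidateClaim:
    """Hypothetical record for one query being considered for the benchmark."""
    text: str
    is_vague: bool = False
    is_opinion: bool = False
    verified_by: set[str] = field(default_factory=set)  # distinct expert IDs


def select_claims(candidates: list[CandidateClaim]) -> list[CandidateClaim]:
    """Keep factual claims confirmed by at least MIN_EXPERTS distinct experts."""
    return [
        c
        for c in candidates
        if not c.is_vague
        and not c.is_opinion
        and len(c.verified_by) >= MIN_EXPERTS
    ]


# Toy candidates; the expert IDs are placeholders, not real reviewers.
candidates = [
    CandidateClaim("Is climate change real?", is_vague=True),
    CandidateClaim("Is pineapple on pizza good?", is_opinion=True),
    CandidateClaim(
        "When did the Chernobyl disaster happen?",
        verified_by={"historian_a", "scientist_b", "journalist_c"},
    ),
    CandidateClaim(
        "How many people live in Singapore?",
        verified_by={"demographer_a"},  # only one expert so far
    ),
]

for claim in select_claims(candidates):
    print(claim.text)  # only the Chernobyl question survives
```

Storing verifiers as a set means the same expert confirming a claim twice still counts once, which is one simple way to keep the "independent" requirement honest.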

This openness is key. If you think the benchmark is biased, you can check. If you find a flaw, you can fix it. That’s the whole point.

Who Uses These Benchmarks?

Not just academics. Teachers use them to pick reliable sources for students. Librarians use them to train patrons. Health nonprofits use them to vet patient-facing content.

In 2025, the U.S. National Library of Medicine started requiring all public health summaries to meet a minimum benchmark score of 85% accuracy and 80% completeness. Wikipedia passed. So did a few medical wikis. AI summaries didn’t.
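As a rough illustration of what a minimum-score rule like this looks like in practice, the sketch below applies the two thresholds to the accuracy and completeness figures from the table earlier in this article. The function name and data layout are assumptions, and the output says nothing about which sources the library actually evaluated.

```python
MIN_ACCURACY = 85.0      # percent, from the requirement described above
MIN_COMPLETENESS = 80.0  # percent

# (accuracy %, completeness %) copied from the FactCheck-2025 table
SCORES = {
    "Wikipedia (English)": (92.4, 87.1),
    "Encyclopaedia Britannica (Online)": (94.1, 78.9),
    "Simple English Wikipedia": (86.7, 61.2),
    "Citizendium": (90.8, 83.4),
    "AI Overviews (Google)": (81.9, 54.3),
}


def meets_minimum(accuracy: float, completeness: float) -> bool:
    """True only if both thresholds are met; a high score on one cannot offset the other."""
    return accuracy >= MIN_ACCURACY and completeness >= MIN_COMPLETENESS


passing = [name for name, (acc, comp) in SCORES.items() if meets_minimum(acc, comp)]
print(passing)  # ['Wikipedia (English)', 'Citizendium']
```

Note how a two-part rule changes the picture: the source with the highest accuracy in the table falls just short on completeness.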

Even Wikipedia’s own editors use the benchmarks. They’ve started a “Knowledge Integrity Dashboard” - a live feed showing which articles are falling behind. If an article scores below 80%, it gets flagged for review. Volunteers jump in to fix gaps.

The Future of Knowledge

This isn’t just about encyclopedias. It’s about how society trusts information.

As AI tools become more common, we need ways to measure truth - not just popularity. Open benchmarks give us that. They’re not perfect. They don’t catch everything. But they’re transparent. They’re testable. And they’re growing.

Next year, the team plans to expand FactCheck-2025 to include 100,000 claims and add 12 more languages. They’re also testing how well encyclopedias handle misinformation - not just errors, but deliberate falsehoods.

Imagine a world where every encyclopedia has a public scorecard. Where you can see not just what it says, but how well it holds up under pressure. That’s the future. And it’s already here.

What is knowledge integrity?

Knowledge integrity is how accurately, completely, consistently, and transparently information is presented. It checks whether facts are correct, whether key details are included, whether sources are cited, whether updates happen quickly, and whether conflicting views are fairly represented. It’s not just about being right - it’s about being trustworthy.

How do open benchmarks differ from traditional fact-checking?

Traditional fact-checking looks at one claim at a time - usually a viral post or misleading headline. Open benchmarks test hundreds or thousands of claims systematically across multiple sources. They’re repeatable, public, and standardized. You can run the same test tomorrow and get the same results. That’s how you build trust.

Is Wikipedia still the most reliable encyclopedia?

In the 2025 FactCheck benchmark, Wikipedia was the fastest encyclopedia and ranked second in transparency, just behind Citizendium - updating content within hours and linking to sources. It scored 92.4% accuracy. Britannica was slightly more accurate (94.1%) but slower and less complete. For everyday use, Wikipedia’s balance of speed, depth, and openness makes it the most reliable overall - if you check its sources.

Can AI-generated summaries be trusted?

No, not yet. In 2025 testing, AI summaries scored only 81.9% accuracy and had the lowest transparency score (29.1%). They often invented sources, omitted critical context, and failed to flag uncertainty. While fast, they’re not reliable for serious research. Always verify AI answers with a trusted encyclopedia.

How can I check an encyclopedia’s score myself?

The FactCheck-2025 dataset is free and open. You can download it from the Knowledge Integrity Project website. The tools to run tests are written in Python and available on GitHub. Even non-programmers can use the web interface to compare any two encyclopedias on a list of 500 sample questions.

Why does completeness matter as much as accuracy?

Accuracy means a fact is correct. Completeness means you’re not leaving out important context. For example, saying “vaccines prevent disease” is accurate. Saying “vaccines prevent disease and have been tested on millions with minimal side effects” is complete. In health and policy, missing context can be dangerous. A complete answer saves lives. An accurate but incomplete one can mislead.