When you read an article on Wikipedia, you are looking at the product of a constant stream of edits. Wikipedia is the world's largest free online encyclopedia, built by volunteers across the globe, and it serves billions of requests every month. The project relies heavily on community trust and automated tools to maintain quality. Most edits are fine, but some are vandalism or spam. How do researchers and administrators sort through this noise? They use machine learning. Specifically, they rely on a system called ORES.

ORES stands for Objective Revision Evaluation Service. It provides machine learning predictions about the quality of edits. This isn't just a theoretical concept; it powers filters that flag suspicious activity in near real time, so editors can review it before it causes harm. The models score a large volume of revisions, which makes the service an important piece of Wikimedia's infrastructure.

Understanding ORES and Its Core Functions

To understand the research potential, you first need to grasp what the service actually does. It is not a judgment engine in the human sense. Instead, it calculates probabilities. Think of it as a risk assessment tool rather than a verdict. When a user saves a page change, ORES scans the modification and assigns scores to various traits.

ORES (Objective Revision Evaluation Service) uses predictive modeling to assess Wikipedia edits. Developed by the Wikimedia Foundation, it helps communities identify bad-faith edits efficiently.

The system tracks several key dimensions. Is this edit likely to be damaging? Does it look like vandalism? Is the contributor new? These aren't random guesses; they are mathematical outputs based on historical data. Researchers use these scores to study patterns in human behavior. For instance, you might analyze how vandalism spikes during breaking news events. Without ORES, manually tagging millions of edits would be impossible for any single human team.

The Role of Machine Learning in Content Moderation

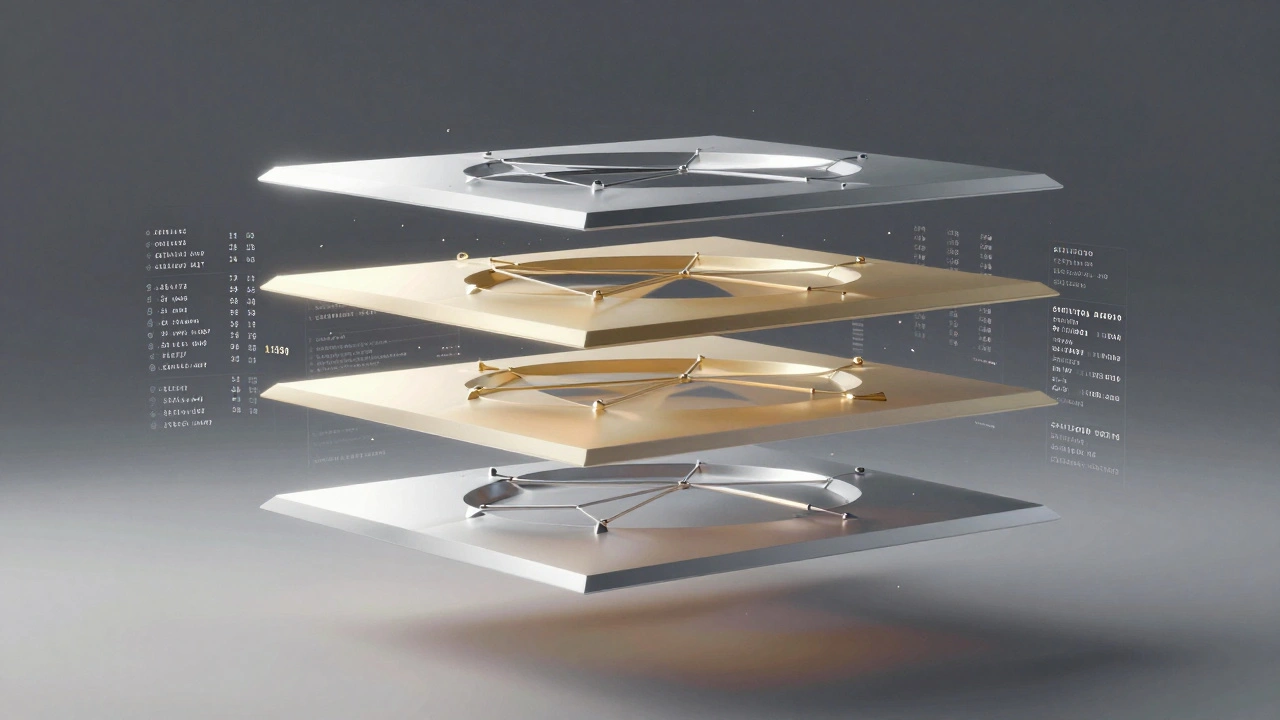

Under the hood, ORES operates using supervised learning algorithms. This means the system learns from past examples where humans have already labeled edits as good or bad. It builds a relationship between input features (such as the number of characters changed) and the output quality score. This approach allows the model to generalize from training data to unseen edits.
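To make the idea of learning from labeled edits concrete, here is a minimal sketch of supervised learning in plain Python. It is not how ORES is implemented: the training rows, the two features, the scaling constants, and the choice of logistic regression are all assumptions made for illustration.

```python
import math

# Hypothetical labeled history: (chars_changed, links_removed) -> 1 if
# human reviewers marked the edit as damaging, 0 otherwise.
TRAINING = [
    ((12, 0), 0),
    ((40, 0), 0),
    ((25, 1), 0),
    ((900, 6), 1),
    ((1500, 9), 1),
    ((700, 4), 1),
]

def scale(features):
    """Bring both features into a similar numeric range (assumed constants)."""
    chars, links = features
    return (chars / 1000.0, links / 10.0)

def predict(weights, bias, features):
    """Return the modeled probability that an edit is damaging."""
    z = bias + sum(w * x for w, x in zip(weights, scale(features)))
    return 1.0 / (1.0 + math.exp(-z))

def train(examples, epochs=2000, learning_rate=0.5):
    """Fit a logistic regression with plain stochastic gradient descent."""
    weights, bias = [0.0, 0.0], 0.0
    for _ in range(epochs):
        for features, label in examples:
            error = predict(weights, bias, features) - label
            xs = scale(features)
            weights = [w - learning_rate * error * x for w, x in zip(weights, xs)]
            bias -= learning_rate * error
    return weights, bias

weights, bias = train(TRAINING)
small_edit = predict(weights, bias, (30, 0))        # unseen, looks benign
large_blanking = predict(weights, bias, (1200, 8))  # unseen, looks destructive
print(f"small edit: {small_edit:.3f}, large blanking: {large_blanking:.3f}")
```

The two final calls score edits that were not in the training set, which is the generalization step described above.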

Why does this matter for your research? Because it turns subjective editing history into quantifiable data. Before ORES, analyzing edit quality required reading summaries or relying on manual revert logs. Now, you can export datasets of prediction scores for statistical analysis. This shift enables large-scale studies on information ecology.

| Model Name | Purpose | Output Metric |
|---|---|---|
| Damaging | Predicts harmful edits | Probability 0-1 |
| Good Faith | Identifies constructive contributions | Probability 0-1 |
| Destab | Flags edits that destabilize pages | Binary Score |

Each model focuses on a specific aspect of edit health. A high "damaging" score usually triggers a warning on the watchlist. A low "good faith" score might suggest an account created for spamming. Researchers combine these signals to create composite views of project health over time.
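As a rough illustration of combining the two signals, the sketch below maps a pair of scores to a triage label. The thresholds and label names are hypothetical; real deployments tune thresholds per wiki, and nothing here reflects actual ORES or MediaWiki configuration.

```python
def triage(damaging, goodfaith, damaging_threshold=0.8, goodfaith_threshold=0.3):
    """Map two probabilities to a coarse review label (thresholds are assumed)."""
    if damaging >= damaging_threshold and goodfaith <= goodfaith_threshold:
        return "likely vandalism"
    if damaging >= damaging_threshold:
        return "damaging but probably well-meant"
    return "no action"

# Hypothetical scores for three edits.
edits = {
    "edit_a": (0.95, 0.10),
    "edit_b": (0.85, 0.90),
    "edit_c": (0.05, 0.98),
}
labels = {name: triage(d, g) for name, (d, g) in edits.items()}
print(labels)
```

Separating "damaging" from "bad faith" matters in practice: a newcomer's well-meant mistake calls for a different response than deliberate vandalism.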

Data Sources and Feature Engineering

You cannot train a robust model without rich data. ORES pulls its inputs from MediaWiki data such as the database dumps. These include revision history, user registration timestamps, and talk page archives. The process of turning raw logs into usable features is called feature engineering.

For example, the model doesn't just see text. It sees structural metadata. How many links were removed? Was the edit reverted quickly? Did the user have a verified email? These features feed into the classifier, whether that is a neural network, a regression model, or another algorithm. Accuracy depends heavily on the relevance of the chosen features.
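A small sketch of what feature engineering can look like for a single revision follows. The feature names, the four-day "new user" cutoff, and the wikilink regular expression are assumptions for illustration, not the feature set ORES actually uses.

```python
import re

# Matches simple [[wikilinks]]; nested or unusual markup is ignored on purpose.
WIKILINK = re.compile(r"\[\[[^\]]+\]\]")

def extract_features(old_text, new_text, account_age_days):
    """Turn one before/after pair of wikitext into a flat feature dictionary."""
    old_links = len(WIKILINK.findall(old_text))
    new_links = len(WIKILINK.findall(new_text))
    return {
        "size_delta": abs(len(new_text) - len(old_text)),
        "links_removed": max(old_links - new_links, 0),
        "is_new_user": account_age_days < 4,  # assumed cutoff
    }

old = "See [[Paris]] and [[France]]."
new = "See nothing."
features = extract_features(old, new, account_age_days=1)
print(features)
```

Each key in the returned dictionary becomes one numeric or boolean column that a classifier can consume.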

In recent updates leading up to 2026, developers have added natural language processing capabilities. This allows the system to understand the sentiment of the edit summary. If a summary reads aggressively hostile, the probability of damage increases. This integration bridges the gap between text analysis and behavioral prediction.

Evaluating Accuracy and Avoiding Bias

No machine learning system is perfect. You need to evaluate performance using precision and recall. Precision tells you how many flagged edits are actually bad. Recall tells you how many bad edits you successfully caught. High precision reduces false alarms for editors, while high recall protects the platform from content abuse.
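The two metrics are easy to compute by hand once you have predictions and human labels side by side. The sketch below uses a made-up set of six edits; the function name and data are illustrative only.

```python
def precision_recall(predicted, actual):
    """Compute precision and recall for boolean 'flagged as bad' predictions."""
    pairs = list(zip(predicted, actual))
    true_pos = sum(1 for p, a in pairs if p and a)
    false_pos = sum(1 for p, a in pairs if p and not a)
    false_neg = sum(1 for p, a in pairs if not p and a)
    precision = true_pos / (true_pos + false_pos) if true_pos + false_pos else 0.0
    recall = true_pos / (true_pos + false_neg) if true_pos + false_neg else 0.0
    return precision, recall

# Hypothetical run: the model flags two edits, four edits are truly bad.
predicted = [True, True, False, False, False, False]
actual = [True, False, True, True, True, False]
precision, recall = precision_recall(predicted, actual)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Here half of the flags are correct (precision 0.5), but only one of the four bad edits is caught (recall 0.25), which is the trade-off between false alarms and missed abuse.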

However, there is a significant challenge: bias. If the training data reflects the views of a specific group of experienced volunteers, the model inherits their blind spots. Research indicates that ORES historically struggled with edits from smaller language wikis. This happens when English-centric data dominates the training set.

Bias is a systematic error in AI systems caused by skewed data representation or flawed assumptions. In this context, it shows up as an algorithmic fairness problem that disproportionately affects contributors to minority-language wikis.

Addressing this requires active intervention. Researchers now work on multilingual models that respect local norms. This work involves regular audits of the confusion matrix, broken down by contributor group. If a model flags 80% of edits from non-native speakers as damaging, something is wrong with the features, not the contributors. Fixing this is essential for maintaining trust in the encyclopedic mission.
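One simple audit is to compare false positive rates across contributor groups. The sketch below assumes you already have human-reviewed labels; the group names and records are invented for illustration.

```python
from collections import defaultdict

def false_positive_rate_by_group(records):
    """records: iterable of (group, flagged, actually_damaging) tuples.

    Returns, per group, the share of non-damaging edits that were flagged.
    """
    good_total = defaultdict(int)
    good_flagged = defaultdict(int)
    for group, flagged, actually_damaging in records:
        if not actually_damaging:
            good_total[group] += 1
            if flagged:
                good_flagged[group] += 1
    return {group: good_flagged[group] / good_total[group] for group in good_total}

# Hypothetical audit sample.
records = [
    ("native", False, False),
    ("native", False, False),
    ("native", True, False),
    ("native", True, True),
    ("non_native", True, False),
    ("non_native", True, False),
    ("non_native", False, False),
    ("non_native", True, True),
]
rates = false_positive_rate_by_group(records)
print(rates)
```

A large gap between groups, as in this toy sample, is a signal to inspect the features rather than the contributors.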

Accessing Tools for Your Own Analysis

You don't need to rebuild the system to benefit from it. ORES exposes an API that allows programmatic access to scores. Developers can query the service using simple HTTP requests. The response returns JSON data containing the predicted class and the class probabilities for specific revisions.
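The sketch below shows one way to build a scoring request and read the probabilities out of the JSON. The endpoint path and response layout follow the v3 scores format as best I recall it, so treat both as assumptions and check the current Wikimedia documentation before relying on them. The revision IDs and values in the sample payload are invented, and no network call is made.

```python
import json

# Assumed base URL; the hosted service may have moved or changed.
ORES_BASE = "https://ores.wikimedia.org/v3/scores"

def build_scores_url(wiki, model, rev_ids):
    """Build a request URL for one model and several revision IDs."""
    joined = "|".join(str(rev_id) for rev_id in rev_ids)
    return f"{ORES_BASE}/{wiki}/?models={model}&revids={joined}"

def extract_probabilities(payload, wiki, model):
    """Return {rev_id: probability of the 'true' class}, skipping failed revisions."""
    probabilities = {}
    for rev_id, models in payload[wiki]["scores"].items():
        score = models.get(model, {}).get("score")
        if score is not None:  # failed revisions carry an "error" entry instead
            probabilities[int(rev_id)] = score["probability"]["true"]
    return probabilities

# Hypothetical response, shaped like the assumed v3 layout.
SAMPLE_RESPONSE = json.loads("""
{"enwiki": {"scores": {
  "1001": {"damaging": {"score": {"prediction": false,
                                   "probability": {"false": 0.97, "true": 0.03}}}},
  "1002": {"damaging": {"error": {"type": "RevisionNotFound", "message": "not found"}}}
}}}
""")

url = build_scores_url("enwiki", "damaging", [1001, 1002])
scores = extract_probabilities(SAMPLE_RESPONSE, "enwiki", "damaging")
print(url)
print(scores)
```

Keeping URL construction and response parsing in separate functions makes it easy to test the parsing logic against saved responses without touching the live service.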

If you are building a dashboard or running a custom analysis, start with the REST API. The documentation shows you exactly what parameters to send, such as the wiki, the model name, and the revision ID. Many researchers write scripts in Python to batch-process large sets of edits. GitHub hosts open-source wrappers that simplify this connection.

- Set up a development environment with Python and the libraries you need.

- Clone the official client repository from the version control system.

- Test queries against the sandbox instance first.

- Monitor rate limits to avoid overloading the server.
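The last step above can be sketched as a small batching helper that pauses between requests. The batch size and pause length are placeholder values, not documented limits, and `fetch` stands in for whatever function you write to call the API.

```python
import time

def chunked(items, size):
    """Yield consecutive slices of at most `size` items."""
    for start in range(0, len(items), size):
        yield items[start:start + size]

def fetch_in_batches(rev_ids, fetch, batch_size=50, pause_seconds=1.0, sleep=time.sleep):
    """Call `fetch(batch)` for each batch, pausing between calls.

    `fetch` must return a dict of {rev_id: score}. `sleep` is injectable so
    the pacing can be tested without actually waiting.
    """
    results = {}
    batches = list(chunked(rev_ids, batch_size))
    for index, batch in enumerate(batches):
        results.update(fetch(batch))
        if index < len(batches) - 1:  # no need to wait after the final batch
            sleep(pause_seconds)
    return results

# Usage with a stand-in fetcher that returns a fixed score for each revision.
def fake_fetch(batch):
    return {rev_id: 0.1 for rev_id in batch}

scores = fetch_in_batches([1, 2, 3, 4, 5], fake_fetch, batch_size=2, pause_seconds=0.0)
print(scores)
```

A fixed pause is the simplest polite strategy; a production script would also honor any retry or rate-limit headers the server returns.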

This approach helps you stay within ethical boundaries while gathering the data you need. Remember, aggressively scraping the live site can violate the terms of use, while the public API and the published dumps are the encouraged routes.

Future Directions for Research

Looking ahead, the next wave of improvements focuses on explainability. Black box models are hard to trust. Researchers want to know exactly why a specific edit was flagged. New techniques allow the system to highlight which features contributed most to the decision. This transparency helps editors accept the warnings rather than ignore them.

Another area is cross-project application. Can these models help other collaborative platforms beyond the main encyclopedia? The underlying logic applies to forums, code repositories, and even social media moderation. Transferring this technology requires adapting the feature sets, but the architectural principles remain solid.

Finally, there is the human-in-the-loop component. The goal isn't to replace people, but to assist them. Automated triage frees up senior editors to focus on complex disputes. Understanding this balance is vital for anyone conducting longitudinal studies on volunteer motivation.

Is ORES available for private wikis?

ORES is primarily deployed on Wikimedia projects. However, self-hosted instances are theoretically possible for private MediaWiki installations using the same open-source backend code, though maintenance requires significant technical skill.

How accurate are the predictions?

Accuracy varies by model and wiki. Generally, the damaging model achieves around 80% accuracy on English Wikipedia. Smaller wikis typically see lower performance due to less training data available for those languages.

Can I download the full dataset?

Full historical revision datasets are available via dump files provided by the Wikimedia Foundation. You do not need to use the ORES API for bulk downloads; direct SQL dumps are preferred for massive historical analysis.

What programming language is best for API interaction?

Python is the most common choice because of its extensive data analysis libraries. Other languages such as PHP can call the HTTP API just as well, but Python offers the easiest path for statistical research and visualization.

Does ORES automatically delete content?

No, ORES does not take action on its own. It only provides predictions. Actual deletions or blocks are handled by human administrators or separate automated bots configured by the community.