When a major event hits - a natural disaster, a political assassination, or a global health emergency - Wikipedia doesn’t shut down. It explodes. Thousands of edits pour in within minutes. Some are accurate, well-sourced, and calm. Others? Pure chaos. Vandalism spikes. False claims spread like wildfire. And the people who keep Wikipedia alive - volunteer editors - have to react faster than any newsroom.

In January 2025, after a sudden military strike in Eastern Europe, the English Wikipedia page for that country’s president received over 1,200 edits in 90 minutes. Half of them were outright lies: fake death reports, doctored photos, fabricated quotes. Within 20 minutes, a small team of volunteer admins locked the page. They didn’t delete edits. They didn’t argue. They just froze it. And then they waited - for verified sources to arrive.

How Wikipedia Gets Targeted During Crises

Wikipedia consistently ranks among the most visited websites on Earth. That makes it a magnet for misinformation during breaking news. Bad actors don’t need to hack servers. They just need to edit. And they know exactly when to strike: when the world is watching, when facts are scarce, and when official sources are silent.

Common tactics include:

- Attaching false claims to real names - like changing “President X” to “President X assassinated”

- Inserting false statistics - “10 million dead” when the real number is 12,000

- Adding biased language - “tyrant,” “hero,” “fraud” - without neutral sourcing

- Repeating the same false edit dozens of times to overwhelm filters
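
The last tactic, flooding a page with the same false edit, is the easiest one to spot mechanically. The following is a minimal sketch, not Wikipedia's actual filter logic: the function name `repeated_edits` and the threshold of three are assumptions chosen for illustration. It normalizes case and whitespace so trivially varied copies of the same text are counted together.

```python
from collections import Counter


def repeated_edits(edit_texts, threshold=3):
    """Return normalized edit texts that appear at least `threshold` times.

    Hypothetical helper: the threshold and the normalization rule are
    illustrative assumptions, not a documented Wikipedia setting.
    """
    # Lowercase and collapse whitespace so near-identical copies match.
    counts = Counter(" ".join(text.lower().split()) for text in edit_texts)
    return {text: n for text, n in counts.items() if n >= threshold}


edits = [
    "President X  assassinated",
    "president x assassinated",
    "President X assassinated",
    "Fixed a typo",
]
flagged = repeated_edits(edits)
```

Here `flagged` contains only the repeated claim, which a human reviewer could then inspect before deciding whether the page needs protection.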

These aren’t random kids. Many are coordinated campaigns. Some come from state-backed troll farms. Others are from extremist groups trying to shape public memory before facts settle. In 2023, researchers at Stanford tracked 37 separate vandalism waves tied to geopolitical events - all targeting Wikipedia’s most-viewed pages.

What Happens When a Page Is Locked

Wikipedia doesn’t put a login wall in front of its articles: most pages can be edited without an account. But it does have a safeguard for emergencies: protection levels. These aren’t just technical settings. They’re emergency protocols.

When a page is at risk, admins can apply one of three protection tiers:

- semi-protected - only autoconfirmed accounts (on English Wikipedia, at least 4 days old with 10+ edits) can edit. This blocks anonymous vandals and throwaway accounts.

- extended confirmed - only accounts at least 30 days old with 500+ edits can change the page.

- full protected - only administrators can edit. This is the nuclear option.
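
The three tiers can be read as a simple permission check. The sketch below is an illustration only: the `Editor` record and the `can_edit` function are hypothetical names, and the semi-protection threshold assumes English Wikipedia's autoconfirmed status (roughly 4 days and 10 edits), which is stricter than registration alone.

```python
from dataclasses import dataclass
from enum import Enum


class Protection(Enum):
    NONE = "none"
    SEMI = "semi-protected"
    EXTENDED = "extended confirmed"
    FULL = "full protected"


@dataclass
class Editor:
    # Hypothetical record; real accounts carry far more state.
    account_age_days: int
    edit_count: int
    is_admin: bool = False


def can_edit(editor: Editor, level: Protection) -> bool:
    """Return True if the editor may change a page at this protection level."""
    if editor.is_admin or level is Protection.NONE:
        return True
    if level is Protection.SEMI:
        # Assumed autoconfirmed threshold: 4 days and 10 edits.
        return editor.account_age_days >= 4 and editor.edit_count >= 10
    if level is Protection.EXTENDED:
        # Thresholds from the tier list: 30 days and 500 edits.
        return editor.account_age_days >= 30 and editor.edit_count >= 500
    return False  # FULL: administrators only


newcomer = Editor(account_age_days=1, edit_count=2)
veteran = Editor(account_age_days=400, edit_count=2000)
admin = Editor(account_age_days=400, edit_count=2000, is_admin=True)
```

Each tier strictly narrows the set of people who can edit, which is why full protection is reserved for the worst cases.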

During the 2025 crisis in Ukraine, the page for “Kyiv” was fully protected for 72 hours. No one but administrators could change it - not even longtime editors. Why? Because automated bots had flagged over 200 edits containing false casualty numbers. The team didn’t accept any edit until it was backed by a trusted source: official government statements, verified news outlets like Reuters or AP, or hospital bulletins.

Protection isn’t censorship. It’s a timeout. A way to let the truth catch up.

The Human Network Behind the Scenes

Wikipedia’s protection system doesn’t run on AI. It runs on people. Thousands of them. And they’re not paid. They’re volunteers - teachers, engineers, retirees, students - who show up when the world is falling apart.

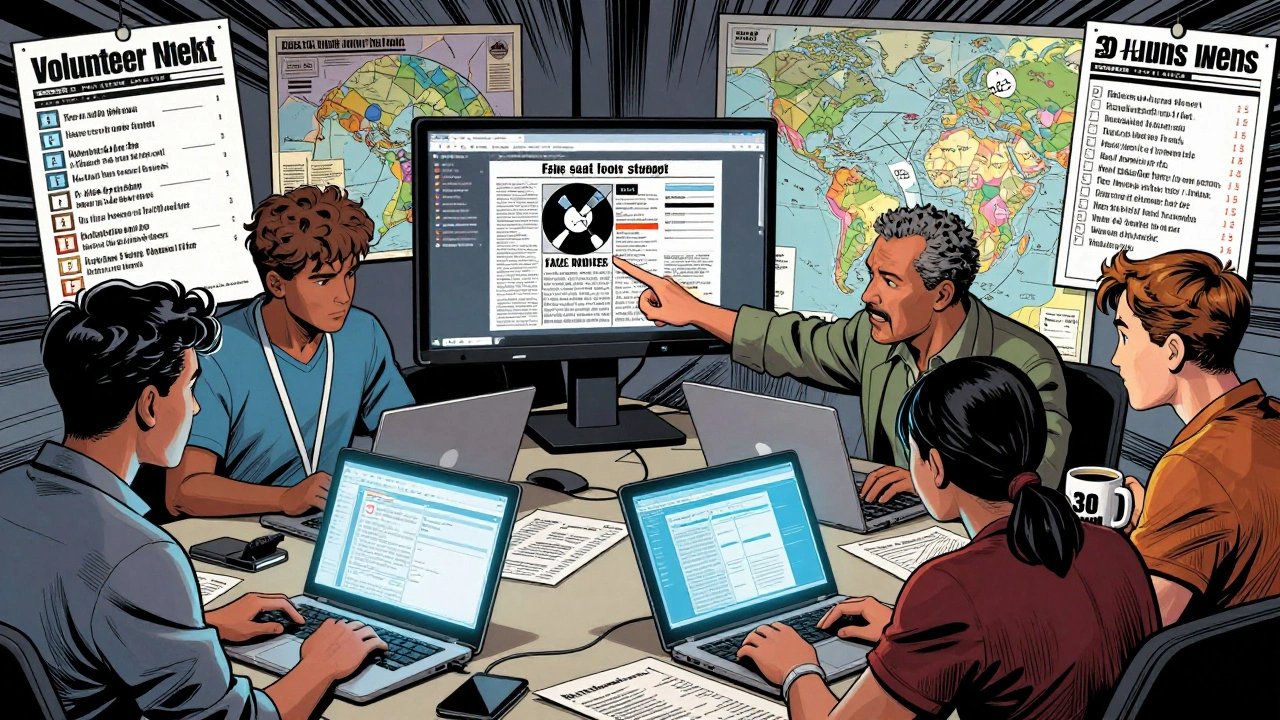

During the 2024 Israel-Hamas conflict, a group of 14 editors from six countries formed an emergency coordination channel on Discord. They didn’t talk about politics. They talked about sources. “Did you see the UN’s updated displacement numbers?” “Is Al Jazeera still citing that hospital?” “Can we use the IDF’s official tweet as a source?”

They used a simple checklist:

- Is it from an official government or international body?

- Is it reported by at least two independent reputable news outlets?

- Can we verify the date and time stamp?

- Is it consistent with previous reliable edits?
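
The checklist translates naturally into a small data structure. This is a sketch under stated assumptions: the `Claim` fields are hypothetical, and the rule for combining the four answers (an official source or two independent outlets, plus a verified timestamp and consistency) is one plausible reading, since the editors' exact decision rule is not described.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    # Hypothetical fields mirroring the four checklist questions.
    from_official_body: bool
    independent_outlets: int
    timestamp_verified: bool
    consistent_with_prior: bool


def checklist(claim: Claim) -> dict:
    """Answer each checklist question for a claim."""
    return {
        "official source": claim.from_official_body,
        "two independent outlets": claim.independent_outlets >= 2,
        "timestamp verified": claim.timestamp_verified,
        "consistent with prior edits": claim.consistent_with_prior,
    }


def acceptable(claim: Claim) -> bool:
    # ASSUMPTION: provenance needs either an official source or two
    # independent outlets; the other two checks must both pass.
    result = checklist(claim)
    provenance = result["official source"] or result["two independent outlets"]
    return (
        provenance
        and result["timestamp verified"]
        and result["consistent with prior edits"]
    )


un_report = Claim(True, 0, True, True)
single_outlet_rumor = Claim(False, 1, True, True)
```

Keeping the individual answers in `checklist` separate from the final verdict lets reviewers see which question a claim failed, not just that it failed.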

One editor, based in Toronto, spent 14 hours straight cross-checking casualty reports from Gaza hospitals. She didn’t sleep. She didn’t eat. She just kept typing. “If this page gets it wrong,” she said, “people will die because of it.”

How Bots Help - and When They Fail

Wikipedia runs over 500 automated bots. Most are quiet. They fix spelling, remove spam, or revert obvious vandalism. But during a crisis, bots can become a liability.

Take the “revert bot” that undoes edits matching known vandalism patterns. It works great on “f*** you” edits. But when someone changes “President Biden” to “President Biden (elected 2020)” - a technically true statement - the bot doesn’t know it’s a subtle manipulation. It leaves it. Meanwhile, another edit says “President Biden (died 2025)” - and the bot reverts it. The result? The false edit gets removed. The true-but-misleading edit stays.
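
The blind spot is easy to reproduce with a toy pattern matcher. The sketch below is a deliberately simplified illustration, not the code of any real Wikipedia bot: the patterns and the `should_revert` function are hypothetical, and production anti-vandalism tools are considerably more sophisticated.

```python
import re

# Hypothetical patterns for illustration only.
VANDALISM_PATTERNS = [
    re.compile(r"\(died \d{4}\)", re.IGNORECASE),    # unsourced death claims
    re.compile(r"\b(idiot|loser)\b", re.IGNORECASE),  # crude insults
]


def should_revert(new_text: str) -> bool:
    """Return True if the edited text matches any known vandalism pattern."""
    return any(pattern.search(new_text) for pattern in VANDALISM_PATTERNS)


false_edit = "President Biden (died 2025)"
misleading_edit = "President Biden (elected 2020)"

reverted = should_revert(false_edit)
missed = not should_revert(misleading_edit)
```

The matcher catches the false death claim but has no way to judge whether a technically true addition is being used to mislead, which is the gap human reviewers fill.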

That’s why human oversight is critical. In 2025, Wikipedia’s Crisis Response Team introduced a new rule: Any edit that alters a person’s status (alive/dead, in office/out of office) must be reviewed by two humans before being accepted. This stopped dozens of false death reports during the 2025 earthquake in Turkey.
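
A two-reviewer rule like this can be modeled as a small approval gate. The following is a sketch only: the field names in `STATUS_FIELDS`, the `PendingEdit` class, and the choice to let non-status edits through without review are all assumptions made for illustration.

```python
from dataclasses import dataclass, field
from typing import Set

# Hypothetical names for fields that describe a person's status.
STATUS_FIELDS = {"alive", "in_office"}


@dataclass
class PendingEdit:
    changed_fields: Set[str]
    approvers: Set[str] = field(default_factory=set)

    def approve(self, reviewer: str) -> None:
        # A set ensures the same reviewer cannot count twice.
        self.approvers.add(reviewer)

    def is_accepted(self) -> bool:
        # ASSUMPTION: only status-changing edits need two reviewers;
        # other edits are accepted without review in this sketch.
        required = 2 if self.changed_fields & STATUS_FIELDS else 0
        return len(self.approvers) >= required


death_report = PendingEdit(changed_fields={"alive"})
death_report.approve("reviewer_a")
death_report.approve("reviewer_a")  # duplicate approval, still one reviewer
accepted_after_one = death_report.is_accepted()
death_report.approve("reviewer_b")
accepted_after_two = death_report.is_accepted()
```

Storing approvers in a set means a single persistent account cannot satisfy the rule alone.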

Bots are tools. Humans are the judges.

What You Can Do - Even If You’re Not an Editor

You don’t need to be an admin to help protect Wikipedia. Here’s how ordinary users can make a difference:

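- Report suspicious edits - Click “View history,” find the bad edit, and undo it if you can. For persistent vandals, file a report at the “Administrator intervention against vandalism” noticeboard, which volunteer admins monitor.

- Use reliable sources - If you’re editing, don’t rely on social media. Use Reuters, AP, BBC, or official government sites. Wikipedia’s sourcing guidelines treat self-published social media posts as generally unreliable, with narrow exceptions such as an official account’s statements about itself.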

- Check the talk page - Every Wikipedia article has a “Talk” tab. If you see a heated debate about a claim, read it. Often, editors are already working to fix it.

- Don’t edit during panic - If you’re reading a breaking news page and feel emotional, wait. Wait an hour. Wait until three reputable outlets confirm the fact. Then edit - or don’t. Sometimes, silence is the safest choice.

One study from the University of Wisconsin found that during crisis events, 73% of vandalism was caught within 12 minutes - but only if someone reported it. If no one reported it? The false edit stayed for over 48 hours.

The Bigger Picture: Why This Matters

Wikipedia isn’t just a website. It’s the world’s collective memory. When a child in Nairobi looks up “COVID-19 symptoms,” they’re not reading a blog. They’re reading a page that’s been vetted by hundreds of strangers across the globe.

During the 2024 dengue surge in Southeast Asia, the “dengue fever treatment” page was vandalized with a home remedy that caused hospitalizations. Within 3 hours, it was locked. Within 6 hours, a doctor in Manila added a citation to a peer-reviewed study. By day two, the page was accurate again.

That’s the power of a community that refuses to let lies stick. It’s messy. It’s slow. It’s imperfect. But it works - because people care enough to show up.

Next time you see a Wikipedia page that looks wrong during a crisis - don’t just scroll past. Report it. Check the talk page. Wait for the truth. The world is watching. And so is Wikipedia.

Can anyone edit a protected Wikipedia page?

Not everyone. Once a page is protected, only users with specific permissions can edit it. Semi-protected pages allow autoconfirmed accounts (at least 4 days old with 10+ edits on English Wikipedia). Extended confirmed pages require accounts at least 30 days old with 500+ edits. Fully protected pages can only be edited by administrators. These protections are temporary and lifted once the crisis passes and sources stabilize.
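
A page's current protection can also be checked programmatically through the MediaWiki Action API, using `action=query` with `prop=info` and `inprop=protection`. The sketch below builds the request URL and parses a response without touching the network. The helper names are hypothetical, and `SAMPLE_RESPONSE` is hand-written for illustration in the `formatversion=2` shape, not captured from a live request.

```python
from urllib.parse import urlencode

API = "https://en.wikipedia.org/w/api.php"


def protection_query_url(title: str) -> str:
    """Build the query URL for a page's protection info."""
    params = {
        "action": "query",
        "prop": "info",
        "inprop": "protection",
        "titles": title,
        "format": "json",
        "formatversion": "2",
    }
    return f"{API}?{urlencode(params)}"


def edit_protection_level(response: dict):
    """Return the edit protection level, or None if edits are unrestricted."""
    page = response["query"]["pages"][0]
    for entry in page.get("protection", []):
        if entry["type"] == "edit":
            return entry["level"]
    return None


# Illustrative, hand-written response; not real data.
SAMPLE_RESPONSE = {
    "query": {
        "pages": [
            {
                "title": "Example",
                "protection": [
                    {"type": "edit", "level": "autoconfirmed", "expiry": "infinity"},
                    {"type": "move", "level": "sysop", "expiry": "infinity"},
                ],
            }
        ]
    }
}

level = edit_protection_level(SAMPLE_RESPONSE)
url = protection_query_url("Kyiv")
```

In API responses the levels appear as `autoconfirmed` (semi-protected), `extendedconfirmed`, and `sysop` (fully protected).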

How long does Wikipedia stay protected during a crisis?

There’s no fixed time. Most pages are protected for 24 to 72 hours. Some, like during major political events, stay locked for up to a week. The goal isn’t to silence edits - it’s to wait until reliable sources emerge. Once verified information becomes consistent across multiple outlets, protection is removed.

What happens to vandalism after a page is unprotected?

All edits made during protection are still visible in the page history. If any vandalism slipped through, it’s flagged and reverted immediately after protection ends. Bots and editors scan the edit history for anomalies. If a false claim was added and later corrected, the correction stays. The original vandalism doesn’t disappear - it’s preserved as evidence, so future editors can learn from it.

Can I trust Wikipedia during a breaking news event?

Not always - but you can learn how to check. During fast-moving events, Wikipedia may be temporarily inaccurate. The best practice: cross-check with at least two trusted news sources before believing or sharing any claim. Wikipedia’s talk pages often note uncertainty with phrases like “This section is under review” or “Sources pending verification.” Look for those warnings.

Why doesn’t Wikipedia just lock all pages during crises?

Because most pages don’t need it. Only high-traffic, high-risk pages - like those about current leaders, disasters, or major events - get protected. Locking every page would stop legitimate updates. For example, if a new scientific discovery happens, editors need to add it. Wikipedia’s system is designed to protect only what’s under attack, not to freeze the entire encyclopedia.