Quick Takeaways

- Quantitative methods focus on scale, patterns, and statistical significance using tools like database dumps and the Wikimedia API.

- Qualitative methods dive into the 'why' by analyzing talk pages, edit conflicts, and editor motivations.

- The most powerful research often uses a mixed-methods approach to validate big-data trends with human stories.

- Key challenges include dealing with 'edit wars' and the skewed demographics of the contributor base.

The Numbers Game: Quantitative Approaches

When you want to know how many people are editing a page or how often a specific bias appears across 10,000 articles, you go quantitative. This approach treats Wikipedia as a giant dataset. Researchers often start by accessing the Wikipedia Database Dumps, which are massive files containing every single revision of every page. You aren't reading articles here; you are analyzing strings of text and timestamps.
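To make the "strings of text and timestamps" idea concrete, here is a minimal sketch of streaming revisions out of a dump-style XML file using only Python's standard library. The `SAMPLE_DUMP` string is a tiny hand-written stand-in that assumes the general page/revision layout of the history dumps, and `iter_revisions` is a hypothetical helper name, not part of any Wikimedia tooling.

```python
import io
import xml.etree.ElementTree as ET

# Tiny hand-written stand-in for a full-history dump (assumed layout).
SAMPLE_DUMP = """<mediawiki>
  <page>
    <title>Example</title>
    <revision>
      <timestamp>2020-01-01T00:00:00Z</timestamp>
      <contributor><username>Alice</username></contributor>
      <text>First draft.</text>
    </revision>
    <revision>
      <timestamp>2020-01-02T12:30:00Z</timestamp>
      <contributor><ip>192.0.2.1</ip></contributor>
      <text>First draft, expanded.</text>
    </revision>
  </page>
</mediawiki>"""


def local(tag):
    """Strip an XML namespace prefix such as '{http://...}revision'."""
    return tag.rsplit("}", 1)[-1]


def iter_revisions(stream):
    """Yield (title, timestamp, editor, text_length) one revision at a time."""
    title = None
    for _event, elem in ET.iterparse(stream, events=("end",)):
        name = local(elem.tag)
        if name == "title":
            title = elem.text
        elif name == "revision":
            fields = {local(child.tag): child for child in elem}
            editor = None
            contributor = fields.get("contributor")
            if contributor is not None:
                for child in contributor:
                    if local(child.tag) in ("username", "ip"):
                        editor = child.text
            text_elem = fields.get("text")
            text = text_elem.text if text_elem is not None and text_elem.text else ""
            yield title, fields["timestamp"].text, editor, len(text)
            elem.clear()  # release the revision text before reading the next one


for row in iter_revisions(io.BytesIO(SAMPLE_DUMP.encode("utf-8"))):
    print(row)
```

Streaming with `iterparse` matters because real history dumps are far too large to load into memory at once; the same loop could read from a decompressed file handle instead of the in-memory sample.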

One of the most common tools for this is Python, specifically libraries like Pandas for data manipulation. A researcher might want to track the "gender gap" on the site. By collecting the user profiles of top contributors and matching disclosed names against name-gender dictionaries, they can estimate statistically that a vast majority of editors are men. This isn't a guess; it is an estimate built on a large sample, although it is only as reliable as the name matching behind it, and many editors use pseudonyms that cannot be matched at all. This is the power of Wikipedia research methodologies: they can turn a vague suspicion into a hard number.
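The name-matching step described above can be sketched in a few lines. Everything here is illustrative: `NAME_GENDER` is a made-up three-entry lookup, `editors` is invented sample data, and `estimate_gender_share` is a hypothetical helper. The sketch uses only the standard library; with Pandas the same tally would typically be a `value_counts` call on a column.

```python
from collections import Counter

# Hypothetical lookup table; real studies rely on far larger dictionaries.
NAME_GENDER = {"maria": "female", "john": "male", "david": "male"}

# Invented sample of names taken from editor profiles.
editors = ["John", "Maria", "David", "Xk_42", "john", "NightOwl"]


def estimate_gender_share(names, lookup):
    """Return the share of names per inferred label, keeping an 'unknown' bucket."""
    counts = Counter(lookup.get(name.lower(), "unknown") for name in names)
    total = sum(counts.values())
    return {label: count / total for label, count in counts.items()}


shares = estimate_gender_share(editors, NAME_GENDER)
coverage = 1 - shares.get("unknown", 0.0)  # fraction of editors the lookup could label

print(shares)
print(f"coverage: {coverage:.2f}")
```

Reporting `coverage` alongside the shares is a deliberate choice: pseudonymous usernames fall into the "unknown" bucket, so the estimate only describes the editors the dictionary could match.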

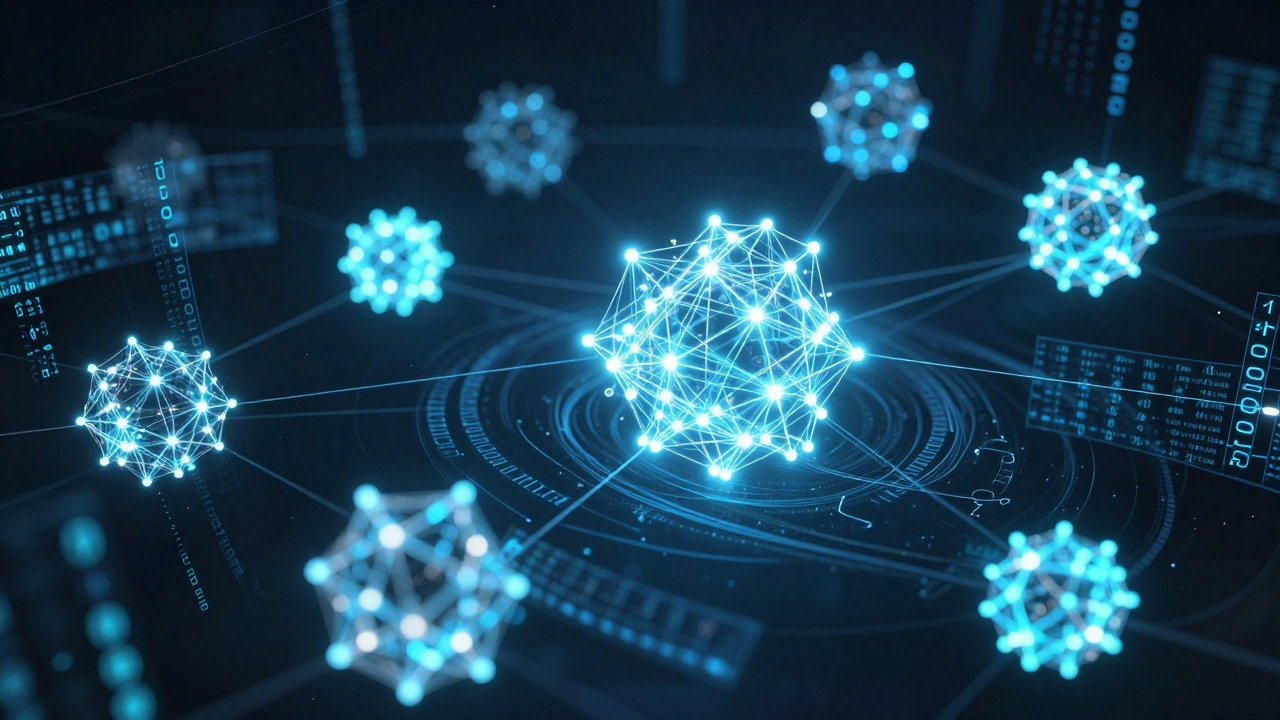

But counting isn't enough. Many scholars use Network Analysis to see how articles link to one another. If you visualize this, you see "clusters" of knowledge. For example, you might find that articles about quantum physics are tightly knit, while articles about 18th-century pottery are isolated. This tells us how the community organizes information and which topics are viewed as "central" to human knowledge.
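As a rough illustration of the clustering idea, the sketch below groups articles into connected components of a link graph. The `LINKS` list is invented toy data, and the helper names are hypothetical; a real study would more likely load the link tables into a dedicated tool such as Gephi or a graph library.

```python
from collections import defaultdict, deque

# Invented article-to-article links (source, target) for illustration only.
LINKS = [
    ("Quantum mechanics", "Wave function"),
    ("Wave function", "Uncertainty principle"),
    ("Uncertainty principle", "Quantum mechanics"),
    ("Creamware", "Josiah Wedgwood"),
]


def build_undirected(links):
    """Treat every link as a two-way connection between articles."""
    graph = defaultdict(set)
    for src, dst in links:
        graph[src].add(dst)
        graph[dst].add(src)
    return graph


def connected_components(graph):
    """Group articles that can reach each other, largest cluster first."""
    seen, components = set(), []
    for start in graph:
        if start in seen:
            continue
        queue, component = deque([start]), set()
        seen.add(start)
        while queue:  # breadth-first walk from the starting article
            node = queue.popleft()
            component.add(node)
            for neighbour in graph[node]:
                if neighbour not in seen:
                    seen.add(neighbour)
                    queue.append(neighbour)
        components.append(component)
    return sorted(components, key=len, reverse=True)


clusters = connected_components(build_undirected(LINKS))
for cluster in clusters:
    print(sorted(cluster))
```

Connected components are the bluntest possible notion of a "cluster"; on the real link graph, where almost everything is reachable from almost everything else, you would need a community-detection method instead.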

| Attribute | Quantitative Approach | Qualitative Approach |
|---|---|---|
| Primary Goal | Identifying patterns and scale | Understanding meaning and motive |
| Data Source | SQL Dumps, API logs, Metadata | Talk pages, User logs, Interviews |
| Sample Size | Thousands to millions of pages | Small set of case studies |
| Typical Tool | Python, R, Gephi | NVivo, Manual Coding, Ethnography |

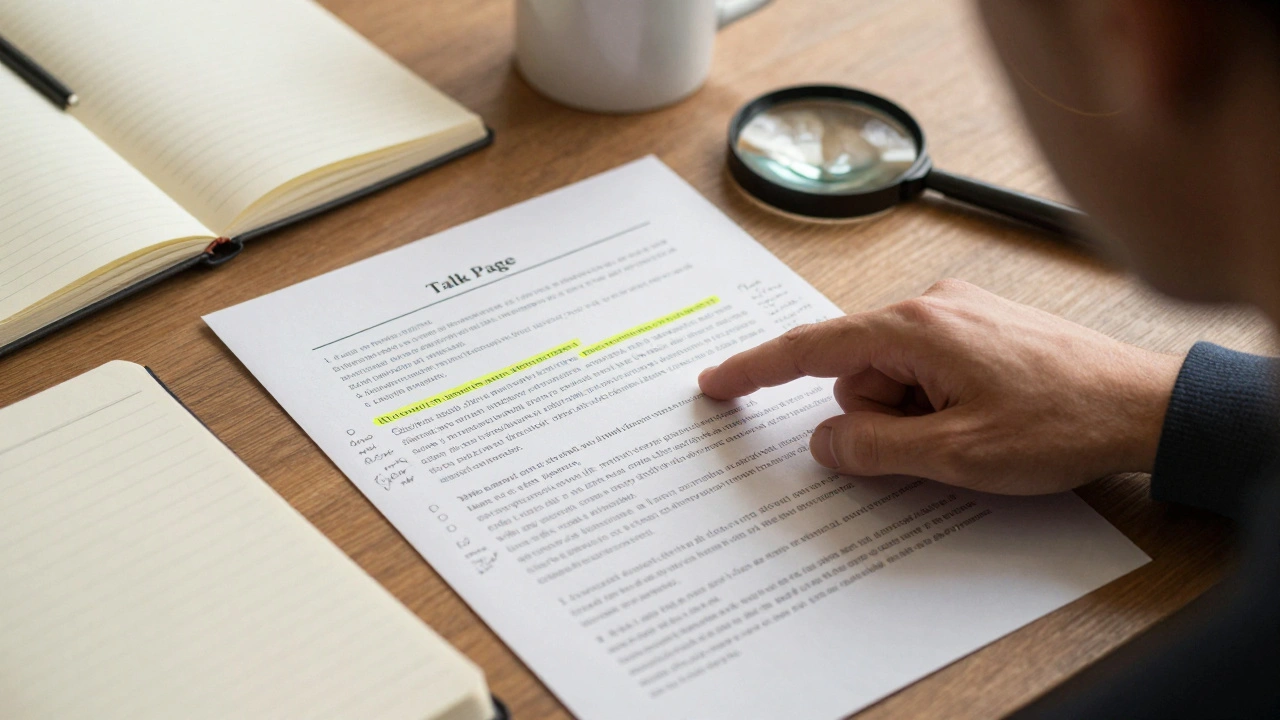

Getting Personal: Qualitative Approaches

Numbers tell you that a page was edited 500 times in an hour, but they don't tell you that the editors were screaming at each other in the comments. This is where qualitative research comes in. Instead of looking at the final article, qualitative researchers spend their time on Talk Pages, which are the behind-the-scenes forums where editors debate what should actually stay in an article.

If you want to understand how a controversial political event is framed, you perform a Content Analysis. You read the arguments, look at the sources being cited, and observe the power dynamics. Who is the "administrator" shutting down the conversation? Who is the new user trying to introduce a marginalized perspective? This is essentially digital ethnography. You are observing a culture in its natural habitat.

A great example of this is studying "Edit Wars." A quantitative study can tell you that a page about a specific politician has a high "revert rate" (meaning changes are quickly undone). But a qualitative study reveals the ideological battle. You might find that two different groups are fighting over a single adjective, such as whether a leader was "decisive" or "authoritarian." This tells us more about human psychology and political conflict than any spreadsheet ever could.
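For the quantitative half of that example, one common way to approximate a revert rate is to look for "identity reverts": revisions whose text is byte-for-byte identical to an earlier version of the page. The sketch below assumes that definition; `revert_rate` is a hypothetical helper and `history` is an invented five-revision page history.

```python
import hashlib


def revert_rate(revision_texts):
    """Share of revisions that restore an exact earlier version of the page."""
    seen = set()
    reverts = 0
    previous = None
    for text in revision_texts:
        digest = hashlib.sha1(text.encode("utf-8")).hexdigest()
        # A revert restores an old state; a repeat of the immediately
        # preceding text is a null edit, not a revert.
        if digest in seen and digest != previous:
            reverts += 1
        seen.add(digest)
        previous = digest
    return reverts / len(revision_texts) if revision_texts else 0.0


# Invented history of a single sentence, oldest revision first.
history = [
    "He was a decisive leader.",
    "He was an authoritarian leader.",
    "He was a decisive leader.",
    "He was an authoritarian leader.",
    "He was a decisive leader who served two terms.",
]

print(revert_rate(history))
```

Hashing each revision keeps the comparison cheap even for long articles. Note that this only catches exact restorations, so partial reverts and rewordings slip through, which is one reason the number still needs a qualitative reading.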

The Collision of Methods: Mixed-Methods Research

The real magic happens when you combine both. If you only use quantitative data, you risk oversimplifying. If you only use qualitative data, you risk relying on "anecdotal evidence": you found one crazy fight on a talk page and assumed the whole website is a war zone. Mixed-methods research uses the quantitative data to find the "where" and the qualitative data to find the "why."

Imagine a researcher notices a spike in edits for articles related to climate change every September. That is a quantitative discovery. To explain it, they dive into the talk pages and find that students worldwide are starting university and updating their assignments. Now the data has a story. This approach satisfies both the need for statistical rigor and the need for human context.
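The quantitative half of that scenario might look something like the sketch below: count edits per calendar month and flag months that sit well above the average. The `timestamps` list is invented data, the helper names are hypothetical, and the 1.5 standard deviation threshold is an arbitrary assumption rather than an established cutoff.

```python
from collections import Counter
from statistics import mean, pstdev


def monthly_counts(timestamps):
    """Count edits per calendar month from ISO 8601 timestamps."""
    return Counter(ts[:7] for ts in timestamps)  # first 7 chars are "YYYY-MM"


def flag_spikes(counts, threshold=1.5):
    """Return months whose edit count is more than `threshold` std devs above the mean."""
    values = list(counts.values())
    if not values:
        return []
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return []  # perfectly flat activity, nothing to flag
    return sorted(m for m, n in counts.items() if (n - mu) / sigma > threshold)


# Invented edit timestamps: three edits in most months, nine in September.
timestamps = [
    f"2023-{month:02d}-{day:02d}T12:00:00Z"
    for month, n_edits in [(6, 3), (7, 3), (8, 3), (9, 9), (10, 3), (11, 3)]
    for day in range(1, n_edits + 1)
]

print(flag_spikes(monthly_counts(timestamps)))
```

The flagged months are where you would then go and read the talk pages and edit summaries; the script only tells you where to look.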

This synergy is particularly useful when studying systemic bias. You might use a script to find that biographies of people from certain demographics are consistently deleted (quantitative), and then interview the editors who performed the deletions to understand their definition of "notability" (qualitative). This exposes the hidden rules that govern what the world considers "important" knowledge.

Common Pitfalls and Ethical Hurdles

Studying Wikipedia isn't as simple as it looks. One of the biggest traps is survivorship bias. You are studying the people who stayed and edited, not the thousands who tried to contribute once, got yelled at by a veteran editor, and quit. If you only analyze existing edits, you are missing the ghost of everyone who failed.

Then there is the issue of privacy. Even though Wikipedia is public, the people editing it are often doing so under pseudonyms. Does that make them public figures? If you are tracking a user's behavior across five years, are you violating their expectation of anonymity? Most institutional review boards (IRBs) treat this as a gray area, but the gold standard is to anonymize user IDs in published papers to protect the individuals from real-world harassment.
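One way to put that into practice is to replace usernames with keyed hashes before the data ever reaches your analysis files. The sketch below uses HMAC-SHA256 from the standard library; `pseudonymize`, the `editor_` prefix, the 12-character truncation, and the example key are all illustrative assumptions, not an IRB-endorsed scheme.

```python
import hashlib
import hmac


def pseudonymize(username, secret_key):
    """Map a username to a stable label that cannot be reversed without the key."""
    digest = hmac.new(secret_key, username.encode("utf-8"), hashlib.sha256).hexdigest()
    return "editor_" + digest[:12]


# Placeholder key for illustration; a real project would generate a random key
# and store it separately from the research data.
SECRET_KEY = b"replace-with-a-random-project-key"

print(pseudonymize("ExampleUser", SECRET_KEY))
```

A keyed hash is used instead of a plain hash so that nobody can rebuild the mapping simply by hashing a list of known usernames. Keep in mind that verbatim quotes can still be traced back through a site search, so replacing the ID does not by itself guarantee anonymity.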

Finally, beware of the "Wikipedia as Truth" fallacy. Some researchers use Wikipedia as a baseline for what is "true" to compare against other sources. But Wikipedia is a reflection of the people who edit it, not a divine oracle. It is a mirror of the biases, languages, and interests of its community, not a perfect representation of objective reality.

Choosing Your Path: A Decision Guide

So, which one should you use? It depends on the question you are asking. If your question starts with "How many," "How often," or "What is the correlation," you need a quantitative toolkit. You will need to get comfortable with APIs and large-scale data processing. You will be looking for trends that hold up across thousands of samples.

If your question starts with "How," "Why," or "In what way," you need qualitative tools. You will be reading, coding themes, and perhaps even conducting interviews via the Wikipedia community portals. You will be looking for deep insights into a small number of cases.

If you have the time and resources, do both. Start wide with the numbers to narrow your focus, then go deep with the text to find the meaning. This is how you build a study that is both scientifically sound and humanly relevant.

Can I study Wikipedia without knowing how to code?

Yes, but you will be limited to qualitative research. You can analyze talk pages, read article histories manually, and conduct case studies. However, for any large-scale quantitative analysis, you'll eventually need tools like Python or R to handle the massive amount of data in the database dumps.

What is a "Database Dump" exactly?

A database dump is a snapshot of Wikipedia's entire content and metadata at a specific point in time. The Wikimedia Foundation provides these for free. They allow researchers to run complex queries locally on their own machines without slowing down the live website.

How do you handle bias in qualitative Wikipedia research?

The best way is through "triangulation." This means comparing your findings from talk pages with other data sources, such as the user's edit history or interviews with the editors. Using a standardized coding frame, where multiple researchers agree on how to categorize a comment, also helps reduce individual bias.
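Agreement between coders is usually reported as a number. One widely used statistic for two coders is Cohen's kappa, which corrects raw agreement for the agreement you would expect by chance: kappa = (p_o - p_e) / (1 - p_e). The sketch below is a minimal implementation under that definition; the category labels and the two coders' lists are invented.

```python
from collections import Counter


def cohens_kappa(labels_a, labels_b):
    """Cohen's kappa for two coders who labeled the same items in the same order."""
    if len(labels_a) != len(labels_b) or not labels_a:
        raise ValueError("both coders must label the same non-empty set of items")
    n = len(labels_a)
    # Observed agreement: fraction of items given the same label by both coders.
    observed = sum(a == b for a, b in zip(labels_a, labels_b)) / n
    # Chance agreement: product of each coder's label frequencies, summed over labels.
    freq_a, freq_b = Counter(labels_a), Counter(labels_b)
    expected = sum(freq_a[label] * freq_b[label] for label in freq_a) / (n * n)
    if expected == 1:
        return 1.0  # both coders used a single identical label throughout
    return (observed - expected) / (1 - expected)


# Invented codes assigned to six talk-page comments by two researchers.
coder_a = ["policy", "policy", "personal", "source", "source", "personal"]
coder_b = ["policy", "source", "personal", "source", "source", "personal"]

print(cohens_kappa(coder_a, coder_b))
```

If the value comes out low, the usual remedy is to refine the category definitions and recode, not to average the two coders' labels.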

Is it ethical to quote Wikipedia editors in academic papers?

Generally, yes, because it is a public forum. However, it is best practice to redact usernames or use pseudonyms to avoid "doxing" or attracting unwanted attention to the editor, especially when dealing with sensitive political or social topics.

What is the most common mistake in quantitative Wikipedia studies?

Treating the "current version" of a page as the only data point. Wikipedia is a process, not a product. If you only look at the final article, you miss the thousands of changes and debates that shaped it. You must analyze the revision history to get a true picture of how knowledge is constructed.

Next Steps for Researchers

If you are just starting, don't try to boil the ocean. Pick a specific niche, perhaps a single language edition of Wikipedia or a small cluster of related articles. If you are leaning toward quantitative work, start by exploring the Wikimedia API to see how you can pull small amounts of data before committing to a full database dump.
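As a starting point, here is a minimal sketch of pulling the most recent revisions of one article through the MediaWiki Action API on English Wikipedia. The function names are hypothetical, and the User-Agent string is a placeholder you would replace with your own project name and contact address.

```python
import json
from urllib.parse import urlencode
from urllib.request import Request, urlopen

API_URL = "https://en.wikipedia.org/w/api.php"


def build_revisions_query(title, limit=20):
    """Build a URL asking for the latest `limit` revisions of one page."""
    params = {
        "action": "query",
        "prop": "revisions",
        "titles": title,
        "rvprop": "ids|timestamp|user|comment|size",
        "rvlimit": limit,
        "format": "json",
        "formatversion": 2,
    }
    return API_URL + "?" + urlencode(params)


def fetch_revisions(title, limit=20,
                    user_agent="ExampleResearchScript/0.1 (researcher@example.org)"):
    """Fetch revision metadata; requires network access."""
    request = Request(build_revisions_query(title, limit),
                      headers={"User-Agent": user_agent})
    with urlopen(request, timeout=30) as response:
        payload = json.load(response)
    page = payload["query"]["pages"][0]  # formatversion=2 returns pages as a list
    return page.get("revisions", [])


print(build_revisions_query("Climate change", limit=5))
```

Calling `fetch_revisions("Climate change", limit=5)` should then return a list of dictionaries, one per revision. Starting with a small `limit` on a handful of pages is a gentle way to learn the shape of the data before scaling up.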

For those going the qualitative route, spend a month just "lurking." Read the talk pages without posting. Understand the jargon (like "WP:NPOV" for neutral point of view) and the social hierarchy. Once you understand the culture, your analysis will be far more accurate.

Regardless of your choice, remember that Wikipedia is a living organism. A methodology that worked in 2015 might not work today because the site's editing tools and community guidelines have evolved. Stay flexible and always be ready to pivot your approach based on what the data is actually telling you.