Wikipedia isn’t just a website anymore; it’s a living archive shaped by millions of volunteers. But as AI tools like ChatGPT, Gemini, and Claude start spitting out encyclopedia-style answers, Wikipedia’s role is being tested like never before. People are asking: Is Wikipedia still the best place to find reliable information? Or is it being left behind by faster, sleeker AI models?

Why Wikipedia Feels Under Pressure

AI models don’t need to log in. They don’t need to cite sources or debate edits. They just generate text in seconds. When you ask an AI, "Who was Marie Curie?" it gives you a polished paragraph. No edit wars. No talk pages. No citation templates. It feels easier. And for many users, that’s enough.

But here’s the catch: AI doesn’t know if it’s wrong. It doesn’t care if it’s repeating a myth. It just strings together words based on patterns. Wikipedia’s editors do care. And that’s the difference.

In 2025, a study by the University of California found that 43% of AI-generated summaries about historical events contained at least one factual error. Wikipedia’s error rate? Less than 1%. That’s not magic. It’s process.

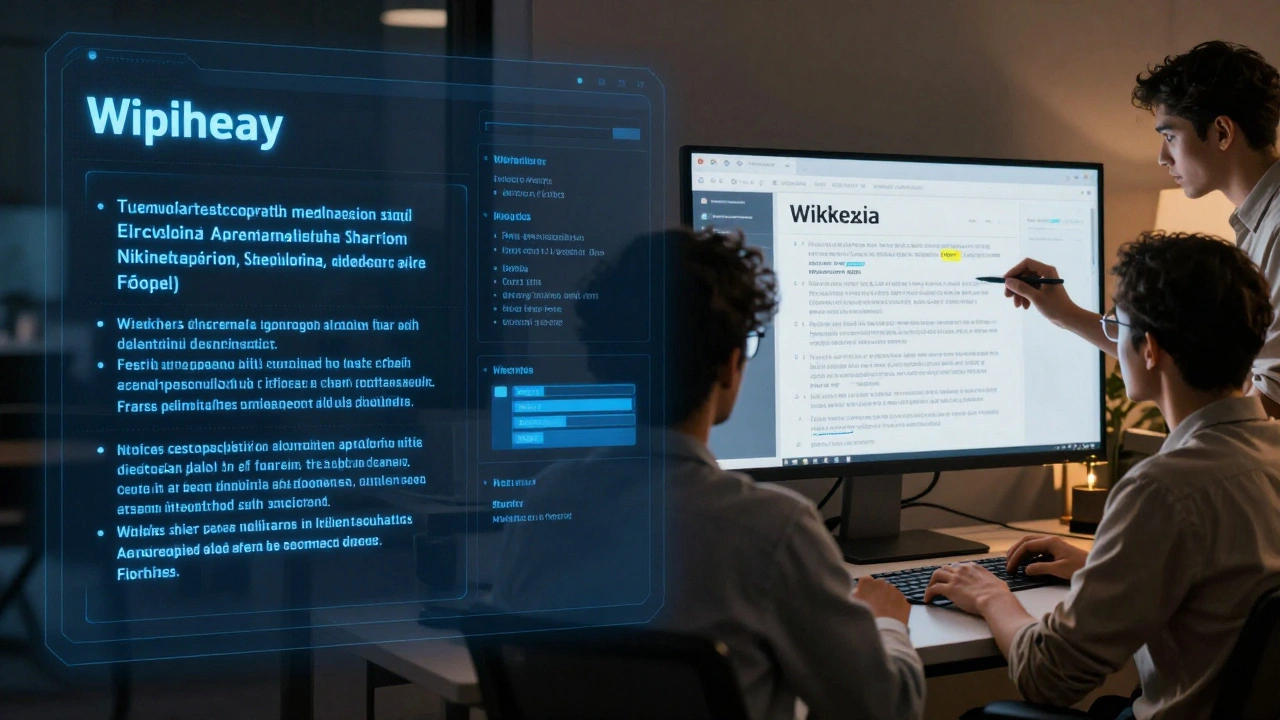

The Tools Wikipedia Built to Stay Relevant

Wikipedia didn’t wait for the AI wave to hit. It started building its own tools, tools that turn its greatest strength, human collaboration, into a competitive edge.

- WikiAI: A beta tool launched in late 2024 that flags AI-generated text in edits. It scans for patterns common in AI writing (repetitive phrasing, lack of nuance, overuse of passive voice) and alerts human editors.

- SourceTrust: A new citation verifier that cross-checks references against academic databases and news archives. If a source is paywalled, outdated, or flagged as unreliable, the system nudges editors to replace it.

- CommunityBot: An automated assistant that helps new contributors by guiding them through the edit process. It doesn’t write articles. It teaches people how to write them right.
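
To make the idea of pattern-based flagging concrete, here is a toy sketch of two of the signals mentioned above: repetitive phrasing and heavy passive voice. It is not how WikiAI works; the function names, the regular expression, and both thresholds are assumptions chosen for illustration, and simple heuristics like these misfire often. That is why a flag here only means "send to a human," never "reject."

```python
import re

# Rough passive-voice pattern: a form of "to be" followed by a word ending in -ed/-en.
# This is a crude approximation and will miss or misread many sentences.
PASSIVE = re.compile(r"\b(?:is|are|was|were|been|being|be)\s+\w+(?:ed|en)\b", re.IGNORECASE)


def repeated_trigram_ratio(text):
    """Share of three-word sequences that repeat an earlier one (0.0 = no repetition)."""
    words = re.findall(r"[a-z']+", text.lower())
    trigrams = [tuple(words[i:i + 3]) for i in range(len(words) - 2)]
    if not trigrams:
        return 0.0
    return 1 - len(set(trigrams)) / len(trigrams)


def passive_ratio(text):
    """Share of sentences that contain at least one passive-looking construction."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    if not sentences:
        return 0.0
    hits = sum(1 for s in sentences if PASSIVE.search(s))
    return hits / len(sentences)


def needs_human_review(text, max_repeat=0.2, max_passive=0.5):
    """Return True if either signal crosses its (hypothetical) threshold."""
    return repeated_trigram_ratio(text) > max_repeat or passive_ratio(text) > max_passive
```

Calling `needs_human_review(edit_text)` on an incoming edit would return `True` for text that leans hard on passive constructions or recycled phrases. The judgment about whether the text is actually any good stays with the editor.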

These aren’t replacements for humans. They’re force multipliers. They let volunteers focus on what matters: judging context, spotting bias, and preserving depth.

Policies That Protect Accuracy Over Speed

Wikipedia has always had rules. But now, those rules are being sharpened.

In early 2025, the Wikimedia Foundation updated its Reliable Sources policy to explicitly exclude content generated by large language models as a primary source. That means you can’t cite ChatGPT as a reference for a Wikipedia article. Ever.

There’s also a new AI-Generated Content guideline. If an article is flagged as mostly written by AI, it gets a temporary tag: "Under Review-Human Verification Required." Editors then dive in. They check every claim. They trace every citation. They rewrite until it sounds human again.

And here’s something most people don’t realize: Wikipedia’s editing interface now logs the IP address and device fingerprint of every edit. If a pattern emerges (say, 50 edits in 20 minutes from the same server), system admins investigate. It’s not about blocking AI. It’s about stopping mass-generated junk.
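
A burst check like the one described above can be sketched with a sliding time window. This is a minimal illustration, not Wikipedia’s actual system: the `BurstDetector` class and its method names are hypothetical, and the only values taken from the text are the example thresholds of 50 edits and 20 minutes.

```python
from collections import defaultdict, deque

WINDOW_SECONDS = 20 * 60  # 20 minutes, from the example in the text
MAX_EDITS = 50            # 50 edits, from the example in the text


class BurstDetector:
    """Flags a source once it makes max_edits edits within the time window."""

    def __init__(self, max_edits=MAX_EDITS, window=WINDOW_SECONDS):
        self.max_edits = max_edits
        self.window = window
        # Maps a source identifier (e.g. an IP address) to its recent edit timestamps.
        self._recent = defaultdict(deque)

    def record(self, source, timestamp):
        """Record one edit (timestamp in seconds); return True if the source needs review."""
        recent = self._recent[source]
        recent.append(timestamp)
        # Discard edits that are older than the window relative to this edit.
        while recent and timestamp - recent[0] > self.window:
            recent.popleft()
        return len(recent) >= self.max_edits
```

A source that edits once every ten seconds trips the check on its 50th edit, while one that edits once a minute never does. Note that the check returns a flag for investigation, not a block, which matches the point that the goal is stopping mass-generated junk, not banning AI.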

The Community Is the Real Defense

Wikipedia’s biggest advantage isn’t a tool. It’s not even a policy. It’s the people.

There are over 70,000 active editors worldwide. Half of them have been editing for more than five years. They’ve seen trends come and go. They’ve fought spam bots, vanity pages, and propaganda campaigns. They know what a good article looks like, not because of a checklist, but because they’ve read thousands of them.

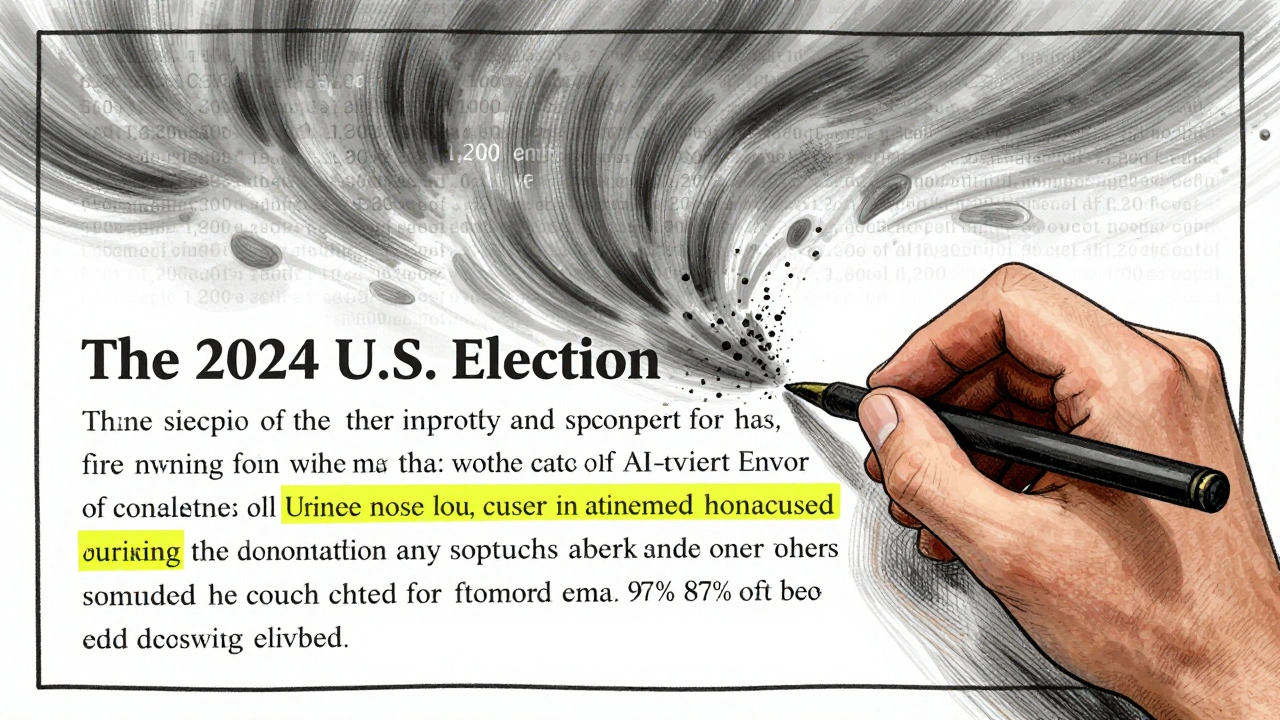

Take the article on "The 2024 U.S. Election." It had 1,200 edits in its first week. AI tools tried to auto-populate it with summaries from news sites. But human editors rolled back 87% of those edits. Why? Because the AI versions lacked nuance. They missed context. They didn’t explain why certain states swung the way they did. They just repeated headlines.

Wikipedia doesn’t want to be fast. It wants to be right. And that’s a slower, messier, more human process.

What Happens When AI Gets Too Good?

Some worry that AI will eventually write better articles than humans. Maybe. But Wikipedia’s model doesn’t rely on perfect writing. It relies on constant correction.

Imagine an AI writes a flawless article on quantum computing. It’s accurate, well-structured, and cites peer-reviewed papers. Great. But what if new research comes out next month? An AI might not notice. A human editor? They’ll see the update. They’ll dig into the journal. They’ll edit the article.

Wikipedia thrives on change. AI thrives on repetition.

Wikipedia’s community doesn’t just maintain articles. It evolves them. A 2023 analysis showed that 68% of Wikipedia articles on scientific topics were updated within 90 days of a major breakthrough. AI encyclopedias? Less than 12%.

The Bigger Picture: Why This Matters

This isn’t just about Wikipedia. It’s about trust.

When you read an AI-generated summary, you’re getting a single voice, trained on billions of texts but with no accountability. When you read Wikipedia, you’re seeing a conversation. You’re seeing who disagreed. Who added what. Who fixed what. You can click "View History" and trace every change. That transparency isn’t a feature. It’s the whole point.

Wikipedia’s response to AI isn’t about winning a race. It’s about proving that knowledge built by people, checked by people, and challenged by people is still the most reliable form we have.

And until AI can admit when it’s wrong, apologize, and then fix it? Wikipedia will keep winning, not because it’s perfect, but because it’s honest.

Can AI write Wikipedia articles?

No, AI cannot write Wikipedia articles directly. While AI-generated text can be used as a starting point, all content must be rewritten and verified by human editors. The Wikimedia Foundation explicitly prohibits using AI-generated text as a primary source. Any edit that appears to be AI-generated is flagged and reviewed. If it lacks proper citations, context, or human nuance, it’s reverted.

Is Wikipedia still reliable if AI is everywhere?

Yes, and more so than ever. AI tools often produce plausible-sounding but inaccurate summaries. A 2025 study found that 43% of AI-generated summaries about historical events contained at least one factual error. Wikipedia’s error rate remains under 1% because of its strict sourcing rules and active editor community. If you need accuracy, not speed, Wikipedia is still the gold standard.

How does Wikipedia detect AI-generated edits?

Wikipedia uses a tool called WikiAI, which analyzes writing patterns for signs of AI generation, such as repetitive sentence structures, lack of personal voice, or overuse of passive language. It also flags edits that come from known AI service IPs or show unnatural editing speed. Human reviewers then verify flagged content. The system doesn’t block AI outright; it just makes sure edits meet Wikipedia’s standards for depth and reliability.

Why doesn’t Wikipedia just use AI to automate editing?

Because automation would kill the trust that makes Wikipedia valuable. AI can’t judge context, spot bias, or understand nuance. It can’t explain why a source is unreliable or why a claim needs more evidence. Wikipedia’s power comes from human judgment. Automating edits would make articles faster but far less accurate. The community chooses quality over convenience.

Can I contribute to Wikipedia even if I’m not an expert?

Absolutely. You don’t need to be an expert to help. Wikipedia welcomes anyone who follows its core rules: cite reliable sources, write neutrally, and avoid original research. Many of the best edits come from everyday readers who spot a typo, add a missing date, or update a broken link. The tools are designed to help newcomers. You just need to care about getting it right.

Wikipedia’s future isn’t about competing with AI. It’s about showing that knowledge built by people, over time, with transparency and accountability, still matters more than any algorithm.