Wikipedia doesn’t have cops. No lawyers. No courts. Yet every year, hundreds of editors get locked out of editing entire topics, sometimes for years. How? Through something called topic-area arbitration. It’s not a court, but it acts like one. And unlike most online communities, Wikipedia’s system actually works. Not perfectly. Not always fairly. But often enough to keep major topics from collapsing under endless edit wars.

What Is Topic-Area Arbitration?

Wikipedia’s arbitration system is the last step in resolving serious conflicts. When editors can’t agree on how to handle a topic (say, how articles on climate change, gender identity, or political figures should be written), they’re expected to try other dispute resolution first, such as talk-page discussion, a request for comment, or mediation. If that fails, someone can request arbitration, and the Arbitration Committee (ArbCom) steps in. But not every dispute gets a full committee review. Many are handled through topic-area arbitration, a streamlined version focused on one subject area.

Think of it like a zoning board for content. If a group of editors keeps reverting each other’s edits on articles about U.S. elections, or constantly rewrites sections on transgender healthcare, ArbCom can designate that whole area a "contentious topic," the label that replaced the older "discretionary sanctions" system. It can then authorize remedies: restrictions on who can edit, mandatory summaries before changes, or even temporary bans that keep specific users off those pages.

Common Remedies and How They’re Applied

Arbitration doesn’t punish. It manages. The goal is to keep editing alive while reducing hostility. Here are the most common remedies:

- Topic bans: A user can’t edit any article in a defined area, for example all articles about Israel-Palestine relations. It isn’t a site-wide ban: they can still fix typos in articles about cats or upload photos to Commons.

- Conditional editing: Editors must submit a summary of their changes before saving. This forces them to explain why they’re making a change, which often stops edit wars before they start.

- Co-editing requirements: Certain edits, especially on controversial topics, require at least two editors to agree before they go live. It’s like a built-in review system.

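- Page protection levels: Articles may be semi-protected or fully protected. On a semi-protected article, only autoconfirmed users can edit. On English Wikipedia, that means accounts at least four days old with at least ten edits. On a fully protected article, only administrators can edit. Protection is temporary, usually lasting six months to two years.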

- Neutral language mandates: ArbCom may require that all edits to a topic follow a specific tone, such as avoiding loaded terms like "radical" or "extremist." This isn’t censorship; it’s standardization.
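Of the remedies above, page protection is the easiest to picture as code. It depends only on an account’s standing, not on what an edit says. The sketch below is a simplified, hypothetical model. The `Account` class, the `can_edit` function and the level names "none", "semi" and "full" are invented for illustration and are not MediaWiki’s code. It assumes English Wikipedia’s default autoconfirmed thresholds of four days and ten edits, and it covers only the two protection levels named in the list.

```python
from dataclasses import dataclass

# Assumed English Wikipedia defaults for the autoconfirmed right;
# other language editions may configure different thresholds.
AUTOCONFIRMED_MIN_DAYS = 4
AUTOCONFIRMED_MIN_EDITS = 10


@dataclass
class Account:
    """Hypothetical, simplified view of an editor's account."""
    age_days: int
    edit_count: int
    is_admin: bool = False

    def is_autoconfirmed(self) -> bool:
        # Autoconfirmed status applies once both thresholds are met.
        return (self.age_days >= AUTOCONFIRMED_MIN_DAYS
                and self.edit_count >= AUTOCONFIRMED_MIN_EDITS)


def can_edit(account: Account, protection: str) -> bool:
    """Return True if `account` may edit a page at the given protection level.

    `protection` is "none", "semi", or "full" (labels invented for this sketch).
    """
    if protection == "none":
        return True
    if protection == "semi":
        # Administrators always pass; everyone else must be autoconfirmed.
        return account.is_admin or account.is_autoconfirmed()
    if protection == "full":
        # Only administrators may edit fully protected pages.
        return account.is_admin
    raise ValueError(f"unknown protection level: {protection!r}")


if __name__ == "__main__":
    newcomer = Account(age_days=2, edit_count=50)
    regular = Account(age_days=400, edit_count=3000)
    admin = Account(age_days=2000, edit_count=20000, is_admin=True)
    for level in ("none", "semi", "full"):
        # One row per level: newcomer, regular editor, administrator.
        print(level, can_edit(newcomer, level), can_edit(regular, level), can_edit(admin, level))
```

Running it prints one row per level. The two-day-old account is stopped by semi-protection despite its 50 edits, while only the administrator can edit a fully protected page.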

These aren’t random decisions. They’re based on years of data. Between 2018 and 2025, ArbCom issued 147 topic-area remedies. Of those, 89% targeted areas with repeated edit wars lasting over 18 months. The most common triggers? Biased sourcing, repeated reverts, and personal attacks in edit summaries.

How Enforcement Actually Works

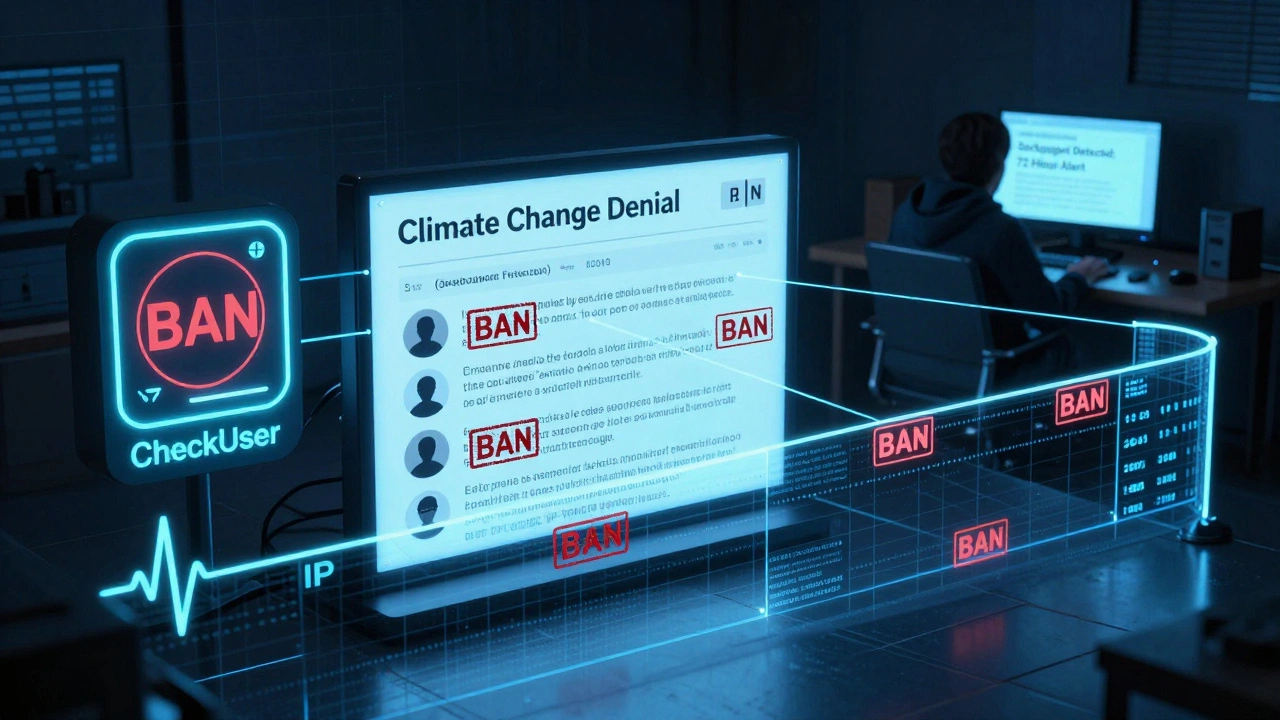

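Here’s the part most people don’t get: enforcement is mostly human, not automated. MediaWiki, the software behind Wikipedia, has no built-in notion of a topic, so it can’t tell whether an edit falls inside a banned area. Instead, other editors spot violations and report them, usually at the Arbitration enforcement noticeboard, and an administrator decides whether to block the user. Software helps only at the edges. Page protection limits who can edit a given article, and partial blocks can bar a user from specific pages or namespaces.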

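But enforcement isn’t perfect. Some users work around bans by creating new accounts. That’s sockpuppetry, and it’s a serious violation. To catch it, investigators use two kinds of evidence. The first is the CheckUser tool, which reveals technical data such as IP addresses and is limited to a small group of functionaries appointed by ArbCom. The second is behavioral evidence like editing patterns and language use. In 2024, 32% of topic-ban violations involved sockpuppets. Most were caught within 72 hours.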

Administrators don’t enforce bans alone, and they don’t improvise: they’re guided by detailed case records. Every arbitration case includes a public summary with the exact wording of the remedy, the evidence reviewed, and the rationale. This transparency is key. If a user feels wronged, they can appeal. And appeals happen: about 15% of topic-area bans are modified or lifted after review.

What Makes These Remedies Effective?

Why do these rules work when other online communities fail? Three things:

- Clarity: The rules are written in plain language. No legalese. If you’re banned from editing articles about vaccines, you know exactly what that means.

- Consistency: ArbCom applies the same standards across topics. A ban on editing articles about religion carries the same weight as one on editing articles about sports. Bias isn’t tolerated just because it’s "popular."

- Community buy-in: Editors who follow the rules help enforce them. If someone tries to sneak in a biased edit, others revert it and report it. The system relies on peer pressure as much as software.

There’s data to back this up. A 2023 study of 42 topic-area arbitration cases found that articles under active remedies saw a 68% drop in edit wars and a 54% increase in article stability. In other words, the rules don’t just stop fights; they help articles improve.

When Remedies Fail

Not every case ends well. Sometimes, the remedy doesn’t match the problem. A user might be banned from editing articles about a political party, but the real issue is that they’re using a biased source across all topics. The ban doesn’t fix the root cause.

Other times, the community resists. In 2022, ArbCom issued a topic ban on a group of editors who repeatedly inserted unsubstantiated claims into articles about U.S. elections. But a large faction of editors argued the ban was "censorship." The backlash led to a public review, and the ban was narrowed. It’s messy. It’s human.

And sometimes, the system is too slow. One case involving disputed historical narratives took 11 months to resolve. By then, hundreds of harmful edits had been made. That’s why some editors push for faster triage, such as giving regional arbitrators more power to act quickly.

Real Impact: What Changed After Arbitration

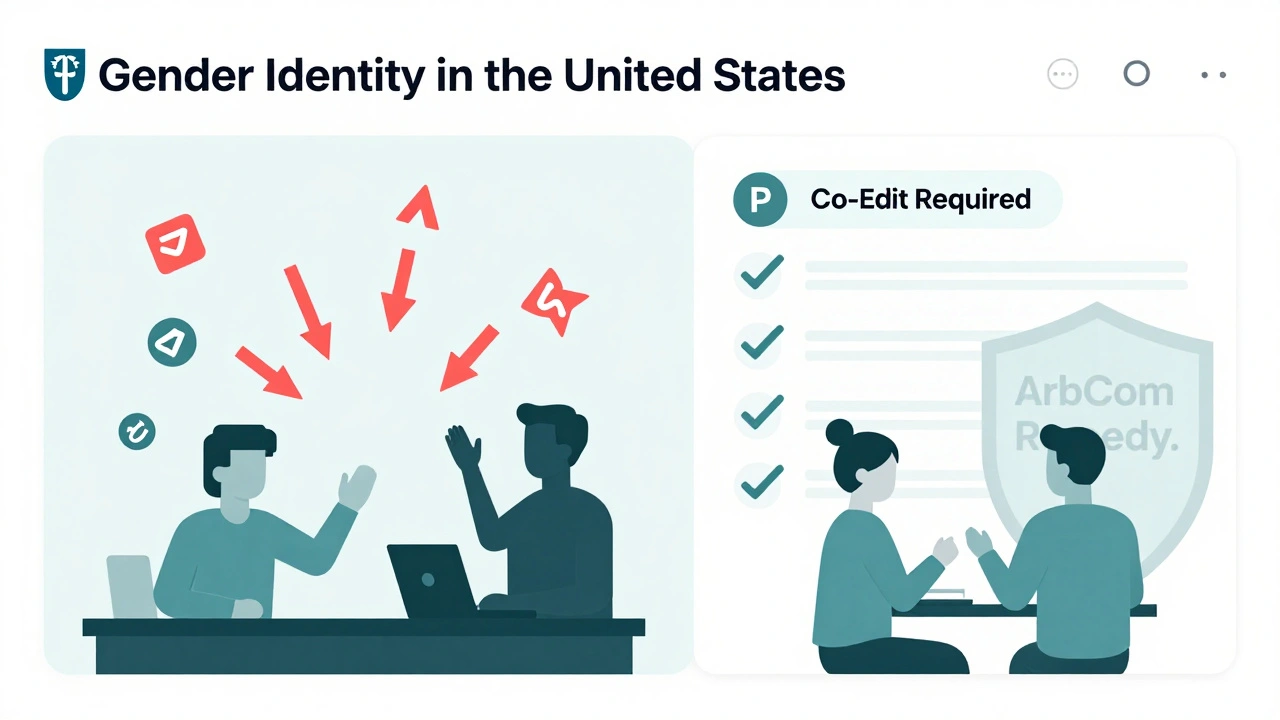

Look at the article on Gender identity in the United States. Before 2021, it was a battleground. Over 300 edits per week. Reverts every hour. After arbitration, a topic ban was placed on three users who consistently inserted fringe theories. Co-editing was required for major changes. Neutral language guidelines were added.

Two years later, edits dropped to 12 per week. The article rose from C-class, a quality rating assigned by WikiProject assessors, to Good Article (GA) status, which requires passing an independent review. The number of citations from peer-reviewed journals doubled.

Same with Climate change denial. After arbitration in 2020, the article was semi-protected and required citations from IPCC reports. The result? False claims about "global warming pausing" dropped by 92% in the following year.

These aren’t isolated cases. They’re the norm.

What’s Next for Wikipedia Arbitration?

ArbCom is experimenting with new tools. One pilot program lets editors flag articles for pre-emptive review if they see signs of conflict brewing. Another lets users request a "mediation bot" that suggests neutral phrasing before an edit is saved.

There’s also talk of expanding topic-area arbitration to non-English Wikipedias. The Spanish and Arabic versions are already testing similar systems. The goal? Make the model global, not just English-speaking.

But the core idea stays the same: Wikipedia survives because its community chooses to follow rules, even when they’re inconvenient. Arbitration doesn’t stop conflict. It gives conflict structure. And that’s what keeps the encyclopedia alive.

Can anyone request topic-area arbitration on Wikipedia?

Yes, but not casually. Any registered editor can file a request through the Arbitration Committee’s official page. However, requests must show clear evidence of persistent conflict-like repeated reverts, personal attacks, or violations of content policies over several months. Requests without documented history are usually declined.

How long do topic-area bans last?

Most topic-area bans last between six months and two years. They’re not permanent. After six months, the banned user can request a review. If the conflict has calmed and the user shows understanding of the rules, the ban may be lifted or modified. Some bans, especially for repeat offenders, can be extended or made indefinite, but this is rare.

Do topic-area arbitrations affect editing on talk pages?

Usually, yes. Under Wikipedia’s banning policy, a topic ban covers the entire topic unless the remedy says otherwise. That includes related talk pages and discussions of the topic anywhere on the site. Narrower restrictions, such as a ban from specific pages, can leave the related talk pages open, because talk pages are considered essential for conflict resolution.

What happens if a banned user creates a new account?

Creating a new account to bypass a topic ban is called sockpuppetry and is a serious violation. If detected, the new account is blocked immediately, and the original ban is often extended. Investigators pair technical data from the CheckUser tool, such as IP addresses, with behavioral clues like similar editing times and language quirks. Repeat offenders can face indefinite bans from editing altogether.
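To make "similar editing times" concrete, here is a toy comparison. It is purely hypothetical and not how CheckUser or any Wikipedia tool works. The function names and the sample data are invented for illustration. The sketch buckets each account’s edit timestamps by hour of day, then compares the two 24-hour profiles with cosine similarity. A score of 1.0 means the profiles have the same shape, and 0.0 means they share no hours.

```python
import math
from datetime import datetime


def hourly_profile(timestamps: list[datetime]) -> list[int]:
    """Count how many edits fall in each hour of the day (0-23).

    Assumes all timestamps use one consistent time zone, e.g. UTC.
    """
    counts = [0] * 24
    for ts in timestamps:
        counts[ts.hour] += 1
    return counts


def cosine_similarity(a: list[int], b: list[int]) -> float:
    """Cosine similarity of two count vectors: 1.0 = same shape, 0.0 = no shared hours."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # an account with no edits has no profile to compare
    return dot / (norm_a * norm_b)


if __name__ == "__main__":
    # Hypothetical data: a banned account and two newer accounts.
    banned = [datetime(2024, 1, day, 22, 15) for day in range(1, 11)]
    suspect = [datetime(2024, 3, day, 22, 40) for day in range(1, 6)]
    unrelated = [datetime(2024, 3, day, 9, 5) for day in range(1, 6)]

    base = hourly_profile(banned)
    print(cosine_similarity(base, hourly_profile(suspect)))    # 1.0
    print(cosine_similarity(base, hourly_profile(unrelated)))  # 0.0
```

The toy data also exposes the weakness of this kind of signal. Any two people who both edit late in the evening would score highly. A behavioral match is therefore at most a reason to look closer, and it is weighed alongside technical evidence rather than used on its own.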

Are topic-area arbitration decisions public?

Yes. Every arbitration case is documented on Wikipedia’s Arbitration Committee page. The full decision, including evidence, reasoning, and remedies, is published in plain language. Only personal information like real names or private messages is redacted. Transparency is a core part of the system’s legitimacy.