Online Encyclopedias: How Wikipedia Stays Trusted While AI Rises

Online encyclopedias are digital reference platforms that collect and organize knowledge for public access. Also known as digital reference works, they’ve evolved from static CD-ROMs to live, constantly updated systems powered by people or by algorithms. Among them, Wikipedia, a free, collaboratively edited encyclopedia run by volunteers and supported by the Wikimedia Foundation, stands out. It’s not the fastest, and it’s not the fanciest, but surveys show people still trust it more than AI-generated encyclopedias for accurate, verifiable facts. Why? Because every edit leaves a trace. Every claim is expected to cite a source. And every change can be questioned, reviewed, or reverted by someone who actually read the material.

The Wikimedia Foundation, the nonprofit that supports Wikipedia and its sister projects, doesn’t run ads or sell data to advertisers. Instead, it fights for open knowledge: pushing back against copyright takedowns that erase history, demanding transparency from AI companies that scrape Wikipedia without credit, and training editors to spot bias. Meanwhile, AI encyclopedias, automated knowledge systems that generate answers using large language models, look slick. They answer fast. But their citations? Often fake. Their sources? Sometimes made up. And their version of "consensus"? Just whatever the algorithm learned from the most popular, not the most accurate, data.

Behind the scenes, Wikipedia’s strength comes from its rules: not laws, but living practices. Reliable sources are the backbone. Due weight keeps each viewpoint represented in proportion to its prominence in reliable sources, so minority views are covered without being overstated. The watchlist helps editors catch vandalism before it spreads. And projects like Wikidata connect facts across 300+ languages so a fact updated in Spanish shows up in English, too. This isn’t magic. It’s messy, human work. Thousands of volunteers spend hours every day checking citations, fixing grammar, and arguing over wording, all because they believe knowledge should be free, accurate, and open to all.

Some online encyclopedias chase clicks. Wikipedia chases truth. That’s why you’ll find stories here about how the Signpost picks its news, how Indigenous voices are being added back into articles, and how copy editors cleared over 12,000 articles in a single volunteer drive. You’ll also see how AI is creeping in—not as a helper, but as a threat to the integrity of what we call fact. This collection doesn’t just report on Wikipedia. It shows you how it works, why it matters, and who keeps it alive when no one’s watching.

AI-Assisted Editing on Wikipedia: How Guardrails, Review, and Quality Control Keep It Reliable

AI-assisted editing on Wikipedia uses smart tools to flag vandalism, enforce neutrality, and suggest improvements, keeping the world’s largest encyclopedia accurate and reliable. Human editors still have the final say, but AI makes their work faster and more effective.

Wikipedia's Response to Paid Editing Scandals

Wikipedia responded to paid editing scandals by enforcing transparency, requiring editors to disclose paid relationships. Volunteers and automated tools now flag suspicious edits, and companies like Google and Microsoft have adopted strict internal policies. Trust in Wikipedia remains intact because of its open, community-driven enforcement.

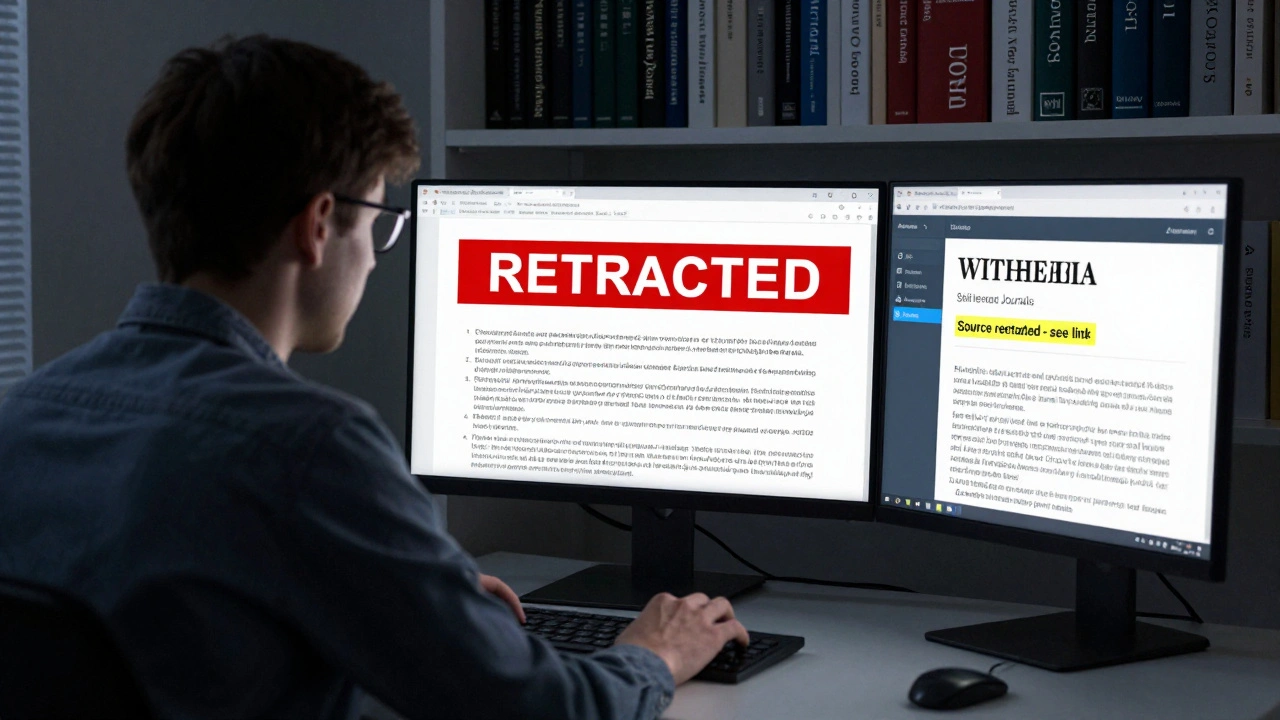

How to Handle Retractions and Corrections in Wikipedia References

Wikipedia relies on reliable sources, but when those sources are retracted or corrected, the article must change. Learn how to identify, document, and replace faulty citations to maintain trust and accuracy.

Using ORES and Machine Learning to Flag Risky Wikipedia Edits

ORES uses machine learning to detect vandalism on Wikipedia by analyzing edit patterns in real time. It helps human editors prioritize risky changes, reducing the time harmful content stays online. Trained on decades of edit history, it catches 80%+ of vandalism faster than humans alone.
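To make the "prioritize risky changes" idea concrete, here is a minimal sketch of how a review queue might be ordered once each edit has a risk score. It is not ORES's actual code or API: the `ScoredEdit` type, the `review_queue` function, the 0.5 threshold, and the sample scores are all hypothetical, chosen only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ScoredEdit:
    rev_id: int           # revision ID (made-up values below)
    damaging_prob: float  # assumed model output: probability the edit is damaging, in [0, 1]


def review_queue(edits, threshold=0.5):
    """Keep edits scored at or above the threshold, riskiest first."""
    flagged = [e for e in edits if e.damaging_prob >= threshold]
    return sorted(flagged, key=lambda e: e.damaging_prob, reverse=True)


# Hypothetical scores; a real system would get these from a trained model.
edits = [
    ScoredEdit(1001, 0.03),
    ScoredEdit(1002, 0.91),
    ScoredEdit(1003, 0.58),
    ScoredEdit(1004, 0.12),
]

queue = review_queue(edits)
print([e.rev_id for e in queue])  # [1002, 1003]
```

The model only orders the queue. A human reviewer still decides whether each flagged edit is actually vandalism.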

How Press Releases Influence Wikipedia Article Updates

Press releases don’t directly update Wikipedia, but they can trigger changes when journalists turn them into credible news stories. Wikipedia relies on independent reporting, not corporate announcements, to verify and add information.

Comparative Journalism: Wikipedia vs Traditional Encyclopedias

Wikipedia and traditional encyclopedias approach knowledge in opposite ways - one open and dynamic, the other expert-driven and static. Which one should you trust? The answer isn't simple.

The Gender Gap in Wikipedia: What Research Shows

Research shows that fewer than 20% of Wikipedia editors are women, leading to significant gaps in coverage of women's history, achievements, and perspectives. This imbalance affects what information is preserved and who gets remembered.

Wikipedia Oversight: How Suppression Requests Work and What Gets Hidden

Wikipedia oversight allows trusted editors to hide sensitive edits - like personal data or threats - from public view. It’s not censorship. It’s a safety tool that protects real people behind the screen. Only a few hundred users worldwide can use it, and only under strict rules.

How Wikipedia's Pending Changes and Autopatrol Protect Edit Quality

Wikipedia's Pending Changes and Autopatrol features protect article quality by filtering out vandalism while letting trusted editors make instant updates. Learn how these tools keep the encyclopedia accurate and up to date.

Training Modules for Students Editing Wikipedia: What to Include

Effective training modules for students editing Wikipedia must teach the Five Pillars, reliable sourcing, notability rules, and conflict navigation, not just editing tools. Real examples and structured practice turn beginners into confident contributors.

Wikimedia Foundation Challenges to Government Regulations

The Wikimedia Foundation is fighting government censorship worldwide to protect access to accurate, free knowledge. From Turkey to India, it refuses to remove factual content, even when governments demand it.

Notable Cases of Admin Abuse and How Communities Fought Back

When admins misuse power, communities don’t stay silent. From Wikipedia to Twitch, documented evidence and organized action have forced platforms to change. Here’s how real users fought back and won.