When Neutrality Fails: The Reality of High-Stakes Editing

You might think that Wikipedia is a static repository of facts, frozen in time like a printed book. It isn't. It is a living, breathing battleground where millions of users fight over what constitutes "truth." When these fights involve celebrities, politicians, or corporations, they stop being simple disagreements and become high-profile Wikipedia disputes that expose the cracks in crowd-sourced knowledge systems.

We often assume that the sheer volume of editors guarantees accuracy. But when ego, money, or political power enters the picture, the system strains. These aren't just minor tweaks to grammar; they are coordinated campaigns to reshape public perception. Understanding how these conflicts play out, and why they matter, is crucial for anyone who relies on digital information.

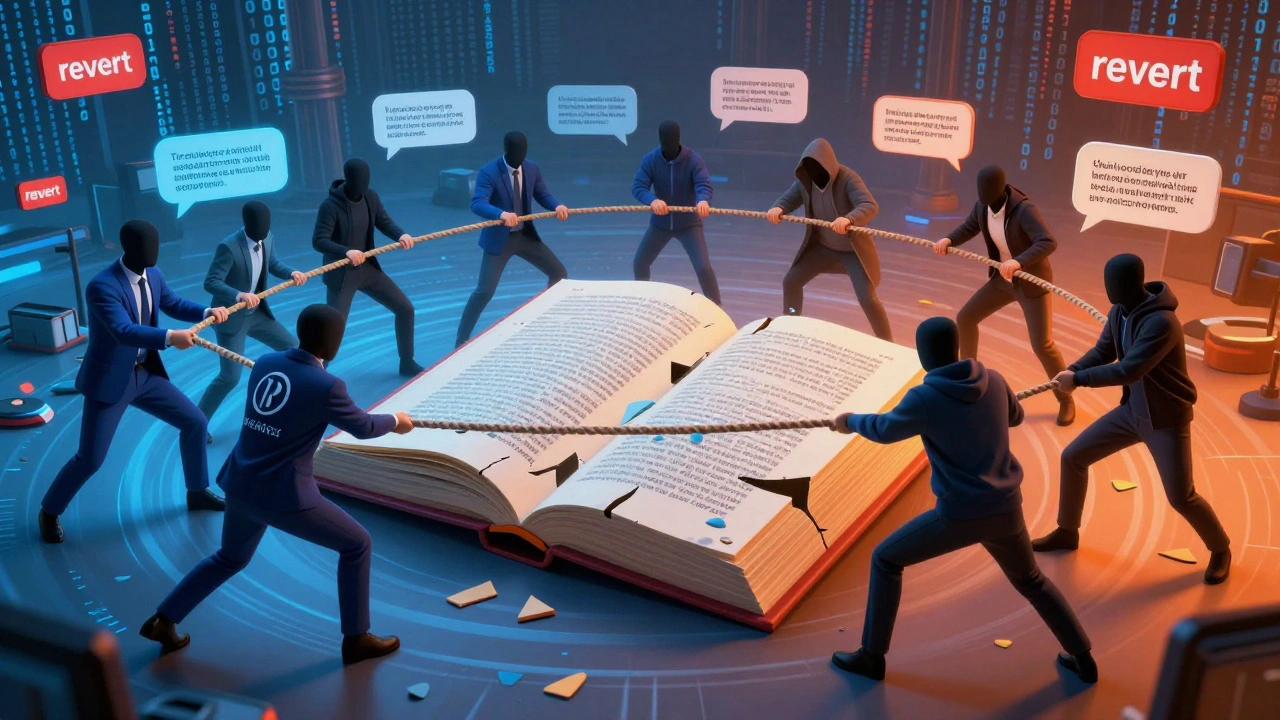

The Anatomy of an Edit War

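To understand the big cases, you first need to see the mechanics of a small one. An edit war starts when two editors disagree on content. One reverts the other's change. The other reverts it back. This cycle repeats, sometimes many times over, until an administrator steps in. Wikipedia's three-revert rule exists to cut the cycle short: it generally limits an editor to three reverts on a single page within 24 hours.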
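To make the revert cycle concrete, here is a minimal Python sketch that counts how often an article is restored to an earlier state. It works on a simplified revision history; the `Revision` class, the `count_reverts` function, the `window` parameter, and the sample data are all hypothetical illustrations, not Wikipedia's actual tooling. A revision is counted as a revert when its text hashes to the same value as an earlier version other than the one immediately before it.

```python
import hashlib
from dataclasses import dataclass


@dataclass
class Revision:
    user: str  # hypothetical editor name
    text: str  # full article text after this edit


def count_reverts(revisions, window=15):
    """Count revisions that restore the text of an earlier revision.

    Only the last `window` revisions are searched, and the immediately
    preceding revision is excluded so that a no-op save is not counted.
    """
    seen = []  # content hashes of earlier revisions, oldest first
    reverts = 0
    for rev in revisions:
        digest = hashlib.sha1(rev.text.encode("utf-8")).hexdigest()
        recent = seen[-window:]
        if digest in recent[:-1]:
            reverts += 1
        seen.append(digest)
    return reverts


# Made-up history: two editors flipping one sentence back and forth.
history = [
    Revision("EditorA", "The company was founded in 1999."),
    Revision("EditorB", "The company was founded in 2001."),
    Revision("EditorA", "The company was founded in 1999."),
    Revision("EditorB", "The company was founded in 2001."),
    Revision("EditorA", "The company was founded in 1999."),
]
print(count_reverts(history))  # prints 3
```

Exact-match hashing only catches full restorations. A partial revert that changes a few words would slip past this sketch, so treat the count as a lower bound.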

In high-profile scenarios, this dynamic scales up. Instead of two people, you have teams. A corporation hires a PR firm to "optimize" its page. A fan club mobilizes to protect their idol's reputation. A political opponent tries to insert damaging but unverified claims. The core issue remains the same: who gets to decide what stays?

- Reversion: The act of undoing another user's edit.

- Vandalism: Deliberate insertion of false or offensive content.

- Biographies of Living Persons (BLP): A strict policy requiring reliable sources for contentious statements about living individuals.

- Notability: The threshold required for a subject to deserve its own article.

These rules exist to maintain order, but they are frequently tested by those with something to lose.

Case Study 1: The Jimmy Wales Incident

No discussion of Wikipedia disputes is complete without mentioning the founder himself. In 2006, Jimmy Wales, co-founder of Wikipedia, became the center of a massive controversy. A user named Kirtap began editing Wales' biography, adding references to his involvement in various internet communities and labeling him a "troll."

This wasn't just a random attack. Kirtap had a history of ideological battles on the platform. He argued that Wales was biased and that his edits were not neutral. Wales, understandably, tried to correct the record. The situation escalated quickly, drawing international media attention. Critics pointed out the irony: the man who built the world's largest free encyclopedia couldn't control his own entry.

The lesson here? Even the architects of the system are subject to its rules. No one is above scrutiny. The incident forced the community to tighten policies around self-editing and conflict of interest, proving that transparency must apply to everyone, regardless of status.

Case Study 2: Corporate Reputation Management

Corporations have long understood the SEO value of Wikipedia. A top-ranking search result can shape investor sentiment and consumer trust. However, many companies make the mistake of trying to "manage" their pages directly.

Consider the case of several major tech firms caught hiring lobbyists to write glowing copy for their Wikipedia entries. These drafts often lacked citations, used promotional language, and ignored negative news. When discovered, these pages were flagged, and the employees involved faced disciplinary action. Some even lost their jobs.

This highlights a critical pitfall: attempting to bypass the editorial process. Wikipedia is not a marketing channel. It is a reference work. Companies that respect this distinction, providing factual, sourced information rather than spin, tend to fare better in the long run. Those that try to game the system risk severe reputational damage when their tactics are exposed.

| Tactic | Description | Risk Level |
|---|---|---|
| Ghostwriting | Hiring third parties to create positive content anonymously | High |
| Source Manipulation | Citing unreliable or biased sources to support claims | Medium |
| Aggressive Reverting | Repeatedly removing negative but verified information | Very High |
| Constructive Engagement | Providing verifiable data to improve existing articles | Low |

Case Study 3: Political Bias and Election Interference

Politics brings passion, and passion brings bias. During election cycles, Wikipedia pages for candidates often become hotbeds of activity. In recent years, we've seen instances where coordinated groups attempted to skew narratives against opponents.

One notable example involved the removal of negative headlines from a candidate's page shortly before a primary vote. The edits were traced back to a single IP address range associated with a campaign volunteer group. While the changes were quickly reverted, the incident raised questions about the vulnerability of encyclopedic content during sensitive periods.
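The account above does not say how the edits were traced, so the following is only a rough sketch of one way to check whether anonymous edits cluster in a single address range. It uses Python's standard `ipaddress` module; the function name `edits_per_range`, the `/24` prefix length, and the sample addresses (taken from ranges reserved for documentation) are assumptions made for illustration.

```python
import ipaddress
from collections import Counter


def edits_per_range(editor_ips, prefix_len=24):
    """Group IPv4 addresses by network and count the edits from each one."""
    counts = Counter()
    for ip in editor_ips:
        # strict=False lets us pass a host address and get its enclosing network.
        network = ipaddress.ip_network(f"{ip}/{prefix_len}", strict=False)
        counts[str(network)] += 1
    return counts


# Hypothetical anonymous editors of one article (documentation-only addresses).
sample_ips = ["203.0.113.5", "203.0.113.77", "203.0.113.200", "198.51.100.9"]
for network, count in edits_per_range(sample_ips).most_common():
    print(network, count)
```

A shared range is a hint, not proof. Universities, offices, and mobile carriers routinely place many unrelated people behind the same block of addresses.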

Wikipedia responded by implementing stricter monitoring tools and increasing the number of administrators active during election seasons. Yet, the challenge persists. How do you distinguish between legitimate criticism and malicious propaganda? The line is often blurry, and human judgment is fallible.

The Role of Bots and Automated Tools

Humans aren't the only players in this arena. Bots (automated scripts designed to perform repetitive tasks) are essential for maintaining Wikipedia. They fix typos, update templates, and revert obvious spam. But bots can also be weaponized.

In some disputes, bad actors deploy bots to flood talk pages with arguments, disrupt discussions, or rapidly revert edits to trigger automatic blocks. This creates chaos and overwhelms moderators. Detecting bot-driven behavior requires sophisticated algorithms and constant vigilance.

The rise of artificial intelligence adds another layer of complexity. AI-generated text can mimic human writing styles, making it harder to identify automated interference. As technology evolves, so too must the defenses protecting the integrity of the platform.

Lessons for Editors and Readers

If you're an editor, the key takeaway is patience. Never engage in an edit war. If you disagree with a change, discuss it on the talk page. Provide sources. Explain your reasoning. Emotional reactions rarely lead to productive outcomes.

For readers, the lesson is skepticism. Always check the talk page. Look at the revision history. See who has been editing and why. Wikipedia is generally reliable, but it is not infallible. High-profile topics are more likely to be contested, so extra diligence is warranted.

Here are three practical tips for navigating disputed content:

- Check Citations: Are claims backed by reputable sources? If not, treat them with caution.

- Review Revision History: Sudden bursts of activity may indicate coordinated efforts.

- Consult Talk Pages: Discussions often reveal underlying biases or unresolved conflicts.
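The second tip, spotting sudden bursts of activity, can be roughed out in a few lines of Python. This is a sketch, not an established detection method: the function name `max_edits_in_window`, the one-hour window, and the timestamps are hypothetical, and you would need to supply real edit times from an article's revision history yourself.

```python
from datetime import datetime, timedelta


def max_edits_in_window(timestamps, window=timedelta(hours=1)):
    """Return the largest number of edits that fall within any one window."""
    times = sorted(timestamps)
    best = 0
    start = 0
    for end, current in enumerate(times):
        # Slide the left edge forward until the span fits inside the window.
        while current - times[start] > window:
            start += 1
        best = max(best, end - start + 1)
    return best


# Made-up history: one edit a day, then six edits within 25 minutes.
base = datetime(2024, 1, 1, 12, 0)
quiet = [base + timedelta(days=i) for i in range(5)]
burst = [base + timedelta(days=10, minutes=5 * i) for i in range(6)]
print(max_edits_in_window(quiet + burst))  # prints 6
```

A high count is only a prompt to look closer. Breaking news about the article's subject produces the same pattern as a coordinated campaign, so the talk page and edit summaries still need to be read.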

The Future of Content Moderation

As Wikipedia grows, so does the scale of its challenges. With millions of articles and hundreds of new edits every minute, purely manual oversight is impossible. The community relies on a combination of software tools, experienced volunteers, and clear policies to keep things running smoothly.

However, the pressure is mounting. Misinformation spreads faster than ever, and bad actors are becoming more sophisticated. Can a volunteer-driven model withstand these pressures? Many experts believe it can, provided the community remains engaged and adaptive.

New initiatives focus on improving source quality, enhancing detection of conflict-of-interest editing, and fostering a more inclusive environment for diverse perspectives. These efforts aim to strengthen the foundation of Wikipedia, ensuring it remains a trusted resource for generations to come.

Why Transparency Matters More Than Ever

At the heart of every dispute is a question of trust. Trust in the process, trust in the participants, and trust in the outcome. When transparency is compromised, trust erodes. That's why Wikipedia emphasizes open dialogue and documented decision-making.

Every policy change, every block, every deletion is recorded and accessible. This openness allows the community to hold each other accountable. It also enables outside observers to audit the system, identifying weaknesses and suggesting improvements.

In a world where information is increasingly curated by opaque algorithms, Wikipedia's commitment to transparency stands out. It reminds us that knowledge should be collaborative, not controlled. And while the journey is fraught with conflict, the destination (a freely accessible, comprehensive encyclopedia) is worth the effort.

What happens if I get into an edit war on Wikipedia?

If you participate in an edit war, your account may be temporarily blocked to prevent further disruption. Repeated offenses can lead to longer bans or permanent restrictions. The best approach is to stop reverting immediately and move the discussion to the article's talk page.

Can companies legally edit their own Wikipedia pages?

While not illegal, companies are strongly discouraged from editing their own pages due to conflict-of-interest policies. Doing so risks having contributions removed and damaging corporate credibility. Instead, companies should propose factual, sourced changes on the article's talk page and let uninvolved editors decide whether to make them. Anyone paid to edit is required by Wikimedia's Terms of Use to disclose that relationship.

How does Wikipedia handle vandalism?

Vandalism is typically detected by automated bots and reverted within minutes. Persistent vandals are blocked from editing. For more subtle forms of misinformation, human reviewers examine revisions and enforce content policies based on reliable sourcing.

Is Wikipedia biased towards certain viewpoints?

Wikipedia strives for neutrality, but bias can creep in due to uneven representation among editors. Controversial topics often reflect the dominant perspective of the contributing community. Ongoing efforts aim to diversify the editor base and mitigate systemic biases.

What is the "Talk Page" and why is it important?

The Talk Page is a forum attached to each article where editors discuss proposed changes. It serves as a record of consensus-building and helps resolve disputes amicably. Checking the Talk Page provides insight into ongoing debates and potential controversies surrounding an article.